📝 Paper Summary

Humanoid Robot Control

Physics-based Motion Imitation

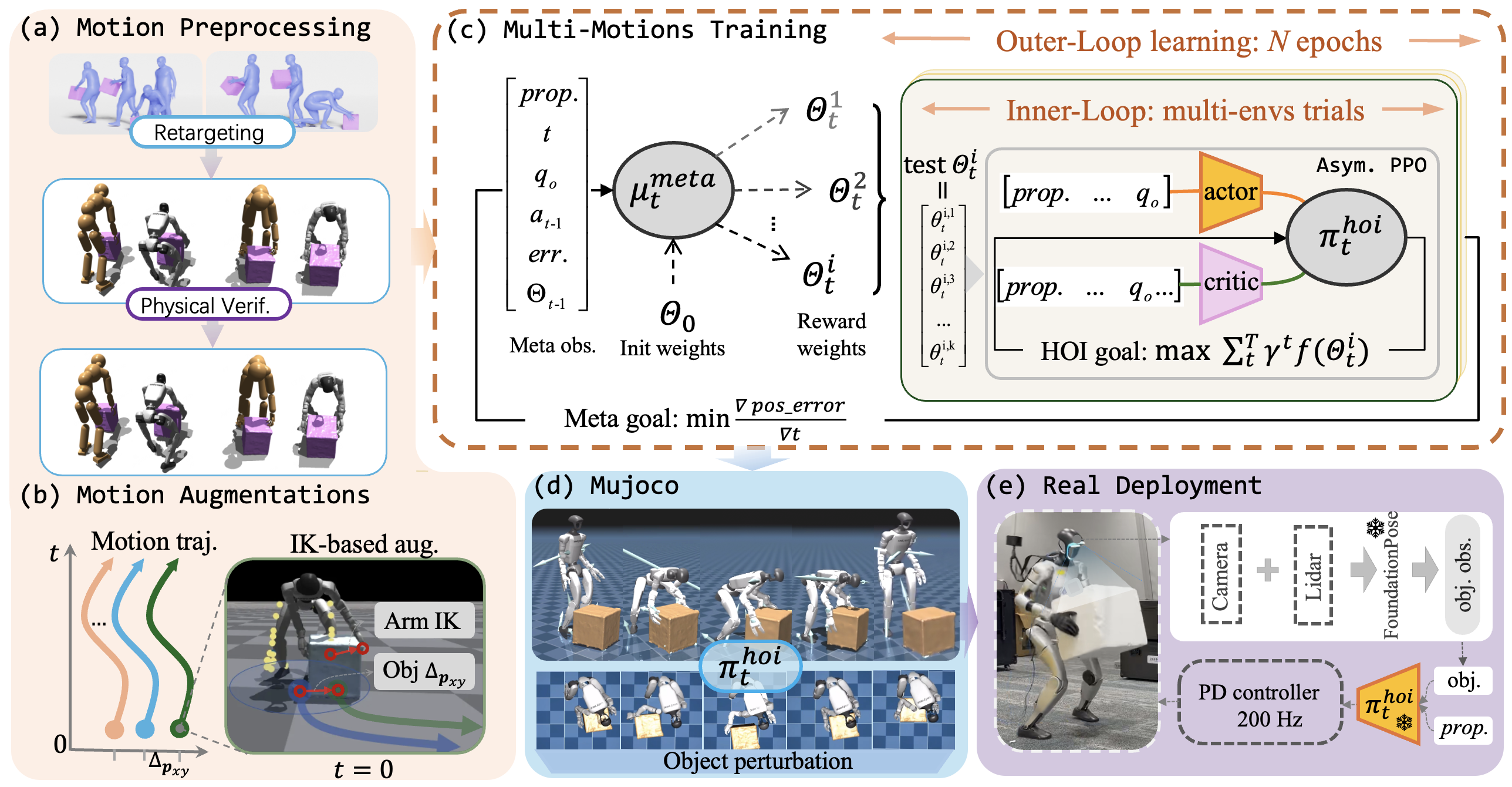

InterReal enables humanoid robots to learn complex interactive skills such as box-picking by combining motion augmentation, which handles object disturbances, with an automatic meta-learner that dynamically tunes reward weights.

Core Problem

Existing humanoid controllers excel at locomotion (walking/dancing) but fail at precise Human-Object Interaction (HOI) because they lack fine-grained contact modeling and robustness to real-world sensor noise.

Why it matters:

- Current humanoid robots are limited to non-interactive tasks or rely on teleoperation, restricting their autonomy in industrial applications.

- Manually tuning rewards for complex interaction tasks is notoriously difficult, as objectives (balance vs. tracking vs. interaction) conflict and shift across motion phases.

- In the real world, small errors in the perceived object position can cause standard motion-imitation policies to collapse or miss the grasp.

Concrete Example:

When a robot attempts to pick up a box, a small error in the perceived object position (e.g., from sensor noise) causes the hands to miss the grasp, leading to failure. Standard policies trained on perfect data cannot adjust, while InterReal's augmented training handles these offsets.
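The offset handling described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the paper's implementation: it replaces the humanoid with a planar two-link arm that has a closed-form IK solution, and the names `two_link_ik`, `forward`, `augment_frame`, the link lengths, and `max_shift` are all hypothetical.

```python
import math
import random


def two_link_ik(x, y, l1=0.3, l2=0.3):
    """Analytic IK for a planar 2-link arm (toy stand-in for a humanoid arm).

    Returns (shoulder, elbow) joint angles in radians, or None if the
    target point is out of reach.
    """
    d2 = x * x + y * y
    cos_elbow = (d2 - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(cos_elbow) > 1.0:
        return None  # target unreachable for these link lengths
    q2 = math.acos(cos_elbow)
    q1 = math.atan2(y, x) - math.atan2(l2 * math.sin(q2), l1 + l2 * math.cos(q2))
    return q1, q2


def forward(q1, q2, l1=0.3, l2=0.3):
    """Forward kinematics: hand position for the given joint angles."""
    x = l1 * math.cos(q1) + l2 * math.cos(q1 + q2)
    y = l1 * math.sin(q1) + l2 * math.sin(q1 + q2)
    return x, y


def augment_frame(box_xy, hand_offset_xy, max_shift=0.05, rng=random):
    """Perturb the box position and re-solve IK so the hand keeps the same
    offset relative to the box (i.e. the contact is preserved)."""
    dx = rng.uniform(-max_shift, max_shift)
    dy = rng.uniform(-max_shift, max_shift)
    new_box = (box_xy[0] + dx, box_xy[1] + dy)
    target = (new_box[0] + hand_offset_xy[0], new_box[1] + hand_offset_xy[1])
    joints = two_link_ik(*target)
    if joints is None:
        return None  # discard augmentations the arm cannot reach
    return {"box": new_box, "joints": joints}
```

Calling `augment_frame((0.4, 0.1), (-0.05, 0.0))` repeatedly yields reference frames in which the box sits at slightly different positions while the hand stays at the same offset from it, which is the kind of variation a policy needs to see in order to tolerate perception noise.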

Key Novelty

InterReal: Physics-based HOI framework with Auto-Reward Learning

- Augments training data by artificially perturbing object positions and solving Inverse Kinematics (IK) to generate valid contact-preserving references, forcing the policy to learn robustness to spatial noise.

- Uses bi-level optimization in which a 'meta-policy' treats the main RL training as its environment and dynamically adjusts reward weights based on tracking errors (e.g., prioritizing balance early and interaction later).
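The bi-level structure in the second point can be illustrated with a toy loop. This sketch is an assumption-heavy stand-in, not InterReal's algorithm: the learned meta-policy is swapped for a fixed softmax-over-errors rule, the inner RL training is replaced by a stub that simply shrinks each error, and every name (`meta_step`, `inner_train_stub`, `combined_reward`, the error values) is hypothetical. It only shows the interface: the outer level observes tracking errors and emits reward weights, and the inner level trains under those weights.

```python
import math


def softmax(values, temperature=1.0):
    """Numerically stable softmax over a list of floats."""
    m = max(values)
    exps = [math.exp((v - m) / temperature) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def meta_step(errors, temperature=0.5):
    """Outer level (hypothetical rule): give more weight to reward terms
    whose tracking error is currently larger. A learned meta-policy would
    replace this function."""
    names = list(errors)
    weights = softmax([errors[n] for n in names], temperature)
    return dict(zip(names, weights))


def inner_train_stub(errors, weights, lr=0.5):
    """Inner level (toy stand-in for RL training): each error shrinks in
    proportion to the weight placed on its reward term."""
    return {n: e * (1.0 - lr * weights[n]) for n, e in errors.items()}


def combined_reward(term_rewards, weights):
    """Scalar reward seen by the inner policy: weighted sum of the terms."""
    return sum(weights[n] * term_rewards[n] for n in weights)


# Illustrative starting errors (made-up numbers, not results from the paper).
errors = {"balance": 1.0, "tracking": 0.6, "interaction": 0.3}
history = []
for outer_iter in range(5):
    weights = meta_step(errors)                 # outer level picks weights
    errors = inner_train_stub(errors, weights)  # inner level trains under them
    history.append((weights, errors))
```

In this toy, the term with the largest error (balance) receives the largest weight first, and the emphasis shifts toward other terms as that error falls, loosely mirroring the "balance early, interaction later" behavior described above.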

Architecture

Overview of the InterReal framework, including motion preprocessing, the bi-level training loop, and deployment.

Evaluation Highlights

- Achieves the highest task success rates on both the Box-Picking and Box-Pushing tasks compared with baselines such as InterMimic and PHC.

- Records the lowest tracking error on key metrics (DOF angles, object positions) in simulation, indicating more precise tracking than the compared baselines.

- Successful real-world deployment on the Unitree G1 robot using FoundationPose for object tracking, validating sim-to-real robustness.

Breakthrough Assessment

7/10

A solid advance in humanoid HOI that addresses two critical bottlenecks: reward engineering and contact robustness. Real-world validation on the G1 is a strong plus, though the core algorithms (meta-learning for rewards, IK augmentation) are evolutionary rather than revolutionary.