📝 Paper Summary

Model-Based Reinforcement Learning

Physics-Informed World Models

Unsupervised Exploration

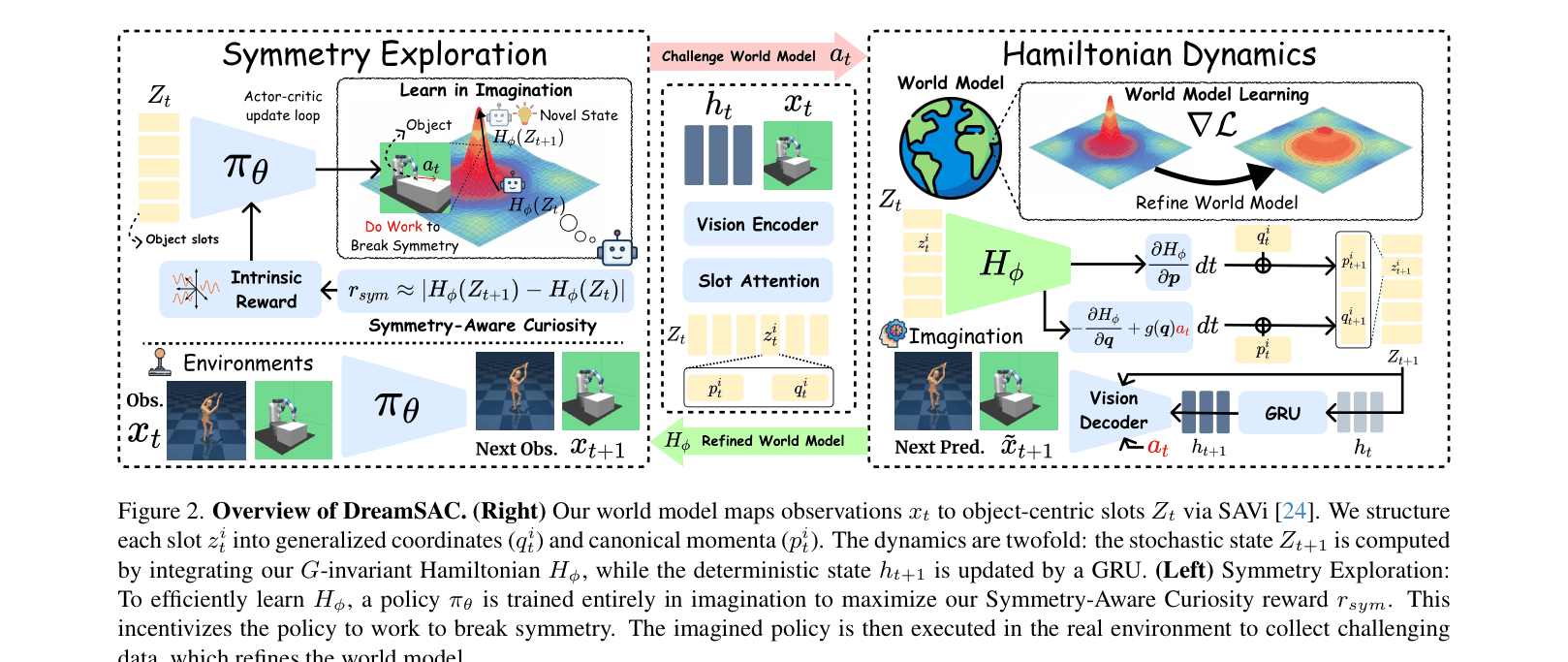

DreamSAC enables world models to extrapolate to new physical scenarios by actively exploring to discover symmetry-based conservation laws and enforcing these invariances via a Hamiltonian dynamics prior.

Core Problem

Standard world models learn statistical pixel correlations rather than underlying physical laws, causing them to fail when extrapolating to novel viewpoints or physical parameters (e.g., changed gravity or friction).

Why it matters:

- Agents in open worlds face scenarios with dynamics different from training data (e.g., handling objects with unknown mass)

- Passive learning from visual data often captures spurious correlations rather than causal physical rules like conservation of energy

- Existing physics-structured models, such as Hamiltonian Neural Networks (HNNs), struggle to learn directly from high-dimensional pixels in end-to-end RL settings

Concrete Example:

A world model trained on standard gravity might learn that 'objects fall at rate X'. If gravity changes to 1.5x, the model fails to predict the faster fall because it memorized the rate rather than the law of gravitation. DreamSAC detects the parameter shift via Hamiltonian error and adapts.
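The idea of spotting a parameter shift through a Hamiltonian error can be illustrated with a toy free-fall system. This is only a sketch under strong assumptions: the Hamiltonian here is the analytic one for a point mass, H = p²/(2m) + m·g·q, whereas DreamSAC learns its Hamiltonian from pixels. The function names (`hamiltonian`, `free_fall`, `energy_drift`) and all numeric values are hypothetical and chosen for illustration.

```python
def hamiltonian(q, p, g, m=1.0):
    """Total energy of a point mass at height q with momentum p under gravity g."""
    return p * p / (2.0 * m) + m * g * q


def free_fall(q0, g, steps, dt, m=1.0):
    """Exact free-fall trajectory from rest, returned as a list of (q, p) pairs."""
    traj = []
    for k in range(steps + 1):
        t = k * dt
        traj.append((q0 - 0.5 * g * t * t, -m * g * t))
    return traj


def energy_drift(traj, g_assumed, m=1.0):
    """Largest deviation of the assumed-model energy from its initial value."""
    h0 = hamiltonian(*traj[0], g_assumed, m)
    return max(abs(hamiltonian(q, p, g_assumed, m) - h0) for q, p in traj)


# Observations come from a world with 1.5x gravity (illustrative values).
observed = free_fall(q0=10.0, g=1.5 * 9.81, steps=100, dt=0.01)

# A model that assumes training-time gravity sees its "conserved" energy drift.
drift_train_model = energy_drift(observed, g_assumed=9.81)

# A model with the correct gravity sees energy stay constant (up to rounding).
drift_true_model = energy_drift(observed, g_assumed=1.5 * 9.81)
```

In this toy setting, a large energy drift under the assumed parameters signals that the environment's physics no longer match the model, while a model that memorized only the fall rate would have no comparable consistency check.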

Key Novelty

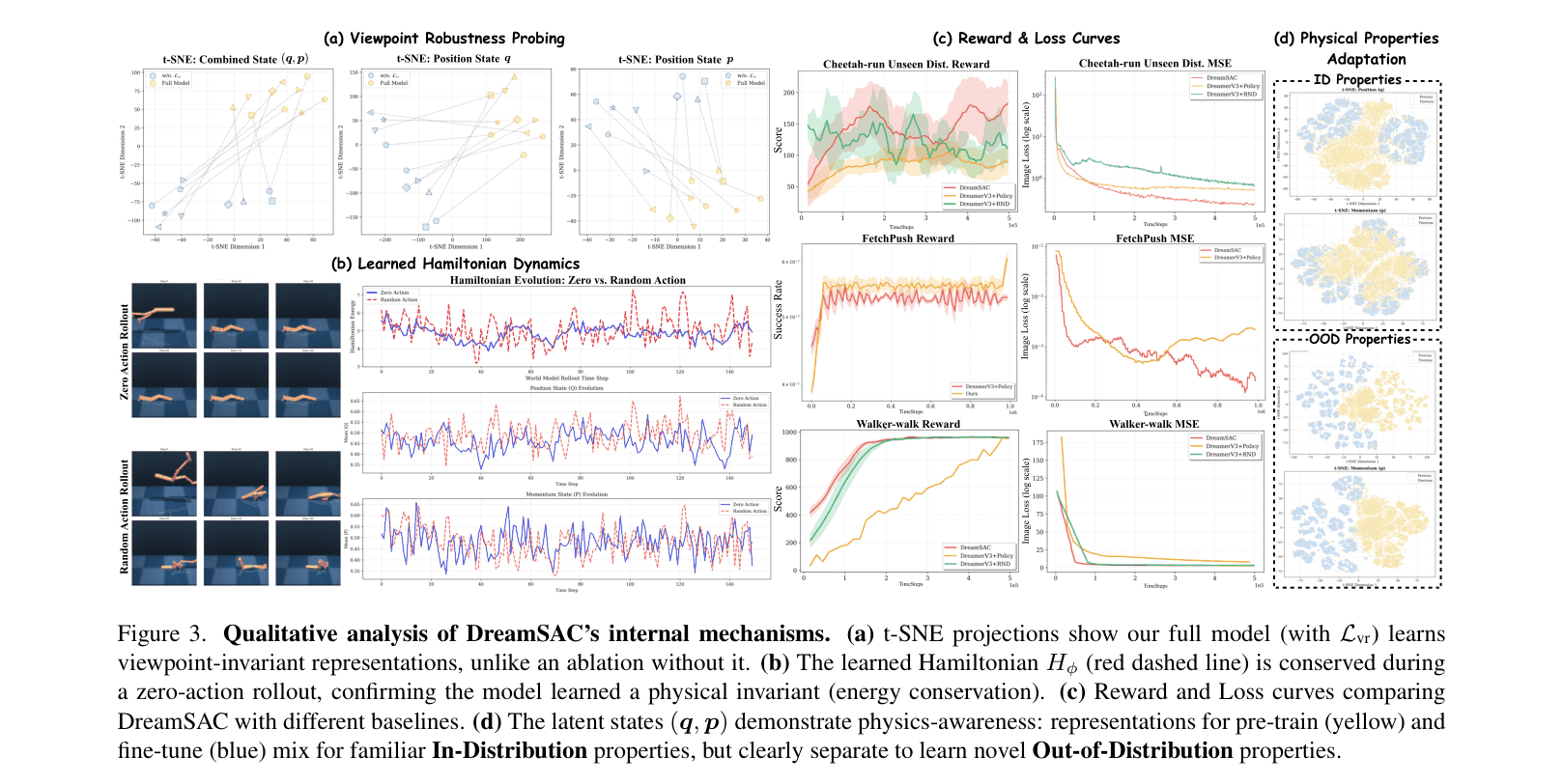

Active Symmetry Discovery via Hamiltonian Dynamics

- **Symmetry Exploration:** An intrinsic motivation strategy that rewards the agent for 'doing work' (changing the system's energy), effectively probing the environment to identify where conservation laws hold and where they break.

- **Hamiltonian World Model:** Replaces the standard recurrent dynamics with a physics-constrained Hamiltonian prior. It uses a contrastive loss to strip viewpoint details from the latent state, ensuring the learned dynamics depend only on physical invariants.
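The two components above can be sketched together on a toy pendulum. This is a minimal illustration, not the paper's implementation: DreamSAC learns its Hamiltonian over latent states, whereas this sketch uses an analytic pendulum energy, finite-difference gradients, and a leapfrog integrator. The reward form `|H(next) - H(current)|` is an assumption based on the description of rewarding the agent for changing the system's energy, and all names (`pendulum_h`, `leapfrog_step`, `work_reward`, `rollout`) are hypothetical.

```python
import math


def pendulum_h(q, p, m=1.0, l=1.0, g=9.81):
    """Analytic pendulum energy; stands in for a learned Hamiltonian."""
    return p * p / (2.0 * m * l * l) + m * g * l * (1.0 - math.cos(q))


def grad_h(h, q, p, eps=1e-5):
    """Central finite-difference estimates of (dH/dq, dH/dp)."""
    dq = (h(q + eps, p) - h(q - eps, p)) / (2.0 * eps)
    dp = (h(q, p + eps) - h(q, p - eps)) / (2.0 * eps)
    return dq, dp


def leapfrog_step(h, q, p, dt, torque=0.0):
    """One leapfrog step of dq/dt = dH/dp, dp/dt = -dH/dq + torque."""
    dq, _ = grad_h(h, q, p)
    p_half = p + 0.5 * dt * (-dq + torque)
    _, dp = grad_h(h, q, p_half)
    q_new = q + dt * dp
    dq_new, _ = grad_h(h, q_new, p_half)
    p_new = p_half + 0.5 * dt * (-dq_new + torque)
    return q_new, p_new


def work_reward(h, state, next_state):
    """Assumed intrinsic reward: magnitude of the energy change in one step."""
    return abs(h(*next_state) - h(*state))


def rollout(h, state, dt, steps, torque=0.0):
    """Roll the dynamics forward and return the list of visited states."""
    states = [state]
    for _ in range(steps):
        states.append(leapfrog_step(h, *states[-1], dt, torque))
    return states


# Without actions, energy stays nearly constant along the rollout.
free = rollout(pendulum_h, (1.0, 0.0), dt=0.01, steps=1000)
free_drift = max(abs(pendulum_h(*s) - pendulum_h(*free[0])) for s in free)

# An applied torque does work on the system, which the reward picks up.
start = (0.0, 0.0)
reward_passive = work_reward(pendulum_h, start, leapfrog_step(pendulum_h, *start, 0.01))
reward_driven = work_reward(pendulum_h, start, leapfrog_step(pendulum_h, *start, 0.01, torque=2.0))
```

The point of the sketch is the contrast: passive evolution leaves the energy essentially unchanged, so only actions that inject or remove energy earn the exploration reward. The contrastive viewpoint-invariance loss is not covered here.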

Architecture

The DreamSAC pipeline is split into Symmetry Exploration (left) and World Model learning (right).

Evaluation Highlights

- 163% higher reward than DreamerV3 on Cheetah-run with unseen gravity (1.5x), indicating robust extrapolation to changed physical parameters.

- Reduces image prediction error (MSE) by >10x compared to DreamerV3 on Acrobot (H=16), indicating stronger long-term dynamics modeling.

- Achieves ~97% success rate on FetchReach with unseen goals, outperforming standard baselines (~92%) by generalizing better to structural changes.

Breakthrough Assessment

8/10

Significantly advances physical grounding in MBRL by combining active exploration with structured Hamiltonian learning from pixels, addressing major extrapolation failure modes of standard world models.