📊 Experiments & Results

Evaluation Setup

All experiments use four MuJoCo-v5 environments (HalfCheetah, Hopper, Walker2d, Ant) under induced sensor drift.

Benchmarks:

- HalfCheetah-v5 (Locomotion)

- Hopper-v5 (Locomotion, fragile)

- Walker2d-v5 (Locomotion)

- Ant-v5 (Locomotion)

Metrics:

- Detection Rate (at various drift intensities)

- Survival Gap (time to collapse minus time to detection, in steps; a negative value means the agent collapses before the drift is detected)

- Statistical methodology: Wilson score 95% confidence intervals; R^2 for power law fits
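The two numeric definitions in the list above can be made concrete with a short sketch. It is illustrative only: the function names `wilson_interval` and `survival_gap`, their arguments, and the choice of z = 1.96 for a 95% interval are assumptions made here, not the paper's code.

```python
import math
from typing import Tuple


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion.

    z = 1.96 corresponds to an approximately 95% two-sided interval.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= successes <= trials:
        raise ValueError("successes must lie in [0, trials]")

    p_hat = successes / trials
    denom = 1.0 + z * z / trials
    center = (p_hat + z * z / (2.0 * trials)) / denom
    half_width = (
        z * math.sqrt(p_hat * (1.0 - p_hat) / trials + z * z / (4.0 * trials * trials))
    ) / denom

    # Clamp to [0, 1] to absorb floating-point overshoot at the boundaries.
    return max(0.0, center - half_width), min(1.0, center + half_width)


def survival_gap(collapse_step: int, detection_step: int) -> int:
    """Time to collapse minus time to detection (negative: collapse came first)."""
    return collapse_step - detection_step
```

Unlike the normal-approximation interval, the Wilson interval stays informative when the observed rate is exactly 0 or 1, which matters for detection rates that saturate at either extreme.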

Key Results

Sinusoidal blindness results demonstrating that periodic drift is invisible to all detectors.

| Benchmark | Metric | Baseline | This Paper | Δ |
|---|---|---|---|---|
| All environments | Detection Rate (Sinusoidal Drift) | ~100 | ~0 | -100 |

Power law fit results showing predictability of thresholds within environments but failure across environments.

| Benchmark | Metric | Baseline | This Paper | Δ |
|---|---|---|---|---|
| Within-Environment Fit | R^2 | 0.0 | 0.97 | +0.97 |
| Cross-Environment Fit | R^2 | 0.89 | 0.45 | -0.44 |

Collapse Before Awareness (CBA) analysis in Hopper.

| Benchmark | Metric | Baseline | This Paper | Δ |
|---|---|---|---|---|
| Hopper | Survival Gap (steps) | 0 | -25 | -25 |
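The R^2 values reported for the power law fits can be read against a minimal sketch of how such a fit is commonly computed. It assumes ordinary least squares in log-log space with R^2 measured on the log-transformed values; the source does not state the fitting procedure or which quantity serves as the predictor, so `fit_power_law`, `xs`, and `ys` are hypothetical names.

```python
import math
from typing import Sequence, Tuple


def fit_power_law(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float, float]:
    """Fit y = a * x**b by least squares on (log x, log y).

    Returns (a, b, r2), where r2 is computed in log space (an assumption).
    All inputs must be strictly positive.
    """
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two paired points")

    log_x = [math.log(x) for x in xs]
    log_y = [math.log(y) for y in ys]
    n = len(log_x)
    mean_x = sum(log_x) / n
    mean_y = sum(log_y) / n

    sxx = sum((u - mean_x) ** 2 for u in log_x)
    sxy = sum((u - mean_x) * (v - mean_y) for u, v in zip(log_x, log_y))
    ss_tot = sum((v - mean_y) ** 2 for v in log_y)
    if sxx == 0.0 or ss_tot == 0.0:
        raise ValueError("x and y must each take more than one distinct value")

    b = sxy / sxx                 # exponent of the power law
    log_a = mean_y - b * mean_x   # intercept in log space
    ss_res = sum((v - (log_a + b * u)) ** 2 for u, v in zip(log_x, log_y))
    r2 = 1.0 - ss_res / ss_tot
    return math.exp(log_a), b, r2
```

Under this reading, a within-environment fit uses points from one environment only, while a cross-environment fit pools points from all four; a drop in R^2 for the pooled fit indicates that a single exponent and prefactor do not transfer between environments.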

Experiment Figures

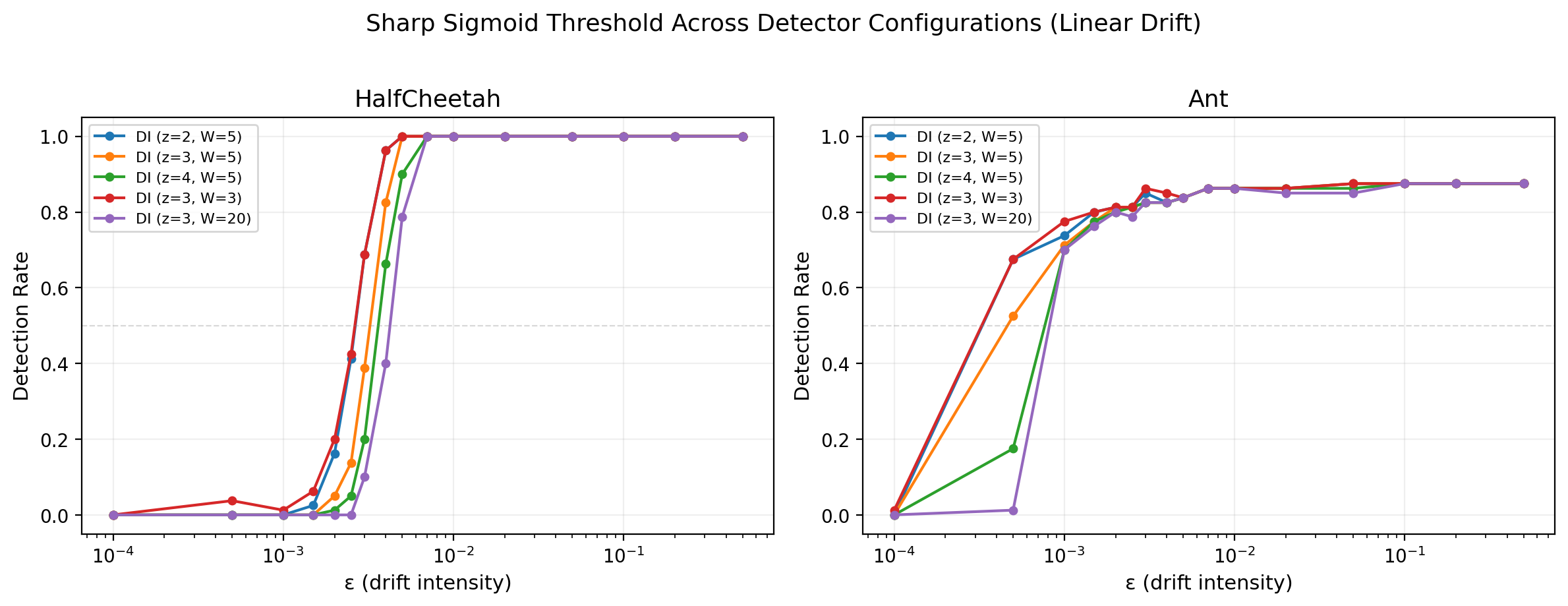

Sigmoid detection curves for linear vs. sinusoidal drift across intensities

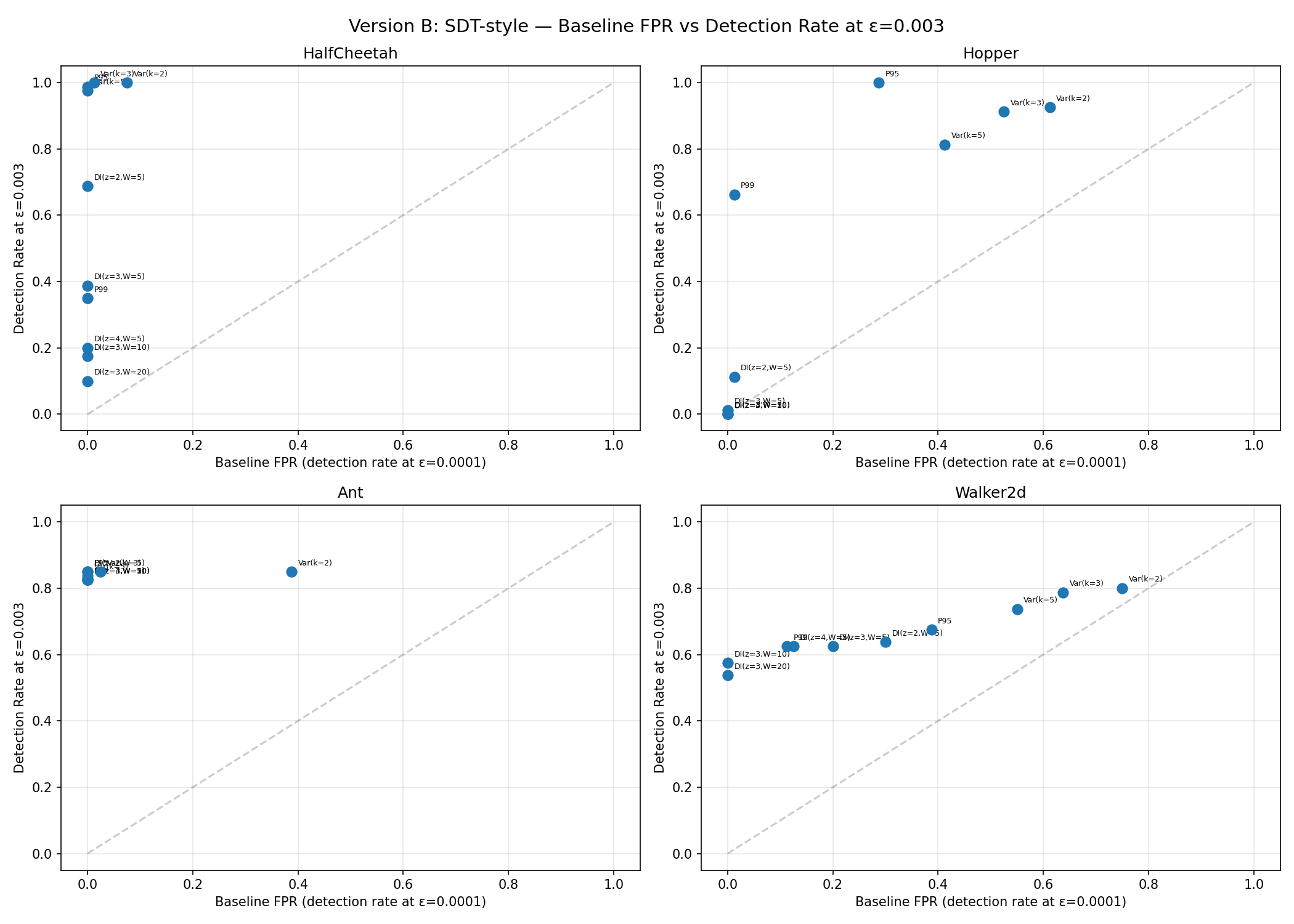

Signal Detection Theory (FPR vs Detection Rate) scatter plot for all detectors

Main Takeaways

- A sharp 'Boiling Frog' detection threshold exists universally: below it, drift is absorbed as normal noise; above it, detection is rapid.

- Sinusoidal drift is invisible because world models minimize prediction error by 'dreaming through' the oscillation, treating it as aleatoric variance.

- The detection threshold ε* is not determined by model quality (MSE) but by an interaction of detector sensitivity and environment-specific noise structure (tail heaviness).

- Increasing world model capacity (Small -> Large) reduces baseline MSE but does NOT change the detection threshold ε*, as detection is relative to the learned noise floor.