📊 Experiments & Results

Evaluation Setup

Causal ablation study on ETT benchmark forecasting tasks

Benchmarks:

- ETT (multivariate time series forecasting benchmark, treated as univariate)

- Synthetic Diagnostic Suite (Feature Taxonomy Validation) [New]

Metrics:

- CRPS (Continuous Ranked Probability Score)

- Delta CRPS (Change in CRPS after ablation)

- Statistical methodology: Not explicitly reported in the paper
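As a rough illustration of the two metrics above, the sketch below estimates CRPS from forecast samples and computes Delta CRPS as the ablated score minus the baseline score. The source does not say which CRPS estimator the paper uses; the sample-based form `E|X - y| - 0.5 * E|X - X'|` is assumed here, and the function names `crps_from_samples` and `delta_crps` are hypothetical.

```python
def crps_from_samples(samples, observed):
    """Sample-based CRPS estimate: E|X - y| - 0.5 * E|X - X'|.

    Assumption: the forecast distribution is represented by a finite
    list of samples. Lower values indicate a better forecast.
    """
    n = len(samples)
    if n == 0:
        raise ValueError("need at least one forecast sample")
    # Average distance between forecast samples and the observed value.
    mean_abs_error = sum(abs(x - observed) for x in samples) / n
    # Average distance between all pairs of forecast samples (spread term).
    mean_pairwise = sum(abs(a - b) for a in samples for b in samples) / (n * n)
    return mean_abs_error - 0.5 * mean_pairwise


def delta_crps(crps_ablated, crps_baseline):
    """Change in CRPS caused by an ablation; positive means the forecast got worse."""
    return crps_ablated - crps_baseline
```

With a single sample the spread term vanishes, so the estimate reduces to the absolute error of a point forecast. A positive Delta CRPS means the ablation degraded the forecast, and a negative value means it improved.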

Key Results

Single-feature ablation experiments demonstrate the heavy-tailed distribution of feature importance in the mid-encoder layers.

| Benchmark | Metric | Baseline | This Paper | Δ |
|---|---|---|---|---|
| ETT | Max Delta CRPS | 0 | 38.61 | +38.61 |
| ETT | Max-to-Median Impact Ratio | 1.0 | 30.5 | +29.5 |
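The max-to-median impact ratio can be sketched as follows, assuming it is defined as the largest single-feature Delta CRPS divided by the median single-feature Delta CRPS. The source does not spell out this definition, and the function name `max_to_median_ratio` is hypothetical. If the assumption holds, the reported figures would imply a median single-feature Delta CRPS of roughly 38.61 / 30.5 ≈ 1.27, a value the source does not state directly.

```python
from statistics import median


def max_to_median_ratio(delta_crps_per_feature):
    """Ratio of the largest per-feature impact to the median impact.

    Assumption: each entry is the Delta CRPS observed when one feature
    is ablated on its own. A ratio far above 1 indicates a heavy tail.
    """
    impacts = list(delta_crps_per_feature)
    if not impacts:
        raise ValueError("need at least one per-feature impact")
    med = median(impacts)
    if med <= 0:
        raise ValueError("median impact must be positive for the ratio to be meaningful")
    return max(impacts) / med
```

For example, the toy input `[0.5, 1.0, 2.0, 3.0, 30.0]` has a median of 2.0 and a maximum of 30.0, so the ratio is 15.0.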

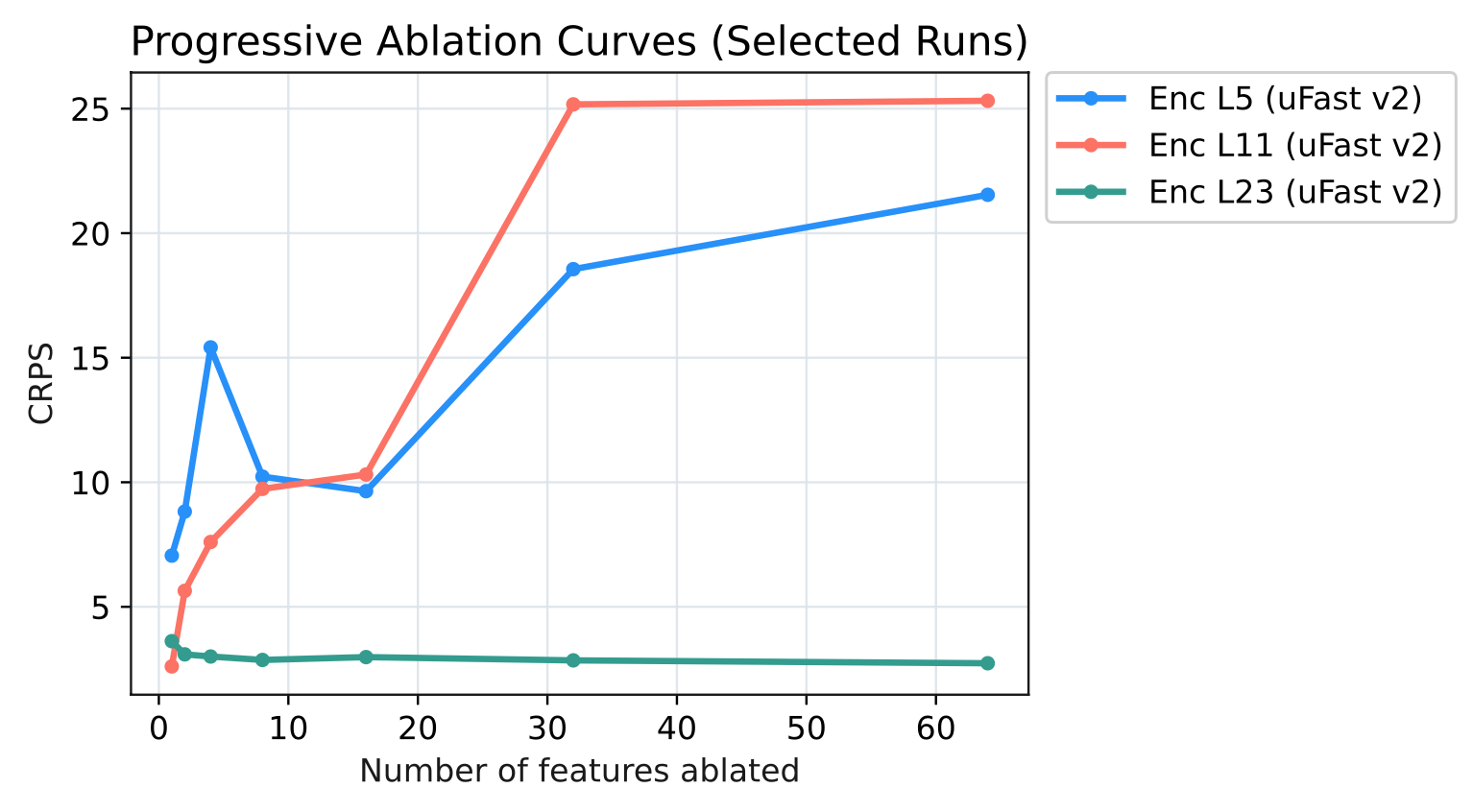

Progressive ablation reveals a paradoxical improvement in the final encoder layer, contrasting with catastrophic failure in earlier layers.

| Benchmark | Metric | Baseline | This Paper | Δ |
|---|---|---|---|---|
| ETT | CRPS | 2.61 | 25.32 | +22.71 |
| ETT | CRPS | 3.62 | 2.73 | -0.89 |

Experiment Figures

Progressive ablation curves (CRPS vs Number of Ablated Features) for Encoder Blocks 5, 11, and 23
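A progressive ablation curve of this kind could be produced along the following lines. This is a minimal sketch, not the paper's procedure: it assumes features are ablated cumulatively in a fixed ranking (for example, by single-feature Delta CRPS), and `evaluate_crps` is a hypothetical callback that runs the model with the given features ablated and returns its CRPS.

```python
def progressive_ablation_curve(ranked_features, evaluate_crps, steps):
    """Return (num_ablated, crps) points for cumulative ablation.

    ranked_features: feature ids, assumed ordered from most to least important.
    evaluate_crps:   hypothetical callback mapping a set of ablated feature
                     ids to the resulting CRPS.
    steps:           how many top-ranked features to ablate at each point.
    """
    curve = []
    for k in steps:
        ablated = set(ranked_features[:k])  # the k highest-ranked features
        curve.append((k, evaluate_crps(ablated)))
    return curve


# Toy stand-in for a real model: each ablated feature adds a fixed penalty.
toy_penalty = {"f0": 4.0, "f1": 2.0, "f2": 0.5}


def toy_evaluate(ablated):
    return 1.0 + sum(toy_penalty[f] for f in ablated)


curve = progressive_ablation_curve(["f0", "f1", "f2"], toy_evaluate, [0, 1, 2, 3])
```

In the toy setup every ablation hurts, so the curve rises monotonically. A curve that dips below its starting point, as described for the final encoder block, would correspond to ablations that lower CRPS.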

Main Takeaways

- Mid-encoder layers (Block 11) act as a 'causal bottleneck', containing the most critical features for forecasting accuracy, specifically those related to level shifts and noise.

- Final encoder layers (Block 23) contain rich semantic features (seasonality, frequency) that are less causally relevant or even redundant; removing them can improve performance on specific benchmarks like ETT.

- Causal importance follows a power law in middle layers, where a tiny fraction of features carries the vast majority of the forecasting capability.