📝 Paper Summary

Formal Verification

Abstract Interpretation

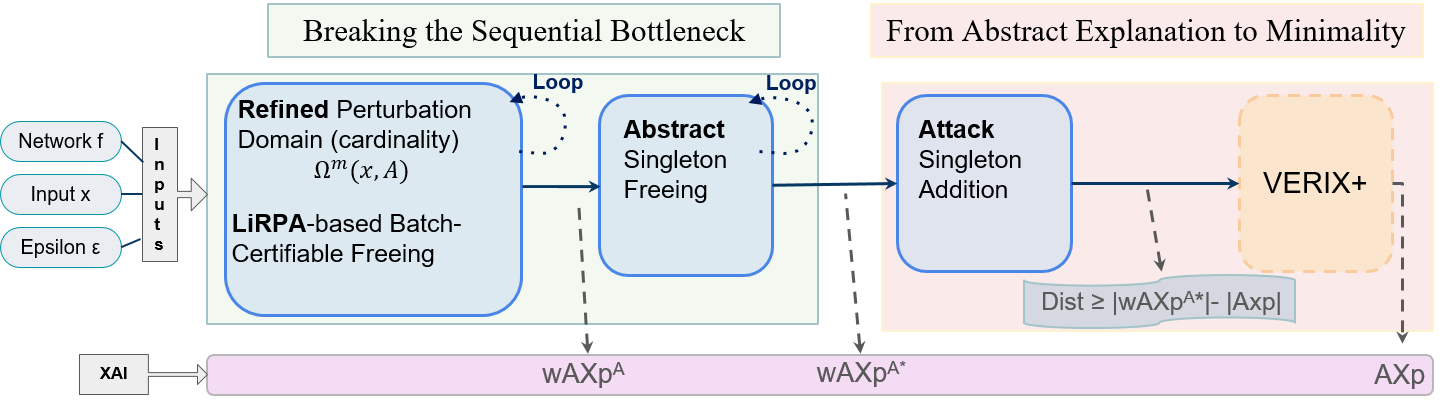

FAME scales provable explanations to large neural networks by using abstract interpretation to certify and discard batches of irrelevant features simultaneously, removing the need for sequential traversal.

Core Problem

Formal explanations (AXp) provide mathematical guarantees but rely on exact verification (NP-hard) and sequential feature elimination, making them intractable for large networks like ResNets.

Why it matters:

- Lack of transparency in neural networks undermines trust in high-stakes environments where reasoning must be verified

- Existing formal methods face a sequential bottleneck (traversal order), preventing effective parallelization and scaling

- Heuristic explanations estimate importance but lack provable correctness, while exact formal methods cannot handle realistic model sizes

Concrete Example:

In a classification task, determining which pixels are essential for a prediction typically requires checking them one by one. Exact solvers might time out verifying a robust feature set for a ResNet on CIFAR-10, whereas FAME's abstract approach can identify the subset efficiently.

Key Novelty

Abstract Batch Certificate via LiRPA

- Defines explanations on an abstract domain (using linear relaxation bounds) rather than the concrete domain, allowing for faster, conservative verification

- Eliminates sequential feature traversal by formulating feature selection as a Knapsack problem, identifying a 'batch' of irrelevant features that can be removed simultaneously

- Leverages the asymmetry of verification: proving features are irrelevant (freeing them) requires handling complex dependencies, which the proposed certificate does via a joint upper bound

Architecture

Conceptual flow of the FAME framework

Evaluation Highlights

- First method to produce abstract formal abductive explanations for a ResNet architecture on CIFAR-10, where exact methods are intractable

- Achieves O(n) time complexity for solving the feature selection Knapsack problem in binary classification settings

- Demonstrates consistent gains in explanation size and runtime compared to the SOTA baseline VERIX+ on medium- to large-scale networks

Breakthrough Assessment

8/10

Significant methodology shift from exact to abstract verification for XAI. Solving the scalability bottleneck of formal explanations (ResNet/CIFAR-10 scale) is a major step forward, though reliance on approximations introduces a precision trade-off.