📝 Paper Summary

Adversarial Attacks on LLMs

Safety Alignment

Scaling Laws

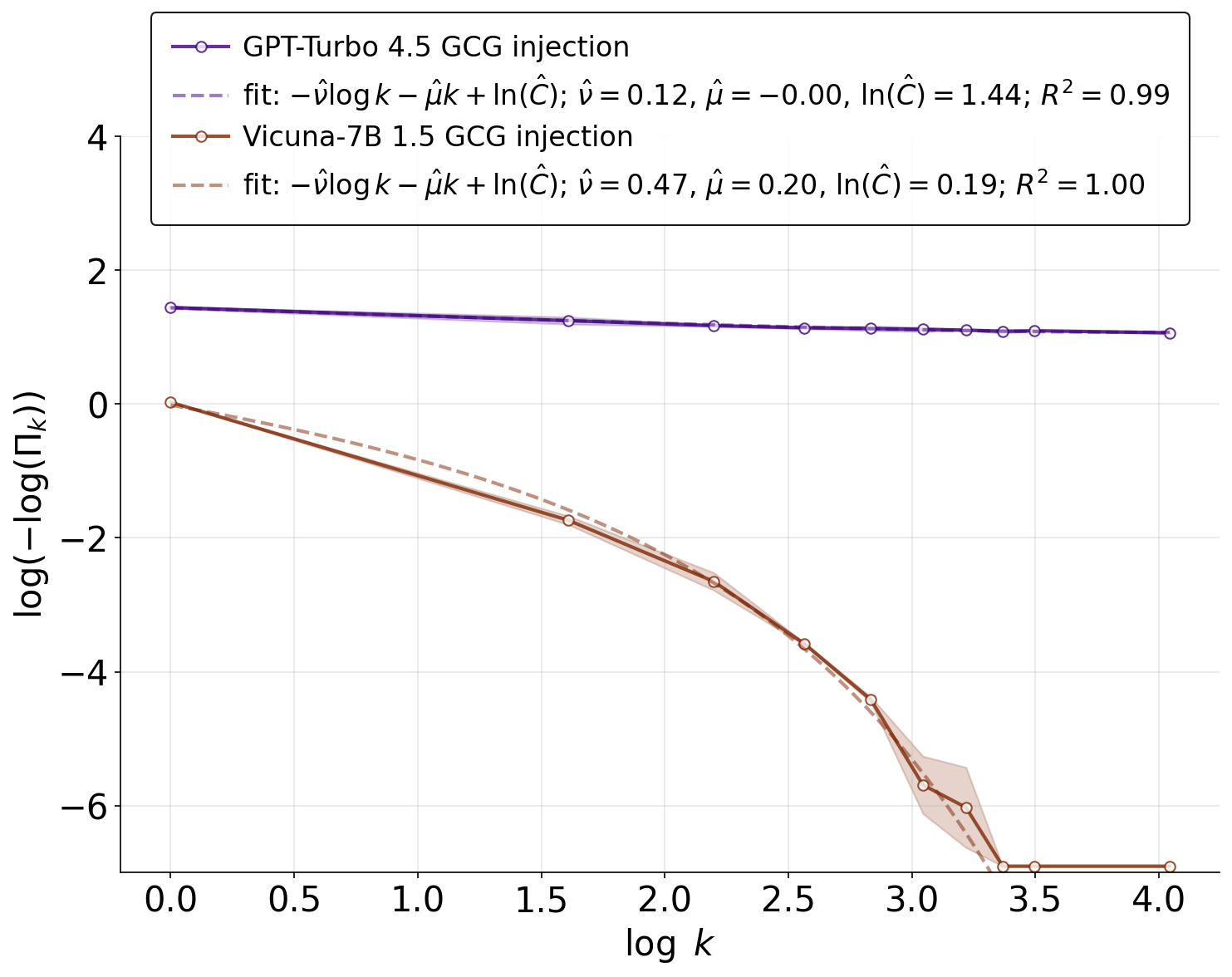

The jailbreak success rate of LLMs, measured against the number of inference-time samples, shifts from slow polynomial growth to fast exponential growth as the injected adversarial prompt gets longer. A phase transition in a spin-glass theoretical model explains the shift.

Core Problem

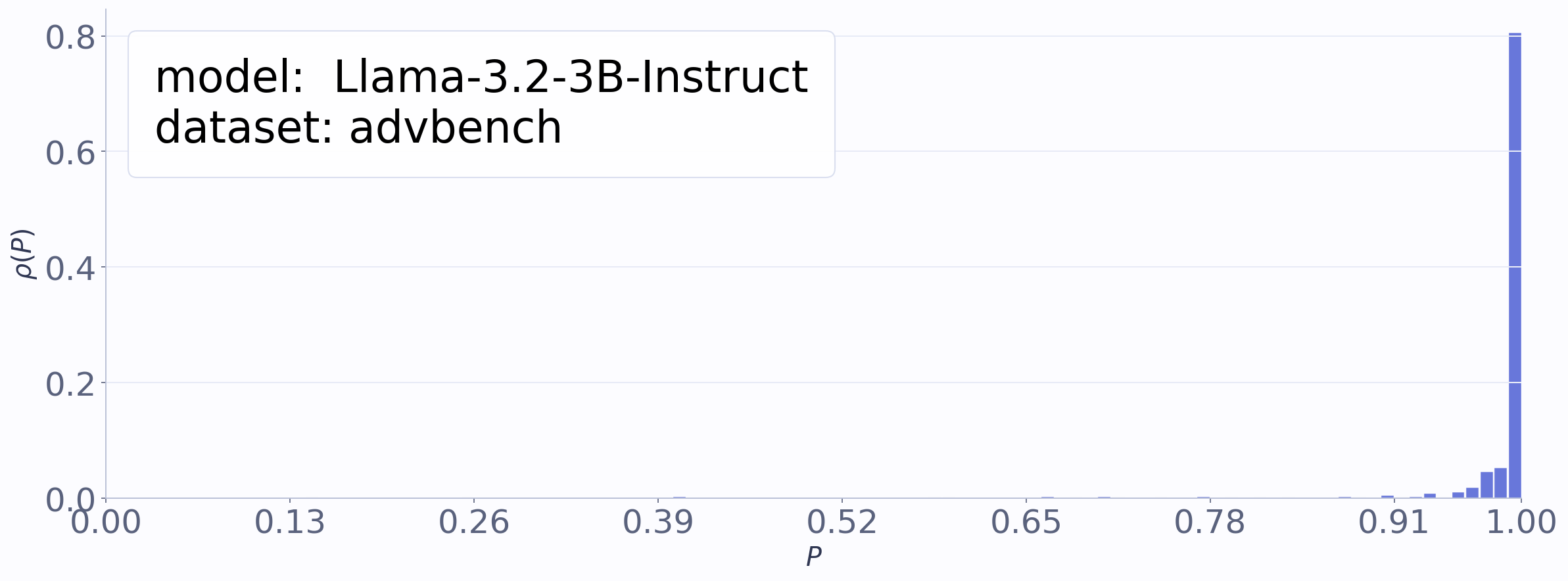

Safety-aligned LLMs can be 'jailbroken' to produce harmful content, but it is unknown how the attack success rate scales with the number of inference-time samples under prompt injection.

Why it matters:

- Understanding scaling laws is crucial for predicting the safety risks of future, more capable models deployed with extensive inference budgets

- Current defenses often assume polynomial scaling of attacks, potentially underestimating the threat of exponential success rates driven by prompt injection

- The result exposes a fundamental vulnerability in alignment: strong adversarial perturbations can qualitatively change the model's generation landscape from a disordered state to an ordered unsafe state

Concrete Example:

When a user asks for a 'cocktail' recipe, the model refuses. If an attacker injects a long adversarial suffix and samples the model 100 times, the probability of getting at least one harmful recipe grows exponentially with the number of samples rather than polynomially, which drastically reduces the cost of a successful attack.
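The gap between the two regimes can be illustrated with a toy calculation. This is a minimal sketch under stated assumptions, not the paper's model: it reads "exponential" and "polynomial" as the rate at which the failure probability 1 − ASR shrinks with the number of samples k, and it uses a Beta-distributed per-prompt success probability as a hypothetical way to produce power-law behaviour. The function names and parameter values are illustrative only.

```python
import math


def asr_fixed_p(p: float, k: int) -> float:
    """ASR after k independent samples when each succeeds with probability p."""
    return 1.0 - (1.0 - p) ** k


def asr_beta_mixture(a: float, b: float, k: int) -> float:
    """ASR after k samples, averaged over prompts with p ~ Beta(a, b).

    Uses E[(1 - p)^k] = B(a, b + k) / B(a, b), evaluated in log space.
    """
    log_fail = (
        math.lgamma(b + k) + math.lgamma(a + b)
        - math.lgamma(a + b + k) - math.lgamma(b)
    )
    return 1.0 - math.exp(log_fail)


if __name__ == "__main__":
    for k in (1, 10, 100, 1000):
        # Hypothetical parameters chosen only for illustration.
        print(k, asr_fixed_p(0.05, k), asr_beta_mixture(1.0, 1.0, k))
```

With a fixed per-sample success probability, the failure probability is (1 − p)^k and decays exponentially in k. With the uniform prior Beta(1, 1), the averaged failure probability is 1/(k + 1), which decays only as a power law.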

Key Novelty

SpinLLM: A Spin-Glass Model for Jailbreaking Dynamics

- Models LLM generation as a spin-glass system where 'safe' and 'unsafe' outputs correspond to low-energy clusters in a rugged energy landscape

- Jailbreak prompts act as an external 'magnetic field' tilting the landscape toward unsafe clusters

- Predicts a phase transition: weak fields (short prompts) yield polynomial scaling of attack success, while strong fields (long prompts) trigger an ordered phase leading to exponential scaling
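For orientation, a textbook spin-glass energy with an external field has the form below. This is the generic Sherrington-Kirkpatrick-style expression, shown only as a sketch; the symbols ($\sigma_i$, $J_{ij}$, $h$, $\beta$) are assumptions introduced here, and SpinLLM's exact definition may differ.

```latex
H(\sigma) = -\sum_{i<j} J_{ij}\,\sigma_i \sigma_j \;-\; h \sum_{i} \sigma_i,
\qquad
P(\sigma) = \frac{e^{-\beta H(\sigma)}}{\sum_{\sigma'} e^{-\beta H(\sigma')}}
```

In this generic form, the random couplings $J_{ij}$ produce the rugged landscape, the field $h$ plays the role the bullets assign to the jailbreak prompt, and $\beta$ is the inverse temperature. Increasing $h$ lowers the energy of configurations aligned with the field, which corresponds to tilting the landscape toward one cluster.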

Architecture

Conceptual illustration of the SpinLLM energy landscape. Safe and unsafe generations correspond to different clusters (valleys) in the energy landscape.

Evaluation Highlights

- Empirically confirms the transition from polynomial to exponential scaling of Attack Success Rate (ASR) on Llama-2-7B and Vicuna-7B v1.5

- Demonstrates that stronger models like GPT-4.5 Turbo maintain polynomial scaling (slower ASR growth) under attacks that cause exponential scaling in weaker models

- Analytically derives the specific power-law exponents and exponential decay rates based on spin-glass parameters (temperature, magnetic field)
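One rough way to tell the two regimes apart in measured data is to check whether log(1 − ASR) is closer to linear in k (exponential decay) or in log k (power-law decay). The sketch below is an illustrative diagnostic, not the paper's fitting procedure; the function names are hypothetical, and it assumes the regimes refer to how the failure probability 1 − ASR shrinks with the number of samples k.

```python
import math


def linear_fit_r2(xs, ys):
    """Return (slope, R^2) of an ordinary least-squares line through (xs, ys)."""
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxy / sxx, (sxy * sxy) / (sxx * syy)


def classify_scaling(ks, asrs):
    """Label ASR-vs-k data as 'exponential' or 'polynomial'.

    Every ASR value must be strictly below 1 so that log(1 - ASR) is defined.
    """
    log_fail = [math.log(1.0 - a) for a in asrs]
    # Exponential decay: log(1 - ASR) is linear in k.
    _, r2_exp = linear_fit_r2([float(k) for k in ks], log_fail)
    # Power-law decay: log(1 - ASR) is linear in log k.
    _, r2_pow = linear_fit_r2([math.log(k) for k in ks], log_fail)
    return "exponential" if r2_exp > r2_pow else "polynomial"


if __name__ == "__main__":
    ks = [1, 2, 4, 8, 16, 32, 64]
    # Synthetic curves, not measurements from any model.
    print(classify_scaling(ks, [1 - 0.9 ** k for k in ks]))
    print(classify_scaling(ks, [1 - 0.5 * k ** -0.7 for k in ks]))
```

The slope of the better-fitting line gives an estimate of the decay rate (semi-log fit) or the power-law exponent (log-log fit). Comparing R² values is a crude criterion; real data near the transition may fit neither form cleanly.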

Breakthrough Assessment

8/10

Provides a rigorous theoretical grounding (spin-glass physics) for empirical scaling laws in adversarial attacks, successfully predicting a distinct phase transition in safety behavior.