📝 Paper Summary

Molecular Computing

Bio-inspired Computing

A chemical reaction network (CRN) governed by mass-action kinetics is mathematically proven to solve classification tasks without hidden layers, outperforming spiking neural networks, which require hidden layers for the same tasks.

Core Problem

Biochemical networks in cells process information, but it is unclear whether they can perform supervised learning as efficiently as neuronal networks, particularly with respect to the need for hidden layers.

Why it matters:

- Understanding how biological cells might learn via chemical signals offers a new paradigm distinct from neuronal learning

- Providing mathematical guarantees for learning in chemical computers motivates the design of synthetic biological circuits for computation

- Current implementations of CRN learning lack rigorous theoretical proofs or finite-time guarantees comparable to statistical learning theory

Concrete Example:

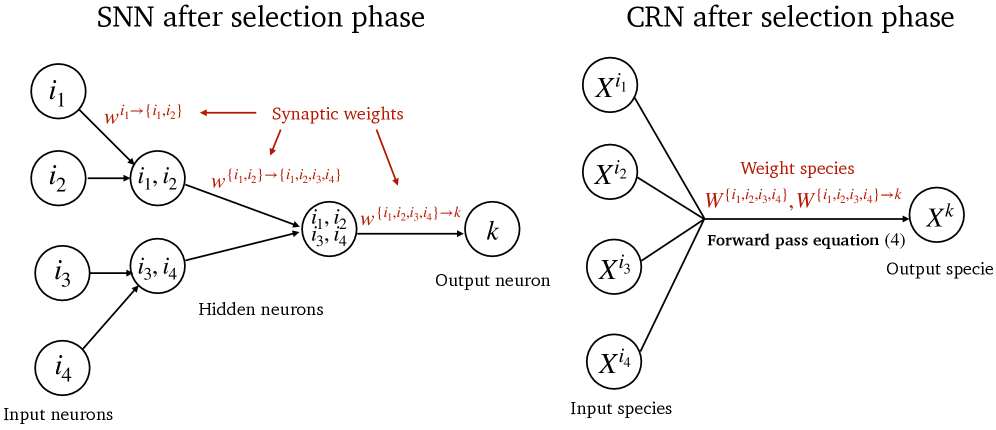

In a Spiking Neural Network (SNN), detecting a correlation between multiple inputs requires a hidden layer to approximate multiplication. In the proposed CRN, the reaction flux naturally computes the product of reactant concentrations, allowing the system to detect complex feature combinations directly without hidden layers.
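The multiplication described above can be illustrated with a minimal sketch of a mass-action rate law. The function name `mass_action_flux`, the unit stoichiometry, and the example concentrations are assumptions made for illustration; they are not taken from the paper.

```python
from math import prod


def mass_action_flux(rate_constant, concentrations):
    """Flux of one reaction under mass-action kinetics.

    Assumes every reactant has stoichiometric coefficient 1, so the flux is
    the rate constant times the product of the reactant concentrations.
    """
    return rate_constant * prod(concentrations)


# Two hypothetical input species A and B, each either absent (0.0) or present (1.0).
# The flux of A + B -> product is non-zero only when both inputs are present,
# so the reaction detects the co-occurrence of the two inputs directly.
for a, b in [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]:
    print(f"A={a}, B={b}, flux={mass_action_flux(1.0, [a, b])}")
```

A single weighted sum of A and B cannot separate "both present" from "either present" without a threshold or an extra layer, whereas the product does so in one step.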

Key Novelty

Deterministic Mass-Action Learning without Hidden Layers

- Exploits the physics of mass-action kinetics where reaction rates are products of concentrations, naturally implementing high-order feature interactions (multiplication) that neural networks must approximate via layers

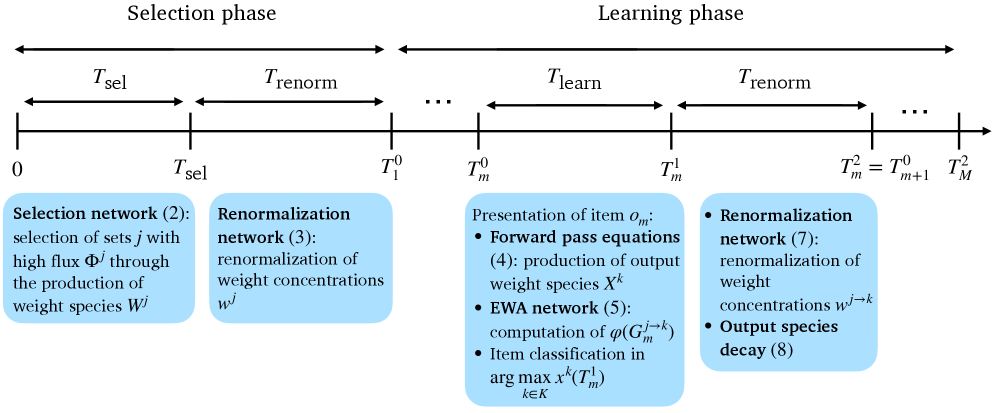

- Embeds an expert aggregation algorithm (Exponentially Weighted Average) directly into chemical rate equations to update virtual 'weights' based on classification success

- Proves that a single-layer CRN can solve tasks for which SNNs need multiple layers, making the CRN the more efficient architecture in theory
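For readers unfamiliar with the Exponentially Weighted Average rule mentioned in the list above, the following is a minimal sketch of the generic textbook forecaster, not the paper's chemical embedding. The function names, the learning rate `eta`, and the loss values are hypothetical; how the paper maps weights to species concentrations and rate equations is not described in this summary.

```python
import math


def ewa_update(weights, losses, eta):
    """One round of the Exponentially Weighted Average update.

    Each expert's weight is multiplied by exp(-eta * loss), then all weights
    are renormalized so they sum to 1.
    """
    scaled = [w * math.exp(-eta * loss) for w, loss in zip(weights, losses)]
    total = sum(scaled)
    return [s / total for s in scaled]


def ewa_predict(weights, expert_predictions):
    """Aggregate the experts' predictions as a weighted average."""
    return sum(w * p for w, p in zip(weights, expert_predictions))


# Three hypothetical experts with fixed per-round losses:
# expert 0 is always right, expert 1 is half right, expert 2 is always wrong.
weights = [1 / 3, 1 / 3, 1 / 3]
for _ in range(20):
    weights = ewa_update(weights, [0.0, 0.5, 1.0], eta=0.5)

print(weights)  # weight concentrates on expert 0
```

In this sketch the weights play the role of the virtual 'weights' in the summary: experts that classify well gain weight multiplicatively, and poor experts decay exponentially.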

Architecture

Timeline and reaction scheme of the Selection and Learning phases

Evaluation Highlights

- Mathematically proves the CRN achieves optimal regret bounds of order M * sqrt(M) without hidden layers

- Demonstrates that CRNs have a Vapnik–Chervonenkis (VC) dimension determined by the number of selected high-flux reactions

- Outperforms Spiking Neural Networks with hidden layers in accuracy and efficiency on handwritten digit classification (numerical experiment)

Breakthrough Assessment

9/10

Provides the first rigorous mathematical proof that chemical networks can be more efficient learners than spiking neural networks by leveraging intrinsic physical laws (mass action) for computation.