📝 Paper Summary

Scientific Machine Learning (SciML)

Physics-Informed Deep Learning

Generative Modeling

Turbulence Modeling

Metamaterial Design

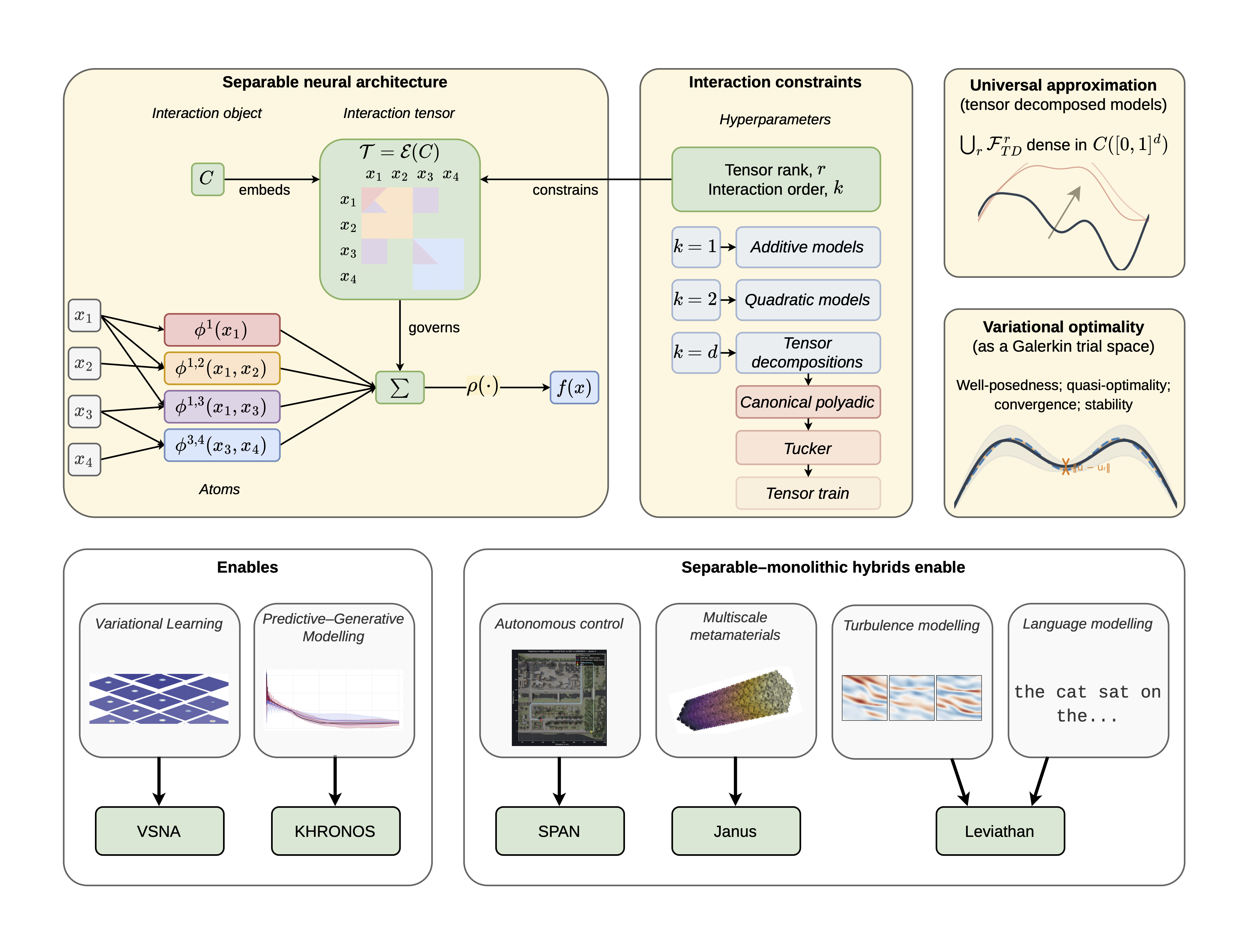

The Separable Neural Architecture (SNA) serves as a unified primitive that exploits latent factorisable structure in physical systems to enable highly compact, invertible, and distributionally accurate modeling across dynamics and materials.

Core Problem

Monolithic architectures (like CNNs and standard Transformers) fail to exploit the factorisable structure inherent in physical systems, leading to excessive parameter counts, opacity, and non-physical drift when modeling chaotic dynamics.

Why it matters:

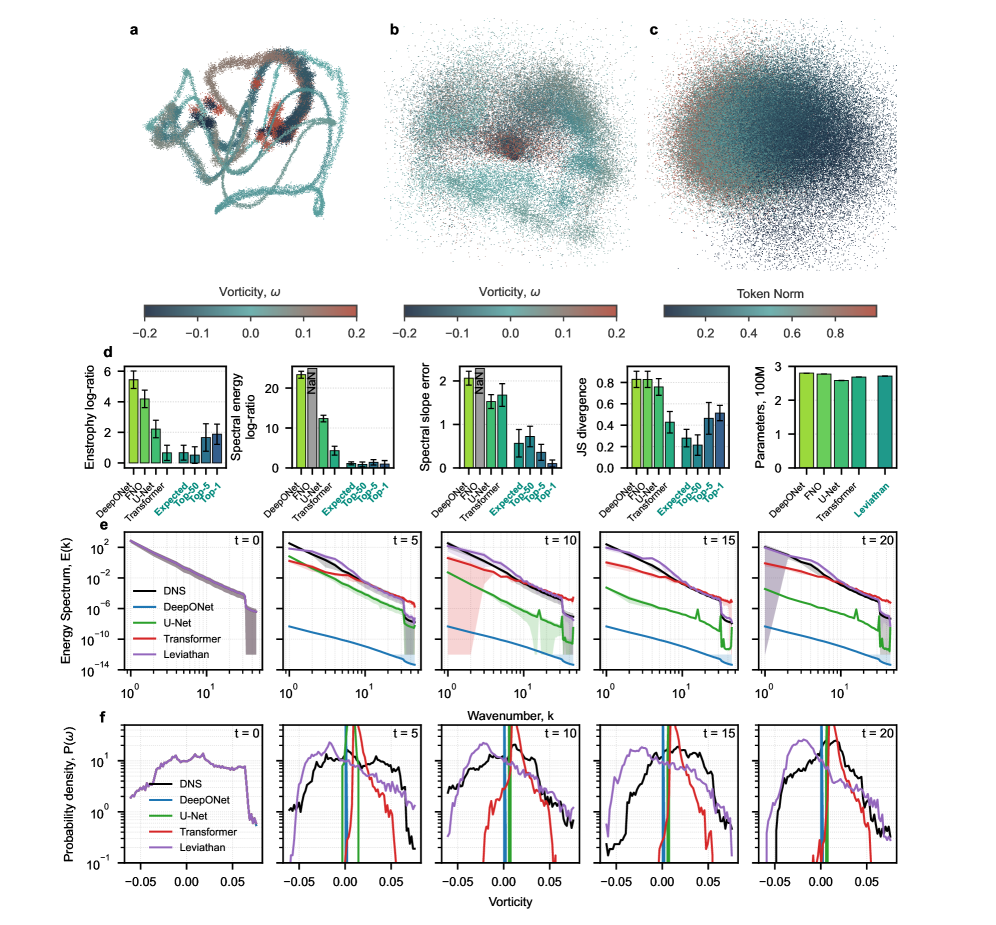

- In chaotic systems such as turbulence, nearby trajectories diverge exponentially; deterministic point-wise models therefore fail to capture long-term statistics, leading to 'drift-to-mean' and non-physical states

- Inverse design of materials typically requires expensive surrogate optimization or separate inverse networks, as monolithic forward models are opaque and hard to invert

- Traditional numerical methods such as the finite element method (FEM) suffer from the 'curse of dimensionality' in high-dimensional spatiotemporal-parametric fields

Concrete Example:

In modeling thermal histories for directed energy deposition, standard CNNs require ~11 million parameters and act as black boxes. In contrast, the proposed KHRONOS model uses only ~240 parameters, achieves higher accuracy, and supports analytic inversion in 50 ms to recover fabrication parameters.

Key Novelty

Separable Neural Architecture (SNA) as a Primitive

- Formalizes a neural primitive based on tensor decomposition that constructs high-dimensional mappings from low-arity 'atoms' (learnable 1D functions) governed by a sparse interaction tensor

- Introduces 'coordinate-aware' embeddings that preserve physical neighborhood relations, treating continuous physical states as smooth, separable embeddings rather than discrete tokens

- Unifies distinct modeling tasks: acts as a standalone predictor (KHRONOS), a variational trial space for PDEs (VSNA), or a distributional embedding module (Leviathan) within larger systems
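The separable construction described in the list above can be illustrated with a minimal sketch. It assumes a canonical-polyadic-style form, f(x) = Σ_r Π_d φ_{r,d}(x_d), in which each atom φ is a piecewise-linear function on a uniform grid over [0, 1]. This form, the hat basis, and all names (`hat_basis`, `atom`, `separable_forward`, `count_params`, `dense_grid_params`) are illustrative assumptions rather than the paper's implementation, and the sparse interaction tensor is simplified here to a plain sum over modes.

```python
import random


def hat_basis(x, n_knots):
    """Piecewise-linear 'hat' basis values at x in [0, 1] on a uniform grid."""
    h = 1.0 / (n_knots - 1)
    return [max(0.0, 1.0 - abs(x - k * h) / h) for k in range(n_knots)]


def atom(x, coeffs):
    """A 1D 'atom': a linear combination of hat basis functions (assumed form)."""
    return sum(c * b for c, b in zip(coeffs, hat_basis(x, len(coeffs))))


def separable_forward(xs, params):
    """Sum over modes of products of per-coordinate atoms.

    params[r][d] holds the coefficients of the atom for mode r, coordinate d.
    """
    total = 0.0
    for mode in params:
        prod = 1.0
        for x_d, coeffs in zip(xs, mode):
            prod *= atom(x_d, coeffs)
        total += prod
    return total


def count_params(n_modes, n_dims, n_knots):
    """Parameter count of the separable model: linear in the dimension."""
    return n_modes * n_dims * n_knots


def dense_grid_params(n_dims, n_knots):
    """Parameter count of a full tensor-product grid: exponential in the dimension."""
    return n_knots ** n_dims


# Hypothetical sizes chosen only for illustration.
rng = random.Random(0)
n_modes, n_dims, n_knots = 3, 6, 8
params = [
    [[rng.uniform(-1.0, 1.0) for _ in range(n_knots)] for _ in range(n_dims)]
    for _ in range(n_modes)
]
y = separable_forward([0.5] * n_dims, params)
```

With these hypothetical sizes, the separable model holds 3 × 6 × 8 = 144 coefficients, while a dense tensor-product grid at the same per-axis resolution would need 8^6 = 262,144. That linear-versus-exponential scaling in the number of coordinates is the kind of compression the summary attributes to SNA.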

Architecture

Conceptual diagram of the Separable Neural Architecture (SNA) as a primitive across three modes: Standalone (KHRONOS), Variational (VSNA), and Composite (Janus/Leviathan/SPAN).

Evaluation Highlights

- KHRONOS achieves state-of-the-art accuracy (R² = 0.76) on thermal history prediction with 4-5 orders of magnitude fewer parameters (~240 vs ~11M) than CNN baselines

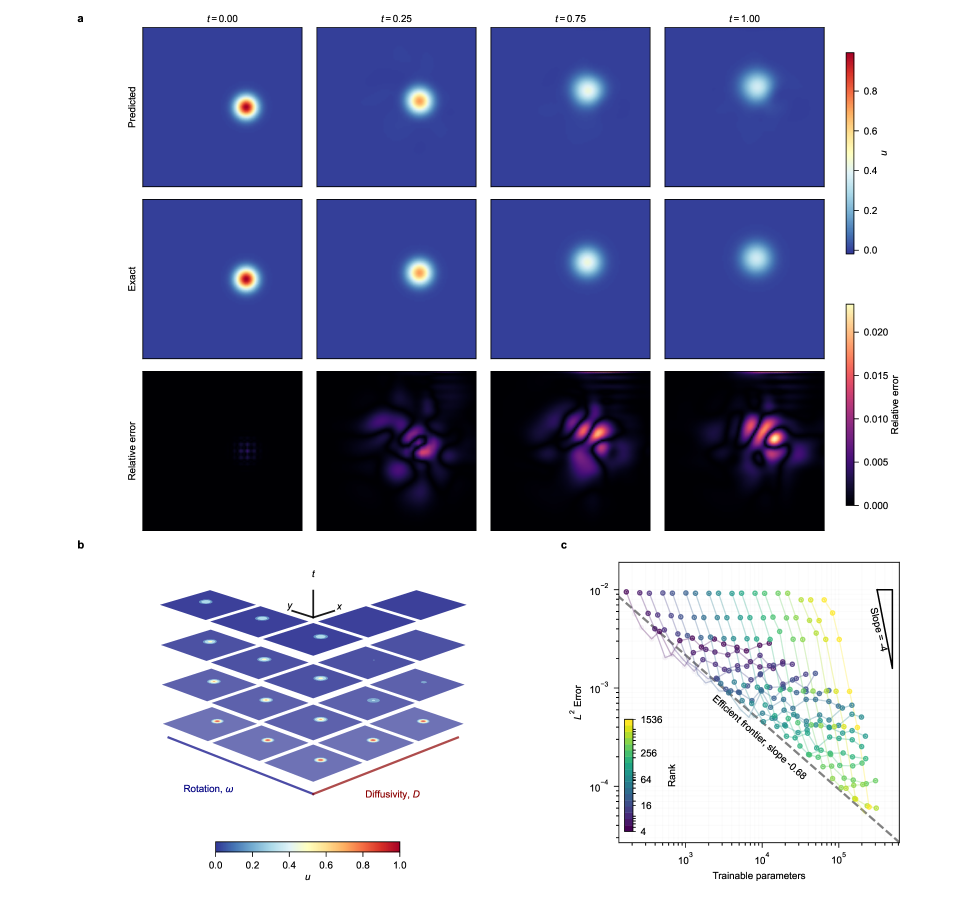

- VSNA solves 6D advection-diffusion PDEs with 3 orders of magnitude fewer parameters than a cubic B-spline finite element method (FEM) at comparable error

- Leviathan preserves physical spectral energy and vorticity distributions over 20-step chaotic turbulence rollouts, whereas deterministic baselines (FNO, DeepONet) suffer catastrophic collapse
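As a quick sanity check on the "4-5 orders of magnitude" figure in the first highlight, the ratio can be computed directly from the two parameter counts quoted in this summary. Both counts are approximate in the source, so the result is indicative only; the variable names are illustrative.

```python
import math

cnn_params = 11_000_000  # "~11M" CNN baseline, as quoted in the summary
khronos_params = 240     # "~240" KHRONOS parameters, as quoted in the summary

ratio = cnn_params / khronos_params  # how many times larger the CNN is
orders = math.log10(ratio)           # the same ratio in orders of magnitude

print(f"ratio = {ratio:,.0f}x, orders of magnitude = {orders:.2f}")
```

The ratio works out to roughly 46,000-fold, or about 4.7 orders of magnitude, which falls inside the stated 4-5 range.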

Breakthrough Assessment

9/10

Proposes a fundamental structural primitive that unifies additive, quadratic, and tensor models. Demonstrates large efficiency gains (parameter compression on the order of 10,000x) and qualitative improvements in stability on chaotic systems, where standard neural operators fail.