📝 Paper Summary

Mechanistic Interpretability

Feature Superposition

Neural Network Geometry

Correlated features in neural networks organize into geometric structures that use interference constructively to aid reconstruction, instead of forming regular polytopes that suppress interference as noise.

Core Problem

Existing theories of superposition assume features are sparse and uncorrelated. They predict 'interference-filtering' geometries (regular polytopes), which fail to explain the semantic clusters and circular structures observed in real language models.

Why it matters:

- Current geometric theories cannot account for structures found in real LLMs (e.g., ordered circles of features like months, anisotropic clusters)

- Understanding feature geometry is critical for dictionary learning (Sparse Autoencoders), knowledge editing, and adversarial robustness

- Treating all interference as noise ignores the efficiency gains available from exploiting data correlations

Concrete Example:

Standard superposition theory predicts that features should form a regular polytope to minimize their pairwise dot products, which it treats as noise. However, real LLMs represent 'months of the year' as a circle. Standard theory cannot explain why this structure is optimal, because a circle increases the dot products between adjacent months instead of minimizing them.
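To make the tension concrete, here is a minimal toy sketch (not the paper's code) that places 12 'month' features evenly on a unit circle in 2D and inspects their dot products. The helper `circle_embedding` and the smooth 'bump' input are hypothetical choices made only for illustration.

```python
import numpy as np

def circle_embedding(n_features: int) -> np.ndarray:
    """Return n_features unit vectors spaced evenly on a circle, shape (n, 2)."""
    angles = 2.0 * np.pi * np.arange(n_features) / n_features
    return np.stack([np.cos(angles), np.sin(angles)], axis=1)

W = circle_embedding(12)          # one 2D direction per month
gram = W @ W.T                    # pairwise dot products between months

adjacent_dot = gram[0, 1]         # cos(30 degrees): large positive overlap
opposite_dot = gram[0, 6]         # cos(180 degrees): antipodal months

# Assumed correlated input: months close to index 2 are co-active,
# with activity falling off smoothly around the circle.
x = np.maximum(gram[2], 0.0)

# Linear encode/decode through the 2D bottleneck.
x_hat = W @ (W.T @ x)
peak = int(np.argmax(x_hat))      # index of the most strongly reconstructed month
```

Under this assumed input, the overlap between neighboring months adds to the reconstruction of the months that are actually active, so the peak of `x_hat` stays at the center of the bump. A theory that treats every off-diagonal entry of `gram` as noise would count those same overlaps as error.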

Key Novelty

Constructive Interference in Linear Superposition

- Demonstrates that when data has low-rank covariance, interference from correlated features can align with the target signal rather than acting as noise

- Introduces Bag-of-Words Superposition (BOWS), a framework using real text data to study how realistic correlations drive feature geometry beyond idealized independent setups

- Identifies that weight decay and tight bottlenecks favor these 'constructive' low-rank solutions over the sparse, interference-filtering solutions predicted by prior work
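The first point can be illustrated with a small numerical sketch. It assumes Gaussian toy data and uses PCA (computed via SVD) as a stand-in for an optimal linear autoencoder. Neither choice comes from the paper, and the names `relative_error`, `X_corr`, and `X_ind` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
d, r, m, n = 12, 2, 2, 5000   # features, covariance rank, bottleneck width, samples

# Correlated features: every sample lies in an r-dimensional subspace,
# so the feature covariance has rank r.
A = rng.normal(size=(d, r))
X_corr = rng.normal(size=(n, r)) @ A.T

# Uncorrelated baseline: d independent features.
X_ind = rng.normal(size=(n, d))

def relative_error(X: np.ndarray, m: int) -> float:
    """Relative squared error of the best rank-m linear reconstruction (PCA)."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    W = Vt[:m]                      # (m, d) encoder with orthonormal rows
    X_hat = Xc @ W.T @ W            # encode, then decode with tied weights
    return float(np.sum((Xc - X_hat) ** 2) / np.sum(Xc ** 2))

err_corr = relative_error(X_corr, m)   # near zero: the bottleneck matches the rank
err_ind = relative_error(X_ind, m)     # large: most of the variance is lost
```

With low-rank covariance, a purely linear map through an `m`-dimensional bottleneck recovers all `d` features, even though their embedding directions overlap heavily. With independent features, the same bottleneck discards most of the signal.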

Architecture

The Bag-of-Words Superposition (BOWS) data pipeline and Autoencoder setup
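As a rough sketch of what such a pipeline could look like, the code below turns text windows into binary bag-of-words vectors and passes them through a small ReLU autoencoder. The windowing, the binary (presence/absence) encoding, the tied encoder/decoder weights, and the names `bag_of_words` and `relu_autoencoder_forward` are all assumptions for illustration; the summary does not specify these details.

```python
import numpy as np

def bag_of_words(windows: list[str], vocab: list[str]) -> np.ndarray:
    """Binary matrix of shape (n_windows, n_vocab): 1 if the word occurs in the window."""
    index = {word: i for i, word in enumerate(vocab)}
    X = np.zeros((len(windows), len(vocab)), dtype=np.float32)
    for row, window in enumerate(windows):
        for token in window.lower().split():
            col = index.get(token)
            if col is not None:       # ignore out-of-vocabulary tokens
                X[row, col] = 1.0
    return X

def relu_autoencoder_forward(X: np.ndarray, W: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Assumed tied-weight autoencoder: x_hat = ReLU(W^T W x + b), with W of shape (m, d)."""
    H = X @ W.T                       # (n, m) bottleneck activations
    return np.maximum(H @ W + b, 0.0)

# Tiny hypothetical corpus and vocabulary.
windows = [
    "rain in january and february",
    "snow in january",
    "sun in july and august",
]
vocab = ["january", "february", "july", "august"]

X = bag_of_words(windows, vocab)

rng = np.random.default_rng(0)
W = rng.normal(scale=0.5, size=(2, len(vocab)))   # bottleneck of width m = 2
b = np.zeros(len(vocab))
X_hat = relu_autoencoder_forward(X, W, b)          # untrained forward pass
```

Because words that co-occur in the same window are active together, the rows of `X` carry the kind of realistic correlations that independent synthetic features lack.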

Evaluation Highlights

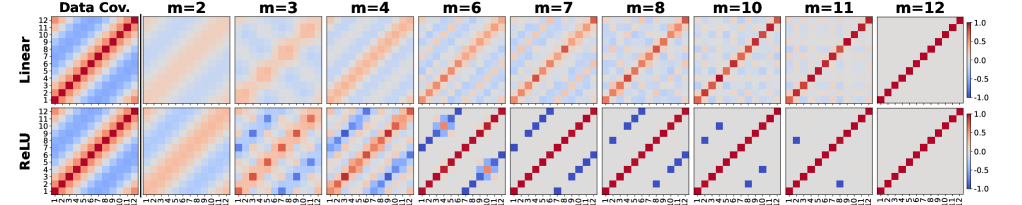

- Synthetic experiments show ReLU Autoencoders abandon interference-filtering (antipodal) structures for constructive interference (circular) structures when bottlenecks are tight (m < 6 for 12 features)

- Formalizes 'linear superposition' where linear decoders can recover features typically thought to require non-linear filtering

- Demonstrates that weight decay biases models toward low-rank solutions ($\|W\|_F^2 \approx m$) over sparse solutions ($\|W\|_F^2 \approx d$)
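One way to read the two norm scales in the last point is sketched below. The sketch assumes `m` is the bottleneck width and `d` the number of features. It takes the 'sparse' solution to give every feature its own unit-norm direction, and the 'low-rank' solution to be a projection with orthonormal rows. These interpretations are assumptions, not statements from the paper.

```python
import numpy as np

d, m = 12, 2                                   # features, bottleneck width
angles = 2.0 * np.pi * np.arange(d) / d
circle = np.stack([np.cos(angles), np.sin(angles)])   # shape (m, d)

# One unit-norm column per feature: squared Frobenius norm is d.
W_unit = circle
norm_unit = float(np.sum(W_unit ** 2))

# Same directions rescaled so the m rows are orthonormal: squared Frobenius norm is m.
W_lowrank = circle / np.sqrt(d / m)
norm_lowrank = float(np.sum(W_lowrank ** 2))

rows_orthonormal = np.allclose(W_lowrank @ W_lowrank.T, np.eye(m))
```

Under these assumptions, an L2 penalty on the weights charges the unit-norm configuration roughly `d / m` times more than the orthonormal-row configuration. That gap is consistent with weight decay favoring the low-rank solution when the bottleneck is tight.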

Breakthrough Assessment

7/10

Provides a significant theoretical correction to the standard view of superposition, explaining empirically observed structures (circles, clusters) that prior theories could not. The BOWS framework offers a valuable testbed.