📝 Paper Summary

Smart Contract Security

Automated Program Repair (APR)

LLM for Code

ContractTinker repairs complex smart contract vulnerabilities by decomposing the task via Chain-of-Thought reasoning and grounding the LLM with static analysis (dependency graphs and program slicing).

Core Problem

Existing repair tools rely on predefined patterns that fail on high-level business logic bugs, while standard LLMs suffer from hallucinations and lack context when repairing complex real-world contracts.

Why it matters:

- Smart contracts manage significant financial assets, making them high-value targets for attackers

- Real-world vulnerabilities often involve complex business logic (e.g., price manipulation) rather than simple low-level bugs like re-entrancy

- Manual repair is labor-intensive and requires deep security expertise that many developers lack

Concrete Example:

A contract might have a price manipulation vulnerability where a function calculates an asset price insecurely. Pattern-based tools miss this because the flaw is specific to the contract's business logic rather than a known code pattern. A standard LLM might attempt a fix but hallucinate variables that do not exist in the code, or miss dependencies in other contracts.
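To make the price manipulation scenario concrete, here is a minimal Python simulation. It is not taken from the paper: the `Pool` class, the fee-free constant-product formula, and `collateral_value` are illustrative assumptions chosen to show why reading a price directly from current reserves is insecure.

```python
from dataclasses import dataclass


@dataclass
class Pool:
    """Hypothetical constant-product pool: reserve_token * reserve_usd = k."""
    reserve_token: float
    reserve_usd: float

    def spot_price(self) -> float:
        # Insecure price source: derived directly from the current reserves.
        return self.reserve_usd / self.reserve_token

    def swap_usd_for_token(self, usd_in: float) -> float:
        # Fee-free constant-product swap (a simplification for illustration).
        k = self.reserve_token * self.reserve_usd
        new_usd = self.reserve_usd + usd_in
        new_token = k / new_usd
        token_out = self.reserve_token - new_token
        self.reserve_usd, self.reserve_token = new_usd, new_token
        return token_out


def collateral_value(pool: Pool, token_amount: float) -> float:
    # Vulnerable logic: values collateral at the manipulable spot price.
    return token_amount * pool.spot_price()


pool = Pool(reserve_token=1_000.0, reserve_usd=1_000.0)
before = collateral_value(pool, 10.0)
pool.swap_usd_for_token(1_000.0)  # attacker's large swap skews the reserves
after = collateral_value(pool, 10.0)
```

In this toy setup the same 10 tokens are valued at 10.0 before the swap and 40.0 after it. No single line matches a known bug pattern, which is why a repair needs to reason about the logic and its dependencies.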

Key Novelty

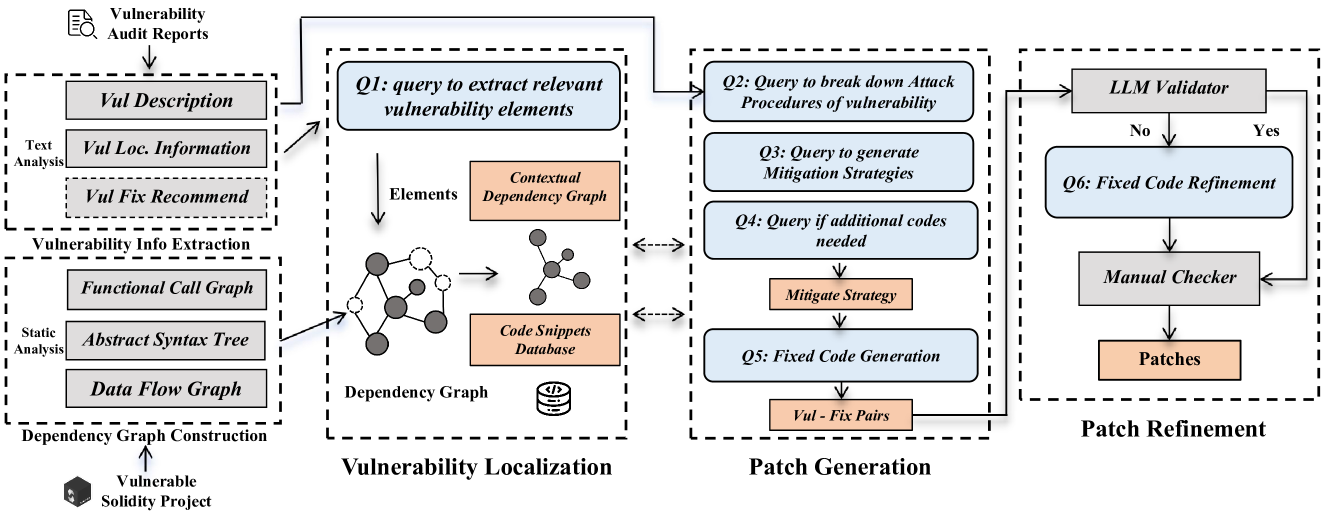

Context-Aware Chain-of-Thought for Repair

- Decomposes the repair process into steps simulating a security expert: Attack Analysis -> Strategy Generation -> Code Patching

- Injects static analysis results (Dependency Graphs, Program Slices) at each reasoning step to ground the LLM's logic in the actual codebase structure
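The two points above can be sketched as a three-step prompt chain in which every step receives the same static-analysis context. This is a hedged illustration only: the `LLM` callable, the prompt wording, and the `build_prompt` and `repair` helpers are assumptions, not ContractTinker's actual interface.

```python
from typing import Callable, Dict

# Hypothetical model interface: takes a prompt, returns a completion.
LLM = Callable[[str], str]


def build_prompt(task: str, context: Dict[str, str], previous: str = "") -> str:
    """Assemble one reasoning step, grounded in static-analysis output."""
    sections = [f"## Task\n{task}"]
    for name, body in context.items():
        sections.append(f"## {name}\n{body}")
    if previous:
        # Feed the prior step's output forward so the chain stays connected.
        sections.append(f"## Previous step\n{previous}")
    return "\n\n".join(sections)


def repair(llm: LLM, report: str, dependency_graph: str, code_slice: str) -> Dict[str, str]:
    """Run Attack Analysis -> Strategy Generation -> Code Patching."""
    context = {
        "Audit finding": report,
        "Dependency graph": dependency_graph,
        "Program slice": code_slice,
    }
    analysis = llm(build_prompt("Explain how the attack works.", context))
    strategy = llm(build_prompt("Propose a mitigation strategy.", context, analysis))
    patch = llm(build_prompt("Write the patched code.", context, strategy))
    return {"analysis": analysis, "strategy": strategy, "patch": patch}
```

Passing the context into every step, rather than only the first, is the design choice that keeps later steps from drifting toward identifiers that do not exist in the project.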

Architecture

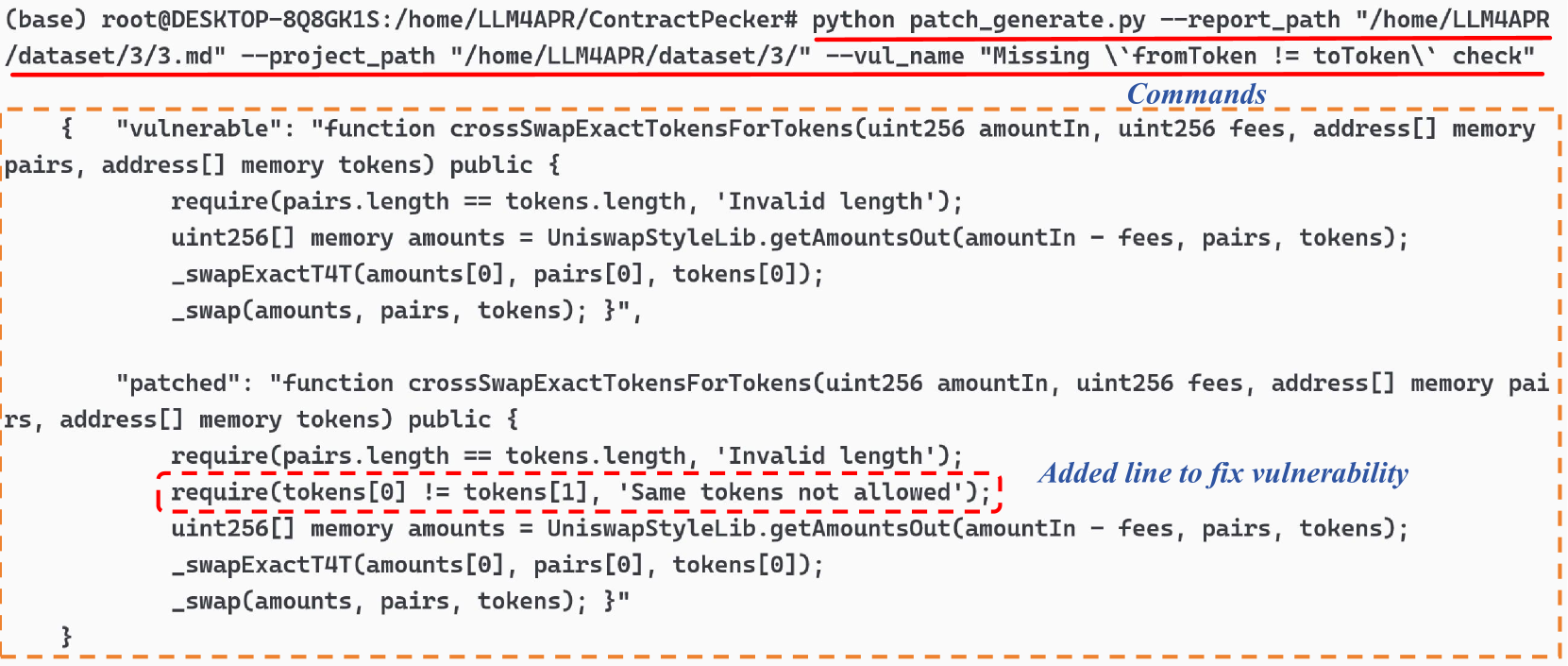

Workflow of ContractTinker: Audit Report and Project Input -> Dependency Analysis -> Vulnerability Localization -> CoT Patch Generation -> Refinement.
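The dependency analysis and localization stages can be approximated as a graph traversal from the vulnerable function. The sketch below is a simple reachability-based stand-in for program slicing, not the paper's implementation. The graph contents and every function or variable name in it are hypothetical.

```python
from collections import deque
from typing import Dict, List, Set

# Hypothetical dependency graph: each function maps to the functions and
# state variables it calls or reads. All names are illustrative.
DEPENDENCIES: Dict[str, Set[str]] = {
    "Vault.borrow": {"Oracle.getPrice", "Vault.debt"},
    "Oracle.getPrice": {"Pool.reserve0", "Pool.reserve1"},
    "Vault.deposit": {"Vault.balance"},
}


def slice_from(graph: Dict[str, Set[str]], start: str) -> List[str]:
    """Breadth-first traversal collecting everything `start` depends on."""
    seen = {start}
    order = [start]
    queue = deque([start])
    while queue:
        node = queue.popleft()
        # Sort neighbours so the result is deterministic.
        for dep in sorted(graph.get(node, ())):
            if dep not in seen:
                seen.add(dep)
                order.append(dep)
                queue.append(dep)
    return order


# Localize context for a finding reported against Vault.borrow.
relevant = slice_from(DEPENDENCIES, "Vault.borrow")
```

Here `relevant` contains `Vault.borrow`, the oracle it calls, and the pool reserves behind that oracle, while the unrelated `Vault.deposit` path is left out. Only this reduced context would be handed to the patch-generation step.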

Evaluation Highlights

- Repairs 23 out of 48 (48%) high-risk real-world vulnerabilities with valid patches

- Generates patches requiring only minor modifications for an additional 10 vulnerabilities (21%)

- Achieves a high success rate in generating correct mitigation strategies before coding
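The reported percentages can be checked with a short calculation. The combined figure below is derived here from the two reported counts and is not quoted from the source.

```python
TOTAL = 48       # high-risk real-world vulnerabilities evaluated
VALID = 23       # repaired with valid patches
MINOR_FIX = 10   # patches needing only minor modifications


def pct(count: int, total: int) -> int:
    """Percentage rounded to the nearest whole number."""
    return round(100 * count / total)


valid_pct = pct(VALID, TOTAL)          # 23/48 is about 47.9%
minor_pct = pct(MINOR_FIX, TOTAL)      # 10/48 is about 20.8%
combined = VALID + MINOR_FIX           # derived: valid or nearly valid
combined_pct = pct(combined, TOTAL)    # 33/48 is 68.75%
```

Under this reading, roughly 69% of the 48 cases (33) yield a patch that is either valid or close to valid.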

Breakthrough Assessment

7/10

Effective integration of static analysis with LLM Chain-of-Thought reasoning in a hard domain (smart contracts). The sample of 48 vulnerabilities is small, but realistic given how scarce real-world data is in this domain.