📝 Paper Summary

LLM Reasoning Evaluation

Reasoning Trace Analysis

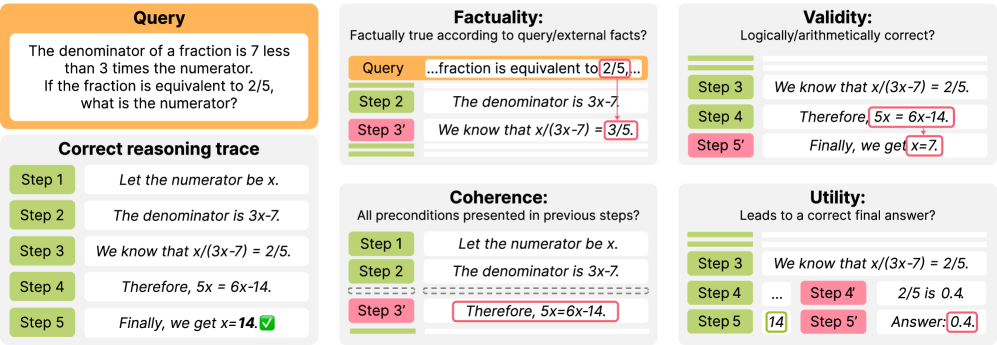

This survey establishes a unified taxonomy for evaluating LLM reasoning traces (Factuality, Validity, Coherence, Utility) and categorizes existing evaluators and datasets based on this framework.

Core Problem

Existing practices for evaluating LLM reasoning traces are highly inconsistent, with fragmented progress across inconsistent criteria and datasets.

Why it matters:

- Answer accuracy is insufficient because correct answers do not guarantee correct reasoning (e.g., false premises leading to right conclusions)

- Rapid proliferation of new evaluators lacks consensus on what actually constitutes a 'good' reasoning step

- High-quality reasoning traces are essential for improving LLMs via verifier-guided search and reinforcement learning

Concrete Example:

A trace might include the step 'Next, we add 42 to 16,' which is valid arithmetic, but if the value '42' was never derived or mentioned previously, the step is 'incoherent' despite being 'valid,' a distinction often missed by monolithic evaluators.

Key Novelty

Unified Taxonomy for Reasoning Evaluation

- Deconstructs reasoning quality into four distinct dimensions: Factuality (grounding), Validity (logical correctness), Coherence (consistency with context), and Utility (contribution to final answer)

- Maps diverse evaluator architectures (from rule-based to model-based) onto these specific criteria rather than treating evaluation as a generic quality score

Architecture

The unified taxonomy of evaluation criteria proposed by the authors.

Evaluation Highlights

- Identifies that uncertainty metrics (e.g., token probability entropy) can serve as criteria-agnostic proxies for factuality, validity, and utility

- Highlights that coherence is inherently subjective compared to validity; steps considered 'necessary' in one dataset (WorldTree V2) may be deemed unnecessary in others

- Notes that utility evaluators (Value Functions) scale best because they can be trained using only final answer correctness without expensive step-wise human annotations

Breakthrough Assessment

7/10

A comprehensive survey that brings necessary structure to a fragmented field. While it doesn't propose a new model, its taxonomy is likely to become a standard reference for future reasoning research.