📝 Paper Summary

Language Model Evaluation

Causal Representation Learning

Scaling Laws

The paper proposes Hierarchical Component Analysis (HCA) to discover a causal hierarchy of latent capabilities in LLMs, running from general problem-solving through instruction-following to math reasoning, by exploiting performance heterogeneity across different base models.

Core Problem

Evaluating LLM capabilities is hindered by confounding effects (such as base model heterogeneity) and by interdependencies among skills, which make it difficult to tell how a specific capability causally influences downstream performance.

Why it matters:

- Rigorous causal evaluation usually means retraining models from scratch to control for confounders, which is prohibitively expensive.

- Simple leaderboards rank models but fail to explain *why* performance improves or how skills like instruction-following enable complex reasoning.

- Without understanding the causal structure of capabilities, it is unclear which skills to target during post-training to maximize downstream gains.

Concrete Example:

Fine-tuning on instruction data might improve math problem-solving not because the model learned math, but because it learned to format the solution correctly. Standard evaluations cannot distinguish whether an intervention improved the core reasoning skill or just the upstream prerequisite of following instructions.

Key Novelty

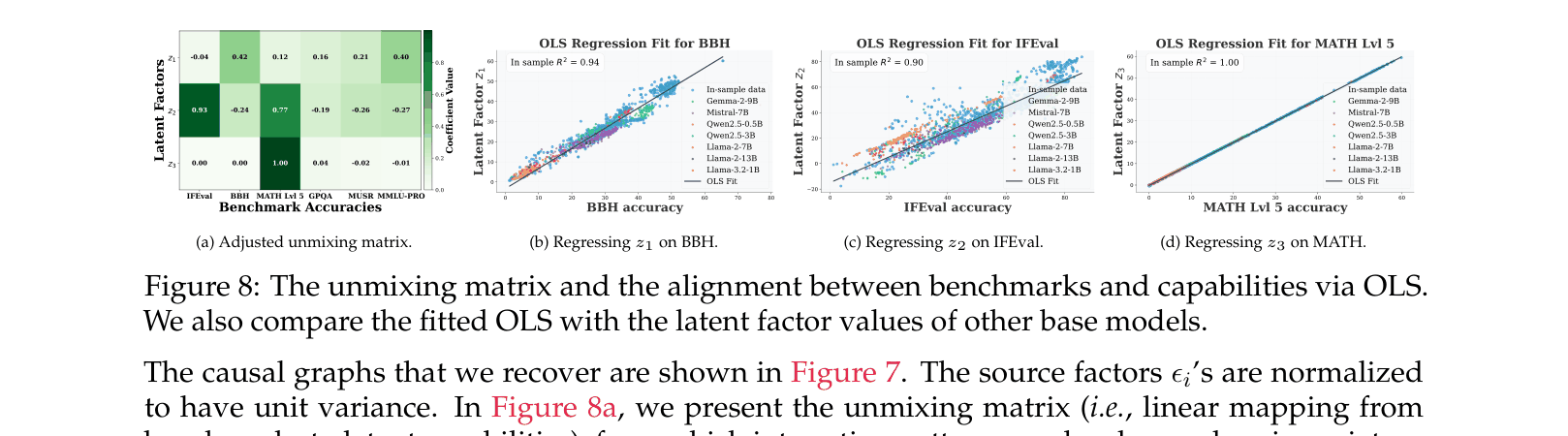

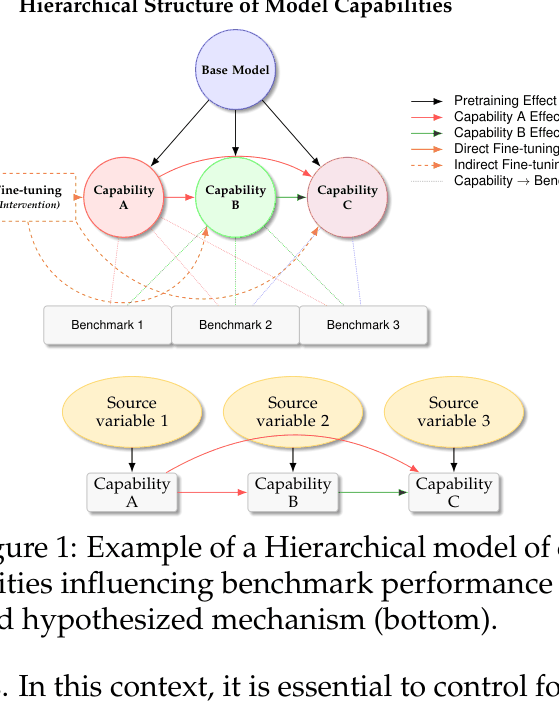

Hierarchical Component Analysis (HCA) for Causal Capability Discovery

- Models benchmark performance as a linear transformation of latent capability factors that are organized in a Directed Acyclic Graph (DAG).

- Treats different base models (e.g., Llama-3 vs. Qwen2.5) as distinct 'domains' or 'views' to identify invariant causal structures, controlling for the base model as a common confounder.

- Uses a novel algorithm (HCA) that combines Independent Component Analysis (ICA) with row-residual extraction to recover the hierarchical structure from observed performance matrices.
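
The generative assumption in the first bullet can be sketched in a few lines of NumPy. This is a minimal illustration of the data model only, not the paper's HCA algorithm: the edge weights in `B`, the Laplace noise, the number of benchmarks, and the function name `simulate_scores` are all assumptions made for the example.

```python
import numpy as np


def simulate_scores(n_models=500, n_benchmarks=6, seed=0):
    """Simulate benchmark scores from a linear latent DAG (illustrative only)."""
    rng = np.random.default_rng(seed)

    # Hypothetical strictly lower-triangular weights: row i lists the parents
    # of latent factor i. Order: general -> instruction-following -> math.
    # The numeric values are made up for this sketch.
    B = np.array([
        [0.0, 0.0, 0.0],
        [0.8, 0.0, 0.0],
        [0.3, 0.6, 0.0],
    ])

    # Non-Gaussian exogenous noise (assumed here because ICA-style methods
    # generally rely on non-Gaussian sources).
    eps = rng.laplace(size=(n_models, 3))

    # Structural equations z = B z + eps, solved as z = (I - B)^{-1} eps.
    # Each row of Z holds the latent capabilities of one model.
    Z = eps @ np.linalg.inv(np.eye(3) - B).T

    # Benchmarks are a linear mixing of the latent factors.
    G = rng.normal(size=(n_benchmarks, 3))
    X = Z @ G.T
    return B, eps, Z, G, X


B, eps, Z, G, X = simulate_scores()
print(X.shape)  # one row per model, one column per benchmark
```

Because `B` is strictly lower triangular, the latent graph is acyclic by construction, and the observed score matrix has rank at most three no matter how many benchmarks are included.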

Architecture

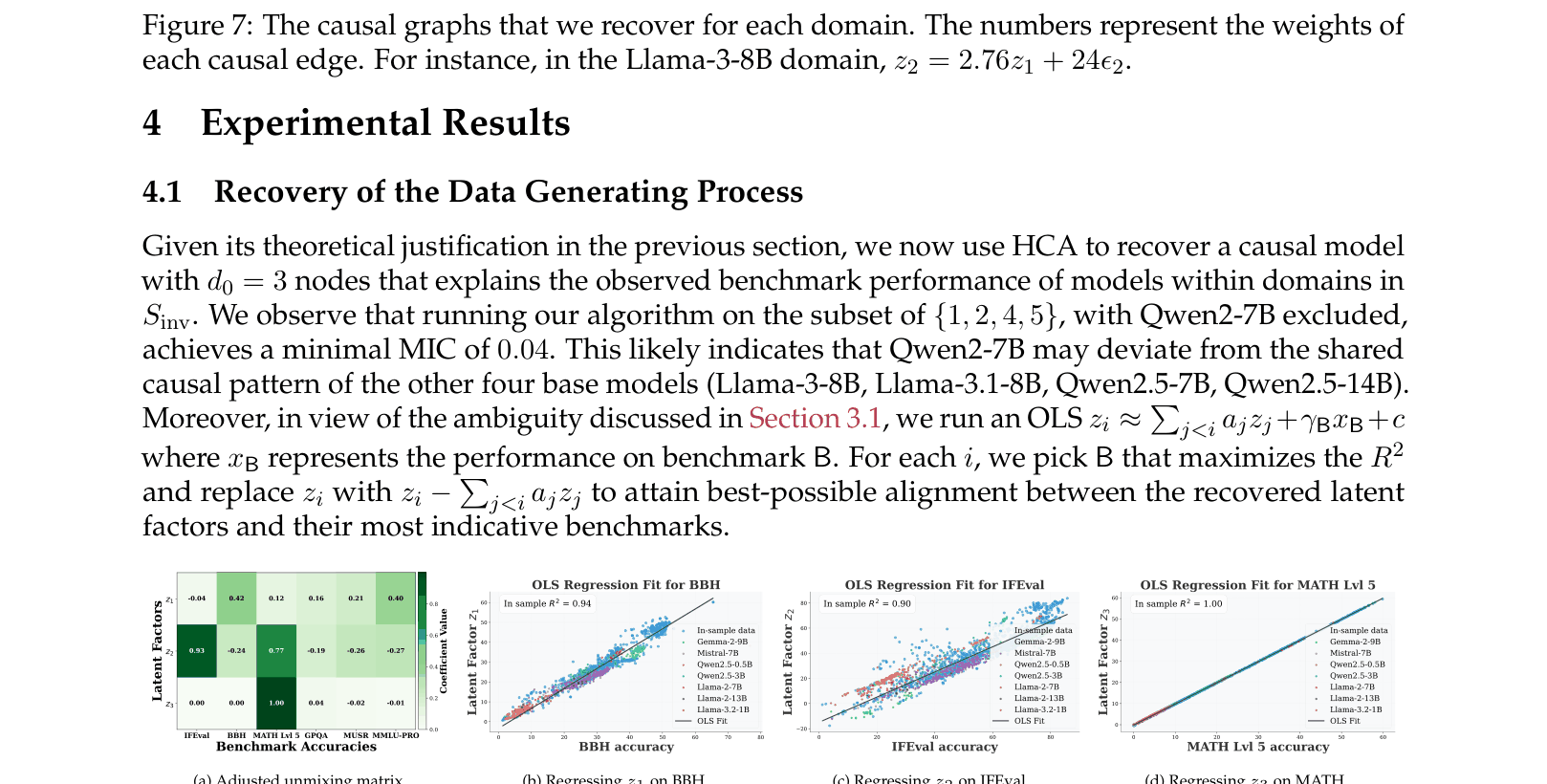

Conceptual diagram of the hierarchical causal model. It shows 'Base Model' as a parent node influencing latent capabilities (A, B, C), which in turn influence Benchmark scores. Fine-tuning is modeled as an intervention.
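
One way to read "fine-tuning as an intervention" is as a shift applied to a single latent node in a linear structural model, which then propagates to that node's descendants. The sketch below assumes that reading and holds the base model fixed; the chain A → B → C, its weights, and the helper name `shift_intervention` are hypothetical and not taken from the paper.

```python
import numpy as np


def shift_intervention(B, node, delta):
    """Change in every latent factor after shifting `node` by `delta`.

    B[i, j] is the direct effect of factor j on factor i in a linear DAG.
    The total-effect matrix (I - B)^{-1} sums contributions over all
    directed paths, so its column `node` gives the downstream response.
    """
    n = B.shape[0]
    total_effects = np.linalg.inv(np.eye(n) - B)
    shift = np.zeros(n)
    shift[node] = delta
    return total_effects @ shift


# Hypothetical chain A -> B -> C with made-up edge weights.
B_chain = np.array([
    [0.0, 0.0, 0.0],
    [0.8, 0.0, 0.0],
    [0.0, 0.6, 0.0],
])

# Shift the middle capability by one unit.
effect = shift_intervention(B_chain, node=1, delta=1.0)
print(effect)
```

Under these assumed weights, the upstream node A is unchanged, B moves by the full shift, and C moves by the B → C edge weight times the shift.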

Evaluation Highlights

- Identified a stable 3-node causal hierarchy across 1500+ models: General Problem Solving → Instruction Following → Math Reasoning.

- Found that the 'General Problem Solving' latent factor correlates strongly with pre-training FLOPs, following a sigmoid scaling law, while 'Instruction Following' is more malleable via post-training.

- Demonstrated that targeted fine-tuning on instruction-following (IFEval) causally improves math reasoning (MATH) performance across Llama-3 and Qwen2.5 families.
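
The summary does not give the functional form of the sigmoid scaling law mentioned above. As a hedged illustration, the sketch below assumes a logistic curve in log10 of pre-training FLOPs; the parameter names and default values (`midpoint_log10`, `slope`, `ceiling`) are hypothetical placeholders, not fitted values from the paper.

```python
import numpy as np


def sigmoid_scaling(flops, midpoint_log10=23.0, slope=1.0, ceiling=1.0):
    """Assumed logistic relation between compute and a latent capability score.

    flops           -- pre-training compute in FLOPs (scalar or array)
    midpoint_log10  -- log10(FLOPs) at which the score reaches half the ceiling
    slope           -- steepness of the transition per decade of compute
    ceiling         -- saturation value of the score
    """
    log_flops = np.log10(np.asarray(flops, dtype=float))
    return ceiling / (1.0 + np.exp(-slope * (log_flops - midpoint_log10)))


# Evaluate the assumed curve over several decades of compute.
compute = np.array([1e21, 1e22, 1e23, 1e24, 1e25])
print(sigmoid_scaling(compute))
```

A curve of this shape saturates at both ends, which is the qualitative behavior a sigmoid scaling law implies: extra compute helps most near the midpoint and yields diminishing returns beyond it.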

Breakthrough Assessment

8/10

Provides a rigorous theoretical framework for interpreting leaderboard data causally, moving beyond simple rankings to structural understanding of how LLM capabilities depend on each other.