📝 Paper Summary

Tree/graph-based memory

Memory recall

Embodied-RAG structures robot experiences into a hierarchical 'Semantic Forest' combining topological maps and language clusters to enable scalable, multi-resolution navigation and question answering.

Core Problem

Robots generate massive, redundant, and highly correlated multimodal data streams (images, poses) that standard text-based RAG cannot efficiently index or query for navigation.

Why it matters:

- Standard dense metric maps (SLAM) become intractable at scale and lack the high-level semantic abstraction humans use for memory

- Naive RAG treats observations as independent documents, losing the critical spatial and temporal connectivity needed for robot navigation

- Existing scene graphs rely on human-engineered schemas (e.g., room->object) that fail in unstructured or outdoor environments

Concrete Example:

A user asks, 'Where can I read a book quietly?' Naive RAG might retrieve a bench image based on visual similarity but fail to distinguish a noisy roadside bench from a quiet park bench, because it lacks hierarchical spatial context. Embodied-RAG uses the forest structure to prioritize the 'park' node over the 'roadside' node.

Key Novelty

Semantic Forest Memory Structure

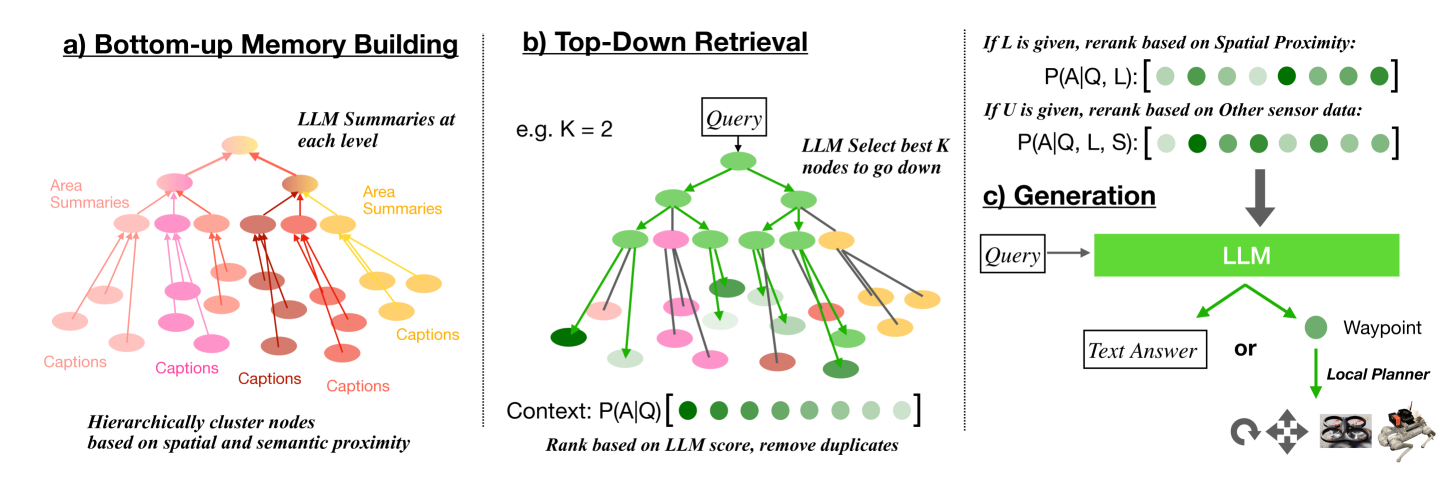

- Constructs a hierarchical memory by clustering robot observations (nodes) based on a hybrid metric of spatial proximity and semantic similarity

- Uses a 'Bottom-up Memory Building' process that summarizes clusters into higher-level abstract nodes using an LLM, creating a navigable tree

- Implements a 'Top-down Retrieval' mechanism modeled after Tree-of-Thoughts, where an LLM guides search from abstract roots down to specific leaves based on reasoning
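The clustering and bottom-up summarization steps above can be sketched as follows. This is a minimal illustration, not the paper's implementation: the weighting `alpha`, the `spatial_scale` normalizer, the merge `threshold`, the greedy pairwise merging, and the string-joining `summarize` function (a stand-in for the LLM call) are all assumptions introduced here.

```python
from __future__ import annotations

import math
from dataclasses import dataclass, field


@dataclass
class Node:
    position: tuple          # (x, y) in meters; mean of children for parents
    embedding: list          # toy caption embedding
    caption: str
    children: list = field(default_factory=list)


def cosine_distance(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


def hybrid_distance(a, b, alpha=0.5, spatial_scale=10.0):
    # Assumed form: weighted sum of scaled Euclidean distance and
    # cosine distance between caption embeddings.
    spatial = math.dist(a.position, b.position) / spatial_scale
    semantic = cosine_distance(a.embedding, b.embedding)
    return alpha * spatial + (1 - alpha) * semantic


def summarize(children):
    # Placeholder for the LLM summarization step.
    return "area with: " + ", ".join(c.caption for c in children)


def merge(a, b):
    position = tuple((p + q) / 2 for p, q in zip(a.position, b.position))
    embedding = [(p + q) / 2 for p, q in zip(a.embedding, b.embedding)]
    return Node(position, embedding, summarize([a, b]), [a, b])


def build_forest(leaves, threshold=0.6):
    # Greedily merge the closest pair until no pair is close enough.
    # Whatever remains unmerged forms the roots of the forest.
    roots = list(leaves)
    while len(roots) > 1:
        d, i, j = min(
            (hybrid_distance(roots[i], roots[j]), i, j)
            for i in range(len(roots))
            for j in range(i + 1, len(roots))
        )
        if d > threshold:
            break
        parent = merge(roots[i], roots[j])
        roots = [r for k, r in enumerate(roots) if k not in (i, j)] + [parent]
    return roots


observations = [
    Node((0.0, 0.0), [1.0, 0.0], "quiet bench"),
    Node((2.0, 1.0), [0.9, 0.1], "trees and grass"),
    Node((50.0, 0.0), [0.1, 0.9], "busy road"),
    Node((52.0, 1.0), [0.2, 0.8], "bus stop bench"),
]
forest = build_forest(observations)
for root in forest:
    print(root.caption)
```

With these toy observations, the two nearby park observations and the two nearby roadside observations each merge into a parent, while the parents stay separate roots because they are about 50 m apart. That is why the result is a forest rather than a single tree.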

Architecture

A two-stage pipeline: Bottom-up Memory Building, which creates the Semantic Forest, followed by Top-down Retrieval, which queries it.

Evaluation Highlights

- Memory building is 7.38x faster than GraphRAG and 9.76x faster than LightRAG on a dataset of the same size

- Successfully handles over 250 explanation and navigation queries across kilometer-level environments in simulation and reality

- Outperforms Naive RAG, GraphRAG, and LightRAG on explicit, implicit, and global query types across 19 diverse environments

Breakthrough Assessment

7/10

A significant step in adapting RAG to robotics by addressing the specific structure of embodied data (spatial and temporal correlation). Performance gains are strong, though reliance on GPT-4 for summarization may limit real-time onboard deployment.