📝 Paper Summary

LLM Numeracy

Tokenization Strategies

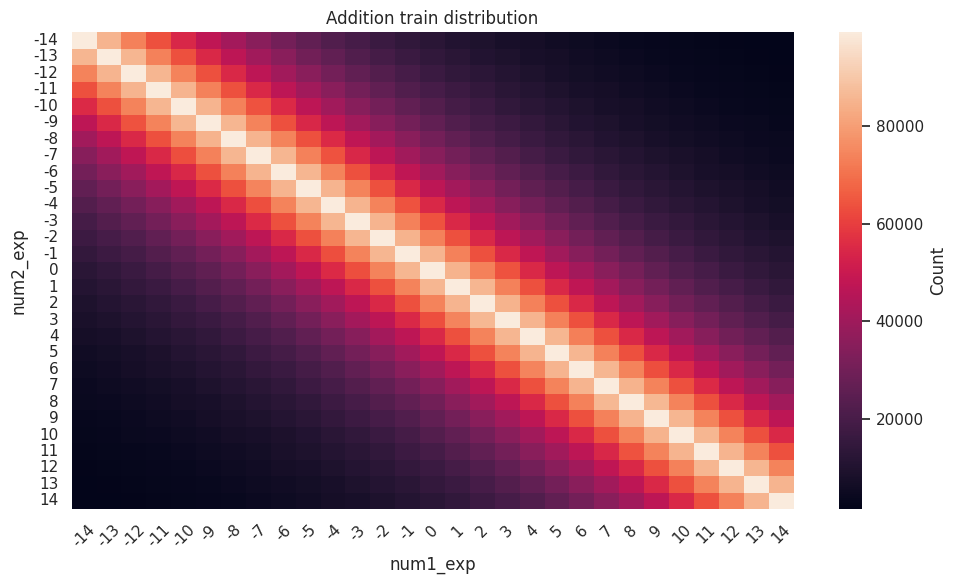

BitTokens encodes numbers into single tokens using their binary IEEE 754 floating-point representation, enabling language models to learn arithmetic algorithms efficiently without extensive reasoning chains.

Core Problem

Current LLMs struggle with basic calculations unless they use excessive reasoning tokens or external tools, and existing single-token encodings (like xVal or FoNE) fail to support efficient learning of arithmetic operations like multiplication.

Why it matters:

- Scientific and engineering tasks require processing large amounts of numerical data where external tool use introduces latency and complexity

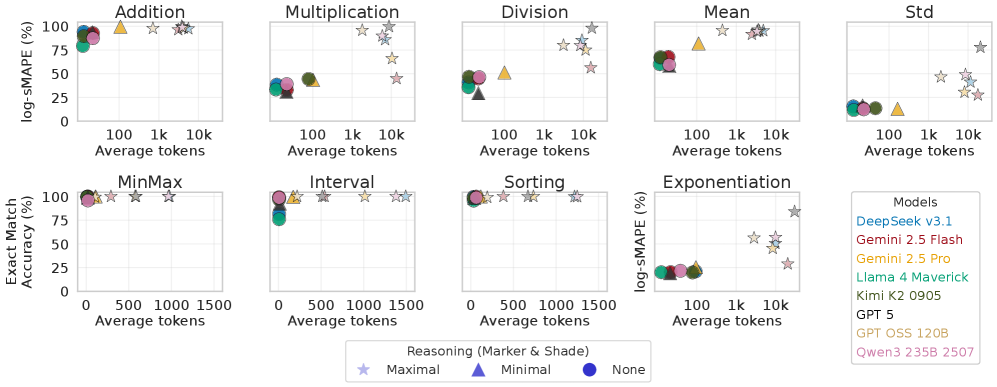

- Reasoning chains are inefficient, requiring roughly 5,000 to 30,000 tokens for a single calculation, which limits the complexity of problems that can be solved within a context window

- Existing sinusoidal encodings make multiplication computationally intensive and prone to errors because they require non-local decoding and re-encoding

Concrete Example:

Frontier models like Llama-3.1-405B require thousands of reasoning tokens to solve basic multiplication or division. Meanwhile, sinusoidal encodings such as FoNE fail to learn multiplication because, in the frequency domain, the operation requires a complex convolution, whereas addition reduces to a simple component-wise multiplication of the encoded values.
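The contrast between addition and multiplication under a sinusoidal encoding can be illustrated with a minimal sketch. It is a simplified stand-in, not the FoNE implementation: the periods in `PERIODS` and the helper names are assumptions chosen for illustration, and each number is encoded as a complex phase per period (the real and imaginary parts correspond to the cosine and sine features).

```python
import cmath
import math

# Hypothetical periods; real sinusoidal encodings pick their own set.
PERIODS = [10.0, 100.0, 1000.0]

def encode(x):
    """Encode x as one unit-length complex phase per period."""
    return [cmath.exp(2j * math.pi * x / period) for period in PERIODS]

def componentwise_product(enc_a, enc_b):
    """Multiply two encodings element by element (phases add up)."""
    return [u * v for u, v in zip(enc_a, enc_b)]

def close(enc_a, enc_b, tol=1e-9):
    """True if two encodings agree in every component."""
    return all(abs(u - v) < tol for u, v in zip(enc_a, enc_b))

a, b = 37.0, 58.0
combined = componentwise_product(encode(a), encode(b))

# Addition: multiplying the phases yields the encoding of a + b.
sum_matches = close(combined, encode(a + b))

# Multiplication: the same local operation does not yield the encoding of a * b.
product_matches = close(combined, encode(a * b))

print(sum_matches, product_matches)
```

In this sketch a purely local, per-component operation realizes addition, while no comparably simple per-component rule produces the encoding of the product, which is the gap the summary describes.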

Key Novelty

BitTokens (IEEE 754 Binary Encoding)

- Encodes numbers as a sequence of bits (sign, exponent, significand) added to a learnable [NUM] token, rather than splitting numbers into digit sequences

- Leverages the hierarchical structure of IEEE 754 (logarithmic exponent, linear significand), which aligns with the efficient binary arithmetic algorithms already used in hardware

- Enables arithmetic operations to be learned via bit-wise logic gates (XOR, AND) rather than complex frequency convolutions required by sinusoidal methods
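To make the bit-level view concrete, here is a minimal sketch of the IEEE 754 layout and of multiplication expressed through field-level operations. It assumes 64-bit double precision (1 sign bit, 11 exponent bits, 52 significand bits); the summary does not state the width BitTokens uses. The function names are hypothetical, and `multiply_normal` truncates instead of rounding and ignores zeros, subnormals, infinities, NaN, and overflow.

```python
import struct

def float_to_bits(x):
    """Return the 64 IEEE 754 double-precision bits of x, most significant first."""
    (as_int,) = struct.unpack(">Q", struct.pack(">d", x))
    return [(as_int >> (63 - i)) & 1 for i in range(64)]

def bits_to_float(bits):
    """Inverse of float_to_bits."""
    as_int = 0
    for bit in bits:
        as_int = (as_int << 1) | bit
    (x,) = struct.unpack(">d", struct.pack(">Q", as_int))
    return x

def split_fields(bits):
    """Split 64 bits into (sign, biased exponent, fraction) integers."""
    sign = bits[0]
    exponent = int("".join(map(str, bits[1:12])), 2)
    fraction = int("".join(map(str, bits[12:])), 2)
    return sign, exponent, fraction

def multiply_normal(a, b):
    """Multiply two normal, finite doubles via sign XOR, exponent add, significand multiply."""
    sa, ea, fa = split_fields(float_to_bits(a))
    sb, eb, fb = split_fields(float_to_bits(b))
    sign = sa ^ sb                      # signs combine with XOR
    exponent = ea + eb - 1023           # add exponents, remove one bias
    product = ((1 << 52) | fa) * ((1 << 52) | fb)  # restore implicit leading 1
    if product >> 105:                  # significand product reached [2, 4): renormalize
        product >>= 1
        exponent += 1
    fraction = (product >> 52) & ((1 << 52) - 1)   # truncate, drop the leading 1
    as_int = (sign << 63) | (exponent << 52) | fraction
    return struct.unpack(">d", struct.pack(">Q", as_int))[0]

print(float_to_bits(1.0)[:12])      # sign bit followed by the 11 exponent bits
print(multiply_normal(1.5, -2.25))  # -3.375
```

The inputs in the usage lines were picked so that the product is exactly representable; a faithful implementation would also need round-to-nearest and the special cases excluded above.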

Architecture

Conceptual flow of BitToken construction and integration

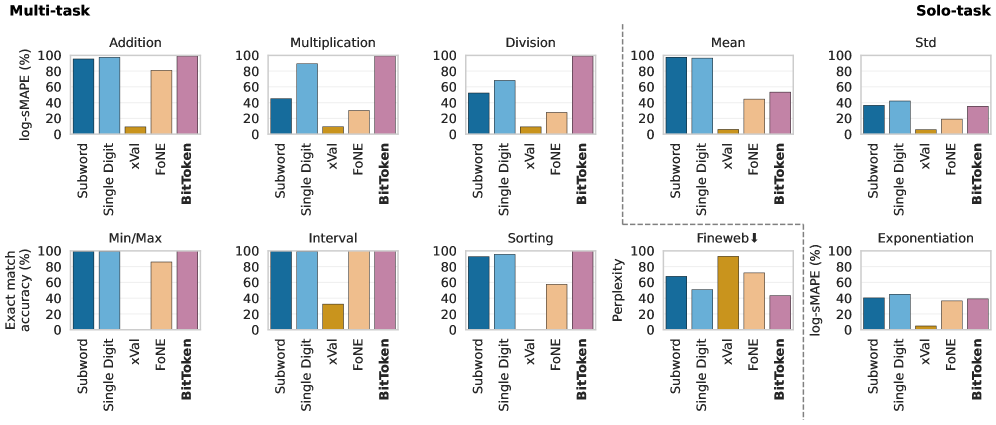
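The construction step, in which the bit sequence is added to a learnable [NUM] token, might be sketched as follows. Everything beyond that one sentence is an assumption made for illustration: the embedding width `D_MODEL`, the random stand-in for the learned [NUM] embedding, the zero-padding of the bit vector, the 64-bit width, and the thresholding decoder are not taken from the paper.

```python
import random
import struct

D_MODEL = 96  # hypothetical embedding width, must be at least 64

def float_to_bits(x):
    """Return the 64 IEEE 754 double-precision bits of x, most significant first."""
    (as_int,) = struct.unpack(">Q", struct.pack(">d", x))
    return [(as_int >> (63 - i)) & 1 for i in range(64)]

# Stand-in for a learned [NUM] embedding (random here, trained in practice).
rng = random.Random(0)
num_token_embedding = [rng.gauss(0.0, 0.02) for _ in range(D_MODEL)]

def bittoken_embedding(x):
    """Add the zero-padded bit vector of x to the [NUM] embedding."""
    bits = float_to_bits(x)
    padded = bits + [0] * (D_MODEL - len(bits))
    return [e + b for e, b in zip(num_token_embedding, padded)]

def decode(embedding):
    """Recover the number by subtracting the [NUM] embedding and thresholding."""
    residual = [v - e for v, e in zip(embedding, num_token_embedding)]
    as_int = 0
    for r in residual[:64]:
        as_int = (as_int << 1) | (1 if r > 0.5 else 0)
    return struct.unpack(">d", struct.pack(">Q", as_int))[0]

token = bittoken_embedding(3.14)
print(len(token), decode(token))
```

The point of the sketch is only that each number occupies a single token position regardless of its magnitude or precision; how the model reads and emits the bits is left to the paper.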

Evaluation Highlights

- Achieves near-perfect performance on addition, multiplication, and division tasks with a small nanoGPT-2 model, significantly outperforming xVal and FoNE

- BitTokens outperforms standard single-digit and subword tokenizers on single-step calculation tasks while using only one token per number

- Demonstrates that frontier LLMs (without BitTokens) require 5,000 to 30,000 reasoning tokens to solve a single calculation effectively

Breakthrough Assessment

8/10

Proposes a fundamental shift in how numbers are represented in LLMs, solving the 'arithmetic gap' for single-token representations where previous methods like xVal and FoNE failed on multiplication.