📝 Paper Summary

Process-based Evaluation

Small Language Models (SLMs)

Commonsense Reasoning

ReTraceQA introduces a manually annotated benchmark revealing that Small Language Models frequently produce correct final answers via flawed reasoning paths, a failure mode current answer-only metrics overlook.

Core Problem

Current evaluation practices for Small Language Models (SLMs) rely almost exclusively on final answer accuracy, neglecting whether the underlying reasoning process is valid.

Why it matters:

- Models can arrive at correct answers through invalid reasoning (false positives), artificially inflating performance metrics

- Existing process-based benchmarks focus on math/science, leaving commonsense reasoning largely underexplored despite requiring different capabilities

- Answer-only metrics mask critical hallucinations and logical errors, making models unreliable for real-world deployment where reasoning transparency matters

Concrete Example:

A model might correctly answer 'A' to a question about arctic animals but justify it by claiming 'wolves are not found in arctic regions' (a hallucination). The final answer is correct, but the reasoning is factually wrong.

Key Novelty

ReTraceQA: First Gold-Standard Commonsense Reasoning Trace Benchmark

- Provides 2,421 expert-annotated reasoning traces from SLMs on commonsense tasks, labeling the exact step where errors occur

- Categorizes errors into Hallucination, Reasoning, and Misinterpretation, distinguishing between factual failures and logical inconsistencies

- Demonstrates that Strong LLMs (like GPT-4o) struggle to localize specific errors in commonsense traces even if they can detect the trace is generally wrong

Architecture

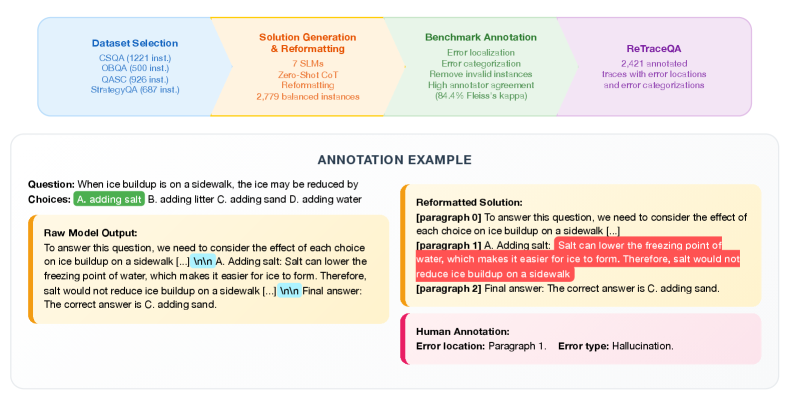

The ReTraceQA annotation pipeline. It illustrates the process from Question -> SLM Trace Generation -> Human Annotation -> Final Benchmark.

Evaluation Highlights

- 14-24% of SLM instances across datasets yield the correct final answer despite having flawed reasoning processes

- SLM performance scores drop by up to 25% when evaluated on reasoning correctness rather than just final answer accuracy

- Hallucination errors constitute the majority of failures (41.9%–62.5%), exceeding logical reasoning errors

Breakthrough Assessment

8/10

Significantly exposes the 'false positive' problem in SLM evaluation for commonsense tasks. The manual annotation of traces provides a high-quality ground truth that is currently rare outside of math domains.