📝 Paper Summary

Knowledge-Enhanced Language Models

Weakly Supervised Pretraining

WKLM (Weakly Supervised Knowledge-Pretrained Language Model) enhances BERT by training it to distinguish correct entity mentions from same-type negative replacements, improving factual knowledge retention.

Core Problem

Standard language model pretraining (like BERT) captures syntax and semantics but struggles to explicitly model entity-centric encyclopedic knowledge.

Why it matters:

- Pretrained models often fail tasks requiring external world knowledge (e.g., specific facts about entities) despite training on large corpora.

- Existing solutions often require complex external knowledge base integrations or memory-heavy architectures.

- Zero-shot fact completion reveals that standard BERT encodes entity-level knowledge only to a limited degree.

Concrete Example:

In a sentence like 'The capital of France is Paris', a standard LM might predict 'Paris' based on collocation. WKLM is explicitly trained to distinguish 'Paris' from other cities (e.g., 'London', 'Berlin') placed in that context, forcing it to learn the factual relationship rather than just linguistic patterns.

Key Novelty

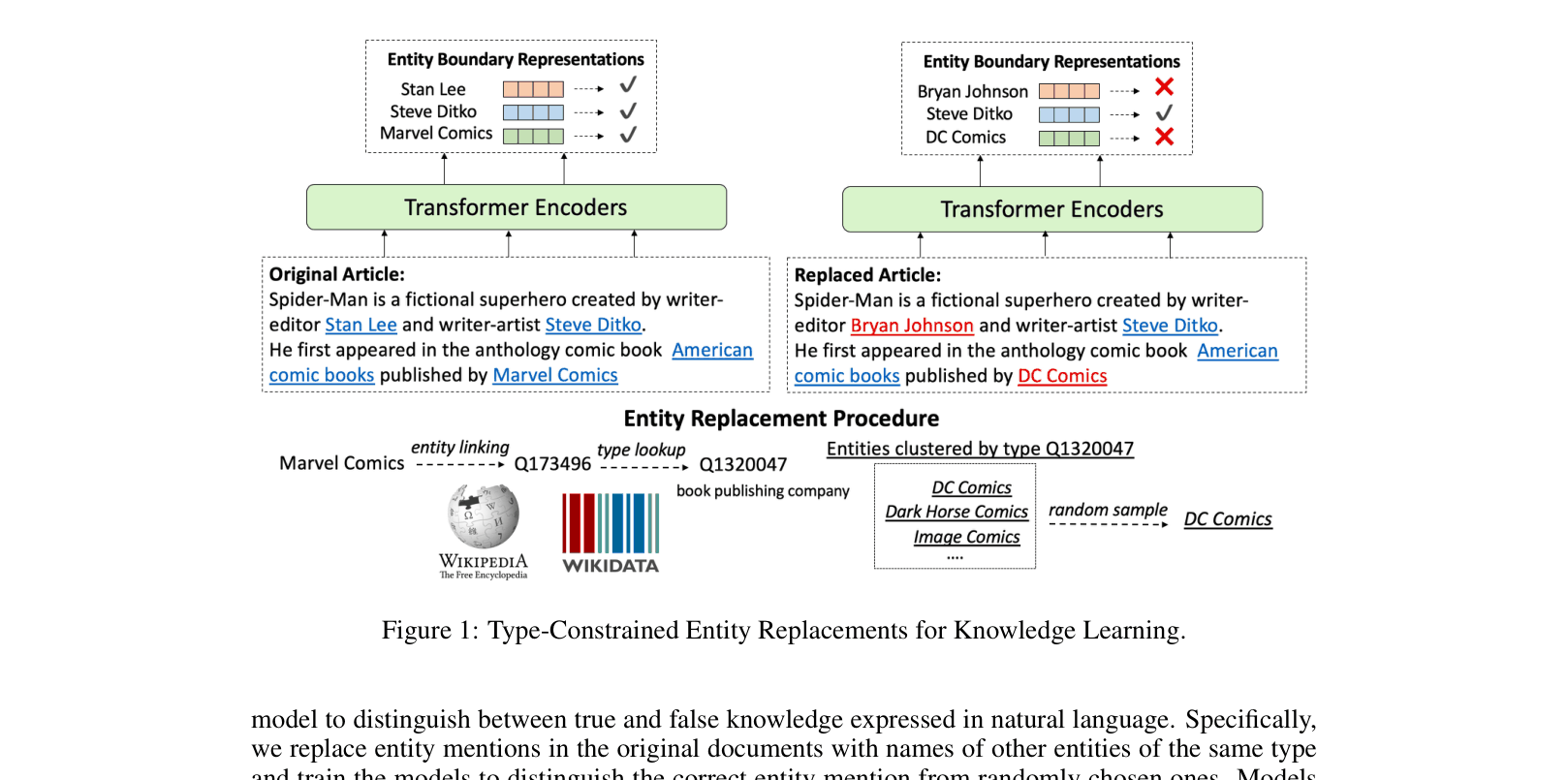

Entity Replacement Training (Weakly Supervised)

- Instead of just masking random tokens, the model identifies entity mentions and replaces them with other entities of the *same type* (e.g., replacing a person with another person).

- The model must determine if an entity in the text is the original correct one or a replacement, effectively training it to fact-check statements.

- This objective is combined with standard Masked Language Modeling (MLM) but requires no external knowledge base architecture changes during fine-tuning.

Architecture

Illustration of the Type-Constrained Entity Replacement strategy.

Evaluation Highlights

- Outperforms BERT-large on Zero-Shot Fact Completion (Hits@10) with significant gains (e.g., +24.8% on 'Capital Of' relation).

- Achieves new state-of-the-art on FIGER fine-grained entity typing with 60.21% accuracy (+5.68% over BERT base).

- Improves open-domain QA performance on WebQuestions, TriviaQA, and Quasar-T by an average of 2.7 F1 score over BERT.

Breakthrough Assessment

7/10

Simple yet highly effective pretraining objective that significantly improves knowledge grounding without architectural changes. Sets SOTA on entity typing and improves QA, though relies on existing BERT architecture.