📝 Paper Summary

Synthetic Data Generation

Reasoning Models

Supervised Fine-Tuning (SFT)

OpenThoughts systematically ablates data curation steps—sourcing, mixing, filtering, and teacher selection—to build a state-of-the-art open reasoning dataset that enables small models to outperform larger distilled baselines.

Core Problem

Training strong reasoning models relies on high-quality SFT data, but the best recipes for curating that data (question selection, filtering, teacher choice) are largely proprietary and remain underexplored in open research.

Why it matters:

- Current open-source efforts often rely on heuristics or a single teacher model (DeepSeek-R1) without verifying which data strategies actually yield better downstream performance.

- Exploring the design space of data curation is prohibitively expensive for most researchers due to high inference costs for teacher models.

- The lack of public knowledge about these recipes prevents the community from reproducing frontier reasoning capabilities like those of o3 or DeepSeek-R1.

Concrete Example:

When filtering questions for a reasoning dataset, standard methods like embedding distance or fastText classification often fail to select the best samples. The paper shows these methods are outperformed by simple heuristics like selecting questions that elicit long responses from an LLM.
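
The length-based heuristic can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the paper's implementation: `select_by_response_length` and `toy_respond` are hypothetical names, the whitespace word count stands in for a real tokenizer, and `toy_respond` replaces an actual LLM call.

```python
from typing import Callable, List


def select_by_response_length(
    questions: List[str],
    respond: Callable[[str], str],
    keep: int,
) -> List[str]:
    """Keep the `keep` questions whose responses are longest."""
    # Score each question by the length of the response it elicits.
    # Whitespace word count is a crude stand-in for a tokenizer (assumption).
    scored = [(len(respond(q).split()), q) for q in questions]
    # Longest responses first; Python's sort is stable, so ties keep input order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [q for _, q in scored[:keep]]


def toy_respond(question: str) -> str:
    """Hypothetical stand-in for an LLM call: longer questions get longer answers."""
    return "step " * (len(question) // 4)


if __name__ == "__main__":
    pool = [
        "What is 2 + 2?",
        "Prove that the sum of the first n odd integers equals n squared.",
        "Name a prime.",
    ]
    print(select_by_response_length(pool, toy_respond, keep=1))
```

In practice `respond` would wrap a model call, and the cutoff `keep` would be set by the target dataset size.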

Key Novelty

Systematic Ablation of Reasoning Data Pipelines (OpenThoughts)

- Conduct over 1,000 controlled experiments to isolate the impact of each data curation step: question sourcing, mixing strategies, question filtering, answer deduplication, and teacher selection.

- Establish empirical findings that contradict common intuition: mixing many question sources for the sake of diversity can hurt performance, and a teacher with stronger benchmark scores is not necessarily a better teacher.

- Scale the best-performing pipeline configuration to create OpenThoughts3-1.2M, an open-source reasoning dataset of 1.2 million examples.
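
One way to picture "controlled experiments that isolate each step" is a one-factor-at-a-time grid: start from a base pipeline and change a single step per run. The sketch below assumes that reading. `PipelineConfig`, `OPTIONS`, and labels such as `source_a` or `teacher_a` are hypothetical placeholders, not the paper's actual configuration.

```python
from dataclasses import dataclass, replace
from typing import Dict, List


@dataclass(frozen=True)
class PipelineConfig:
    """One setting per curation step; all option labels are illustrative."""
    sourcing: str = "source_a"
    mixing: str = "top_sources_only"
    question_filter: str = "response_length"
    dedup: str = "none"
    teacher: str = "teacher_a"


# Hypothetical alternatives to compare at each step.
OPTIONS: Dict[str, List[str]] = {
    "sourcing": ["source_a", "source_b"],
    "mixing": ["top_sources_only", "all_sources"],
    "question_filter": ["response_length", "embedding_distance", "fasttext_classifier"],
    "dedup": ["none", "exact"],
    "teacher": ["teacher_a", "teacher_b"],
}


def one_factor_ablations(
    base: PipelineConfig, options: Dict[str, List[str]]
) -> List[PipelineConfig]:
    """Return configs that differ from `base` in exactly one step."""
    configs = []
    for step, choices in options.items():
        for choice in choices:
            if getattr(base, step) != choice:
                # Copy the base config, overriding only this one step.
                configs.append(replace(base, **{step: choice}))
    return configs


if __name__ == "__main__":
    for cfg in one_factor_ablations(PipelineConfig(), OPTIONS):
        print(cfg)
```

Each resulting config would correspond to one dataset build plus one fine-tuning and evaluation run, which is why the full design space is expensive to explore.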

Architecture

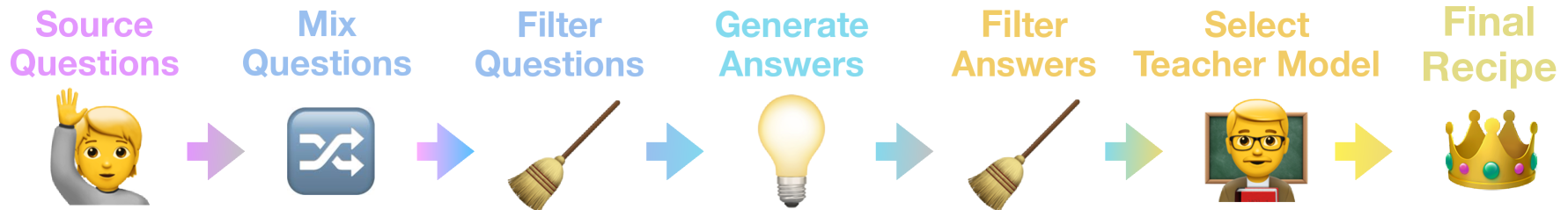

The OpenThoughts3 data curation pipeline, illustrating the sequence of steps from sourcing to final dataset creation.

Evaluation Highlights

- OpenThinker3-7B achieves 53% on AIME 2025, outperforming DeepSeek-R1-Distill-Qwen-7B by 15.3 percentage points.

- On GPQA Diamond, OpenThinker3-7B reaches 54%, surpassing DeepSeek-R1-Distill-Qwen-7B by 20.5 percentage points.

- Sampling 16 answers per question from the teacher scales the dataset and improves downstream performance more than mixing diverse question sources does.
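
The multi-answer sampling idea from the last highlight can be sketched as follows. This is an illustrative sketch only: `sample_answers` and `toy_teacher` are hypothetical names, and `toy_teacher` stands in for a real teacher-model call that would return a different reasoning trace on each sample.

```python
from typing import Callable, Dict, List


def sample_answers(
    questions: List[str],
    teacher: Callable[[str, int], str],
    samples_per_question: int = 16,
) -> List[Dict[str, object]]:
    """Build SFT rows by drawing several teacher answers for every question."""
    rows = []
    for question in questions:
        for i in range(samples_per_question):
            # Each sample becomes its own training row for the same question.
            rows.append(
                {"question": question, "answer": teacher(question, i), "sample_index": i}
            )
    return rows


def toy_teacher(question: str, sample_index: int) -> str:
    """Hypothetical stand-in for a teacher model queried with sampling enabled."""
    return f"reasoning trace {sample_index} for: {question}"


if __name__ == "__main__":
    dataset = sample_answers(["q1", "q2", "q3"], toy_teacher)
    # 3 questions x 16 samples each
    print(len(dataset))
```

With 16 samples per question, a fixed question pool yields 16 times as many training rows without sourcing any new questions.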

Breakthrough Assessment

9/10

Provides a comprehensive, empirical recipe for reasoning data curation that significantly advances the state of open-source models, outperforming major distilled baselines by large margins.