📝 Paper Summary

Data Cleaning

Table Representation Learning

E-commerce

Tab-Cleaner detects errors in wide, text-rich product catalogs by pre-training a hierarchical Transformer that efficiently models interactions between products, attributes, and long text descriptions without full quadratic attention.

Core Problem

Standard language models fail to capture tabular structures (row/column correlations) and cannot handle the extremely long sequences formed by concatenating rich textual attributes in wide product catalogs.

Why it matters:

- Product catalogs are self-reported by retailers, inevitably containing noisy facts that harm downstream applications like search and recommendation.

- Attributes are strongly correlated (e.g., 'Flavor' vs 'Ingredient'), meaning simple per-column anomaly detection fails.

- Existing table models truncate inputs (e.g., at 512 tokens), causing information loss for wide tables with long descriptions.

Concrete Example:

A 'Tortilla Chips' product listing has 'Spicy Queso' as flavor but 'Cheddar' in another field, or a non-edible 'Sippy Cup' listing erroneously includes a 'Flavor' attribute. Standard models treating this as a flat text sequence either truncate the context needed to spot the contradiction or fail to model the 'flavor' column's specific dependency on 'ingredients'.

Key Novelty

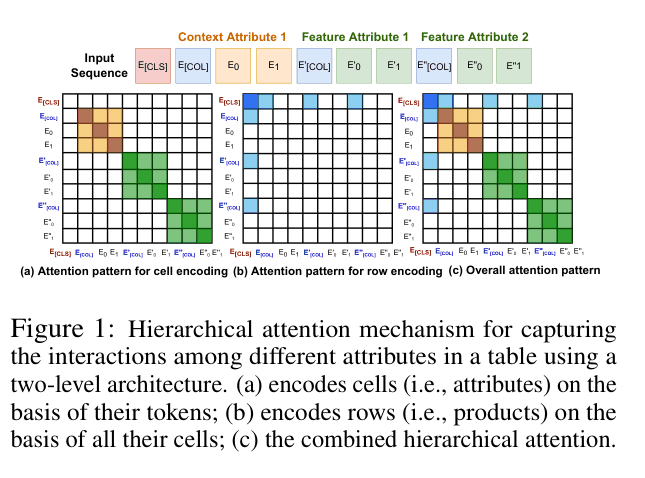

Hierarchical Attention for Wide Text-Rich Tables

- Decomposes table modeling into two levels: first encoding individual cells (attributes) using a local+global attention window, then encoding rows (products) by attending only to cell representations ([COL] tokens).

- Introduces specific pre-training objectives for tables: correcting swapped cells (structure-aware) and predicting product categories (row-aware), alongside standard masked language modeling.

- Enables conditional encoding where feature attributes (short specs) explicitly attend to context attributes (long descriptions) to verify consistency.

Architecture

The hierarchical attention mechanism. (a) Cell encoding: tokens attend locally within the cell. (b) Row encoding: [CLS] attends to [COL] tokens. (c) Combined view.

Evaluation Highlights

- +16% PR AUC improvement on attribute applicability classification over state-of-the-art baselines (DistillBERT, Longformer) on Amazon Product Catalog data.

- +11% PR AUC improvement on attribute value validation tasks compared to baselines.

- Reduces pre-training time by ~64% compared to Longformer on wide tables (21.63 vs 60.56 hours/epoch) due to sparse hierarchical attention.

Breakthrough Assessment

7/10

Strong practical contribution for the specific domain of text-rich tabular data. The hierarchical attention mechanism effectively addresses the length limitation of Transformers for wide tables, showing significant empirical gains.