📊 Experiments & Results

Evaluation Setup

Length generalization tasks on formal languages

Benchmarks:

- Counter languages (CNT) (Formal Language Recognition)

- Regular languages (PARITY) (Formal Language Recognition)

- Star-free languages (DYCK-(1,2)) (Formal Language Recognition)

- Unambiguous polynomials (RDP-1) (Formal Language Recognition) [New]

- Left-deterministic polynomials (LDP-1, LDP-2) (Formal Language Recognition) [New]

Metrics:

- Accuracy (max and mean over 5 runs)

- Statistical methodology: Reported mean and standard deviation across 5 random seeds

Key Results

| Benchmark | Metric | Baseline | This Paper | Δ |

|---|---|---|---|---|

| Transformer recognition results align exactly with LTL[P] theory: perfect generalization on LTL[P] languages, failure on strictly more complex ones. | ||||

| LDP-2 | Accuracy (Max) | 100.0 | 100.0 | 0.0 |

| RDP-1 | Accuracy (Max) | 100.0 | 90.0 | -10.0 |

| PARITY | Accuracy (Max) | 100.0 | 52.1 | -47.9 |

| DYCK-(1,2) | Accuracy (Max) | 100.0 | 83.4 | -16.6 |

| Language modeling experiments confirm the same boundary: perfect per-token accuracy for LTL[P] languages, errors for others. | ||||

| LDP-1 | Accuracy (Mean) | Not reported in the paper | 97.3 | Not reported in the paper |

Experiment Figures

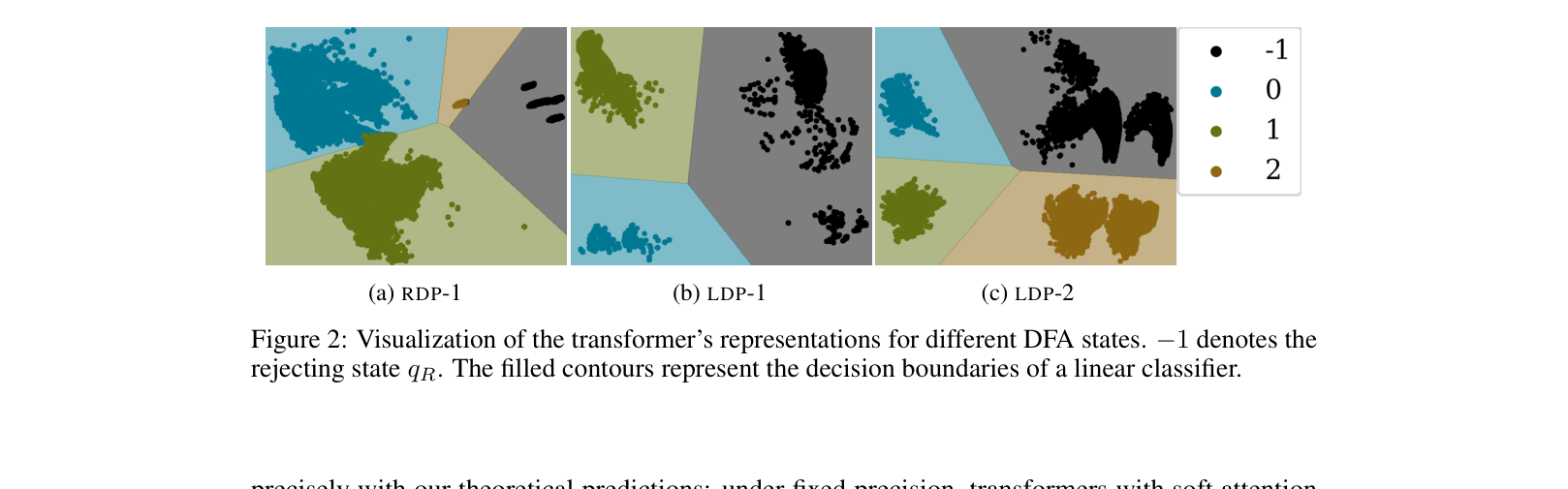

Visualization of transformer hidden state representations projected to 2D for different DFA states

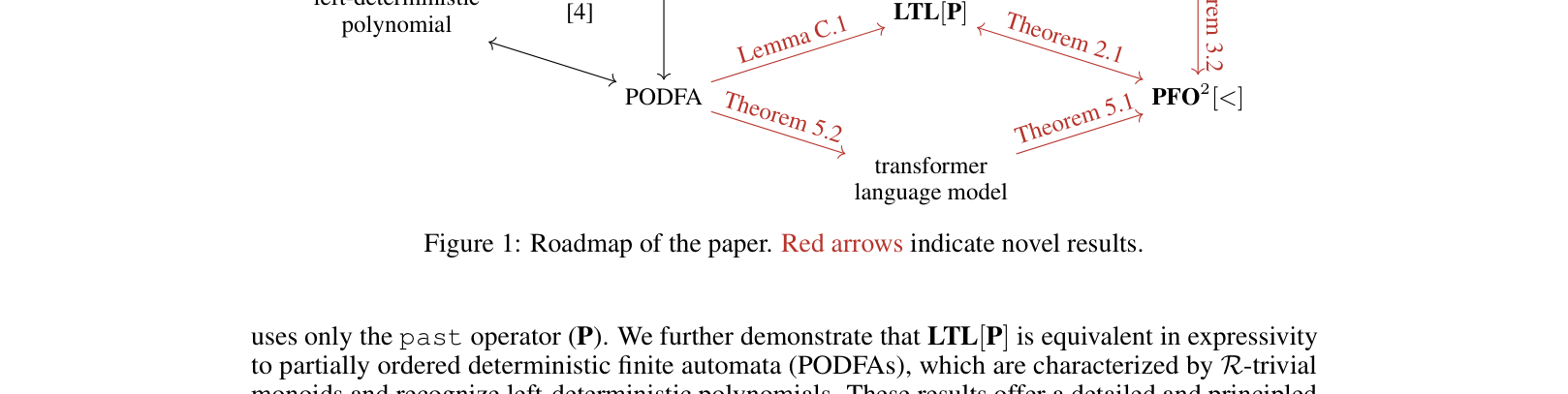

Venn diagram/Hierarchy of language classes and logic fragments

Main Takeaways

- Fixed-precision soft-attention transformers without positional encodings are strictly limited to recognizing Left-Deterministic Polynomials (LTL[P]).

- They cannot recognize general star-free languages (like RDP-1), contrary to theories assuming arbitrary precision or hard attention.

- The 'fixed precision' assumption creates a 'bounded attention span' effect: soft attention cannot effectively attend to unbounded history, acting more like a finite window.

- Probing hidden states reveals that for solvable languages (LDP-1), states are linearly separable, while for unsolvable ones (RDP-1), they are entangled.