📝 Paper Summary

Commonsense Reasoning

Multiple-Choice Question Answering

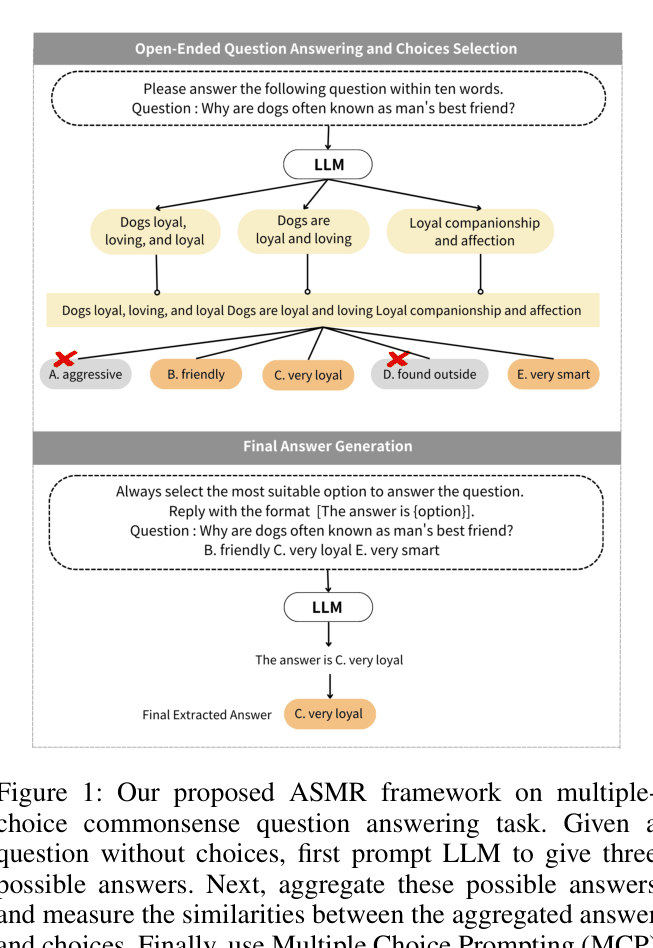

ASMR improves multiple-choice commonsense reasoning by first prompting an LLM to generate open-ended answers, then using those answers to semantically retrieve the most relevant choices before final prediction.

Core Problem

Providing all answer choices directly to an LLM in multiple-choice tasks limits the model's ability to draw on its internal commonsense knowledge, and it is often distracted by plausible but incorrect options.

Why it matters:

- LLMs frequently suffer from biases like majority label bias and recency bias when presented with multiple choices

- Direct multiple-choice prompting limits the model to comparing the given options rather than first formulating its own answer, which is how humans typically reason

Concrete Example:

Question: 'Why are dogs often known as man’s best friend?' Choices: A. aggressive, B. friendly, C. very loyal... A standard LLM using Multiple Choice Prompting (MCP) incorrectly picks 'B. friendly' because it seems plausible. ASMR first generates the open-ended answer 'loyal', which is semantically closest to choice C, so the model correctly selects 'C. very loyal'.

Key Novelty

Aggregated Semantic Matching Retrieval (ASMR)

- Mimic human reasoning by generating a preliminary open-ended answer before seeing the choices

- Use the semantic similarity between the generated open-ended answer and the provided options to filter/retrieve the most relevant choices

- Re-prompt the LLM with only the top-k semantically relevant choices to make the final decision
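The three steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the prompt wording, and the `llm` callable are hypothetical, and the bag-of-words `embed` function is a toy stand-in for a sentence encoder such as SimCSE, which the summary names as the similarity component.

```python
import math
import re
from collections import Counter
from typing import Callable, List


def embed(text: str) -> Counter:
    """Toy bag-of-words vector. ASSUMPTION: stands in for a sentence encoder such as SimCSE."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve_top_k(open_answer: str, choices: List[str], k: int) -> List[str]:
    """Rank choices by similarity to the open-ended answer and keep the best k."""
    query = embed(open_answer)
    ranked = sorted(choices, key=lambda c: cosine(query, embed(c)), reverse=True)
    return ranked[:k]


def asmr_predict(question: str, choices: List[str],
                 llm: Callable[[str], str], k: int = 3) -> str:
    """Hypothetical three-step pipeline: generate, retrieve, re-prompt."""
    # Step 1: ask for an open-ended answer without showing the choices.
    open_answer = llm(f"Question: {question}\nAnswer briefly:")
    # Step 2: keep only the k choices most similar to that answer.
    shortlist = retrieve_top_k(open_answer, choices, k)
    # Step 3: re-prompt with the shortlisted choices only.
    options = "\n".join(f"- {c}" for c in shortlist)
    final = llm(f"Question: {question}\nOptions:\n{options}\nPick one option:")
    return final.strip()


def stub_llm(prompt: str) -> str:
    """Stand-in for a real model: answers 'loyal', then picks the first listed option."""
    if "Options:" not in prompt:
        return "loyal"
    listed = [line[2:] for line in prompt.splitlines() if line.startswith("- ")]
    return listed[0]


question = "Why are dogs often known as man's best friend?"
choices = ["aggressive", "friendly", "very loyal"]
print(asmr_predict(question, choices, stub_llm, k=2))  # prints "very loyal"
```

With a real model, `llm` would wrap an API call and `embed` would return dense sentence embeddings. The retrieval step stays the same: score each choice against the generated answer and pass only the top-k to the final prompt.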

Architecture

The proposed ASMR framework workflow compared to standard approaches

Evaluation Highlights

- +15.3% accuracy improvement over the previous SOTA (MCP) on the SIQA dataset using ASMR-C (Concatenation) with top-3 retrieval

- Consistently outperforms Zero-Shot Self-Consistency (ZS-SC) and Multiple Choice Prompting (MCP) across CSQA, SIQA, and ARC datasets

- ASMR-C achieves 60.9% accuracy on CSQA (vs 51.2% baseline, a 9.7-point gain) and 72.6% on ARC-Easy (vs 58.9% baseline, a 13.7-point gain)

Breakthrough Assessment

7/10

Significant performance gains (+15% on SIQA) from a simple, model-agnostic prompting strategy that aligns well with human intuition. However, the method relies on existing components (SimCSE) rather than introducing a new architecture.