📝 Paper Summary

LLM Inference Acceleration

Efficient Decoding

This survey systematically categorizes parallel text generation methods into AR-based and Non-AR-based paradigms to address the speed bottlenecks of sequential large language model inference.

Core Problem

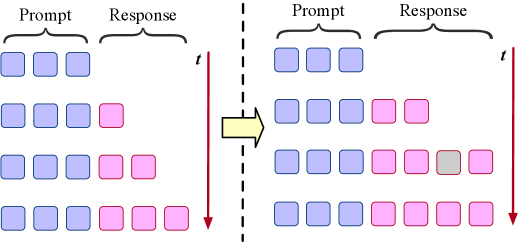

Autoregressive generation in LLMs produces tokens strictly sequentially (one at a time), causing generation latency to increase linearly with sequence length and leading to poor hardware utilization.

Why it matters:

- Limits responsiveness in latency-sensitive applications like real-time dialogue and interactive systems

- Causes suboptimal hardware utilization (GPUs/TPUs) due to memory-bandwidth constraints and idle periods between token generations

Concrete Example:

In a standard LLM, generating a 100-token response requires 100 separate forward passes. Even if the GPU could process multiple tokens at once, the sequential dependency forces it to wait, leaving computational resources idle and making long-form generation slow.

Key Novelty

Unified Taxonomy of Parallel Text Generation

- Categorizes methods into AR-compatible (preserving causal dependencies via blocks or speculation) and Non-AR (breaking dependencies for full parallelism)

- Defines parallel generation as any process where the ratio of inference steps to output tokens is less than 1

- Identifies three sub-types for AR-based (Draft-and-Verify, Decomposition-and-Fill, Multiple Token Prediction) and three for Non-AR (One-Shot, Masked, Edit-Based)

Architecture

A taxonomy tree categorizing Parallel Text Generation methods

Breakthrough Assessment

8/10

Provides a timely and necessary systematization of a rapidly exploding field. While it doesn't propose a new model, its taxonomy clarifies the relationship between speculative decoding and diffusion-based generation.