📝 Paper Summary

Tabular Deep Learning

In-Context Learning (ICL)

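TabForestPFN improves tabular classification by pretraining an ICL-transformer on a mix of two synthetic data sources: the realistic prior used by TabPFN and a new decision-tree-based generator that produces highly complex decision boundaries. Exposure to the complex data lets the model fit intricate decision boundaries when it is fine-tuned on real datasets.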

Core Problem

Neural networks for tabular data struggle to match tree-based methods (like XGBoost) because they learn overly simple decision boundaries and often fail to generalize on small datasets.

Why it matters:

- Tabular data is ubiquitous in industry (finance, healthcare, ads), yet deep learning breakthroughs have not translated effectively to this domain

- Current neural networks suffer from 'simplicity bias', failing to capture the complex, non-smooth decision boundaries often found in real-world tabular data

- Prior ICL approaches like TabPFN focus on realistic priors but lack the explicit ability to model high-complexity boundaries needed for some datasets

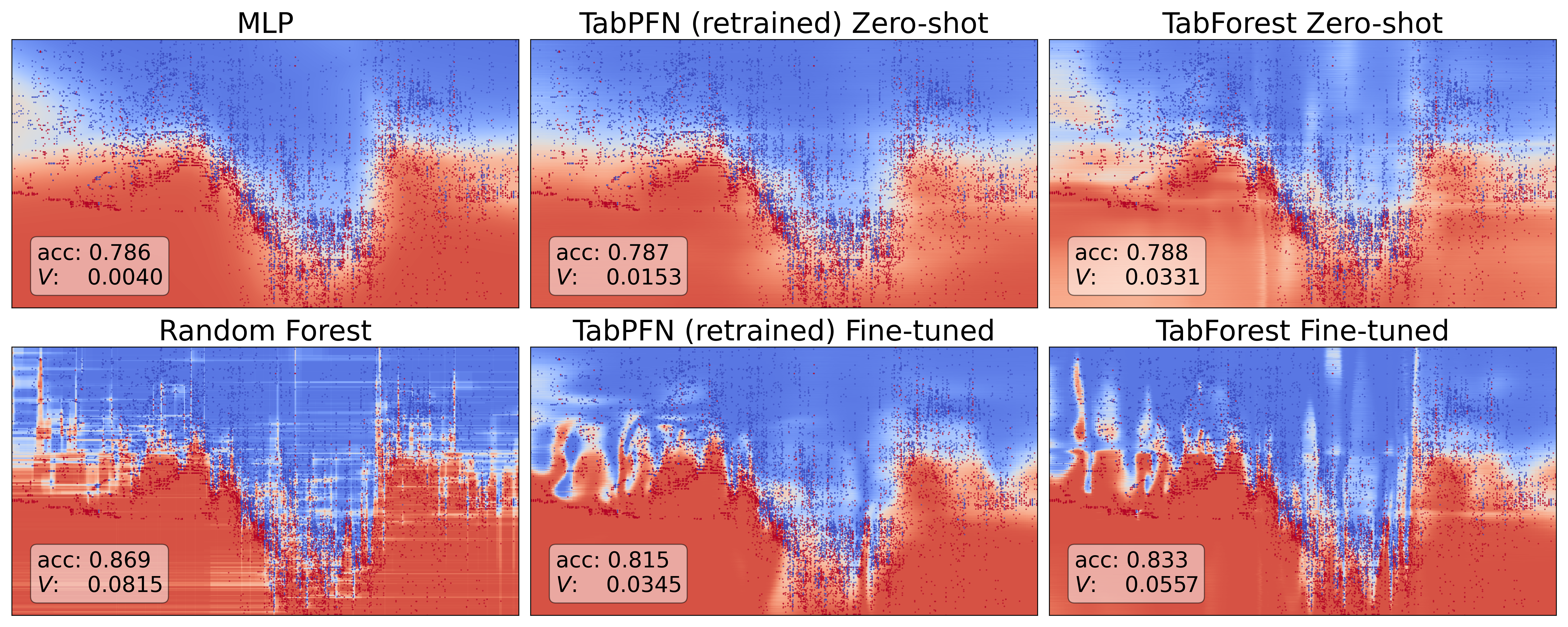

Concrete Example:

On the 'Electricity' dataset, a standard TabPFN produces a smooth, simple decision boundary that misses fine-grained patterns, whereas tree-based methods (and the proposed TabForestPFN) create jagged, complex boundaries that better fit the data.

Key Novelty

TabForestPFN: Hybrid Pretraining on 'Forest' and 'Realistic' Data

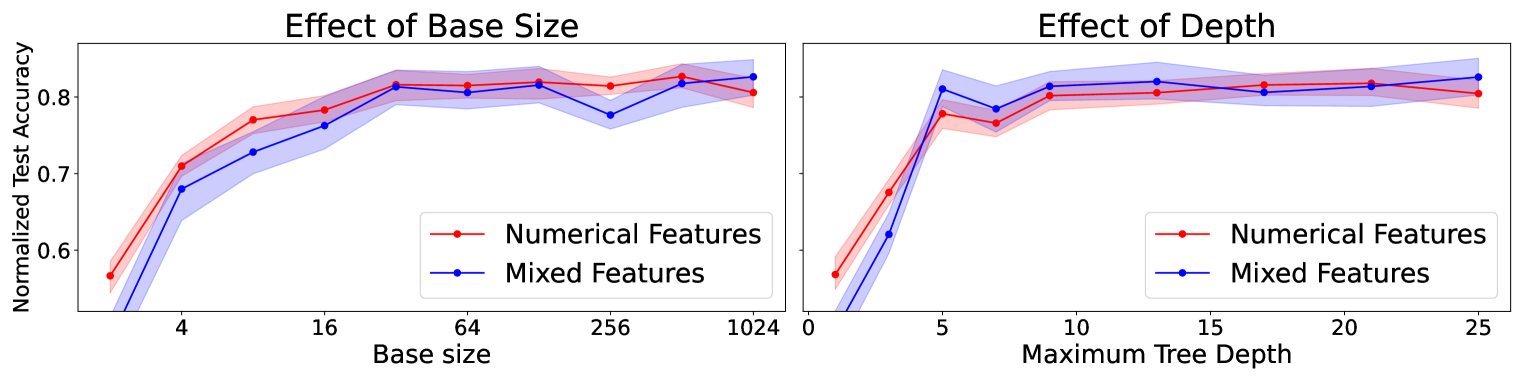

- Introduces a 'Forest Dataset Generator' that fits decision trees to random noise to create synthetic datasets with highly complex, jagged decision boundaries

- Demonstrates that fine-tuning ICL-transformers, rather than using them zero-shot, lets them adapt these complex boundaries to real data

- Combines this new generator with the original TabPFN generator to create a single model (TabForestPFN) that excels at both zero-shot (via realistic prior) and fine-tuning (via complex boundary prior)
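The idea behind the forest generator can be illustrated with a minimal sketch: fit a tree to pure noise, then use that tree to label fresh inputs. This is not the authors' implementation. The Gaussian sampling, the regression-style targets, the quantile binning into classes, and all names (`fit_tree`, `predict`, `make_forest_dataset`, `n_fit`, `depth`) are assumptions made for illustration only.

```python
import random

def sse(vals):
    """Sum of squared errors around the mean."""
    mean = sum(vals) / len(vals)
    return sum((v - mean) ** 2 for v in vals)

def fit_tree(X, y, depth):
    """Greedily fit a small regression tree. A leaf is a float, a node is a tuple."""
    if depth == 0 or len(y) < 2:
        return sum(y) / len(y)
    best = None
    for j in range(len(X[0])):
        values = sorted({row[j] for row in X})
        for lo, hi in zip(values, values[1:]):
            t = (lo + hi) / 2
            left = [yi for row, yi in zip(X, y) if row[j] <= t]
            right = [yi for row, yi in zip(X, y) if row[j] > t]
            score = sse(left) + sse(right)
            if best is None or score < best[0]:
                best = (score, j, t)
    if best is None:  # no valid split (all rows identical)
        return sum(y) / len(y)
    _, j, t = best
    L = [(row, yi) for row, yi in zip(X, y) if row[j] <= t]
    R = [(row, yi) for row, yi in zip(X, y) if row[j] > t]
    return (j, t,
            fit_tree([r for r, _ in L], [v for _, v in L], depth - 1),
            fit_tree([r for r, _ in R], [v for _, v in R], depth - 1))

def predict(tree, row):
    """Walk from the root to a leaf and return the leaf value."""
    while isinstance(tree, tuple):
        j, t, left, right = tree
        tree = left if row[j] <= t else right
    return tree

def make_forest_dataset(n_samples=200, n_features=2, n_classes=2,
                        depth=6, n_fit=64, seed=0):
    """Hypothetical generator: tree overfit to noise -> labels for fresh inputs."""
    rng = random.Random(seed)
    # 1. Features and targets are both pure noise (assumed Gaussian).
    X_noise = [[rng.gauss(0, 1) for _ in range(n_features)] for _ in range(n_fit)]
    y_noise = [rng.gauss(0, 1) for _ in range(n_fit)]
    tree = fit_tree(X_noise, y_noise, depth)
    # 2. Fresh inputs are scored by the noise-fitted tree.
    X = [[rng.gauss(0, 1) for _ in range(n_features)] for _ in range(n_samples)]
    scores = [predict(tree, row) for row in X]
    # 3. Scores are binned into classes at quantile cut points (an assumption).
    ordered = sorted(scores)
    cuts = [ordered[(k * n_samples) // n_classes] for k in range(1, n_classes)]
    y = [sum(s >= c for c in cuts) for s in scores]
    return X, y

X, y = make_forest_dataset()
```

Because the tree is fit to noise, its axis-aligned splits carry no real signal, but they carve the input space into many small regions with differing labels. In this sketch, a larger `depth` gives more regions and therefore a more jagged boundary.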

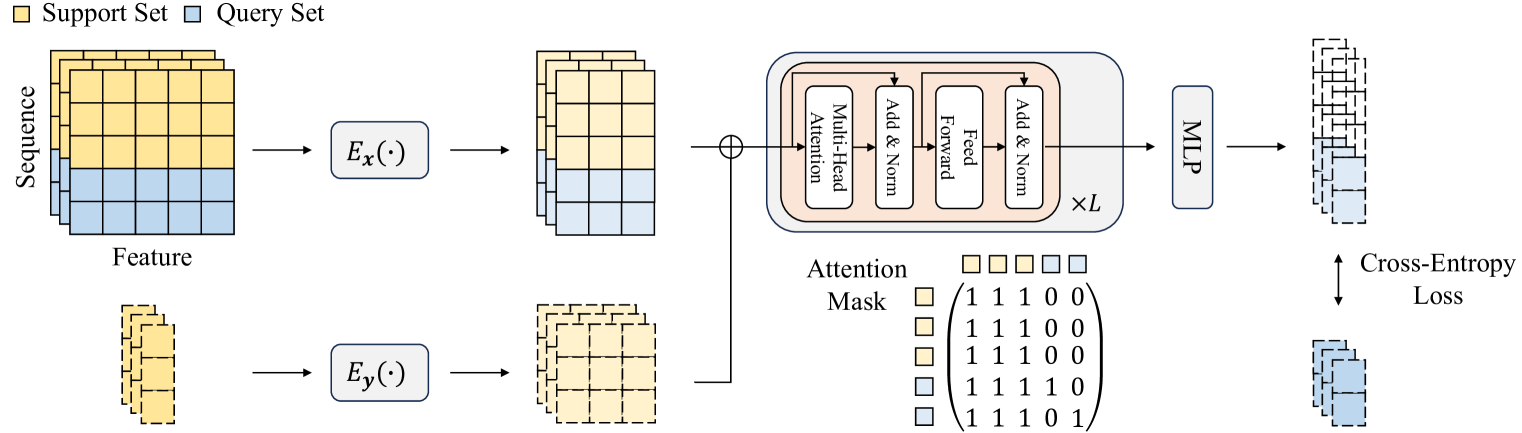

Architecture

The transformer architecture used for TabPFN and TabForestPFN.

Evaluation Highlights

- Fine-tuned TabForestPFN achieves the best average rank (2.0) on the WhyTrees benchmark, outperforming XGBoost (rank 3.1) and original TabPFN (rank 3.9)

- On the TabZilla benchmark, fine-tuning beats zero-shot on 73% of datasets larger than 1,000 samples

- TabForestPFN matches the fine-tuning performance of TabForest on complex tasks while retaining the superior zero-shot performance of TabPFN
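For readers unfamiliar with the average-rank metric cited above, the following sketch shows one common way to compute it: rank the methods on each dataset, then average each method's rank across datasets. The tie handling (tied methods share the mean of their positions), the function name `average_ranks`, and the toy scores are all hypothetical and are not taken from the paper.

```python
def average_ranks(scores):
    """scores: {dataset: {method: score}}, higher is better.

    Assumes every method has a score on every dataset.
    Tied methods share the mean of the positions they occupy.
    """
    totals = {}
    for per_method in scores.values():
        ordered = sorted(per_method.items(), key=lambda kv: -kv[1])
        i = 0
        while i < len(ordered):
            j = i
            while j < len(ordered) and ordered[j][1] == ordered[i][1]:
                j += 1
            rank = (i + 1 + j) / 2  # mean of positions i+1 .. j
            for name, _ in ordered[i:j]:
                totals[name] = totals.get(name, 0.0) + rank
            i = j
    return {name: total / len(scores) for name, total in totals.items()}

# Toy accuracies, invented purely for illustration.
toy = {
    "dataset_1": {"method_A": 0.90, "method_B": 0.80, "method_C": 0.70},
    "dataset_2": {"method_A": 0.60, "method_B": 0.70, "method_C": 0.50},
}
ranks = average_ranks(toy)
```

A lower average rank is better, so an average rank of 2.0 means a method typically places around second among the compared methods.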

Breakthrough Assessment

8/10

Significantly advances tabular deep learning by identifying decision boundary complexity as the key missing link and addressing it with a novel synthetic data generator. Reaching performance competitive with XGBoost is a major milestone for neural tabular models.