📝 Paper Summary

Multilingual Large Language Models

Cross-lingual In-context Learning

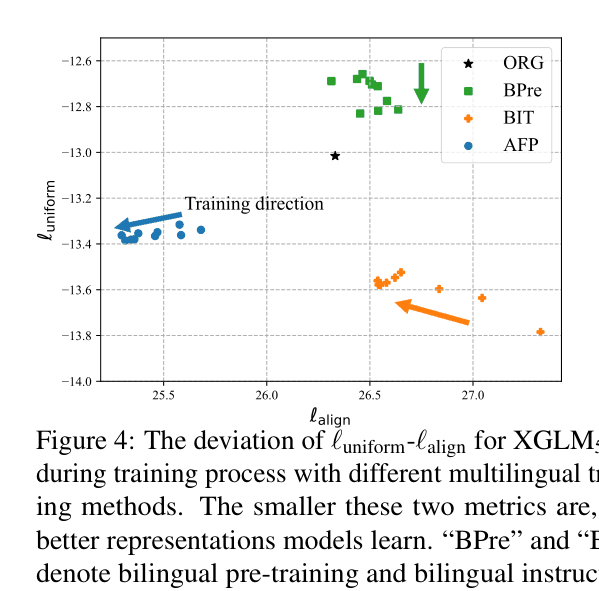

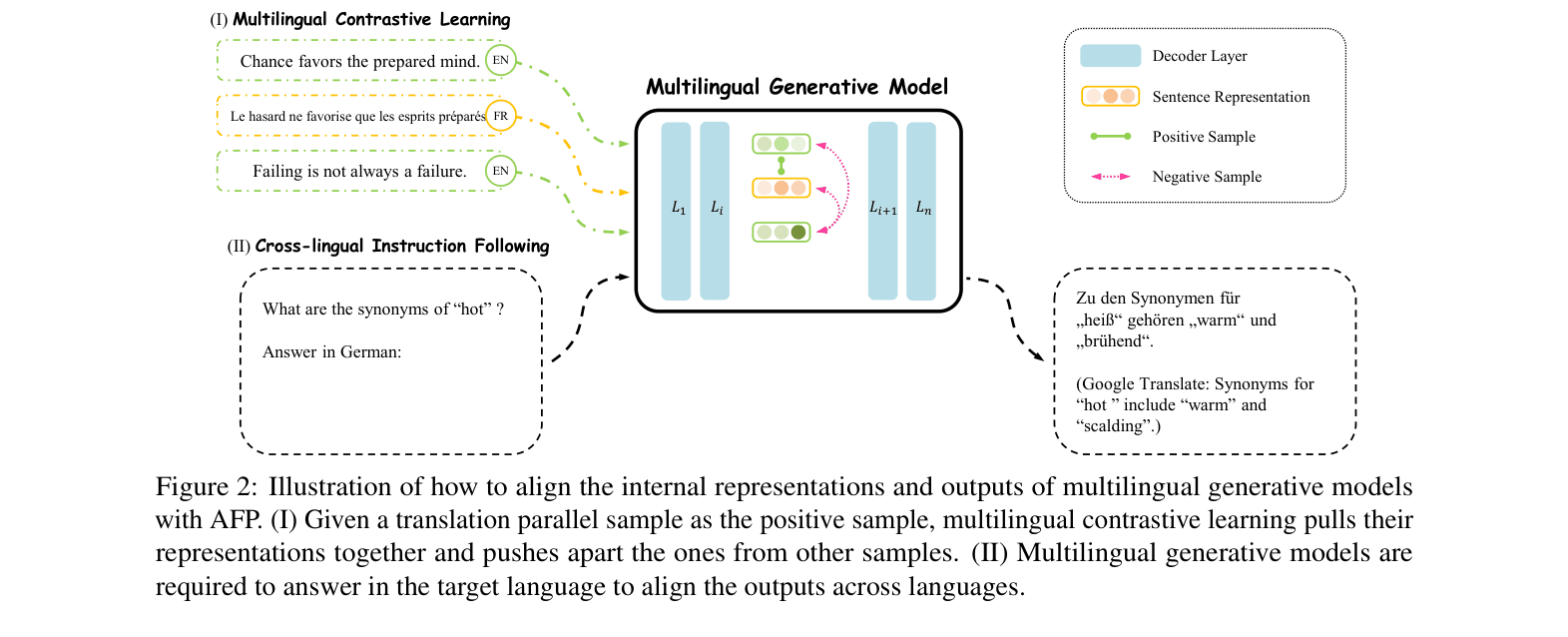

AFP aligns multilingual generative models in two ways: it pulls the internal representations of translation pairs closer together, and it aligns outputs through cross-lingual instruction following. Both steps aim to improve cross-lingual transfer.

Core Problem

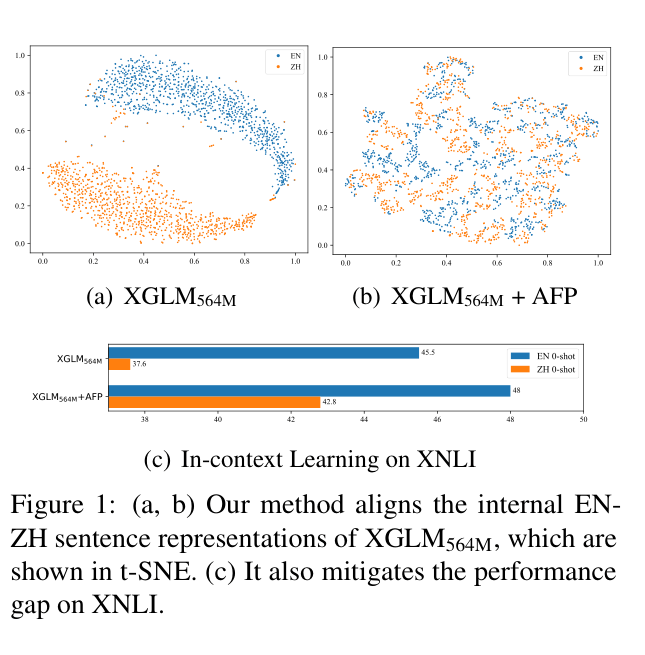

Multilingual generative models exhibit a performance bias toward high-resource languages and maintain isolated internal representation distributions for different languages, hindering effective knowledge transfer.

Why it matters:

- High-resource bias leaves low-resource languages with significantly worse performance (e.g., a 27.5% gap between English and Telugu in GPT-4)

- Isolated representation clusters mean models learn languages separately rather than transferring concepts, limiting the utility of multilingual pre-training

- Re-training massive models to fix these biases is computationally prohibitive; lightweight alignment methods are needed

Concrete Example:

When visualizing sentence representations in XGLM, English and Chinese sentences with the same meaning form two distinct, separated clusters. This separation prevents the model from effectively using English knowledge to answer Chinese prompts in zero-shot settings.

Key Novelty

Align aFter Pre-training (AFP)

- Aligns internal model states by treating translation pairs as positive examples in contrastive learning, pulling their vector representations together within the decoder

- Aligns model outputs by forcing the model to generate responses in a target language given a source language context (Cross-lingual Instruction Following), rather than just matching the input language
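The contrastive idea in the first point can be sketched as an InfoNCE-style loss over a batch of translation pairs. This is a minimal illustration and not the paper's exact objective: the cosine similarity, the `temperature` value, the use of other sentences in the batch as negatives, and the toy two-dimensional vectors are all assumptions made for the example. In practice the vectors would be sentence representations pooled from the decoder.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def contrastive_loss(src_reps, tgt_reps, temperature=0.1):
    """InfoNCE-style loss (assumed form, for illustration only).

    src_reps[i] and tgt_reps[i] are representations of a translation
    pair (the positive); every other tgt_reps[j] in the batch serves
    as a negative for src_reps[i].
    """
    total = 0.0
    for i, src in enumerate(src_reps):
        logits = [cosine(src, tgt) / temperature for tgt in tgt_reps]
        # Numerically stable log-sum-exp over all candidates.
        max_logit = max(logits)
        log_denominator = max_logit + math.log(
            sum(math.exp(value - max_logit) for value in logits)
        )
        # Negative log-probability of picking the true translation.
        total += log_denominator - logits[i]
    return total / len(src_reps)


# Toy vectors (hypothetical): two English sentences and their translations.
english = [[1.0, 0.0], [0.0, 1.0]]
chinese_aligned = [[0.9, 0.1], [0.1, 0.9]]      # translations lie close by
chinese_misaligned = [[0.1, 0.9], [0.9, 0.1]]   # translations lie far away

aligned_loss = contrastive_loss(english, chinese_aligned)
misaligned_loss = contrastive_loss(english, chinese_misaligned)
print(aligned_loss < misaligned_loss)
```

Minimizing a loss of this shape rewards representations in which a sentence is closer to its own translation than to other sentences in the batch, which is the "pulling together" described above.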

Architecture

The AFP framework consists of two alignment modules: Multilingual Contrastive Learning (MCL) and Cross-lingual Instruction Following (CIF).
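To make the CIF module more concrete, the sketch below builds training samples in which the context is in one language and the expected response is in another. The prompt template, the field names, the helper `build_cif_samples`, and the example sentences are all hypothetical; the source only states that the model must respond in a target language given a source-language context.

```python
# Hypothetical display names used in the prompt template.
LANGUAGE_NAMES = {"en": "English", "zh": "Chinese"}


def build_cif_samples(context_by_lang, response_by_lang):
    """Pair each source-language context with a response in a different language.

    Both arguments map a language code to parallel text (the same
    instruction, and the same answer, in each language).
    """
    samples = []
    for src_lang, context in context_by_lang.items():
        for tgt_lang, response in response_by_lang.items():
            if src_lang == tgt_lang:
                continue  # keep only cross-lingual pairs
            prompt = f"{context}\nRespond in {LANGUAGE_NAMES[tgt_lang]}."
            samples.append(
                {
                    "source": src_lang,
                    "target": tgt_lang,
                    "prompt": prompt,
                    "completion": response,
                }
            )
    return samples


# Illustrative parallel data (not taken from the paper).
contexts = {
    "en": "What is the capital of France?",
    "zh": "法国的首都是哪里？",
}
responses = {
    "en": "The capital of France is Paris.",
    "zh": "法国的首都是巴黎。",
}

cif_samples = build_cif_samples(contexts, responses)
for sample in cif_samples:
    print(sample["source"], "->", sample["target"])
```

Skipping same-language pairs is what distinguishes this from ordinary instruction tuning: every sample requires the model to answer in a language other than the one it was prompted in.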

Evaluation Highlights

- Reduces the relative performance gap between English and Chinese on XNLI by 6.53% for XGLM 564M using <1M parallel samples

- Improves average performance on 5 multilingual tasks (NLI, Reasoning, Paraphrase) by 2.6% across 52 languages

- Boosts the average zero-shot translation COMET score from 27.3 to 61.2 for XGLM models

Breakthrough Assessment

7/10

Effective lightweight framework that significantly boosts cross-lingual transfer with minimal data (<0.1‰ of pre-training tokens). While not a new architecture, it solves a critical alignment problem efficiently.