📝 Paper Summary

Mechanistic Interpretability

Multi-hop Reasoning

Internal Representations

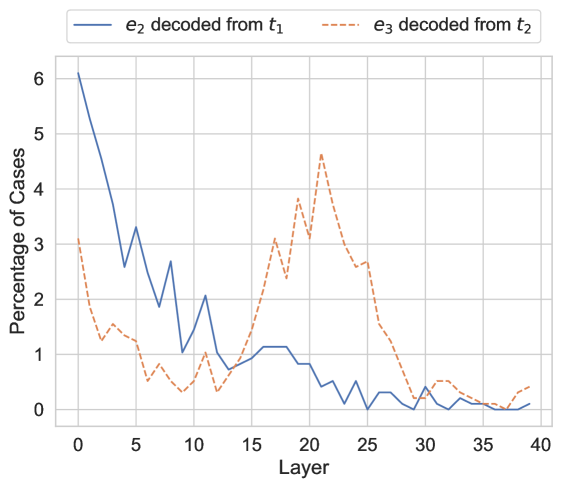

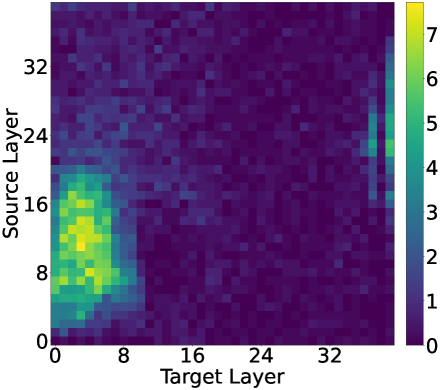

LLMs resolve the first hop of a multi-hop query in early layers and the second hop in late layers; failures occur when the second hop begins so late that the remaining layers can no longer carry out the necessary computation.

Core Problem

Large Language Models often fail to correctly answer multi-hop queries (e.g., 'The spouse of the performer of Imagine is') even when they possess the knowledge for each individual hop.

Why it matters:

- Latent multi-hop reasoning is critical for compositional generalization, allowing models to answer complex questions without explicit training on every combination

- Understanding internal mechanisms is essential for reliable model editing and debugging, as treating the model as a black box hinders targeted improvements

- Current interpretability methods (like vocabulary projection) often fail to detect entity resolution in early layers, leading to an incomplete understanding of how reasoning fails

Concrete Example:

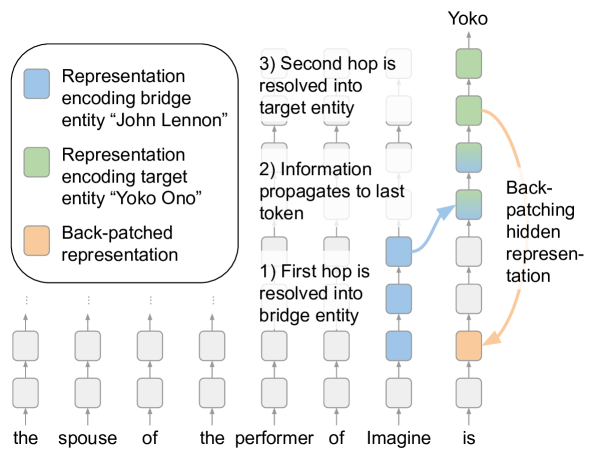

For the query 'The spouse of the performer of Imagine is', the model must first identify 'John Lennon' (hop 1) and then 'Yoko Ono' (hop 2). If the model identifies Lennon too late in its layers, the remaining layers may lack the components (MLP and attention sublayers) needed to extract Ono, so the model outputs an incorrect answer despite knowing both facts individually.

Key Novelty

Sequential Latent Reasoning Pathway & Back-Patching Analysis

- Discovers that the 'bridge entity' (result of hop 1) is encoded in early layers at the first-hop token position, while the final answer (hop 2) appears only in late layers at the final token position

- Proposes 'back-patching': copying the hidden representation of the bridge entity from a later layer back into an earlier layer, so that the model has more remaining layers ('depth') in which to compute the second hop
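
The back-patching idea can be illustrated with a toy sketch. This is not the paper's implementation: the "model" here is just a list of plain Python functions, and the names `run_layers`, `back_patch`, and `add_one` are hypothetical. It also assumes the patch is applied at the same token position it was read from. The sketch only shows the mechanics: record a hidden vector at a later layer, rerun the forward pass, and overwrite the same position at an earlier layer so that more layers operate on it.

```python
from typing import Callable, List, Optional, Tuple

Hidden = List[List[float]]          # hidden states, indexed [position][dim]
Layer = Callable[[Hidden], Hidden]  # a layer maps hidden states to hidden states


def run_layers(
    layers: List[Layer],
    h0: Hidden,
    patch: Optional[Tuple[int, int, List[float]]] = None,
) -> List[Hidden]:
    """Run all layers and return the hidden states after each one.

    patch = (layer_idx, position, vector) overwrites the output of
    layer_idx at the given position before later layers see it.
    """
    states = []
    h = [list(v) for v in h0]
    for i, layer in enumerate(layers):
        h = layer(h)
        if patch is not None and patch[0] == i:
            h = [list(v) for v in h]      # copy so recorded states stay intact
            h[patch[1]] = list(patch[2])
        states.append(h)
    return states


def back_patch(
    layers: List[Layer], h0: Hidden, position: int, src_layer: int, tgt_layer: int
) -> Hidden:
    """Copy the vector at (src_layer, position) into (tgt_layer, position),
    with tgt_layer earlier than src_layer, and return the final hidden states."""
    if not tgt_layer < src_layer:
        raise ValueError("back-patching requires tgt_layer < src_layer")
    clean = run_layers(layers, h0)
    vector = clean[src_layer][position]
    return run_layers(layers, h0, patch=(tgt_layer, position, vector))[-1]


def add_one(h: Hidden) -> Hidden:
    """Toy layer (an assumption for illustration): add 1 to every element."""
    return [[x + 1.0 for x in v] for v in h]


toy_layers = [add_one] * 4
final = back_patch(toy_layers, [[0.0], [0.0]], position=0, src_layer=2, tgt_layer=0)
print(final)  # [[6.0], [4.0]]
```

In the toy run, position 0 ends at 6.0 instead of 4.0 because the vector read after layer 2 is processed again by layers 1 to 3, while position 1 is untouched. With a real transformer the same pattern would typically be implemented with forward hooks on the residual stream.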

Architecture

A conceptual diagram of the multi-hop reasoning process and the back-patching intervention.

Evaluation Highlights

- Up to 66% of initially incorrect multi-hop queries can be corrected by back-patching the bridge entity representation to an earlier layer

- In 41%-78% of cases, the bridge entity is successfully resolved in the hidden representation of the first hop's end-token, even when the final answer is wrong

- The second hop (final answer) is predominantly resolved by MLP sublayers in the upper layers of the model, specifically at the last token position
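
One plausible way to read the "up to 66%" figure is as the share of initially incorrect queries for which at least one (source layer, target layer) back-patch yields the correct answer. The sketch below computes that share under this assumed reading; the result format, the function name `correctable_fraction`, and the toy data are all hypothetical and are not taken from the paper.

```python
from typing import Dict, Tuple

# Hypothetical format: for each initially incorrect query, a map from
# (source_layer, target_layer) to whether that back-patch gave the right answer.
PatchResults = Dict[str, Dict[Tuple[int, int], bool]]


def correctable_fraction(results: PatchResults) -> float:
    """Fraction of queries fixed by at least one back-patch layer pair."""
    if not results:
        return 0.0
    fixed = sum(1 for outcomes in results.values() if any(outcomes.values()))
    return fixed / len(results)


# Made-up outcomes, for illustration only.
toy_results: PatchResults = {
    "q1": {(5, 2): False, (6, 2): True},
    "q2": {(5, 2): False, (6, 2): False},
    "q3": {(4, 1): True},
    "q4": {},
}
print(correctable_fraction(toy_results))  # 0.5
```

Counting a query as corrected if any layer pair works gives an upper bound on what back-patching can recover, since in practice the right pair is not known in advance.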

Breakthrough Assessment

7/10

Provides strong mechanistic evidence for why multi-hop reasoning fails (layer budget exhaustion) and introduces a novel intervention (back-patching) that significantly recovers performance without training.