📝 Paper Summary

Automated dataset curation

Preference learning

LLM self-improvement

Refine-n-Judge is an automated pipeline that iteratively refines LLM responses and uses a judge to verify quality improvements, creating preference-ranked datasets for fine-tuning.

Core Problem

Curating high-quality preference datasets for LLM fine-tuning is costly and unscalable when relying on human feedback, while unguided self-refinement often fails to yield actual improvements.

Why it matters:

- Human feedback is expensive, slow, and hard to scale for large datasets

- Unguided self-correction (like SELF-REFINE) often suffers from drift or verbosity bias without external verification

- High-quality training data is the critical bottleneck for aligning models with user intent

Concrete Example:

Without a judge, an LLM might continuously rewrite an answer, making it longer and more verbose without improving accuracy (drifting). Refine-n-Judge stops this by rejecting refinements that don't beat the previous version.

Key Novelty

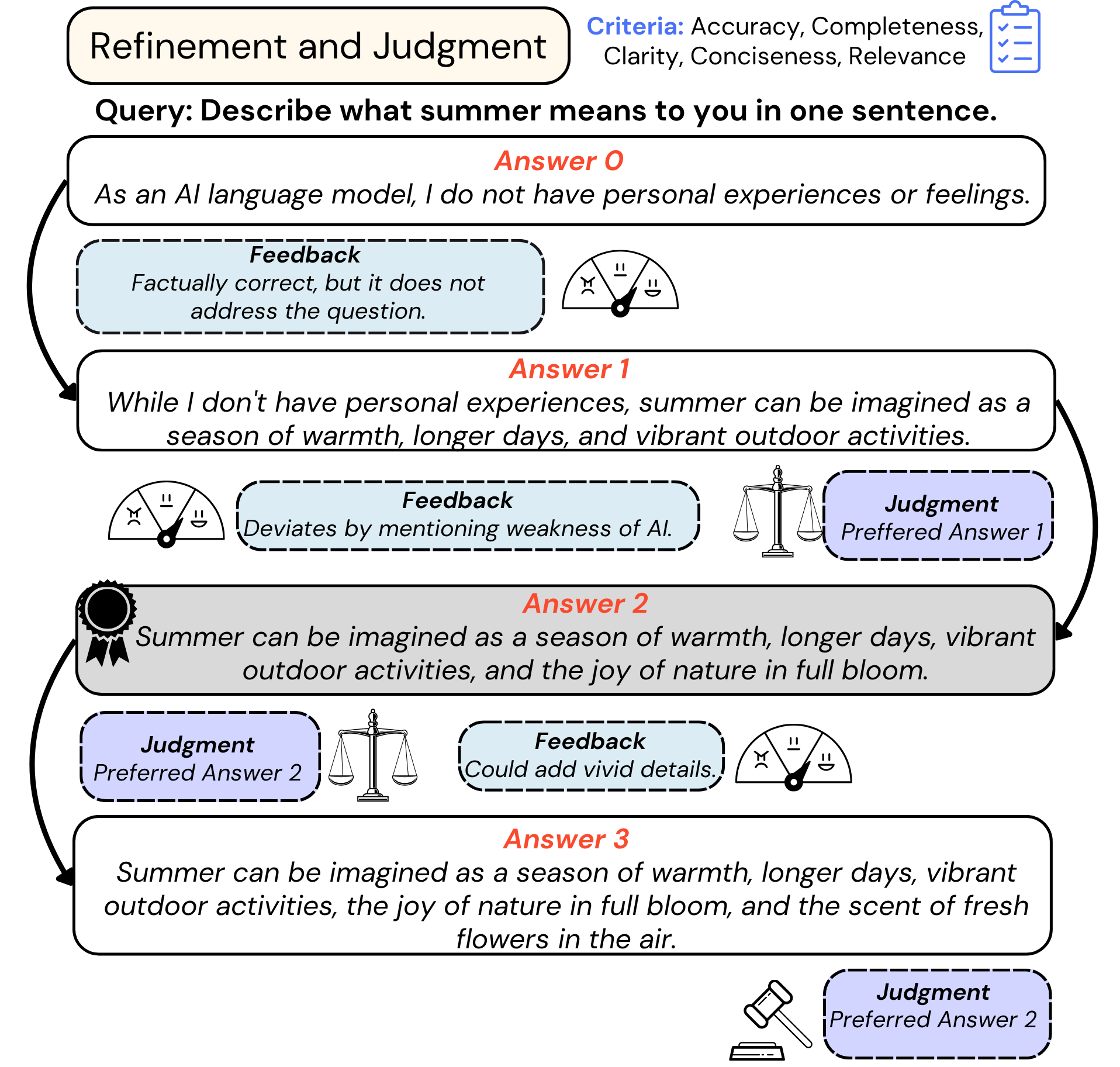

Iterative Refinement with Verification Loop

- Combines a 'Refiner' (generates improved answer candidates) and a 'Judge' (selects the better of two answers) into a single automated loop

- Unlike standard self-correction, refinements are only accepted if the Judge explicitly prefers the new version over the old one

- Produces 'preference chains' (sequences of improving answers) that can be used directly for fine-tuning, stopping automatically when quality plateaus

Architecture

The iterative Refine-n-Judge pipeline logic compared to a refiner-only approach.

Evaluation Highlights

- GPT-4 preferred Refine-n-Judge outputs 74% of the time compared to refinement-only pipelines without a judge

- Llama 3.3-70B fine-tuned on Refine-n-Judge data achieved a 91.8% win rate on AlpacaEval (vs 88.2% baseline)

- Llama 3.1-8B fine-tuned on the curated dataset saw a 5.5% absolute improvement on AlpacaEval compared to the original TULU dataset

Breakthrough Assessment

7/10

Effective, scalable method for synthetic data generation that mitigates self-correction drift. While the components (refiner/judge) are known, the integrated loop offers significant practical gains for fine-tuning.