📝 Paper Summary

Relational Reasoning

Neuro-symbolic AI

LLM Reasoning

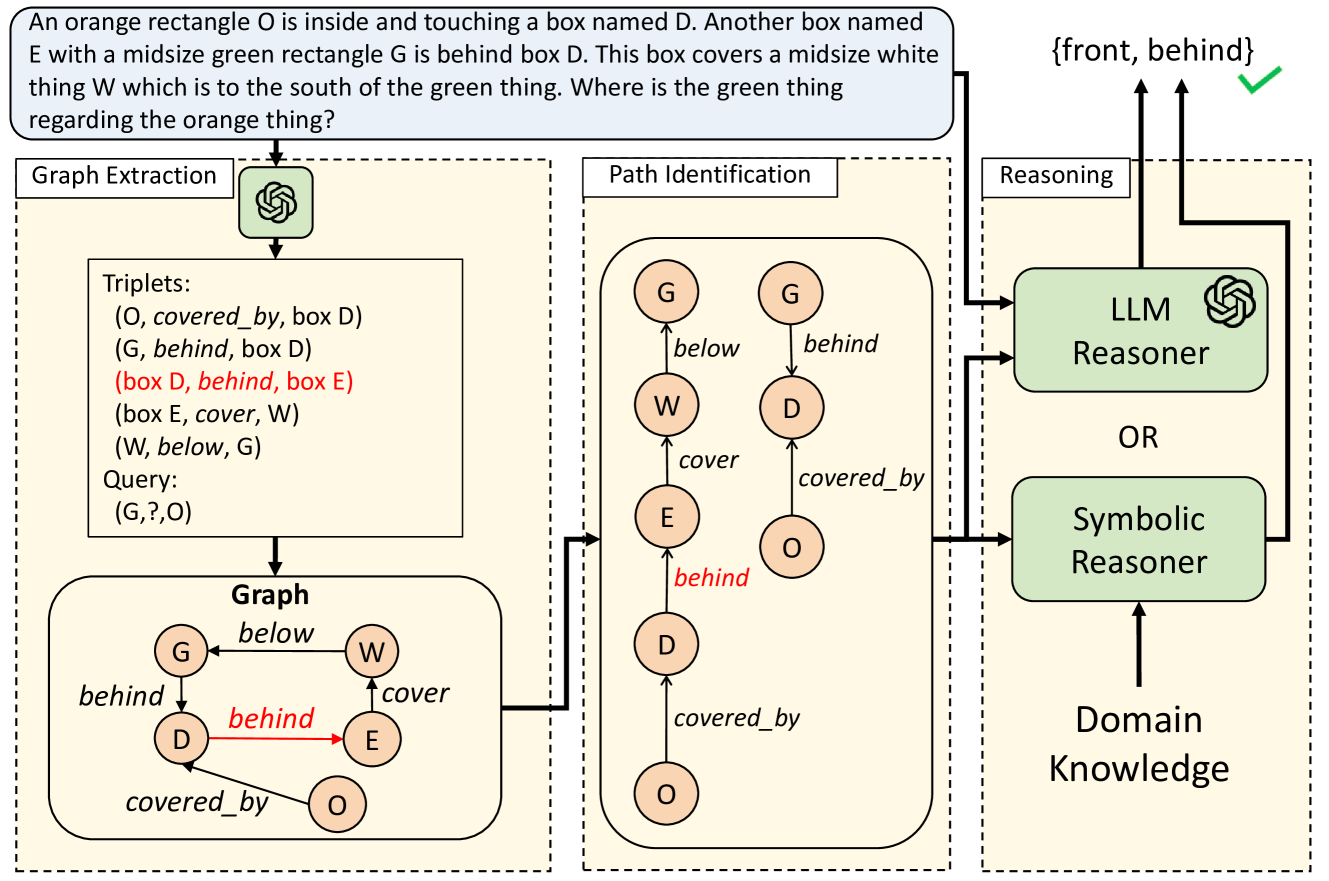

Path-of-Thoughts improves relational reasoning by using a single LLM call to extract a graph, identifying multiple reasoning paths between queried entities, and aggregating results to mitigate hallucinations and ambiguity.

Core Problem

LLMs struggle with multi-hop relational reasoning (e.g., kinship, spatial) due to shallow reasoning and hallucinations, while existing neuro-symbolic methods are brittle to extraction errors and require complex, task-specific translation.

Why it matters:

- Multi-hop reasoning is essential for planning, navigation, and logic tasks, where LLMs typically underperform symbolic solvers.

- Current neuro-symbolic approaches often require many LLM calls or highly specialized symbolic modules that break when the LLM makes minor extraction errors.

- Pure prompting methods (CoT) often get distracted by irrelevant context in long stories.

Concrete Example:

In a story where 'A is west of B' and 'C is north of A', an LLM asked how C relates to B might hallucinate a direct relation or get confused by irrelevant details. Current symbolic methods might extract a wrong fact and fail completely. PoT extracts a graph and finds the paths connecting the queried entities (e.g., B->A->C); when several paths exist, they cross-check one another, mitigating single-point failures.
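To make the spatial example concrete, here is a minimal sketch that composes the two stated facts along a graph path to relate B and C. The unit-offset encoding and the names `OFFSETS`, `build_graph`, `relative_offset`, and `describe` are assumptions made for illustration; they are not taken from the paper.

```python
from collections import deque

# Assumed encoding: "X is <relation> of Y" means X = Y + offset on a unit grid.
OFFSETS = {"west": (-1, 0), "east": (1, 0), "north": (0, 1), "south": (0, -1)}

def build_graph(facts):
    """facts: list of (head, relation, tail), read as 'head is relation of tail'."""
    graph = {}
    for head, rel, tail in facts:
        dx, dy = OFFSETS[rel]
        # Edge tail -> head carries the offset; the reverse edge carries its negation.
        graph.setdefault(tail, []).append((head, (dx, dy)))
        graph.setdefault(head, []).append((tail, (-dx, -dy)))
    return graph

def relative_offset(graph, source, target):
    """Breadth-first search that accumulates offsets so that target = source + offset."""
    queue = deque([(source, (0, 0))])
    seen = {source}
    while queue:
        node, (x, y) = queue.popleft()
        if node == target:
            return (x, y)
        for nxt, (dx, dy) in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, (x + dx, y + dy)))
    return None  # the two entities are not connected in the graph

def describe(offset):
    """Turn an accumulated offset into a compass label."""
    x, y = offset
    vertical = "north" if y > 0 else "south" if y < 0 else ""
    horizontal = "east" if x > 0 else "west" if x < 0 else ""
    return "-".join(part for part in (vertical, horizontal) if part) or "same position"

facts = [("A", "west", "B"), ("C", "north", "A")]
graph = build_graph(facts)
answer = describe(relative_offset(graph, "B", "C"))
print(answer)  # north-west
```

The path B->A->C yields an offset of (-1, 1), so C lies to the north-west of B. Irrelevant sentences in the story never enter the computation because they contribute no edge on that path.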

Key Novelty

Path-of-Thoughts (PoT)

- Decomposes reasoning into three stages: graph extraction, path identification, and reasoning, using a single LLM call for extraction.

- Mitigates LLM errors by finding *multiple* independent reasoning paths between entities in the extracted graph, rather than relying on a single chain.

- Uses the graph structure to filter out irrelevant context, passing only relevant reasoning chains to the final solver (LLM or symbolic).

Architecture

The 3-stage pipeline of Path-of-Thoughts: (1) Graph Extraction from story, (2) Path Identification between query nodes, (3) Reasoning to produce the answer.

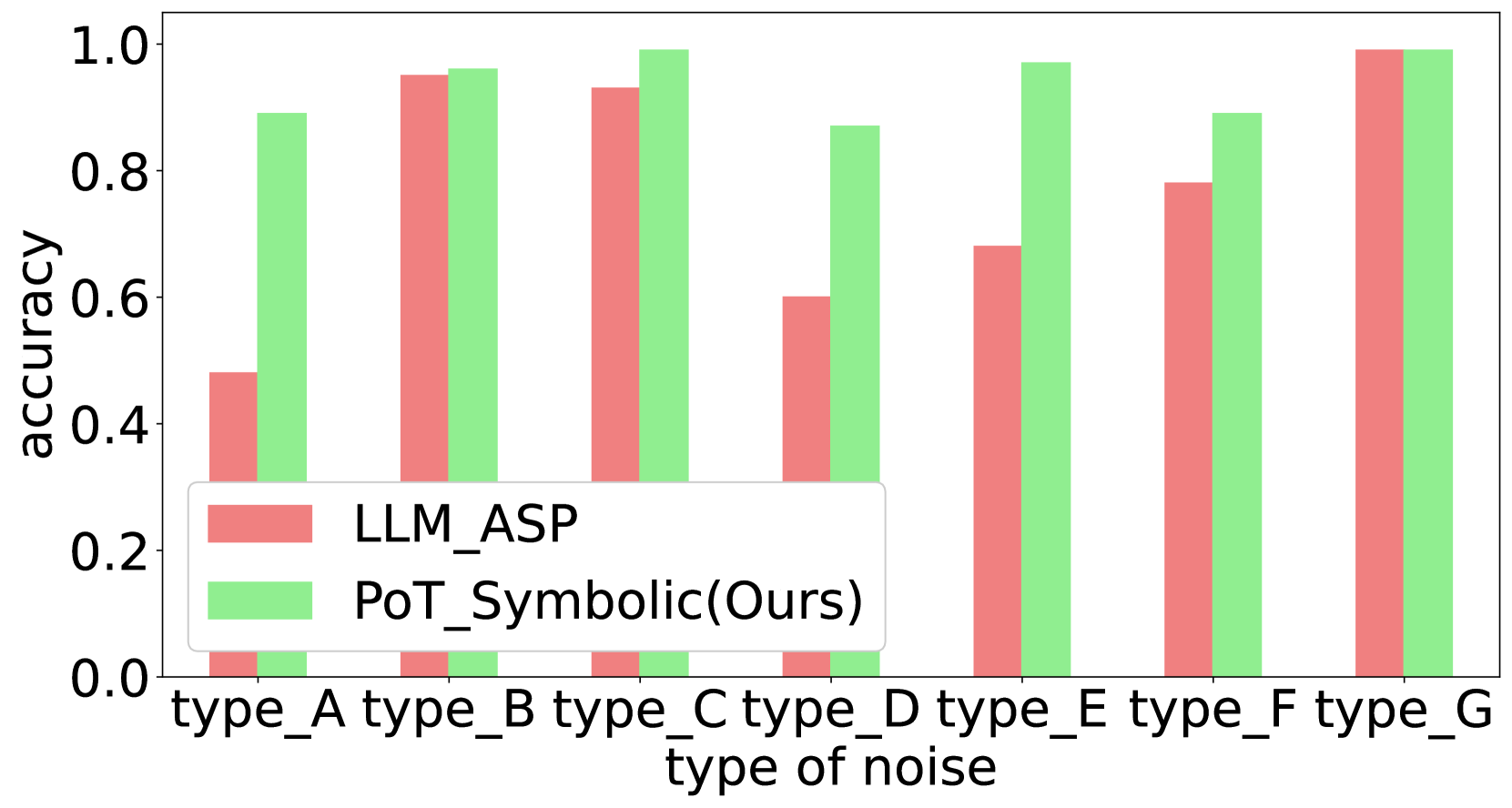
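The three stages can be sketched roughly as follows. This is an illustrative toy, not the paper's implementation: the hard-coded `EXTRACTED_EDGES` stand in for the output of the single LLM extraction call, the `COMPOSE` table is a hypothetical symbolic reasoner covering only this example, and majority voting is one assumed way to aggregate answers across paths.

```python
from collections import Counter

# Stage 1 (stand-in): triples (head, relation, tail), read as "tail is the relation of head".
# In PoT these would come from one LLM call over the story; here they are hard-coded.
EXTRACTED_EDGES = [
    ("Alice", "mother", "Beth"),
    ("Beth", "brother", "Carl"),
    ("Alice", "brother", "Dan"),
    ("Dan", "uncle", "Carl"),
    ("Alice", "father", "Ed"),
    ("Ed", "son", "Carl"),  # deliberately noisy edge, simulating an extraction error
]

# Hypothetical composition rules: (first hop, second hop) -> combined relation.
COMPOSE = {
    ("mother", "brother"): "uncle",
    ("brother", "uncle"): "uncle",
    ("father", "son"): "brother",
}

def find_paths(edges, source, target, max_hops=4):
    """Stage 2: enumerate simple directed paths, returned as lists of relations."""
    adjacency = {}
    for head, rel, tail in edges:
        adjacency.setdefault(head, []).append((rel, tail))
    paths = []

    def dfs(node, visited, relations):
        if node == target:
            paths.append(relations)
            return
        if len(relations) >= max_hops:
            return
        for rel, nxt in adjacency.get(node, []):
            if nxt not in visited:
                dfs(nxt, visited | {nxt}, relations + [rel])

    dfs(source, {source}, [])
    return paths

def reason_over_path(relations):
    """Stage 3a: fold the relations along one path; None if a rule is missing."""
    if not relations:
        return None
    current = relations[0]
    for rel in relations[1:]:
        current = COMPOSE.get((current, rel))
        if current is None:
            return None
    return current

def aggregate(answers):
    """Stage 3b: majority vote over the paths that produced an answer."""
    votes = Counter(a for a in answers if a is not None)
    return votes.most_common(1)[0][0] if votes else None

paths = find_paths(EXTRACTED_EDGES, "Alice", "Carl")
answer = aggregate(reason_over_path(p) for p in paths)
print(paths)
print(answer)  # uncle
```

Two of the three paths agree on "uncle", so the vote overrides the answer derived from the noisy edge. In the full method, each path could instead be handed to an LLM or a more complete symbolic solver.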

Evaluation Highlights

- Surpasses state-of-the-art baselines by up to 21.3% on benchmark datasets like CLUTRR and StepGame.

- Achieves higher accuracy than Chain-of-Thought (CoT) and CoT-SC on complex Chinese kinship tasks involving over 500 relation types.

- Demonstrates superior robustness to LLM extraction errors by successfully reasoning even when the initial graph contains noise, thanks to multi-path validation.

Breakthrough Assessment

7/10

Strong empirical results (up to +21.3% over state-of-the-art baselines) and a practical approach to catching LLM hallucinations via graph path consistency. The single-call extraction is efficient, though the core novelty is an evolutionary step in neuro-symbolic reasoning rather than a paradigm shift.