📝 Paper Summary

Preference-based Reinforcement Learning (PbRL)

Reward Learning

World Models

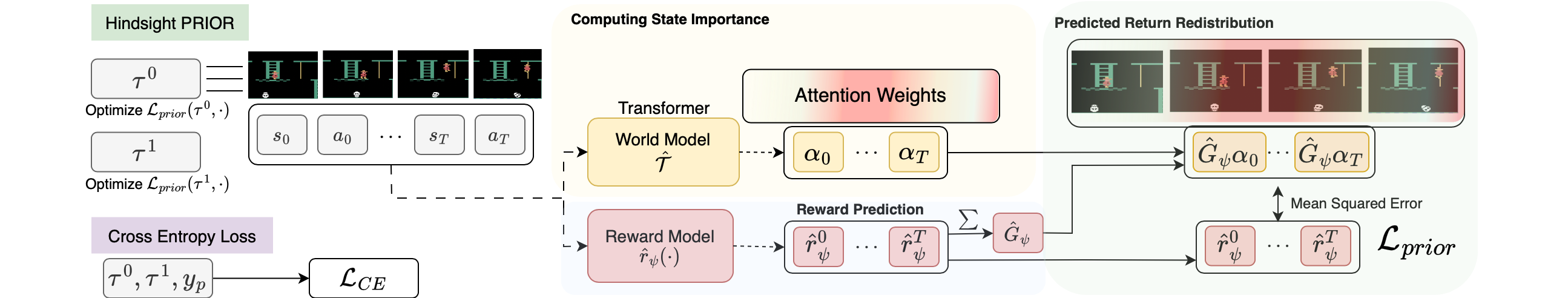

Hindsight PRIOR improves reward learning from human preferences by using an attention-based world model to identify important states in hindsight and redistributing predicted returns to those states.

Core Problem

Current PbRL methods lack a credit assignment strategy, so they cannot determine which parts of a trajectory contributed to a preference label. The result is data inefficiency and misaligned reward functions.

Why it matters:

- Without credit assignment, many possible reward functions can explain a preference, requiring large amounts of feedback to disambiguate.

- Misaligned reward functions (those that fit the training data but fail to generalize) lead to sub-optimal policies when the deployment environment differs even slightly from the training environment.

- Reducing human feedback requirements is critical for scaling alignment techniques to complex tasks where supervision is expensive.
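
The ambiguity described in the first point can be made concrete with a minimal sketch. It assumes the Bradley-Terry preference model over summed segment rewards that is common in PbRL. The reward values and the names `preference_prob`, `sparse_*`, and `smeared_*` are hypothetical and are not taken from the paper.

```python
import math

def preference_prob(rewards_a, rewards_b):
    """Bradley-Terry probability that segment A is preferred over segment B,
    computed from the summed per-step rewards of each segment."""
    ret_a, ret_b = sum(rewards_a), sum(rewards_b)
    return 1.0 / (1.0 + math.exp(ret_b - ret_a))

# Two hypothetical reward functions evaluated on the same pair of 4-step segments.
# Both give segment A a return of 2.0 and segment B a return of 0.0,
# but they place the reward on very different steps.
sparse_a, sparse_b = [0.0, 0.0, 2.0, 0.0], [0.0, 0.0, 0.0, 0.0]
smeared_a, smeared_b = [0.5, 0.5, 0.5, 0.5], [0.0, 0.0, 0.0, 0.0]

p_sparse = preference_prob(sparse_a, sparse_b)
p_smeared = preference_prob(smeared_a, smeared_b)
print(p_sparse, p_smeared)
```

Both reward functions assign the same probability to the preference label, so the label alone cannot separate them. Only more feedback or an additional prior on the reward can.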

Concrete Example:

In Montezuma's Revenge, an agent might receive a positive preference for a long trajectory because of a single key jump. Standard PbRL might smear the reward across all steps (including walking), whereas the true reward should be concentrated on the setup and execution of the jump.

Key Novelty

PRIor On Reward (PRIOR)

- Uses an attention-based world model to predict future states; the model's attention weights serve as a proxy for 'state importance' (states that are most predictive of the future).

- Redistributes the total predicted return of a trajectory to individual state-action pairs proportional to this importance, creating a dense supervision signal for the reward model.

- Combines the standard preference cross-entropy loss with this auxiliary 'hindsight' regression loss to guide reward learning.
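
The three steps above can be sketched as follows. This is an illustration under stated assumptions, not the authors' implementation: attention weights are passed in as plain numbers rather than read from a world model, importance is obtained by normalizing them to sum to one, the hindsight loss is taken to be a mean squared error, and the weighting coefficient `lam` and all function names are hypothetical.

```python
import math

def redistribute_return(predicted_rewards, attention_weights):
    """Spread a segment's total predicted return over its steps
    in proportion to each step's (normalized) attention weight."""
    total_return = sum(predicted_rewards)
    weight_sum = sum(attention_weights)
    return [total_return * w / weight_sum for w in attention_weights]

def hindsight_loss(predicted_rewards, target_rewards):
    """Assumed form: mean squared error between per-step predictions and targets."""
    squared = [(r - t) ** 2 for r, t in zip(predicted_rewards, target_rewards)]
    return sum(squared) / len(squared)

def preference_loss(rewards_a, rewards_b, a_preferred):
    """Cross-entropy of the preference label under a Bradley-Terry model."""
    p_a = 1.0 / (1.0 + math.exp(sum(rewards_b) - sum(rewards_a)))
    label = 1.0 if a_preferred else 0.0
    return -(label * math.log(p_a) + (1.0 - label) * math.log(1.0 - p_a))

def combined_loss(rewards_a, attn_a, rewards_b, attn_b, a_preferred, lam=1.0):
    """Preference loss plus lam times the auxiliary hindsight loss.
    In a real training loop the targets would be treated as constants."""
    targets_a = redistribute_return(rewards_a, attn_a)
    targets_b = redistribute_return(rewards_b, attn_b)
    aux = hindsight_loss(rewards_a, targets_a) + hindsight_loss(rewards_b, targets_b)
    return preference_loss(rewards_a, rewards_b, a_preferred) + lam * aux

# Hypothetical 4-step segment: uniform predicted rewards, attention peaked on step 3.
predicted = [0.5, 0.5, 0.5, 0.5]
attention = [0.1, 0.1, 0.7, 0.1]
targets = redistribute_return(predicted, attention)
loss = combined_loss(predicted, attention, [0.0] * 4, [0.25] * 4, True)
print(targets, loss)
```

The targets sum to the same total return as the predictions, but most of it lands on the third step, the one with the largest attention weight. The auxiliary term then pulls the reward model's per-step predictions toward that distribution.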

Architecture

The Hindsight PRIOR framework. A trajectory generated by the policy is passed to a world model (TWM) to extract attention maps. These maps are then used to redistribute the predicted return (from the reward function) into 'target rewards' for updating the reward function.

Evaluation Highlights

- Recovers significantly more reward on average than baselines: +20% on MetaWorld and +15% on DMC (p < 0.05).

- Achieves ≥ 80% success rate on MetaWorld tasks with as little as half the amount of feedback required by baselines.

- Demonstrates robustness to incorrect/noisy preference feedback, maintaining higher performance than PEBBLE and other baselines when feedback error rates increase.

Breakthrough Assessment

7/10

Offers a logically sound and empirically effective solution to the credit assignment problem in PbRL using world models. The gains in sample efficiency and robustness are significant.