📝 Paper Summary

Offline Preference Optimization

LLM Alignment

AlphaDPO improves model alignment by replacing the static reference model in DPO with an adaptive implicit reference that scales reward margins based on the policy's confidence.

Core Problem

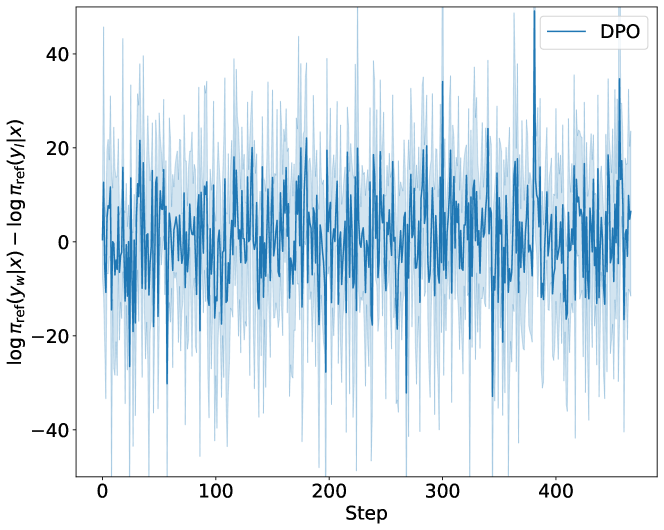

Existing alignment methods either rely on static reference models that degrade as the policy updates (DPO) or assume a uniform reward margin that ignores instance-specific preference strengths (SimPO).

Why it matters:

- Static reference models in DPO fail to provide meaningful discrimination between preferred and rejected responses once the policy shifts significantly

- Uniform margins in SimPO (Simple Preference Optimization) force the model to learn the same separation for ambiguous pairs as for obvious ones, leading to suboptimal learning on noisy data

- Offline alignment needs to balance exploitation of preference data with exploration without the complexity of Reinforcement Learning

Concrete Example:

In DPO, if the reference model assigns equal low probability to both a high-quality answer and a low-quality answer, it fails to guide the policy. In SimPO, a subtle preference pair (slightly better) and a distinct pair (vastly better) are forced to have the same reward margin $\gamma$, potentially causing overfitting or underfitting.

Key Novelty

Implicit Adaptive Reference Model (AlphaDPO)

- Constructs a theoretical 'implicit' reference model $\hat{\pi}_{ref}$ that interpolates between the static supervised baseline and a uniform distribution

- Introduces a smoothing parameter $\alpha$ to control this interpolation: $\alpha=0$ recovers SimPO (uniform ref), while $\alpha=1$ recovers DPO (static ref)

- Adapts the reward margin per instance based on the divergence between the current policy and the original reference, adding a normalized discrepancy term to the loss

Architecture

Comparison of reference model behaviors in DPO, SimPO, and AlphaDPO

Evaluation Highlights

- 58.7% Length-Controlled win rate on AlpacaEval 2 using Llama-3-8B-Instruct

- 35.7% win rate on Arena-Hard using Llama-3-8B-Instruct

- Demonstrates state-of-the-art performance across Mistral-7B, Llama-3-8B, and Gemma-2-9B without requiring multi-stage training

Breakthrough Assessment

8/10

Provides a theoretically grounded unification of two major alignment methods (DPO and SimPO) and achieves SOTA results on difficult benchmarks by addressing the core issue of static reference models.