📊 Experiments & Results

Evaluation Setup

Detecting LLM-generated text across multiple domains and source models using AUROC

Benchmarks:

- Fast-DetectGPT Benchmark (LGT Detection)

- MGTBench (LGT Detection)

Metrics:

- AUROC

- Statistical methodology: Not explicitly reported in the paper

Key Results

| Benchmark | Metric | Baseline | This Paper | Δ |

|---|---|---|---|---|

| ReMoDetect consistently outperforms zero-shot and supervised baselines across various aligned LLMs on the Fast-DetectGPT benchmark. | ||||

| Fast-DetectGPT Benchmark | Average AUROC | 91.9 | 95.8 | +3.9 |

| Fast-DetectGPT Benchmark (GPT-4) | AUROC | 90.6 | 97.9 | +7.3 |

| Fast-DetectGPT Benchmark (Claude 3 Opus) | AUROC | 92.6 | 98.6 | +6.0 |

| Fast-DetectGPT Benchmark | Average AUROC | 85.9 | 95.8 | +9.9 |

| MGTBench | Average AUROC | 82.6 | 93.4 | +10.8 |

Experiment Figures

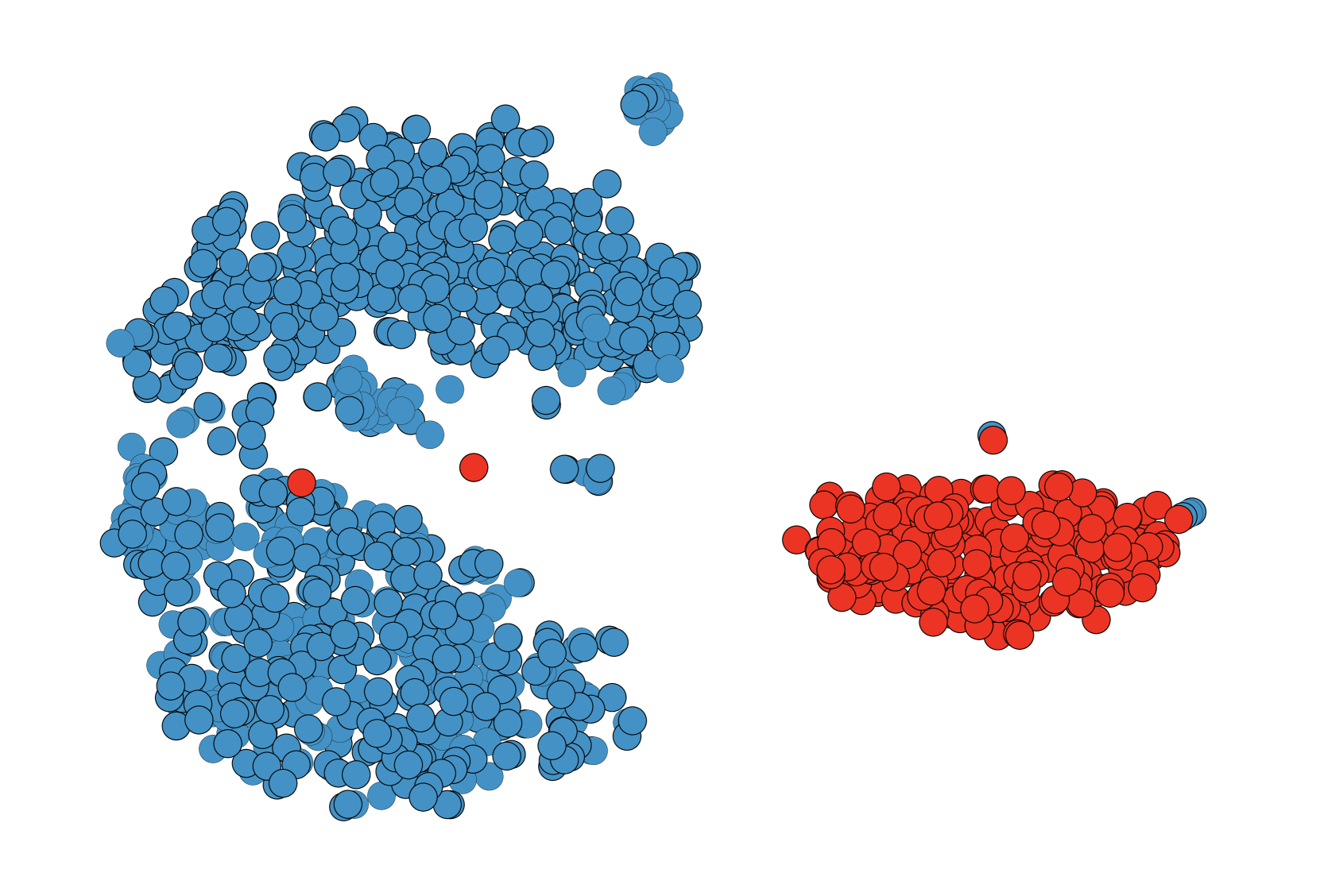

Distribution of predicted reward scores for Human-written text vs. Aligned LLM-generated text (GPT-4, etc.)

Main Takeaways

- Aligned LLMs (GPT-4, Claude) generate text with significantly higher reward scores than human text, validating the core hypothesis

- Continual preference fine-tuning with replay buffers effectively prevents overfitting, allowing the model to generalize to unseen LLMs

- The use of Human/LLM mixed texts improves the decision boundary, acting as effective data augmentation for the reward model

- ReMoDetect is more computationally efficient for inference than perturbation-based methods like DetectGPT as it requires only a single forward pass