📝 Paper Summary

Reinforcement Learning from Human Feedback (RLHF)

Direct Preference Optimization (DPO)

Offline Preference Optimization

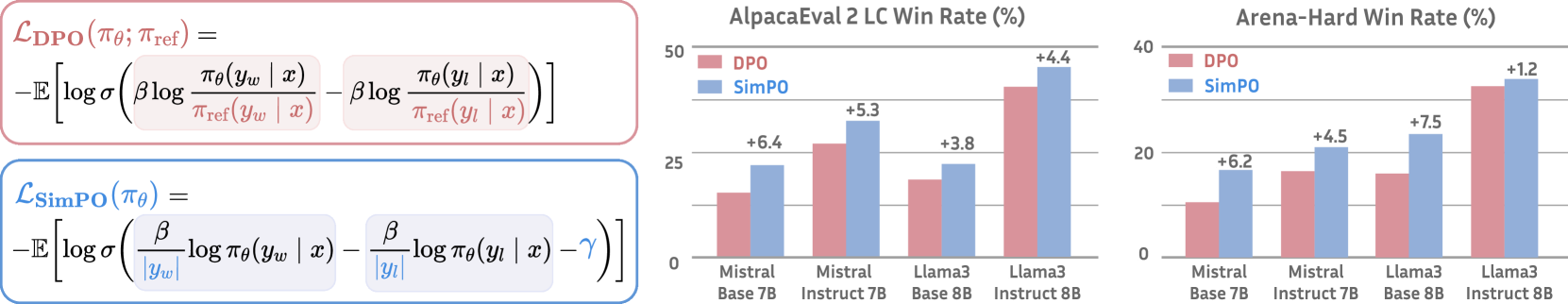

SimPO aligns preference optimization with generation by using length-normalized average log-likelihood as a reference-free reward and introducing a target margin, outperforming DPO without the memory cost of a reference model.

Core Problem

DPO requires a memory-intensive reference model and optimizes a reward (log-ratio) that mismatches the inference metric (average log-likelihood), often leading to poor likelihood rankings.

Why it matters:

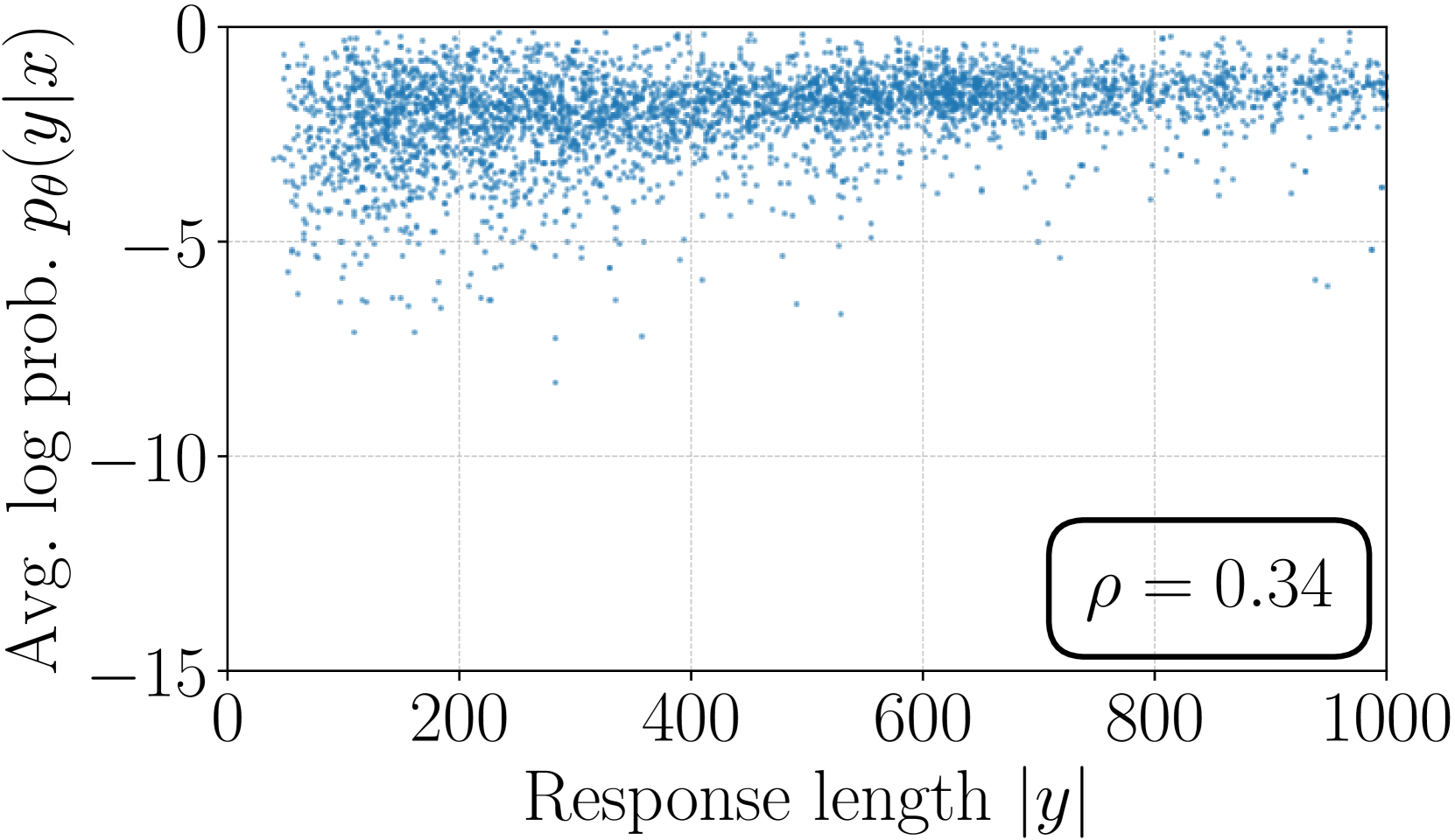

- The mismatch between training reward and generation metrics results in suboptimal performance, with only ~50% of training triples satisfying likelihood rankings in DPO models

- The requirement for a reference model doubles the memory footprint during training, increasing computational costs and hindering scalability

Concrete Example:

In DPO, a winning response might have a higher reward (log-ratio) than a losing response, but a lower average log-likelihood. During inference (which uses likelihood), the model may rank the losing response higher, failing to reflect the learned preference.

Key Novelty

Simple Preference Optimization (SimPO)

- Replaces DPO's reference-based reward with the policy model's average log-probability of the response, normalized by length to prevent verbosity bias

- Incorporates a target reward margin into the Bradley-Terry objective to enforce a specific gap between winning and losing response scores, improving class separation

Architecture

Conceptual comparison between DPO and SimPO objectives and workflows.

Evaluation Highlights

- Gemma-2-9B-it-SimPO achieves 72.4% length-controlled win rate on AlpacaEval 2, outperforming existing <10B models

- Achieves 59.1% win rate on Arena-Hard with Gemma-2-9B-it-SimPO, ranking 1st among models with <10B parameters

- Outperforms DPO by up to 6.4 points on AlpacaEval 2 and 7.5 points on Arena-Hard across various setups

Breakthrough Assessment

8/10

Significantly outperforms the widely used DPO while removing the need for a reference model (simplifying compute/memory). Empirical gains are substantial on high-quality benchmarks like Arena-Hard.