📝 Paper Summary

Alignment

Reinforcement Learning from Human Feedback (RLHF)

Text Summarization

SLiC-HF aligns language models to human preferences using a margin-based contrastive loss over generated sequences, eliminating the need for complex reinforcement learning optimization like PPO.

Core Problem

Standard RLHF using PPO (Proximal Policy Optimization) is computationally expensive, memory-intensive, and difficult to tune due to the need for separate value networks and online sampling during training.

Why it matters:

- PPO requires keeping multiple large models (policy, value, reward, reference) in memory, limiting the size of models that can be trained

- The sampling (rollout) step inside the PPO training loop significantly slows down optimization compared to standard supervised learning

- Reference-based metrics (ROUGE) fail to capture quality beyond the gold standard, but PPO's complexity creates a barrier to adopting human preference alignment

Concrete Example:

In summarization, a model might generate a technically accurate but boring summary. A PPO-based RLHF approach would attempt to fix this by running generation loops during training to estimate values, whereas SLiC-HF simply compares the probability of a 'good' summary vs. a 'bad' one offline.

Key Novelty

Sequence Likelihood Calibration with Human Feedback (SLiC-HF)

- Adapts Sequence Likelihood Calibration (SLiC) to use human preference rankings instead of similarity-to-reference metrics

- Uses a pairwise contrastive loss that forces the model to assign higher probability to the preferred sequence in a pair compared to the dispreferred one

- Enables learning from off-policy data (samples generated by other models) effectively, decoupling generation from optimization

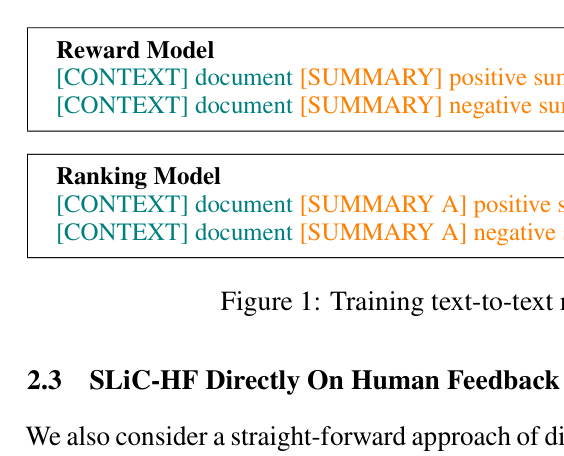

Architecture

Input text formats for training the Reward Model vs. the Ranking Model.

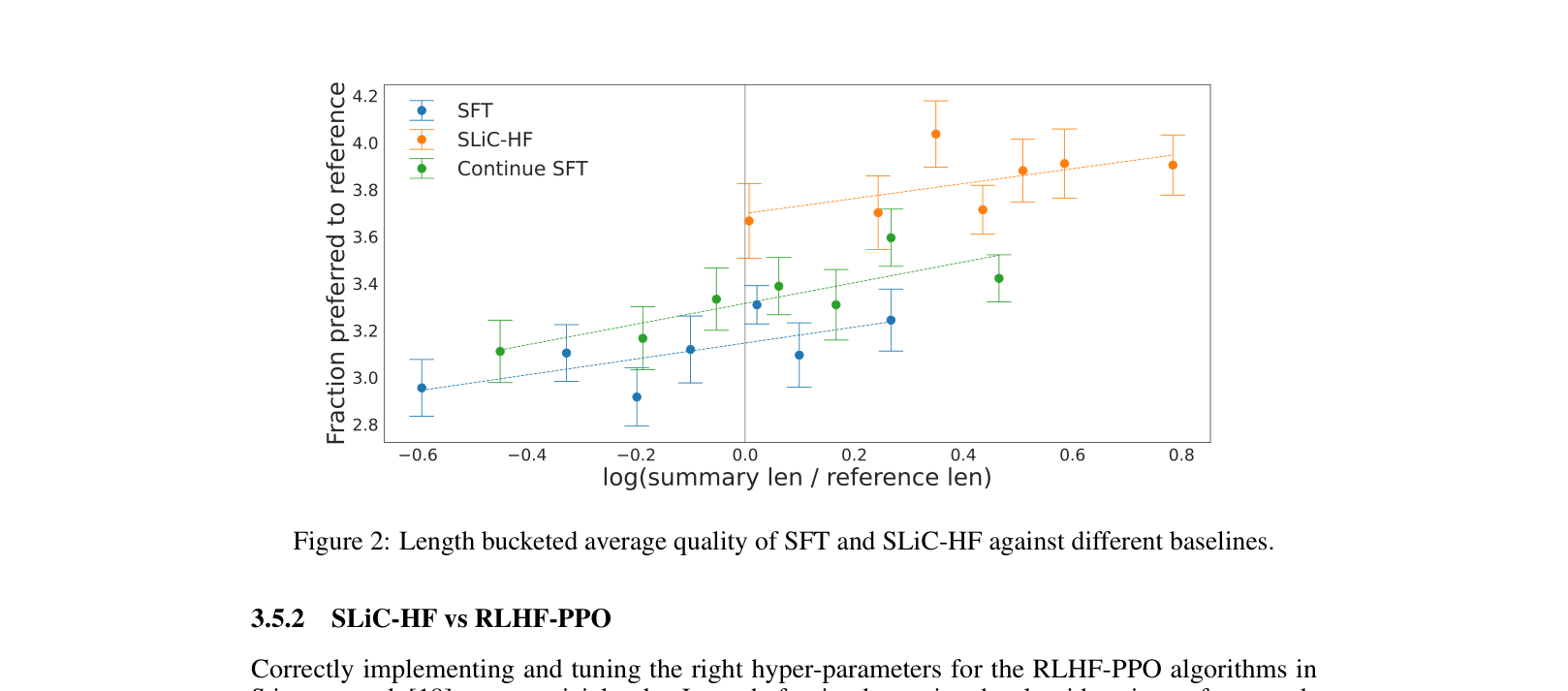

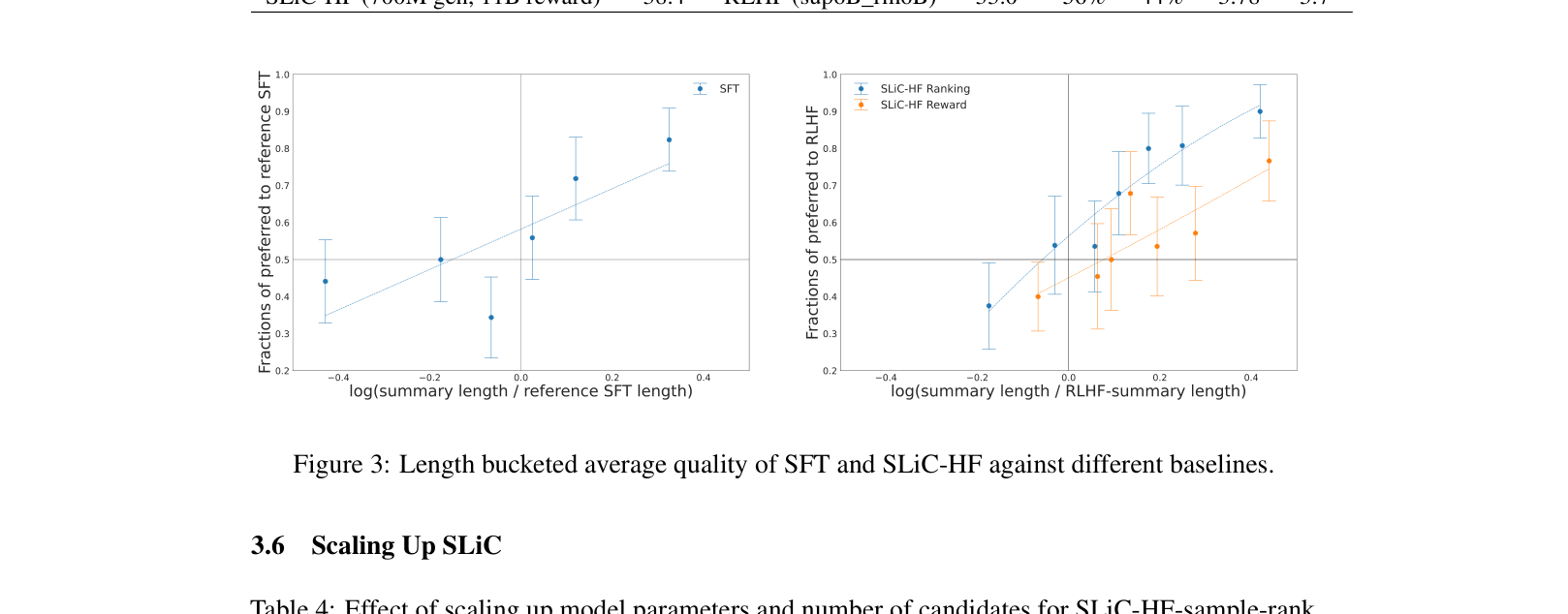

Evaluation Highlights

- SLiC-HF (T5-Large, 770M params) achieves a 66% win rate against a significantly larger RLHF-PPO baseline (6B params) in human evaluation

- Ranking-based filtering combined with SLiC-HF improves win rate against reference summaries from 44.96% (SFT) to 86.21% on TL;DR dataset

- Scaling the base model to T5-XXL (11B) yields a 96.10% win rate against human references according to the ranking model

Breakthrough Assessment

8/10

Provides a highly effective, simpler, and more efficient alternative to PPO for alignment. While similar to DPO (concurrent work), it demonstrates strong empirical results and off-policy capabilities.