📝 Paper Summary

Safe Reinforcement Learning

Offline Reinforcement Learning

FISOR enforces hard safety constraints in offline reinforcement learning by using reachability analysis to decouple reward maximization in safe regions from safety recovery in unsafe regions, training a diffusion policy via weighted regression.

Core Problem

Existing safe offline RL methods impose soft constraints (keeping the expected cumulative cost below a threshold), which still permit occasional catastrophic failures. They also struggle to balance the conflicting goals of reward maximization, safety, and behavior regularization.
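For reference, the two kinds of constraint can be written side by side. The notation is assumed here rather than quoted from the paper: r is the reward, c the per-step cost, l the cost limit, γ the discount factor, and h(s) a constraint function with h(s) > 0 marking a violation.

$$
\text{soft:}\quad \max_{\pi}\ \mathbb{E}_{\pi}\Big[\textstyle\sum_{t\ge 0}\gamma^{t} r(s_t,a_t)\Big] \quad \text{s.t.}\quad \mathbb{E}_{\pi}\Big[\textstyle\sum_{t\ge 0}\gamma^{t} c(s_t,a_t)\Big] \le l
$$

$$
\text{hard:}\quad \max_{\pi}\ \mathbb{E}_{\pi}\Big[\textstyle\sum_{t\ge 0}\gamma^{t} r(s_t,a_t)\Big] \quad \text{s.t.}\quad h(s_t) \le 0 \ \ \forall t \ge 0
$$

The soft version only bounds an expectation, so individual trajectories may still violate it; the hard version constrains every state the policy visits.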

Why it matters:

- Soft constraints are unacceptable in safety-critical domains like industrial control and autonomous driving, where even a single violation can be disastrous

- Jointly optimizing coupled objectives for safety and reward leads to unstable training and suboptimal policies in offline settings

Concrete Example:

In an autonomous driving scenario, a 'soft constraint' method might let the car drive on the sidewalk 1% of the time to maintain a high average speed. FISOR treats the sidewalk, together with any state from which reaching it is unavoidable, as an 'infeasible region' and strictly prioritizes steering back to the road (minimizing safety risk) over speed, optimizing speed only when the car is safely on the road.
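The sidewalk example reduces to simple arithmetic. The following is a minimal sketch with hypothetical data and a hypothetical episodic budget `COST_LIMIT`: every 100-step episode spends exactly one step (1%) on the sidewalk, which passes a soft average-cost check but fails a hard per-step check.

```python
import numpy as np

# Hypothetical logged data: per-step cost c_t = 1.0 when the car is on the
# sidewalk and 0.0 otherwise. Every 100-step episode spends exactly one step
# (1% of the time) on the sidewalk.
N_EPISODES, HORIZON = 50, 100
costs = np.zeros((N_EPISODES, HORIZON))
costs[:, HORIZON // 2] = 1.0

COST_LIMIT = 2.0  # hypothetical episodic cost budget for the soft constraint


def satisfies_soft(costs: np.ndarray, limit: float) -> bool:
    """Soft constraint: the average episodic cost stays within the budget."""
    return bool(costs.sum(axis=1).mean() <= limit)


def satisfies_hard(costs: np.ndarray) -> bool:
    """Hard (state-wise) constraint: no step of any episode has positive cost."""
    return bool((costs <= 0.0).all())


print("soft constraint satisfied:", satisfies_soft(costs, COST_LIMIT))  # True
print("hard constraint satisfied:", satisfies_hard(costs))              # False
```

The average episodic cost is 1.0, comfortably inside the budget, even though every single episode contains a violation.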

Key Novelty

FeasIbility-guided Safe Offline RL (FISOR)

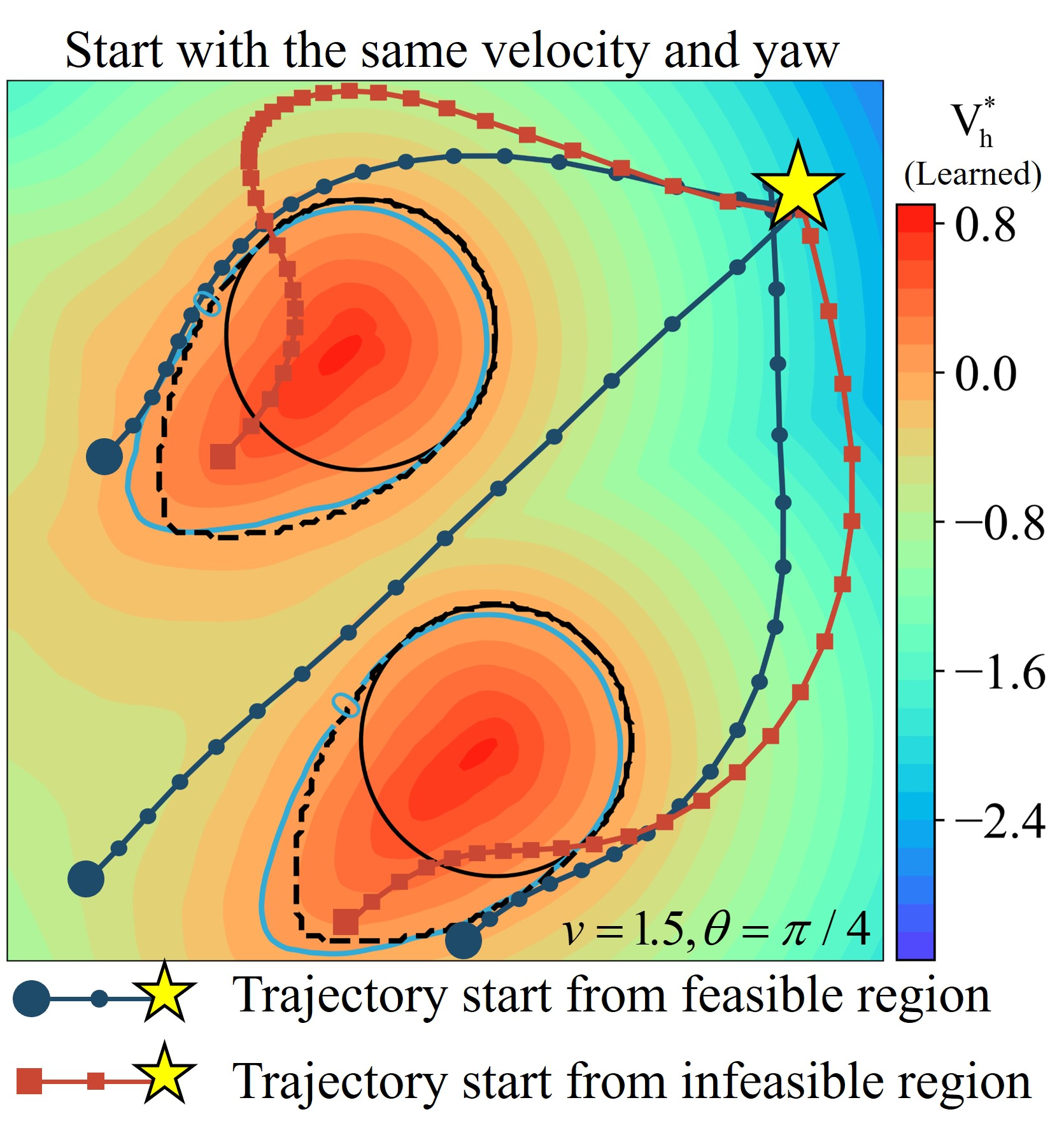

- Replaces soft constraints with Hamilton-Jacobi reachability analysis to explicitly map out 'feasible regions' (states from which the hard constraints can be satisfied at all future times), estimated from the offline dataset

- Decouples the learning objective: maximizes rewards only within feasible regions, while minimizing safety violation risks in infeasible regions

- Extracts the optimal policy with a diffusion model trained via a weighted regression loss, which is mathematically equivalent to energy-guided sampling but avoids training a complex time-dependent classifier
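The feasible-region idea in the first bullet can be illustrated with a tabular, model-based toy. This is a minimal sketch that assumes the discounted reachability Bellman backup from the Hamilton-Jacobi RL literature, Q_h(s,a) = (1-γ)h(s) + γ·max{h(s), V_h(s')} with V_h(s) = min_a Q_h(s,a), where h(s) > 0 marks a violation and a state counts as feasible when V_h(s) ≤ 0. The 6-state road, its dynamics and γ are hypothetical. FISOR itself estimates feasibility from the offline dataset with function approximation rather than from a known model.

```python
import numpy as np

GAMMA = 0.99
N_STATES = 6
ACTIONS = (-1, 0, 1)  # move left, stay, move right

# Constraint function: h(s) > 0 marks a violation (state 5 is the sidewalk).
h = np.array([-1.0, -1.0, -1.0, -1.0, -1.0, 1.0])


def step(s: int, a: int) -> int:
    """Hypothetical deterministic dynamics of a 1-D road."""
    if s >= 4:  # state 4 is a slippery edge, state 5 the absorbing sidewalk
        return 5
    return int(np.clip(s + a, 0, N_STATES - 1))


def feasibility_value(h: np.ndarray, n_iters: int = 2000) -> np.ndarray:
    """Iterate the discounted reachability backup towards its fixed point."""
    V = h.copy()
    for _ in range(n_iters):
        Q = np.array([
            [(1 - GAMMA) * h[s] + GAMMA * max(h[s], V[step(s, a)]) for a in ACTIONS]
            for s in range(N_STATES)
        ])
        V = Q.min(axis=1)  # pick the most safety-preserving action
    return V


V_h = feasibility_value(h)
feasible = V_h <= 0.0
print(feasible.tolist())  # [True, True, True, True, False, False]
```

State 4 has not violated the constraint yet (h(4) < 0), but it is infeasible because every action slides onto the sidewalk; this is the gap between "currently safe" and "feasible" that the reachability analysis captures.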

Architecture

Conceptual illustration of the Feasibility-Guided optimization strategy.
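The decoupled objective and the weighted-regression policy extraction can be sketched together. This is a minimal sketch under loudly stated assumptions: the per-sample inputs (reward advantage A_r, feasibility value V_h(s), feasibility Q-value Q_h(s,a)), the temperatures `alpha_r`/`alpha_h`, the cap `w_max` and the exponentiated-advantage form of the weights are hypothetical, in the style of advantage-weighted regression, and the paper's exact weights may differ. The loss is a standard DDPM-style noise-prediction objective multiplied per sample by those weights, and `zero_eps_model` is a stand-in, not a trained network.

```python
import numpy as np


def feasibility_guided_weights(adv_r, v_h, q_h, alpha_r=3.0, alpha_h=3.0, w_max=100.0):
    """Per-sample regression weights (assumed form, not the paper's exact one).

    Feasible states (v_h <= 0): weight dataset actions by exp(alpha_r * A_r),
    but zero out actions that would leave the feasible region (q_h > 0).
    Infeasible states (v_h > 0): ignore reward and weight by
    exp(-alpha_h * A_h) with A_h = q_h - v_h, favoring safer actions.
    """
    log_cap = np.log(w_max)  # cap exponents so weights never exceed w_max
    w_feasible = np.exp(np.minimum(alpha_r * adv_r, log_cap)) * (q_h <= 0.0)
    w_infeasible = np.exp(np.minimum(-alpha_h * (q_h - v_h), log_cap))
    return np.where(v_h <= 0.0, w_feasible, w_infeasible)


def weighted_denoising_loss(actions, states, weights, eps_model, alpha_bars, rng):
    """Weighted DDPM-style loss: mean_i w_i * ||eps_i - eps_model(a_t, s, t)||^2."""
    n = actions.shape[0]
    t = rng.integers(0, len(alpha_bars), size=n)        # one diffusion step per sample
    eps = rng.standard_normal(actions.shape)             # noise the model must predict
    ab = alpha_bars[t][:, None]
    noisy = np.sqrt(ab) * actions + np.sqrt(1.0 - ab) * eps  # sample a_t ~ q(a_t | a_0)
    per_sample = ((eps - eps_model(noisy, states, t)) ** 2).sum(axis=1)
    return float(np.mean(weights * per_sample))


def zero_eps_model(noisy_actions, states, t):
    """Stand-in for a trained state-conditioned noise-prediction network."""
    return np.zeros_like(noisy_actions)


# Toy batch of 4 transitions; every number below is hypothetical.
rng = np.random.default_rng(0)
states = rng.standard_normal((4, 2))
actions = rng.uniform(-1.0, 1.0, size=(4, 2))
adv_r = np.array([0.5, -0.2, 1.0, 0.3])   # reward advantages
v_h = np.array([-0.5, -0.5, 0.4, 0.4])    # feasibility values V_h(s)
q_h = np.array([-0.6, 0.1, 0.4, 0.9])     # feasibility Q-values Q_h(s, a)
alpha_bars = np.cumprod(1.0 - np.linspace(1e-4, 0.02, 50))

w = feasibility_guided_weights(adv_r, v_h, q_h)
print([round(float(x), 3) for x in w])  # [4.482, 0.0, 1.0, 0.223]
loss = weighted_denoising_loss(actions, states, w, zero_eps_model, alpha_bars, rng)
```

Because the weights only rescale the ordinary denoising loss, the diffusion model is fitted to a reweighted version of the dataset's action distribution (proportional to μ(a|s)·w(s,a) per state), which is why no separate guidance classifier has to be trained.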

Evaluation Highlights

- Guarantees safety satisfaction (zero constraint violations) in all evaluated tasks on the DSRL benchmark

- Achieves top returns in most tasks compared to baselines like CPQ and RCRL

- Demonstrates versatility by outperforming baselines in safe offline imitation learning contexts

Breakthrough Assessment

8/10

Addresses a critical flaw in safe offline RL (reliance on soft rather than hard constraints) with a theoretically grounded reachability approach. Decoupling the objectives and using diffusion for policy extraction are significant methodological advances.