📊 Experiments & Results

Evaluation Setup

Continuous control tasks in simulation environments

Benchmarks:

- DeepMind Control Suite (DMC): locomotion (e.g., Humanoid, Dog, Acrobot)

- MetaWorld (MW): robotic manipulation (e.g., Hammer, Push, Sweep)

Metrics:

- Mean Return (Performance)

- Critic Approximation Error (Overestimation proxy)

- Ratio of validation to training TD error (Overfitting proxy)

- Rank of penultimate layer representations (Plasticity proxy)

- Statistical methodology: 10 seeds per configuration; results are marginalized at first, second, and third order to test robustness
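Two of the proxies above can be sketched in a few lines of NumPy. This is a rough illustration only: the summary does not state how rank is thresholded or how TD errors are aggregated, so the relative singular-value threshold `tol`, the use of mean squared TD error, and the function names `effective_rank` and `td_error_ratio` are all assumptions.

```python
import numpy as np


def effective_rank(features: np.ndarray, tol: float = 0.01) -> int:
    """Count singular values larger than tol times the largest one.

    `features` is a (batch, hidden_dim) matrix of penultimate-layer
    activations. The thresholding rule is an assumption, not necessarily
    the definition used in the source.
    """
    singular_values = np.linalg.svd(features, compute_uv=False)
    if singular_values[0] == 0.0:
        return 0
    return int(np.sum(singular_values > tol * singular_values[0]))


def td_error_ratio(train_td: np.ndarray, valid_td: np.ndarray) -> float:
    """Ratio of mean squared TD error on held-out vs. training transitions.

    Values well above 1 suggest the critic fits the replay data better
    than unseen transitions (assumed reading of the overfitting proxy).
    """
    return float(np.mean(valid_td ** 2) / np.mean(train_td ** 2))
```

A low `effective_rank` relative to the hidden width would indicate collapsed representations, which is why it can serve as a plasticity proxy.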

Experiment Figures

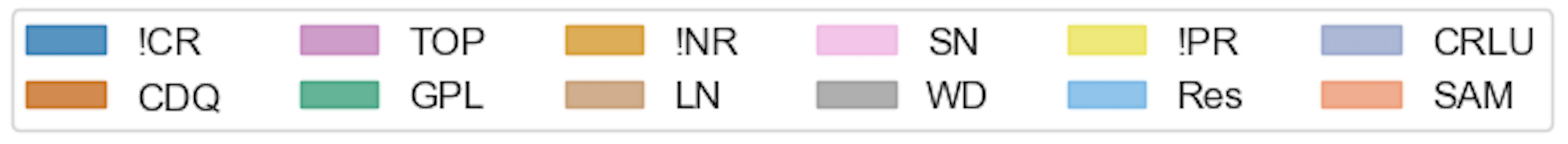

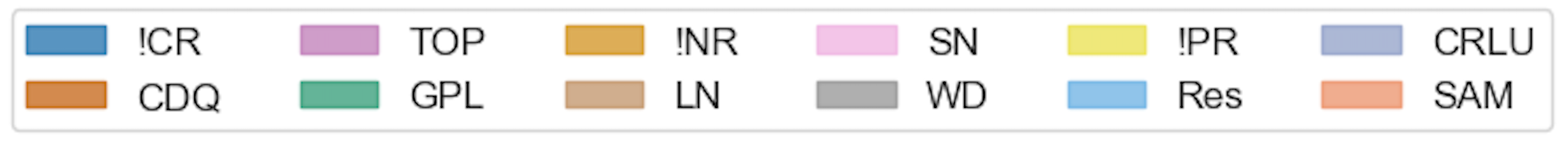

First-order marginalization plots showing the robustness of individual interventions (critic regularization, CR; network regularization, NR; plasticity regularization, PR) across DMC and MetaWorld tasks.

Second-order marginalization heatmaps showing the performance of pairs of interventions across Replay Ratios (RR=2, RR=16).
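One plausible reading of the marginalization behind these plots is to average the score over every tested configuration that contains a given intervention (first order) or a given pair of interventions (second order). The sketch below follows that reading; the exact procedure is not spelled out here, and the `marginalize` function and its `results` layout are hypothetical.

```python
from itertools import combinations
from statistics import mean


def marginalize(results: dict, order: int) -> dict:
    """Average the score over all configurations containing each subset
    of `order` interventions.

    `results` maps a frozenset of intervention names (one configuration)
    to a scalar score, e.g. mean return already averaged over seeds.
    Returns a dict keyed by sorted tuples of intervention names.
    """
    interventions = sorted(set().union(*results.keys()))
    marginals = {}
    for subset in combinations(interventions, order):
        scores = [
            score
            for config, score in results.items()
            if set(subset) <= config
        ]
        if scores:  # skip subsets that never occur together
            marginals[subset] = mean(scores)
    return marginals
```

Calling `marginalize(results, 1)` gives one value per intervention, and `marginalize(results, 2)` gives one value per pair, which is the quantity a pairwise heatmap would display under this reading.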

Main Takeaways

- Network Regularization (Layer Norm, Spectral Norm) and Plasticity Regularization (Resets) are significantly more robust than Critic Regularization (CDQ, GPL, TOP) across benchmarks.

- A 'Bitter Lesson' finding: Generic neural network regularizers outperform specialized RL algorithmic fixes for stability issues like overestimation.

- Combining Layer Norm with Resets enables model-free SAC to solve difficult 'Dog' domain tasks in DMC, matching capabilities previously reserved for model-based methods.

- Performance is highly environment-dependent: Layer Norm dominates in DMC (Locomotion) but Spectral Norm is more robust in MetaWorld (Manipulation).

- Counter-intuitive interaction: When using strong network regularization (LN/SN), adding standard critic regularization (like Clipped Double Q-learning) often *degrades* performance rather than helping.
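To make the Layer Norm plus Resets combination concrete, here is a minimal NumPy sketch of a critic with layer normalization on its hidden layer and a periodic full re-initialization. It is an illustration under assumptions, not the evaluated implementation: the single hidden layer, the omission of learnable scale and shift parameters, the fixed `reset_interval`, and the choice to reset every parameter are all hypothetical.

```python
import numpy as np


def layer_norm(x: np.ndarray, eps: float = 1e-5) -> np.ndarray:
    """Normalize each row to zero mean and unit variance (no learned scale/shift)."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)


def init_critic(rng: np.random.Generator, in_dim: int, hidden_dim: int) -> dict:
    """Create fresh critic parameters for a one-hidden-layer Q-network."""
    return {
        "w1": rng.normal(0.0, 1.0 / np.sqrt(in_dim), size=(in_dim, hidden_dim)),
        "b1": np.zeros(hidden_dim),
        "w2": rng.normal(0.0, 1.0 / np.sqrt(hidden_dim), size=(hidden_dim, 1)),
        "b2": np.zeros(1),
    }


def critic_forward(params: dict, obs_action: np.ndarray) -> np.ndarray:
    """Q(s, a) with layer norm applied before the ReLU nonlinearity."""
    hidden = obs_action @ params["w1"] + params["b1"]
    hidden = np.maximum(layer_norm(hidden), 0.0)
    return (hidden @ params["w2"] + params["b2"]).squeeze(-1)


def maybe_reset(params: dict, step: int, reset_interval: int,
                rng: np.random.Generator) -> dict:
    """Re-initialize all parameters every `reset_interval` steps (assumed schedule)."""
    if step > 0 and step % reset_interval == 0:
        in_dim, hidden_dim = params["w1"].shape
        return init_critic(rng, in_dim, hidden_dim)
    return params
```

In a training loop, `maybe_reset` would be called once per update step while the replay buffer is kept, so the re-initialized critic relearns from already collected data.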