📝 Paper Summary

Reinforcement Learning

Continual Learning

Optimization Dynamics

The paper proposes Normalize-and-Project (NaP), a method that decouples effective learning rates from parameter growth to prevent plasticity loss in non-stationary reinforcement learning.

Core Problem

In deep networks with normalization, a normalized layer's output is invariant to the scale of its incoming weights, so growth in the parameter norm implicitly decays the effective learning rate. In non-stationary settings such as continual RL, this decay happens too quickly and prevents the agent from learning new tasks (loss of plasticity).
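A quick numerical check of this mechanism, written as a minimal NumPy sketch. The toy loss and the helper `direction_loss_grad` are hypothetical stand-ins, not from the paper: the loss depends only on the direction of `w`, as a layer followed by normalization does. The check shows that scaling the weights by c scales the gradient by 1/c, and that the gradient is orthogonal to `w`, so plain SGD can only grow the norm. Because the direction w/||w|| then moves by roughly η/||w||² times the gradient with respect to that direction, η/||w||² acts as the effective learning rate and shrinks as the norm grows.

```python
import numpy as np


def direction_loss_grad(w, t):
    """Gradient of the hypothetical toy loss L(w) = 0.5 * ||w/||w|| - t||^2.

    L depends only on the direction of w, mimicking a layer whose output is
    normalized.
    """
    r = np.linalg.norm(w)
    u = w / r
    dl_du = u - t
    # Chain rule through u = w / ||w||: du/dw = (I - u u^T) / ||w||.
    return (dl_du - u * (u @ dl_du)) / r


rng = np.random.default_rng(0)
w = rng.normal(size=8)
t = rng.normal(size=8)
t /= np.linalg.norm(t)  # unit-norm target direction

g = direction_loss_grad(w, t)
g_scaled = direction_loss_grad(3.0 * w, t)

# Scaling the weights by c = 3 scales the gradient by 1/3.
assert np.allclose(g_scaled, g / 3.0)

# The gradient is orthogonal to w ...
assert np.isclose(w @ g, 0.0)

# ... so a plain SGD step can only increase the norm:
# ||w - eta*g||^2 = ||w||^2 + eta^2 * ||g||^2.
eta = 0.1
w_next = w - eta * g
assert np.isclose(np.linalg.norm(w_next) ** 2,
                  np.linalg.norm(w) ** 2 + eta ** 2 * np.linalg.norm(g) ** 2)
```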

Why it matters:

- Deep RL agents trained for long periods often lose the ability to learn from new data, a phenomenon known as loss of plasticity.

- Existing solutions like resetting units or regularization often fail to address the root cause: the coupling between weight magnitude and optimization step size in scale-invariant networks.

- Implicit learning rate schedules explain why weight decay can be harmful in value-based RL: it interferes with the necessary annealing of the effective learning rate.
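A back-of-the-envelope derivation (a simplified illustration under stated assumptions, not the paper's own analysis) shows why weight decay removes this annealing. Assume SGD with learning rate η and weight decay λ on a scale-invariant layer, so the update is w_{t+1} = (1 - ηλ) w_t - η g_t, with g_t orthogonal to w_t and ||g_t|| = G / ||w_t||. Here G, the part of the gradient with respect to the direction u = w/||w|| that is orthogonal to u, is assumed to stay roughly constant:

```latex
\begin{aligned}
\|w_{t+1}\|^2 &= (1-\eta\lambda)^2 \|w_t\|^2 + \eta^2 \frac{G^2}{\|w_t\|^2} \\
\text{steady state:}\quad \|w^\ast\|^4 &= \frac{\eta^2 G^2}{1-(1-\eta\lambda)^2}
  = \frac{\eta G^2}{2\lambda - \eta\lambda^2} \approx \frac{\eta G^2}{2\lambda} \\
\eta_{\mathrm{eff}} = \frac{\eta}{\|w^\ast\|^2} &\approx \frac{\sqrt{2\eta\lambda}}{G}
\end{aligned}
```

Under these assumptions, weight decay pins the effective learning rate near a level set by η and λ instead of letting it keep falling, which is consistent with the claim that it interferes with annealing of the effective learning rate.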

Concrete Example:

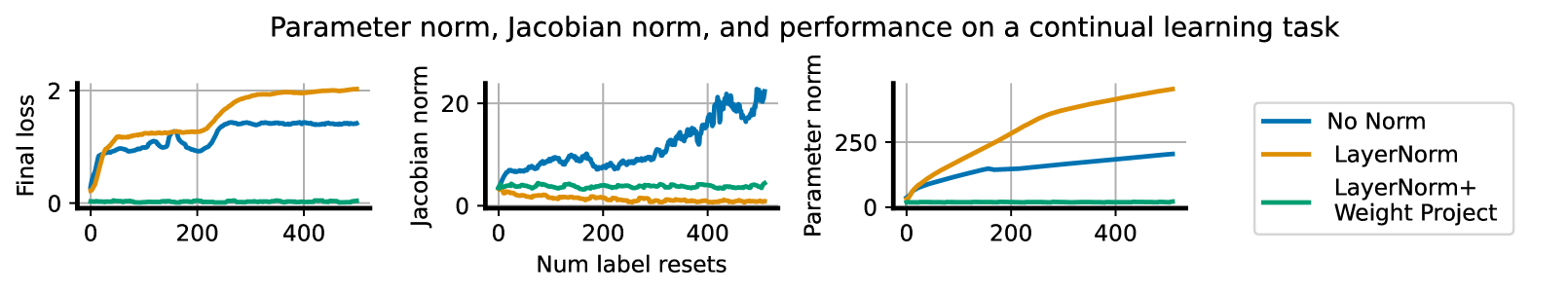

A Convolutional Neural Network trained on CIFAR-10 with cyclically re-randomized labels eventually stops learning new labels because its parameter norm grows so large that the effective learning rate drops to near zero, freezing the weights.
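The following toy analog is a hypothetical sketch and does not reproduce the paper's CNN experiment. It re-draws a random target at the start of every task, mimicking label re-randomization, and trains a loss that depends only on the direction of `w` with plain SGD. The task count, steps per task, and learning rate are arbitrary choices. The weight norm grows from task to task, so the effective learning rate η/||w||² decays monotonically.

```python
import numpy as np


def direction_loss_grad(w, t):
    """Gradient of the hypothetical direction-only loss 0.5 * ||w/||w|| - t||^2."""
    r = np.linalg.norm(w)
    u = w / r
    dl_du = u - t
    return (dl_du - u * (u @ dl_du)) / r


def run_rerandomized_tasks(num_tasks=20, steps_per_task=50, eta=0.5, dim=8, seed=0):
    """Train on a target re-drawn at random at the start of every task.

    Returns the weight norm and the effective learning rate eta / ||w||^2
    measured at the end of each task.
    """
    rng = np.random.default_rng(seed)
    w = rng.normal(size=dim)
    norms, eff_lrs = [], []
    for _ in range(num_tasks):
        t = rng.normal(size=dim)
        t /= np.linalg.norm(t)  # new random "labels" for this task
        for _ in range(steps_per_task):
            w = w - eta * direction_loss_grad(w, t)  # plain SGD, no weight decay
        norms.append(np.linalg.norm(w))
        eff_lrs.append(eta / np.linalg.norm(w) ** 2)
    return np.array(norms), np.array(eff_lrs)


norms, eff_lrs = run_rerandomized_tasks()
# Plain SGD never shrinks ||w|| here, so the effective learning rate only decays.
assert np.all(np.diff(norms) >= 0)
assert np.all(np.diff(eff_lrs) <= 0)
print(f"effective LR: task 1 = {eff_lrs[0]:.4f}, task 20 = {eff_lrs[-1]:.4f}")
```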

Key Novelty

Normalize-and-Project (NaP)

- Combines layer normalization (to control feature statistics) with periodic weight projection (to control gradient scaling).

- By forcing weights to stay on a fixed-norm sphere, NaP prevents the effective learning rate from decaying uncontrollably due to parameter growth, making the optimization schedule explicit.
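A minimal NumPy sketch of the two ingredients follows. It assumes the projection rescales each weight matrix to its Frobenius norm at initialization. The helper names `layer_norm` and `project_to_norm` are hypothetical, the layer norm omits learned gain and bias, and the paper's handling of those parameters and of the projection frequency is not modeled.

```python
import numpy as np


def layer_norm(x, eps=1e-5):
    """Normalize each row of x to zero mean and unit variance (no learned gain/bias)."""
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)


def project_to_norm(w, target_norm):
    """Rescale w so that its Frobenius norm equals target_norm (direction unchanged)."""
    return w * (target_norm / np.linalg.norm(w))


rng = np.random.default_rng(0)
x = rng.normal(size=(4, 16))         # batch of inputs
w = rng.normal(size=(16, 32)) / 4.0  # weights feeding a layer-normed output
init_norm = np.linalg.norm(w)

# "Normalize": the layer output is (up to eps) invariant to rescaling w,
# which is why gradient steps shrink as ||w|| grows.
assert np.allclose(layer_norm(x @ w), layer_norm(x @ (5.0 * w)), atol=1e-4)

# "Project": after updates inflate the norm, snap it back to the
# initialization norm so that eta / ||w||^2 stays fixed.
w_grown = 5.0 * w
w_proj = project_to_norm(w_grown, init_norm)
assert np.isclose(np.linalg.norm(w_proj), init_norm)
assert np.allclose(w_proj, w)  # direction unchanged, scale restored
```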

Architecture

Conceptual workflow of the Normalize-and-Project (NaP) method.
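As a rough sketch, the workflow reduces to: take an optimizer step, then project the weights back to their initial norm. The loop below runs it on a hypothetical direction-only toy problem whose target is re-drawn every task, and compares it with plain SGD. Projecting after every step is an assumption made for simplicity (the summary describes the projection as periodic), and `nap_style_training` is an illustrative name, not the paper's code.

```python
import numpy as np


def direction_loss_grad(w, t):
    """Gradient of the hypothetical direction-only loss 0.5 * ||w/||w|| - t||^2."""
    r = np.linalg.norm(w)
    u = w / r
    dl_du = u - t
    return (dl_du - u * (u @ dl_du)) / r


def nap_style_training(num_tasks=20, steps_per_task=50, eta=0.5, dim=8,
                       project=True, seed=0):
    """Toy NaP-style loop: SGD step, then (optionally) project the weights
    back to their initial norm. Returns eta / ||w||^2 after every step."""
    rng = np.random.default_rng(seed)
    w = rng.normal(size=dim)
    init_norm = np.linalg.norm(w)
    eff_lrs = []
    for _ in range(num_tasks):
        t = rng.normal(size=dim)
        t /= np.linalg.norm(t)  # new task: re-drawn target
        for _ in range(steps_per_task):
            w = w - eta * direction_loss_grad(w, t)      # optimizer step
            if project:
                w = w * (init_norm / np.linalg.norm(w))  # projection step
            eff_lrs.append(eta / np.linalg.norm(w) ** 2)
    return np.array(eff_lrs), init_norm


eff_nap, r0 = nap_style_training(project=True)
eff_plain, _ = nap_style_training(project=False)
# With projection the effective LR is pinned at eta / r0^2 ...
assert np.allclose(eff_nap, 0.5 / r0 ** 2)
# ... while without it the effective LR keeps falling.
assert eff_plain[-1] < eff_plain[0]
```

Because the norm is held fixed, any decay of the effective learning rate now has to come from an explicit schedule on η, which is the sense in which the optimization schedule becomes explicit.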

Evaluation Highlights

- Maintains trainability over 500 consecutive label re-randomization tasks on CIFAR-10, whereas standard networks suffer exploding Jacobian norms and performance collapse.

- Outperforms a Rainbow agent baseline with freshly initialized parameters after 400M training frames (100M optimizer steps) on the Sequential Arcade Learning Environment.

- Successfully trains 400M-parameter transformer models on C4 and vision models on ImageNet, matching or slightly improving on the performance of unmodified baselines in stationary settings.

Breakthrough Assessment

8/10

Provides a fundamental theoretical explanation for plasticity loss in normalized networks and offers a simple architectural solution that works across diverse domains (RL, vision, language).