📝 Paper Summary

Sim-to-Real Reinforcement Learning

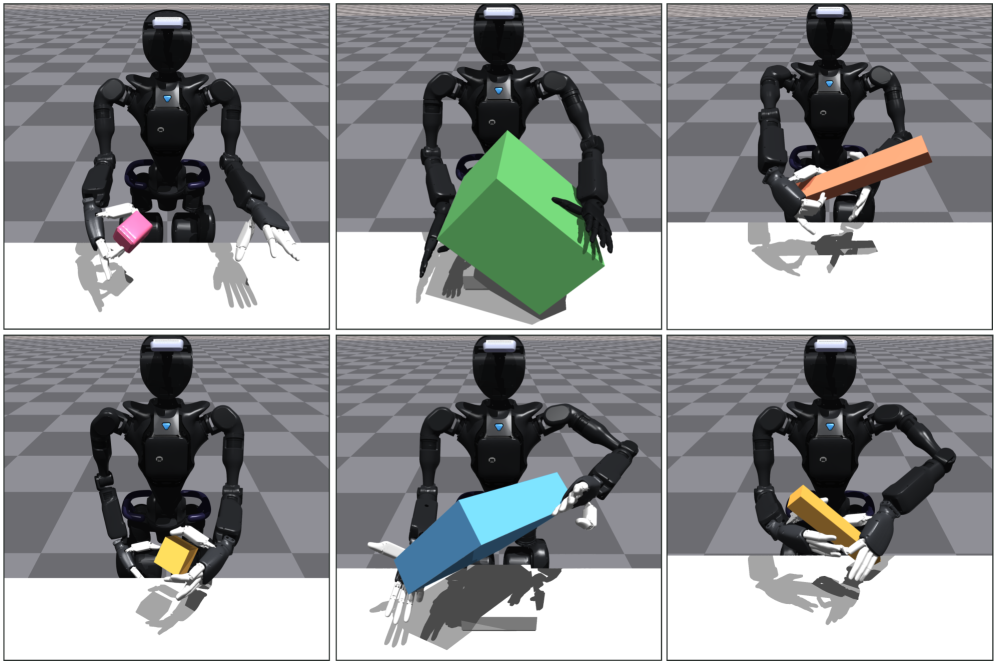

Dexterous Manipulation

Humanoid Robotics

A practical sim-to-real RL recipe enables humanoids to learn complex bimanual dexterous skills by combining automated physics tuning, contact-based rewards, and hybrid visual representations.

Core Problem

Learning generalizable bimanual manipulation on humanoids is difficult: existing methods either rely on expensive human data or fail to transfer contact-rich policies from simulation, because the simulator does not capture the inaccuracies of the real hardware.

Why it matters:

- Humanoid robots typically use low-cost, noisy motors, which makes precise sim-to-real transfer of dexterous skills significantly harder than on industrial-grade arms

- Standard RL exploration fails in high-dimensional bimanual spaces, while imitation learning scales poorly due to the high cost of collecting teleoperated demonstrations

- Prior sim-to-real success is largely limited to single-hand or state-based tasks, leaving vision-based bimanual manipulation an open challenge

Concrete Example:

In a 'bimanual handover' task, a robot must pass an object from one hand to the other. Without the proposed contact-based rewards and automated tuning, standard RL policies fail to coordinate the precise timing and forces needed, so in the real world the object is dropped or the hands miss each other entirely.

Key Novelty

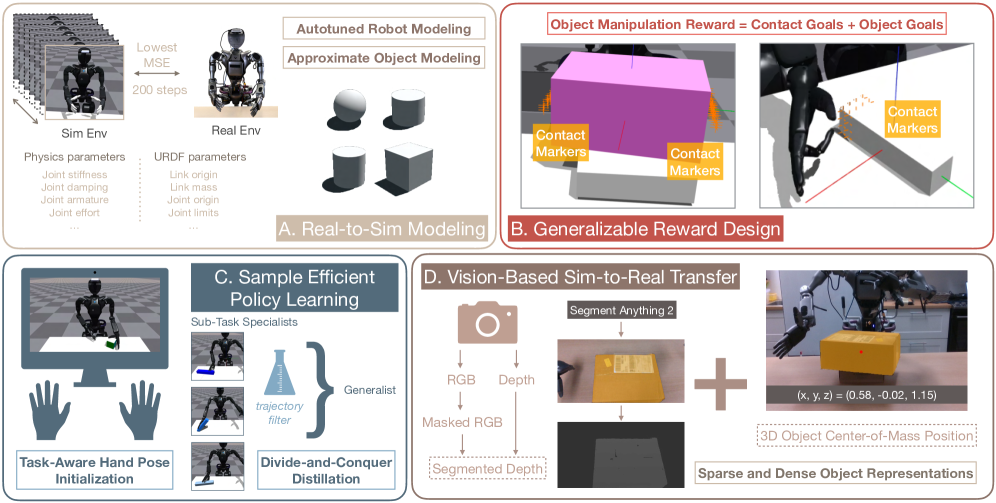

Integrated Sim-to-Real Recipe for Humanoids

- Automated Real-to-Sim Tuning: Uses a single real-world trajectory to auto-optimize simulator physics parameters (friction, damping) in parallel, matching real motor behavior in under 4 minutes

- Contact-Goal Rewards: Decomposes tasks into 'touch this point' goals using virtual 'contact stickers' on objects, guiding exploration for complex bimanual coordination without expert demonstrations

- Hybrid Object Representation: Combines robust 3D tracking (sparse) with segmented depth images (dense) to balance precise geometry perception with sim-to-real visual robustness
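The Automated Real-to-Sim Tuning idea from the first item above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration, not the paper's implementation: the toy 1-DOF joint model `simulate_joint`, the parameter ranges, and the random search in `autotune` are hypothetical stand-ins, and the "real" trajectory is synthesized from the same toy model. The paper evaluates candidates in parallel simulated environments; the loop here is sequential for brevity.

```python
import random

def simulate_joint(targets, damping, friction, stiffness=40.0, dt=0.02):
    """Roll out a toy PD-driven joint (unit inertia) and return its positions."""
    pos, vel, positions = 0.0, 0.0, []
    for target in targets:
        # PD torque toward the commanded target, opposed by viscous damping.
        torque = stiffness * (target - pos) - damping * vel
        # Coulomb-like friction opposes the current direction of motion.
        if vel > 0:
            torque -= friction
        elif vel < 0:
            torque += friction
        vel += torque * dt
        pos += vel * dt
        positions.append(pos)
    return positions

def tracking_error(sim_positions, real_positions):
    """Mean squared error between simulated and recorded joint positions."""
    return sum((s - r) ** 2 for s, r in zip(sim_positions, real_positions)) / len(real_positions)

def autotune(targets, real_positions, n_candidates=500, seed=0):
    """Random search: keep the parameter set whose rollout best matches the recording."""
    rng = random.Random(seed)
    best_params, best_err = None, float("inf")
    for _ in range(n_candidates):
        params = {"damping": rng.uniform(0.0, 10.0), "friction": rng.uniform(0.0, 2.0)}
        err = tracking_error(simulate_joint(targets, **params), real_positions)
        if err < best_err:
            best_params, best_err = params, err
    return best_params, best_err

# Stand-in for the single real calibration trajectory (hypothetical data):
# a square-wave command replayed through the toy model with "true" parameters.
targets = [0.5 if (i // 50) % 2 == 0 else -0.5 for i in range(200)]
real_positions = simulate_joint(targets, damping=4.0, friction=0.6)

best_params, best_err = autotune(targets, real_positions)
untuned_err = tracking_error(simulate_joint(targets, damping=0.0, friction=0.0), real_positions)
print(best_params, best_err, untuned_err)
```

The design point the sketch tries to capture is that only one recorded command/response pair is needed: every candidate parameter set is scored by replaying the same commands in simulation and comparing the resulting trajectory with the recording.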

Architecture

Overview of the Sim-to-Real RL Recipe, including the Autotune module, Contact-Rich Reward design, and Real-World Deployment pipeline.

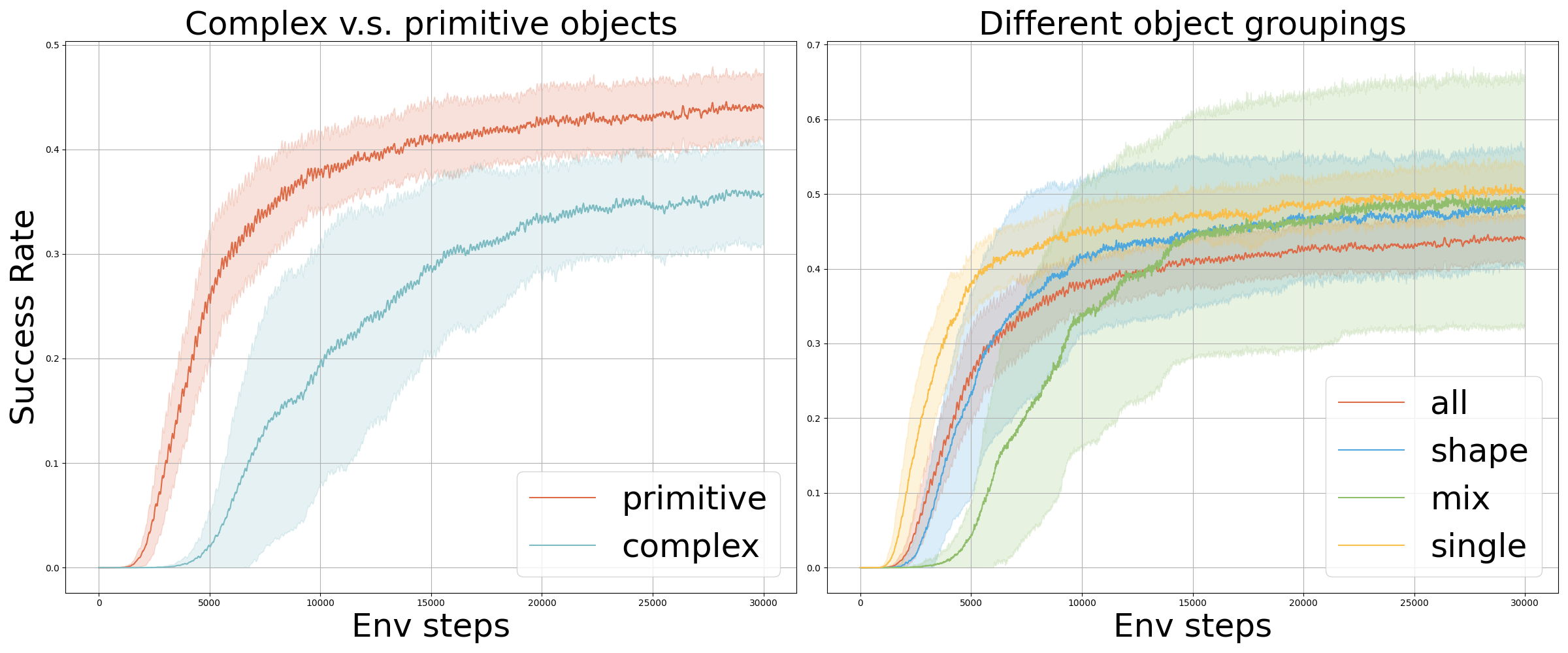
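The contact-goal reward component can be sketched as follows. This is a hedged illustration only: the summary does not give the paper's reward formula, so the exponential distance shaping, the `scale` value, and the function name `contact_goal_reward` are assumptions. The "contact stickers" are modeled as 3D points attached to the object, and the reward grows as fingertips approach them.

```python
import math

def contact_goal_reward(fingertips, stickers, scale=10.0):
    """Mean over stickers of exp(-scale * distance to the nearest fingertip).

    fingertips: list of (x, y, z) fingertip positions (assumed available from the simulator)
    stickers:   list of (x, y, z) virtual contact markers placed on the object
    """
    total = 0.0
    for sticker in stickers:
        # Each sticker is satisfied by whichever fingertip is currently closest.
        nearest = min(math.dist(sticker, tip) for tip in fingertips)
        total += math.exp(-scale * nearest)
    return total / len(stickers)

# Hypothetical example: two stickers on opposite faces of a box.
stickers = [(0.0, 0.1, 0.0), (0.0, -0.1, 0.0)]
far_hands = [(0.3, 0.4, 0.2), (0.3, -0.4, 0.2)]
near_hands = [(0.0, 0.12, 0.0), (0.0, -0.12, 0.0)]
touching = [(0.0, 0.1, 0.0), (0.0, -0.1, 0.0)]

print(contact_goal_reward(far_hands, stickers))
print(contact_goal_reward(near_hands, stickers))
print(contact_goal_reward(touching, stickers))
```

A dense signal of this kind rewards partial progress toward the desired contacts, which is one plausible way such goals can guide exploration without demonstrations.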

Evaluation Highlights

- Achieves a 90% success rate on seen objects and 60-80% on novel objects across three tasks (grasp-and-reach, box lift, handover)

- The automated system identification module tunes simulator parameters in under 4 minutes using only a single real-world calibration trajectory

- Hybrid object representation (depth + 3D position) improves sim-to-real success on novel objects by 80-100% compared to using depth or pose alone
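To make the hybrid object representation concrete, here is a minimal sketch of how a segmented depth image (dense) and a tracked 3D position (sparse) might be combined into one observation. How the paper actually fuses the two inputs is not stated in this summary; the flat concatenation, the `background` fill value, and the name `hybrid_observation` are assumptions, and a real policy would more likely pass the depth image through a learned encoder first.

```python
def hybrid_observation(depth, mask, object_position, background=0.0):
    """Concatenate a segmented depth image with a tracked 3D object position.

    depth:           2D list of depth values in meters
    mask:            2D list of 0/1 flags marking pixels that belong to the object
    object_position: (x, y, z) estimate from a 3D tracker
    """
    # Keep depth only on object pixels; everything else becomes background.
    segmented = [
        d if keep else background
        for depth_row, mask_row in zip(depth, mask)
        for d, keep in zip(depth_row, mask_row)
    ]
    # Dense geometry cue followed by the sparse, robust position cue.
    return segmented + list(object_position)

# Hypothetical 2x2 depth image where only the bottom row shows the object.
depth = [[0.8, 0.9], [0.5, 0.6]]
mask = [[0, 0], [1, 1]]
obs = hybrid_observation(depth, mask, (0.1, -0.2, 0.5))
print(obs)  # → [0.0, 0.0, 0.5, 0.6, 0.1, -0.2, 0.5]
```

Masking out the background removes scene content that tends to differ between simulation and reality, while the position entries give the policy a coarse but reliable anchor when the depth input is noisy.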

Breakthrough Assessment

8/10

Demonstrates a robust, working recipe for a notoriously difficult problem: vision-based bimanual dexterity on noisy hardware. The combination of automated system identification and contact-based rewards is a strong practical contribution.