📊 Experiments & Results

Evaluation Setup

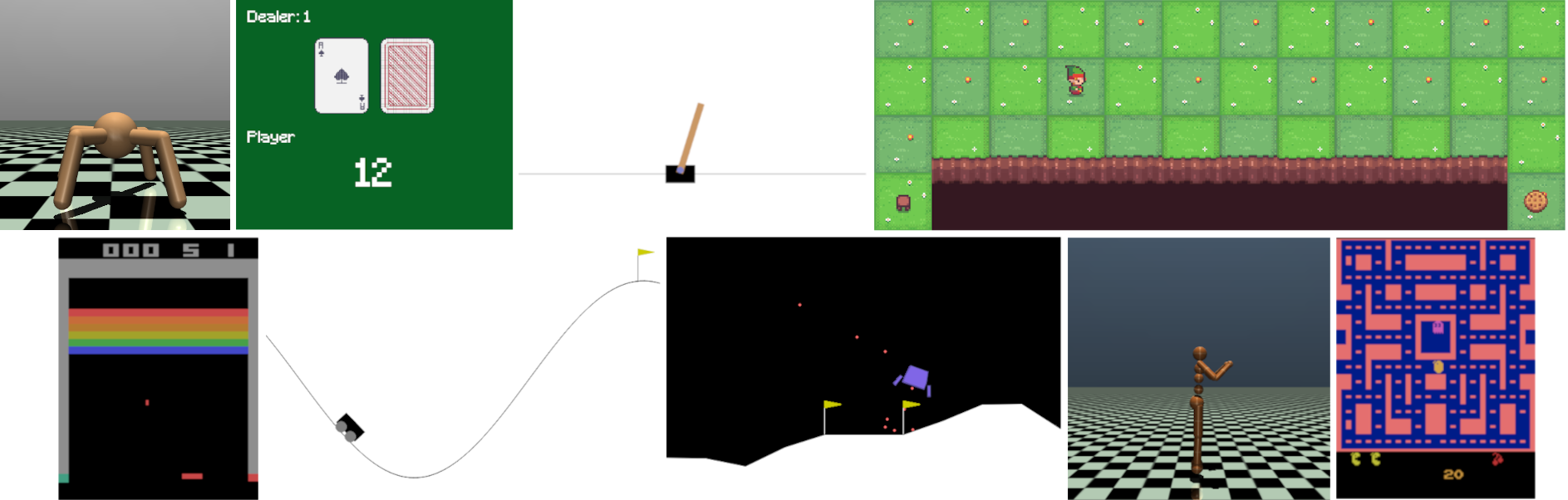

Standardized environments for benchmarking RL algorithms

Benchmarks:

- Classic Control: simple physics control (CartPole, Pendulum)

- Box2D: continuous control with contact physics (Lunar Lander, Bipedal Walker)

- MuJoCo: complex continuous robotics control

- Toy Text: discrete tabular MDPs (Frozen Lake, Taxi)

Metrics:

- Software Adoption (Downloads)

- Community Engagement (PRs, Issues)

- Compatibility (Supported Libraries)

- Statistical methodology: Not explicitly reported in the paper

Experiment Figures

Visual collage of supported environments (CartPole, Lunar Lander, MuJoCo Ant, Atari games)

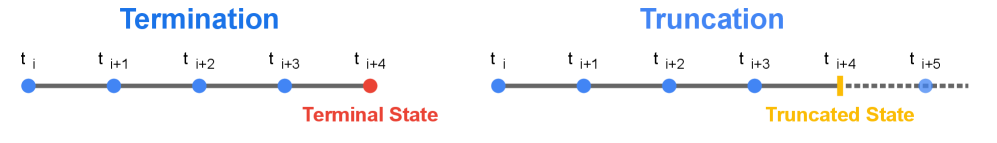

Visual explanation of Termination vs. Truncation
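
The distinction matters most when computing bootstrapped value targets. The following is a minimal sketch, not code from the paper: `td_target`, `episode_over`, `gamma`, and the numeric values are illustrative assumptions. It relies only on the fact that Gymnasium's `step` reports `terminated` and `truncated` as separate flags.

```python
def td_target(reward, next_value, terminated, gamma=0.99):
    """One-step TD target that bootstraps unless the episode truly terminated.

    A truncated episode (for example, one cut off by a time limit) still has a
    meaningful next state, so its value estimate is kept. A terminated episode
    has no future, so the bootstrap term is dropped.
    """
    bootstrap = 0.0 if terminated else next_value
    return reward + gamma * bootstrap


def episode_over(terminated, truncated):
    """Either flag ends the rollout; only `terminated` affects the target."""
    return terminated or truncated


# Hypothetical transition values, chosen only for illustration.
reward, next_value = 1.0, 10.0

target_terminated = td_target(reward, next_value, terminated=True)
target_truncated = td_target(reward, next_value, terminated=False)
```

Collapsing both flags into a single `done` signal would force the truncated case to use the terminated target, which underestimates the value of states near a time limit.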

Main Takeaways

- Gymnasium has replaced OpenAI Gym as the de facto standard, as evidenced by 18M+ downloads and widespread library support (SB3, CleanRL, Ray RLlib).

- The introduction of `FuncEnv` bridges the gap between traditional OOP environments and modern hardware-accelerated RL research (JAX).

- Rigorous versioning and API checkers ensure that environments remain reproducible testbeds, addressing a major crisis in RL research reliability.
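
To make the functional style behind `FuncEnv` concrete, here is a minimal plain-Python sketch of an environment written as stateless functions. It does not import Gymnasium and is not the library's class: the function names are chosen to resemble that style and may not match the library's exact signatures, and `State`, `GOAL`, and `rollout` are hypothetical names for a toy corridor task.

```python
import random
from typing import NamedTuple


class State(NamedTuple):
    """Immutable environment state, passed explicitly between functions."""
    position: int
    steps: int


GOAL = 3  # hypothetical goal position for this toy corridor


def initial(rng: random.Random) -> State:
    """Sample a start state; all randomness comes from the explicit rng."""
    return State(position=rng.choice([0, 1]), steps=0)


def transition(state: State, action: int) -> State:
    """Pure state update: action 1 moves right, any other action moves left."""
    delta = 1 if action == 1 else -1
    return State(position=max(0, state.position + delta), steps=state.steps + 1)


def observation(state: State) -> int:
    """The agent observes only its position."""
    return state.position


def reward(state: State, action: int, next_state: State) -> float:
    """Reward 1.0 for arriving at the goal, 0.0 otherwise."""
    return 1.0 if next_state.position == GOAL else 0.0


def terminal(state: State) -> bool:
    """The episode terminates once the goal is reached."""
    return state.position == GOAL


def rollout(seed: int, max_steps: int = 10) -> float:
    """Always move right and return the total reward; nothing is mutated."""
    state = initial(random.Random(seed))
    total = 0.0
    while not terminal(state) and state.steps < max_steps:
        next_state = transition(state, 1)
        total += reward(state, 1, next_state)
        state = next_state
    return total
```

Because every function takes the state as an argument and returns a new value, the same structure can in principle be traced and vectorized by an array framework such as JAX, which is the gap the takeaway above refers to.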