📝 Paper Summary

Data Selection for Fine-tuning

Efficient Training of LLMs

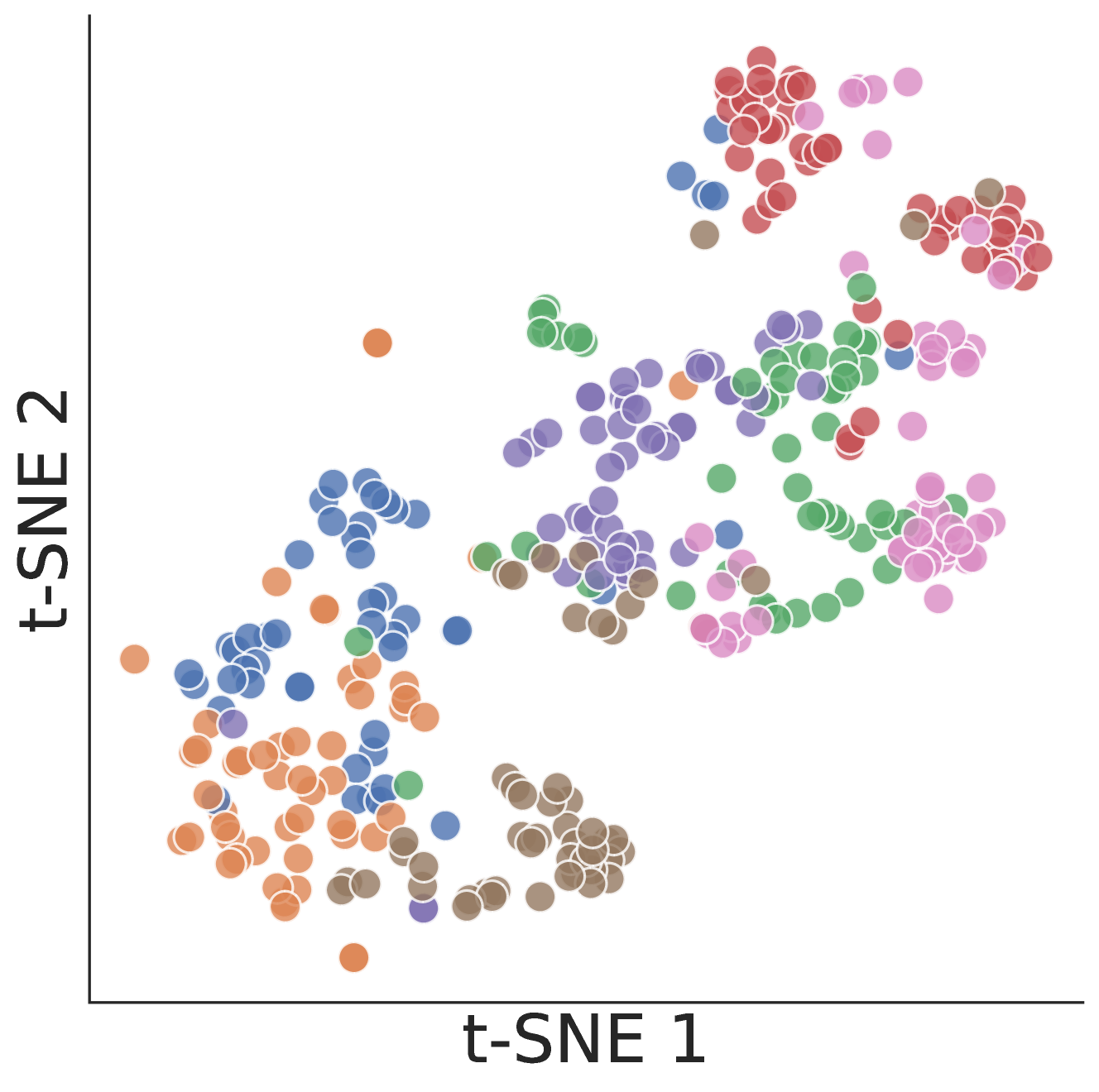

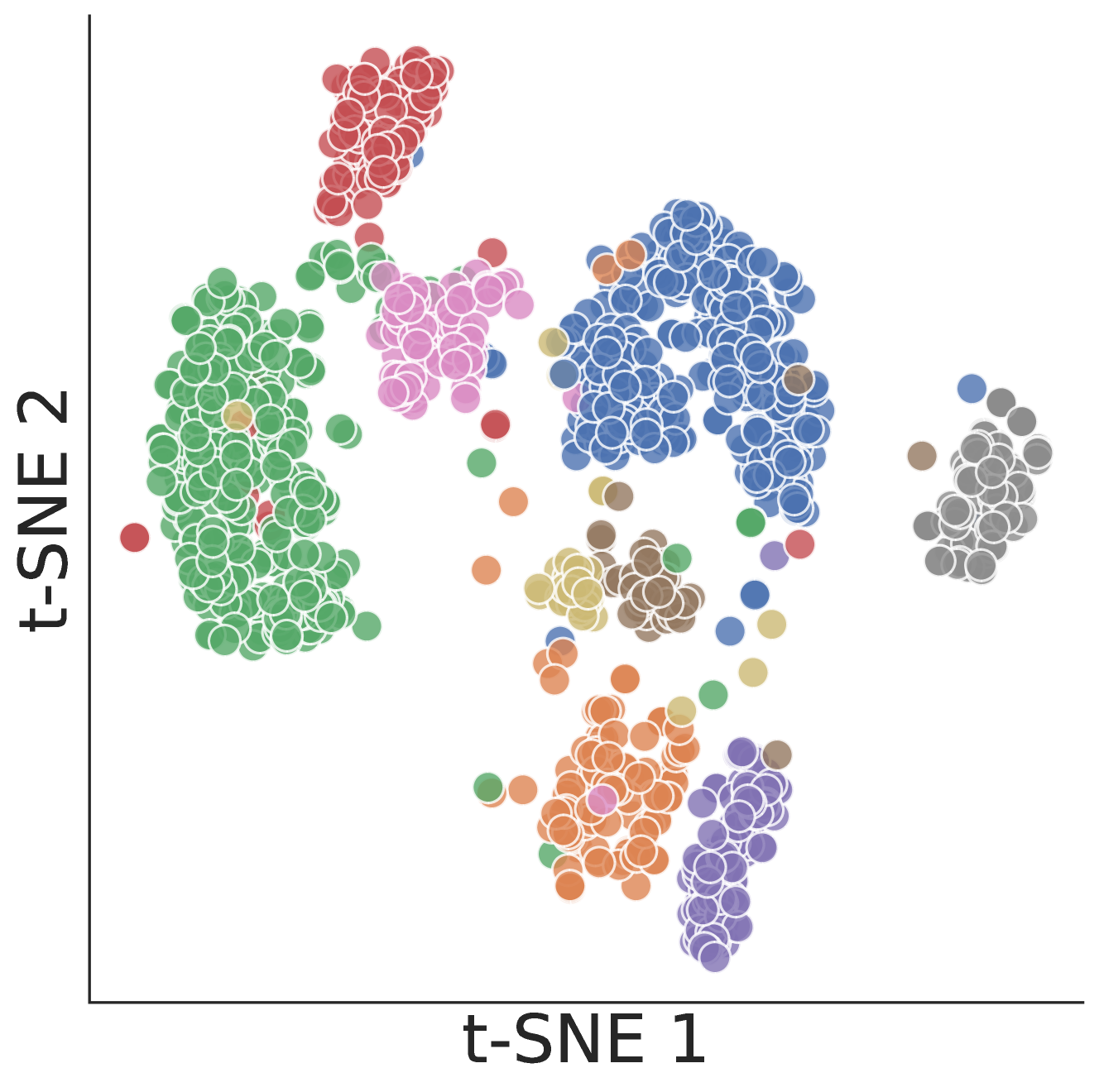

S2L selects high-quality fine-tuning data by clustering the training loss trajectories of a small proxy model, exploiting the finding that these trajectories predict gradient dynamics in much larger target models.

Core Problem

Selecting data for specialized domains (like math or medicine) is computationally expensive because heuristic metrics fail and using large models to score individual examples is prohibitive.

Why it matters:

- Specialized domains (math, medicine, code) require high-quality SFT data, but generalist metrics like perplexity often fail to identify the most effective training examples due to distribution shifts

- Scoring every training example with a target LLM (e.g., 7B+ parameters) to determine its value is too slow and resource-intensive for large datasets

Concrete Example:

Metrics like perplexity derived from a pre-trained model might rank data points poorly for fine-tuning because they don't capture learning dynamics. S2L avoids this by observing actual training progress (loss over time) on a cheaper proxy model.

Key Novelty

Trajectory-Based Scalable Data Selection

- Uses the sequence of loss values (trajectory) over the course of training a small 'proxy' model as a feature vector for each data point, rather than a single static score

- Clusters these trajectories to group examples with similar learning dynamics, then samples uniformly from clusters to ensure gradient diversity during training of the large target model

- Leverages the observation that training dynamics transfer across model scales, allowing a 70M parameter model to guide data selection for a 7B parameter model

Architecture

The conceptual workflow of the S2L data selection process.

Evaluation Highlights

- Matches the performance of the full MathInstruct dataset using only 11% of the original data (approx. 30K examples vs 260K)

- Achieves 32.7% accuracy on the MATH benchmark with just 50K training examples, improving the Phi-2 baseline by 16.6% (absolute percentage points implied by context)

- Outperforms state-of-the-art data selection algorithms (including GraNd and EL2N) by an average of 4.7% across 6 in-domain and out-of-domain evaluation datasets

Breakthrough Assessment

8/10

Offers a highly practical solution to the 'compute vs. data quality' trade-off. Theoretical grounding of loss trajectory transfer across model scales is significant for efficient LLM training.