📝 Paper Summary

LLM Fine-tuning

Alignment with Mixed-Quality Data

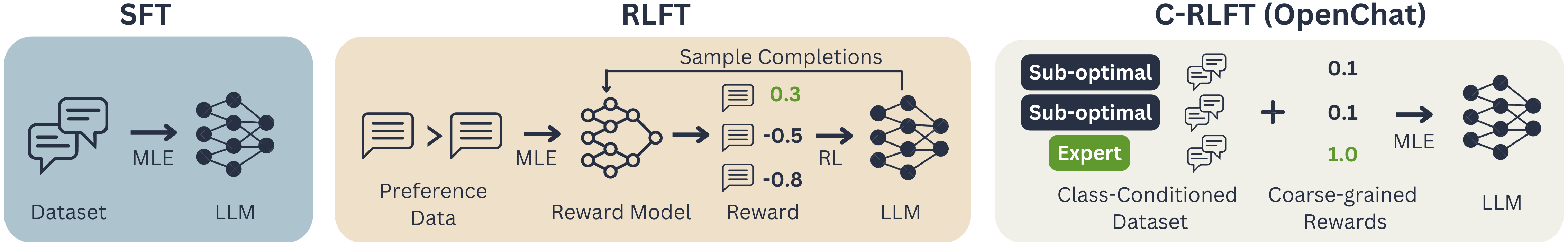

OpenChat aligns LLMs using mixed-quality data without preference labels by treating data sources as coarse rewards and training a class-conditioned policy via single-stage supervised learning.

Core Problem

Standard supervised fine-tuning (SFT) weights every example in a mixed-quality dataset equally, which degrades performance, while RLHF requires high-quality human preference labels that are expensive and difficult to obtain.

Why it matters:

- Open-source SFT datasets (like ShareGPT) contain large amounts of sub-optimal data (e.g., from GPT-3.5) mixed with sparse expert data (GPT-4), hurting model quality if trained indiscriminately

- Collecting pairwise preference data for RLHF is costly and labor-intensive, creating a barrier for open-source model development

- Existing reinforcement learning fine-tuning (RLFT) methods are complex and unstable, often requiring multiple stages and separate reward models

Concrete Example:

A dataset might mix high-quality GPT-4 responses with lower-quality GPT-3.5 responses. Standard SFT trains on both equally, so the model also learns the sub-optimal behaviors. OpenChat instead conditions the model on each response's source during training, so it learns to tell the two apart and can be prompted with the expert condition at inference to generate only 'expert' quality outputs.
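The source conditioning in this example can be sketched as a prompt-formatting step. This is a minimal illustration, not the authors' implementation: the `Example` type, the function names, and the `GPT4`/`GPT3` prefix strings are all assumptions made for this sketch.

```python
from dataclasses import dataclass

# Hypothetical condition tags, one per data source (assumed strings).
CONDITION_PREFIX = {"gpt-4": "GPT4", "gpt-3.5": "GPT3"}
EXPERT_SOURCE = "gpt-4"


@dataclass
class Example:
    prompt: str
    response: str
    source: str  # which model produced the response


def build_training_text(ex: Example) -> str:
    """Prefix both turns with the tag of the source that wrote the response."""
    tag = CONDITION_PREFIX[ex.source]
    return f"{tag} User: {ex.prompt}\n{tag} Assistant: {ex.response}"


def build_inference_prompt(prompt: str) -> str:
    """At inference, always condition on the expert source."""
    tag = CONDITION_PREFIX[EXPERT_SOURCE]
    return f"{tag} User: {prompt}\n{tag} Assistant:"
```

During training every example keeps the tag of its own source, so the sub-optimal data still contributes signal. Only the inference prompt is pinned to the expert tag.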

Key Novelty

Conditioned-RLFT (C-RLFT)

- Treats different data sources (e.g., GPT-4 vs. GPT-3.5) as coarse-grained reward classes rather than using fine-grained individual preference labels

- Optimizes the policy by conditioning the LLM on these class labels during training (learning to mimic specific sources) and regularizing against a class-conditioned reference policy

- Simplifies the RL problem into a single-stage, supervised reward-weighted regression, avoiding the need for separate reward models or PPO training loops
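The reward-weighted regression described above can be sketched as a weighted negative log-likelihood, where each example's weight depends only on its source class. This is a rough sketch under stated assumptions: the reward values in `CLASS_REWARD`, the `exp(reward / beta)` weighting form, the normalization by total weight, and the name `c_rlft_loss` are illustrative choices, not details confirmed by this summary.

```python
import math
from typing import Sequence

# Assumed coarse rewards per source class (illustrative values only).
CLASS_REWARD = {"expert": 1.0, "suboptimal": 0.1}


def c_rlft_loss(
    seq_logprobs: Sequence[float],
    classes: Sequence[str],
    beta: float = 1.0,
) -> float:
    """Reward-weighted negative log-likelihood over a batch.

    seq_logprobs[i] is the log-probability the class-conditioned policy
    assigns to response i given its prompt and class tag.
    classes[i] is the coarse reward class of example i.
    """
    if len(seq_logprobs) != len(classes):
        raise ValueError("seq_logprobs and classes must have equal length")
    # Each example is weighted by exp(reward / beta); no reward model is needed
    # because the reward is a constant determined by the data source.
    weights = [math.exp(CLASS_REWARD[c] / beta) for c in classes]
    weighted_nll = sum(-w * lp for w, lp in zip(weights, seq_logprobs))
    # Normalizing by the total weight is a choice made for this sketch.
    return weighted_nll / sum(weights)
```

Because the weights are fixed per class, the loss is an ordinary supervised objective that can be optimized in a single stage. A smaller `beta` sharpens the gap between the expert and sub-optimal weights.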

Architecture

Conceptual framework of OpenChat showing the C-RLFT process. It illustrates separating mixed SFT data into expert and sub-optimal sets, training a class-conditioned policy, and performing inference with the expert condition.

Evaluation Highlights

- openchat-13b achieves the highest average performance among all 13b open-source language models on Alpaca-Eval, MT-bench, and Vicuna-bench

- openchat-13b surpasses gpt-3.5-turbo on Alpaca-Eval, MT-bench, and Vicuna-bench despite using mixed-quality training data

- openchat-13b achieves the top-1 average accuracy among all 13b open-source models on AGIEval, indicating that it generalizes beyond instruction following

Breakthrough Assessment

9/10

Proposes a theoretically grounded yet simple method (class-conditioned SFT) that avoids the complexity of RLHF when aligning on mixed-quality data, achieving the best reported results among 13b open-source models without preference labels.