📝 Paper Summary

Reasoning datasets

Knowledge distillation

Chain-of-thought (CoT) reasoning

The authors introduce a 1.4-million-entry reasoning dataset, built by distilling DeepSeek-R1 and verifying its outputs with reference-answer checks, sandboxed code execution, and a reward model. Models fine-tuned on it surpass DeepSeek-R1-Distill baselines of comparable size.

Core Problem

Open-source reasoning datasets are significantly smaller (<800k samples) than those used by proprietary models, limiting the community's ability to train effective reasoning models via Supervised Fine-Tuning (SFT).

Why it matters:

- DeepSeek-R1's success demonstrated that high-quality, large-scale SFT data (800k+ samples) is critical for inference-time scaling

- Existing open-source distillation efforts lack the scale and rigorous verification pipelines (using code execution and math checkers) needed to produce high-purity reasoning traces

- Without large-scale, verified reasoning data, open-source models lag behind distilled counterparts in complex math and coding tasks

Concrete Example:

A distilled reasoning trace may contain a hallucinated step in a math problem that goes undetected; this dataset uses 'math-verify' and reference answers to filter out such traces, so the model is trained only on outputs that pass the check.
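The filtering idea in this example can be sketched as follows. This is a minimal stand-in, not the 'math-verify' library and not the authors' code: the `Final answer:` marker and the helper names `extract_final_answer`, `normalize_answer`, and `is_verified` are assumptions made for illustration, and only plain numeric answers are compared exactly.

```python
from fractions import Fraction
from typing import Optional


def normalize_answer(text: str) -> Optional[Fraction]:
    """Parse an answer such as '0.5', '1/2', or '1,000' into an exact Fraction."""
    cleaned = text.strip().replace(",", "").rstrip(".")
    try:
        return Fraction(cleaned)
    except (ValueError, ZeroDivisionError):
        return None  # not a plain number


def extract_final_answer(trace: str, marker: str = "Final answer:") -> Optional[str]:
    """Return the first line after the last marker (the marker is hypothetical)."""
    idx = trace.rfind(marker)
    if idx == -1:
        return None
    after = trace[idx + len(marker):].strip()
    return after.splitlines()[0] if after else None


def is_verified(trace: str, reference: str) -> bool:
    """Keep a trace only if its final answer matches the reference answer."""
    predicted = extract_final_answer(trace)
    if predicted is None:
        return False
    p, r = normalize_answer(predicted), normalize_answer(reference)
    if p is not None and r is not None:
        return p == r  # exact numeric comparison, so 0.5 equals 1/2
    return predicted.strip() == reference.strip()  # fall back to string equality


traces = [
    "2x = 1, so x = 1/2.\nFinal answer: 0.5",
    "2x = 1, so x = 2.\nFinal answer: 2",
]
kept = [t for t in traces if is_verified(t, "1/2")]
```

A check like this only compares final answers; it cannot confirm that every intermediate step of a kept trace is sound.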

Key Novelty

Large-Scale Verified Distillation Pipeline (AM-DeepSeek-R1-Distilled)

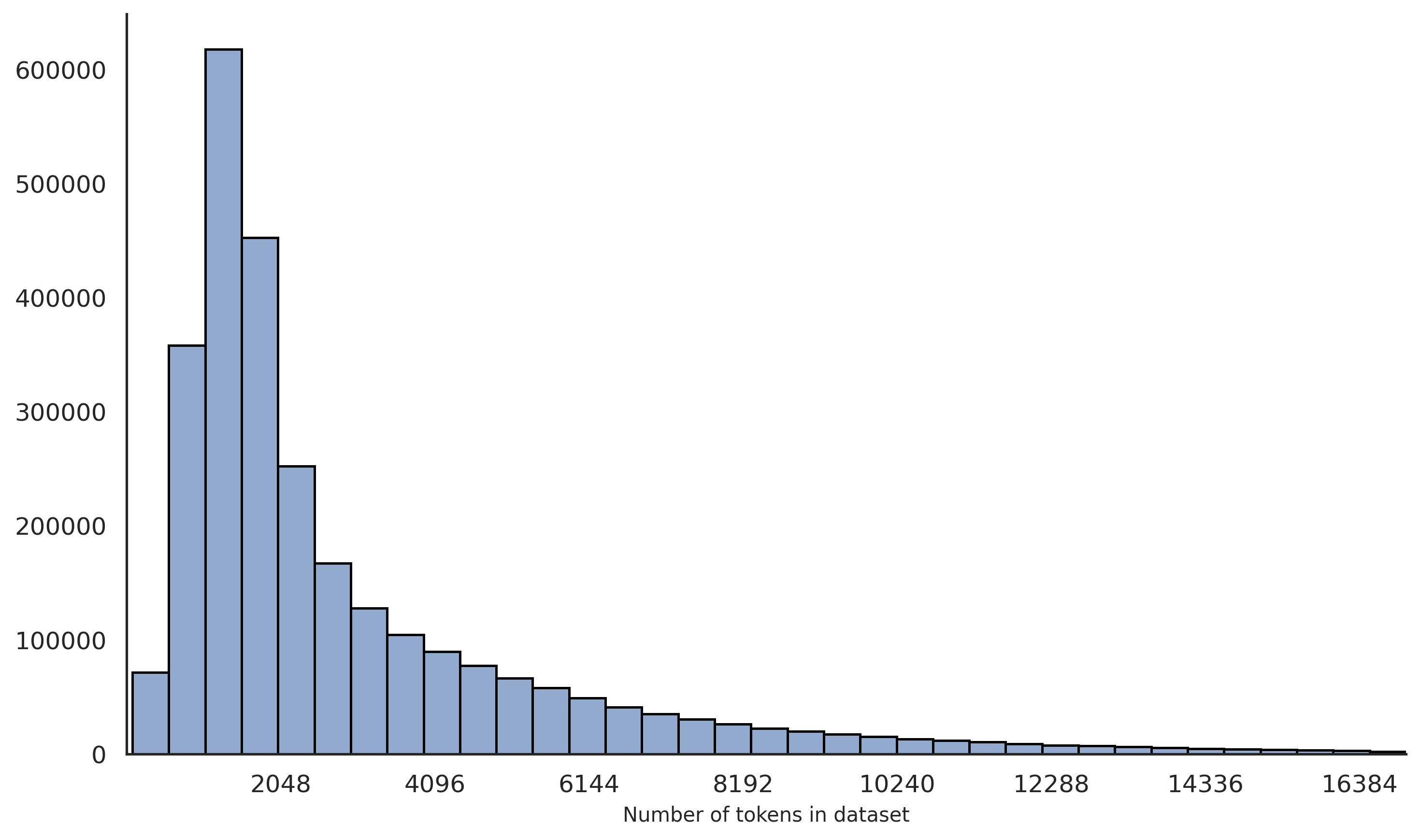

- Combines 500k curated open-source samples with 900k new samples distilled from DeepSeek-R1, for 1.4 million entries in total

- Implements a multi-stage verification system: math problems are checked against reference answers, code is executed in sandboxes, and general reasoning is scored by a reward model
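One way to picture the per-category verification described above is a small dispatcher. The sketch below is an assumption-laden illustration, not the authors' pipeline: the sample fields (`category`, `answer`, `reference`, `solution`, `tests`, `prompt`, `response`), the `REWARD_THRESHOLD` value, and the `reward_fn` callback are all hypothetical, and a plain subprocess with a timeout stands in for a real sandbox.

```python
import subprocess
import sys
from typing import Callable

REWARD_THRESHOLD = 0.5  # hypothetical cut-off; the summary does not state one


def verify_math(sample: dict) -> bool:
    """Compare the extracted answer with the reference answer (string equality here)."""
    return sample["answer"].strip() == sample["reference"].strip()


def verify_code(sample: dict, timeout_s: float = 5.0) -> bool:
    """Run the solution plus its tests in a separate Python process.

    A subprocess is NOT a security sandbox; it only stands in for one here.
    """
    program = sample["solution"] + "\n" + sample["tests"]
    try:
        result = subprocess.run(
            [sys.executable, "-c", program],
            capture_output=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        return False
    return result.returncode == 0  # a failed assert exits with a non-zero code


def verify_general(sample: dict, reward_fn: Callable[[str, str], float]) -> bool:
    """Accept a response if the (stubbed) reward model scores it highly enough."""
    return reward_fn(sample["prompt"], sample["response"]) >= REWARD_THRESHOLD


def verify(sample: dict, reward_fn: Callable[[str, str], float]) -> bool:
    """Route a sample to the verifier that matches its category."""
    category = sample["category"]
    if category == "math":
        return verify_math(sample)
    if category == "code":
        return verify_code(sample)
    if category == "general":
        return verify_general(sample, reward_fn)
    raise ValueError(f"unknown category: {category!r}")
```

Passing the reward model in as a callback keeps the sketch runnable without any model weights; in practice it would wrap whatever scoring model is available.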

Architecture

The data construction pipeline has three main stages: raw data collection, distillation, and rejection sampling.
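The three stages can be read as a simple loop, sketched below under stated assumptions. The `generate` callback stands in for a call to the teacher model and `accept` for whichever verifier applies; both are hypothetical. The retry budget `max_attempts` is also an assumption, since the summary does not say whether rejected prompts are resampled or simply dropped.

```python
from typing import Callable, Iterable, List, Optional


def rejection_sample(
    prompt: str,
    generate: Callable[[str], str],
    accept: Callable[[str, str], bool],
    max_attempts: int = 4,
) -> Optional[str]:
    """Draw up to max_attempts responses and return the first accepted one."""
    for _ in range(max_attempts):
        candidate = generate(prompt)      # stage 2: distill from the teacher
        if accept(prompt, candidate):     # stage 3: keep only verified outputs
            return candidate
    return None  # every attempt was rejected, so the prompt is dropped


def build_dataset(
    prompts: Iterable[str],
    generate: Callable[[str], str],
    accept: Callable[[str, str], bool],
    max_attempts: int = 4,
) -> List[dict]:
    """Turn collected prompts (stage 1) into verified prompt/response pairs."""
    dataset = []
    for prompt in prompts:
        response = rejection_sample(prompt, generate, accept, max_attempts)
        if response is not None:
            dataset.append({"prompt": prompt, "response": response})
    return dataset
```

With `max_attempts=1` the loop reduces to plain filtering: one generation per prompt, kept or discarded.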

Evaluation Highlights

- +1.9 percentage points of accuracy on MATH-500 for AM-Distill-Qwen-32B compared to DeepSeek-R1-Distill-Qwen-32B (96.2% vs 94.3%)

- +6.5 percentage points of accuracy on AIME 2024 for AM-Distill-Qwen-72B compared to DeepSeek-R1-Distill-Llama-70B (76.5% vs 70.0%)

- Consistent improvements on the GPQA-Diamond and LiveCodeBench benchmarks over the DeepSeek-R1-Distill baselines
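The reported gains are absolute differences between two accuracy figures, which is not the same as a relative improvement. A short sketch of both calculations, using only the scores quoted above (the helper names are illustrative):

```python
def point_gain(new: float, base: float) -> float:
    """Absolute gain in percentage points."""
    return round(new - base, 1)


def relative_gain(new: float, base: float) -> float:
    """Gain relative to the baseline score, in percent."""
    return round(100 * (new - base) / base, 1)


math500 = point_gain(96.2, 94.3)   # 1.9 points
aime24 = point_gain(76.5, 70.0)    # 6.5 points
math500_rel = relative_gain(96.2, 94.3)  # about 2.0 percent relative
aime24_rel = relative_gain(76.5, 70.0)   # about 9.3 percent relative
```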

Breakthrough Assessment

8/10

Provides a large, verified dataset that lets open-source models outperform the DeepSeek-R1-Distill series at comparable scale, using data distilled from the same teacher, DeepSeek-R1. A highly impactful resource.