📝 Paper Summary

Automated Theorem Proving (ATP)

Formal Reasoning

Autoformalization

Goedel-Prover achieves state-of-the-art automated theorem proving by training on a massive dataset of 1.64 million autoformalized statements and iteratively refining itself through expert iteration.

Core Problem

Training LLMs for formal theorem proving is bottlenecked by the extreme scarcity of high-quality formal mathematical statements and proofs compared to informal natural language math data.

Why it matters:

- Formal proofs allow machine verification of reasoning, unlike informal natural language reasoning which is error-prone and hard to verify

- Existing formal datasets like Lean Workbook are small (only 15.7K proofs), limiting the ability of models to learn complex proof strategies

- Manual formalization requires significant domain expertise and is not scalable

Concrete Example:

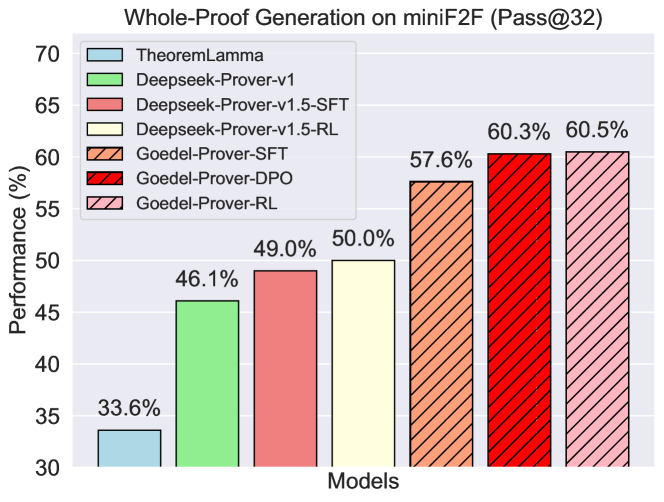

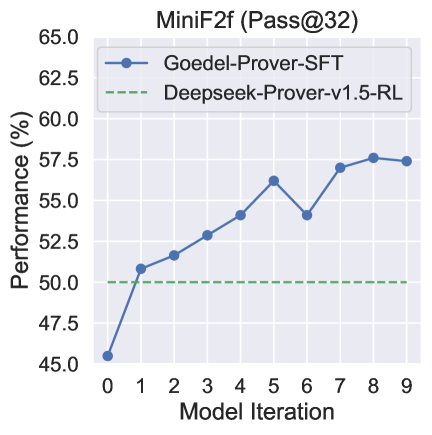

Previous open-source models like DeepSeek-Prover-V1.5 rely on reinforcement learning on proprietary datasets or small public sets, achieving only 50.0% on miniF2F. Goedel-Prover scales data availability by autoformalizing 860K Numina problems, enabling purely supervised training to reach 57.6%.

Key Novelty

Scale-driven Expert Iteration with Autoformalized Data

- Creates a massive formal dataset (Goedel-Pset-v1) by training two distinct formalizers to translate 1.6 million informal math problems into Lean 4 statements

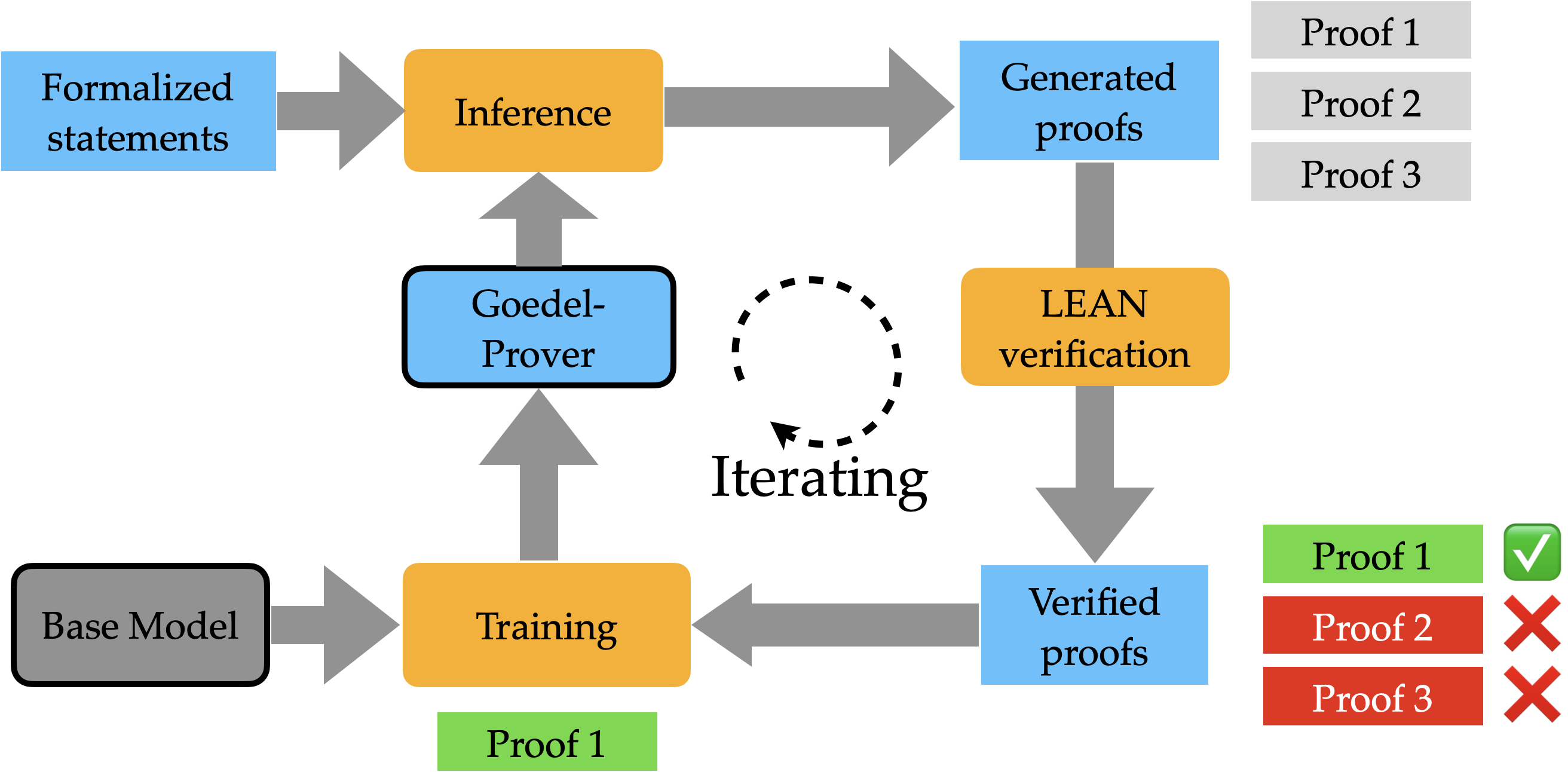

- Uses 'Whole-Proof' expert iteration: the model generates complete proofs without intermediate compiler interaction, verifies them, and retrains on the successful proofs in multiple rounds

Architecture

The Expert Iteration pipeline: Iterative loop of proof generation, verification, and retraining.

Evaluation Highlights

- 57.6% Pass@32 on miniF2F benchmark with SFT only, surpassing previous SOTA DeepSeek-Prover-V1.5-RL (50.0%) by 7.6%

- Solves 7 problems on PutnamBench (Pass@512), ranking #1 on the leaderboard

- Discovered 29.7K valid proofs for Lean Workbook problems, nearly doubling the 15.7K proofs found by prior provers

Breakthrough Assessment

9/10

Sets a new open-source SOTA on miniF2F and PutnamBench through a scalable data synthesis recipe. The release of 1.6M formal statements and ~30K new proofs is a major resource contribution.