📝 Paper Summary

Parameter-Efficient Fine-Tuning (PEFT)

Optimization for Large Language Models

The paper introduces a computationally cheap preconditioner for LoRA based on Riemannian geometry that stabilizes training and eliminates the need for manual learning rate tuning between low-rank matrices.

Core Problem

Standard LoRA training can be unstable and requires careful hyperparameter tuning because the two low-rank matrices (A and B) require significantly different learning rates to learn features effectively.

Why it matters:

- Tuning learning rates for large foundation models is computationally expensive and time-consuming

- Existing heuristics (like setting one learning rate much larger than the other) are brittle and lack theoretical guarantees

- Unstable feature learning can lead to poor convergence or degraded performance in downstream tasks

Concrete Example:

In standard LoRA, if you use the same learning rate for matrices A and B, feature learning may fail or vanish. Recent work (LoRA+) suggests setting the learning rate for B to be $16\times$ larger than A, but this is just a heuristic. This paper's method works with equal learning rates.

Key Novelty

Riemannian Preconditioned LoRA

- Treats the optimization of LoRA parameters not as standard Euclidean optimization, but as optimization on a low-rank matrix manifold using a novel Riemannian metric

- Derives a specific $r \times r$ preconditioner (where $r$ is the small rank) that scales gradients to correct the imbalance between matrices A and B

- The preconditioner acts as a gradient projector, effectively aligning updates with the column space of B and row space of A

Architecture

Pseudocode for the Riemannian Preconditioned LoRA (Scaled AdamW) optimization step.

Evaluation Highlights

- Achieves consistent convergence and performance improvement across GPT-2, RoBERTa, and Llama-2-7b fine-tuning tasks compared to standard LoRA and LoRA+

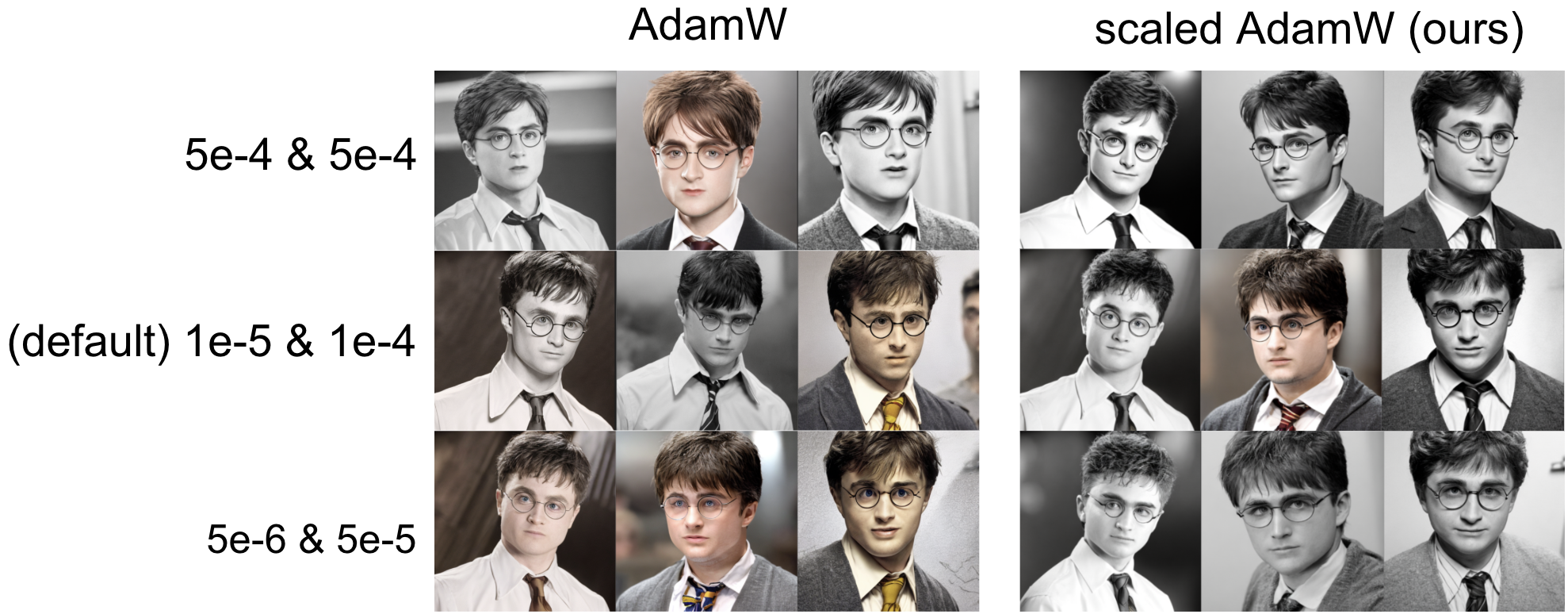

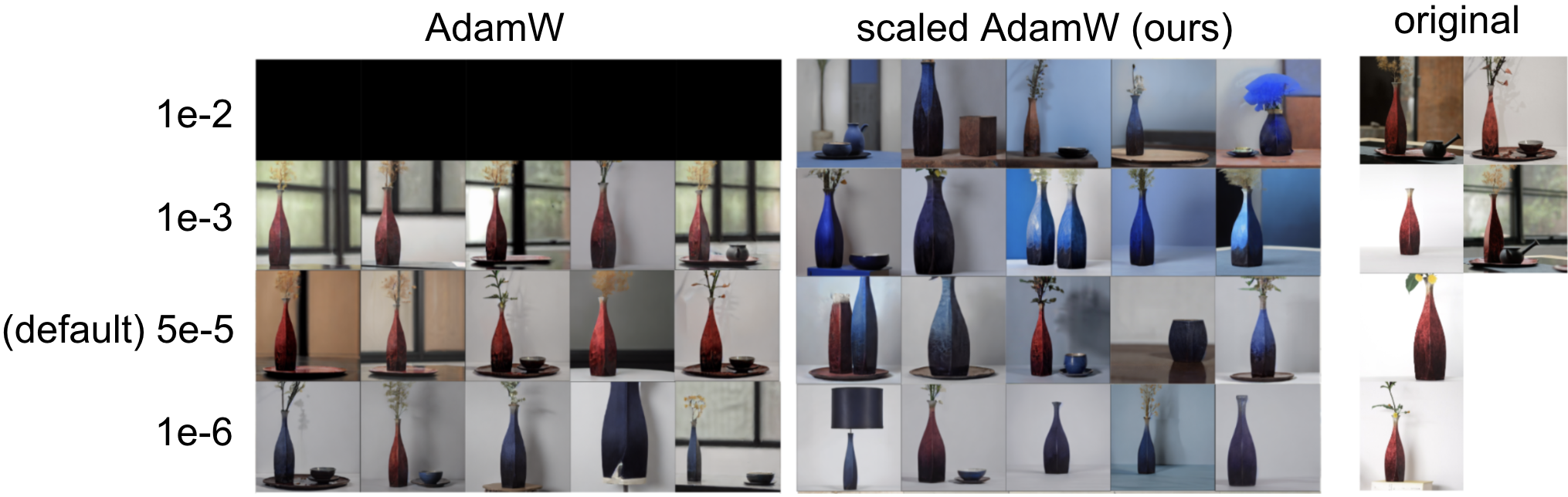

- Significantly improves image generation quality and training stability in text-to-image diffusion models compared to unscaled AdamW

- Eliminates the need for separate learning rate tuning: achieves stable learning with equal learning rates for A and B, unlike standard LoRA which requires $\eta_B \gg \eta_A$

Breakthrough Assessment

7/10

Provides a solid theoretical grounding (Riemannian geometry) for a practical problem in PEFT. The resulting algorithm is simple, virtually zero-cost, and robust, though it largely refines existing LoRA rather than proposing a new paradigm.