📝 Paper Summary

Parameter-Efficient Fine-Tuning (PEFT)

Large Language Model Optimization

Sparse Training

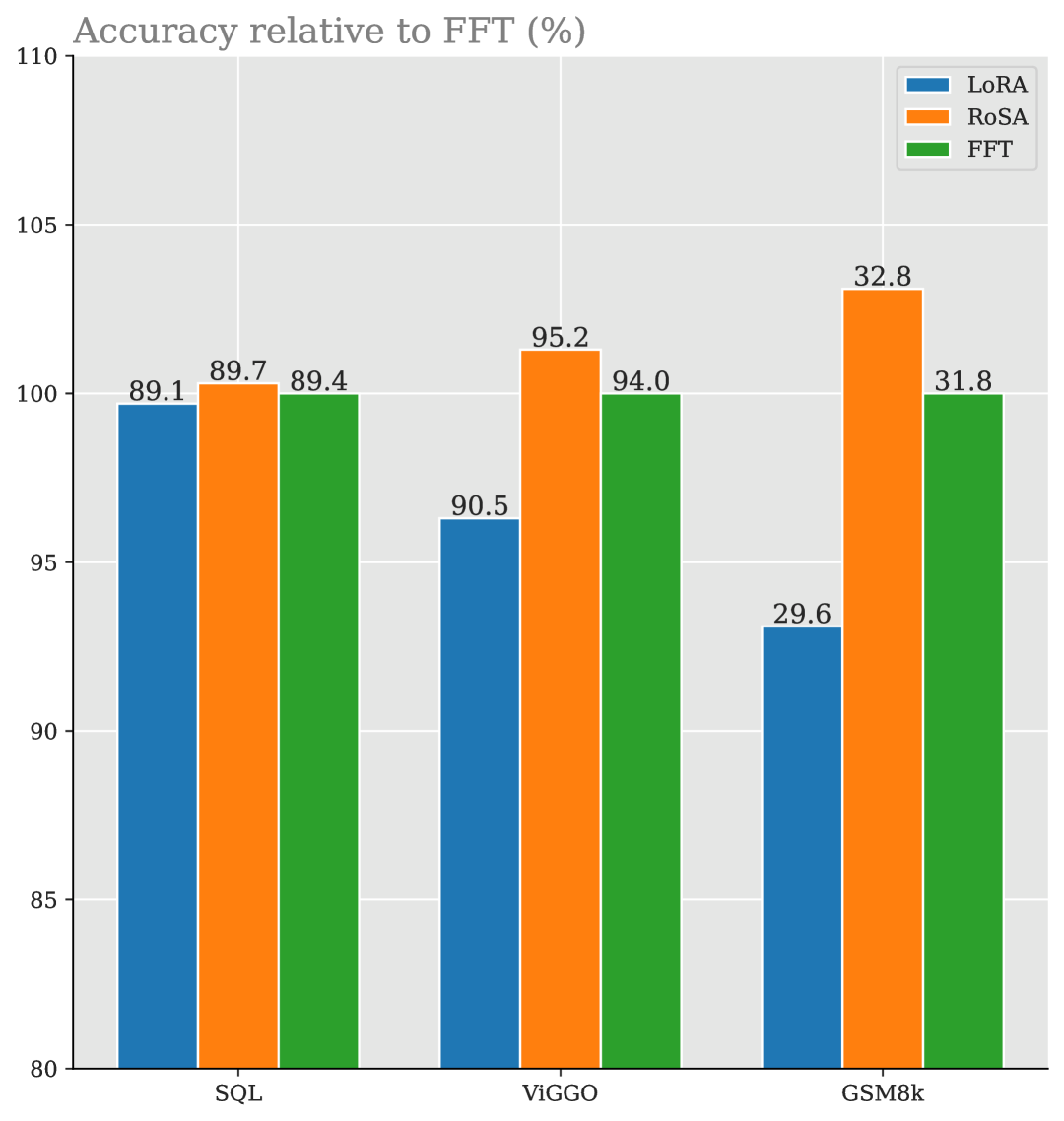

RoSA improves parameter-efficient fine-tuning by training a low-rank component and a sparse component of the weight update jointly. The split is inspired by Robust PCA and is meant to better capture the outlier-heavy structure of updates on complex tasks.

Core Problem

Existing PEFT methods like LoRA often fail to match Full Fine-Tuning (FFT) accuracy on complex tasks (e.g., math, coding) because low-rank approximations cannot capture high-magnitude, sparse updates.

Why it matters:

- FFT is prohibitively memory-intensive for LLMs, which limits who can fine-tune state-of-the-art models.

- Current low-rank methods filter out important 'outlier' directions necessary for reasoning tasks, creating an accuracy gap.

- Purely sparse methods struggle to find effective masks and lack efficient GPU support for unstructured sparsity.

Concrete Example:

When fine-tuning on GSM8K (math problems), LoRA's low-rank constraint fails to approximate the 'heavy-tailed' distribution of the true FFT update matrix, resulting in lower reasoning accuracy compared to full fine-tuning.
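The effect described above can be illustrated on synthetic data. The sketch below is not the paper's experiment: it builds a toy "update" matrix as a rank-4 matrix plus a few large outliers, then compares a pure rank-4 approximation against a greedy one-shot low-rank-plus-sparse split. The sizes, outlier magnitude, and the greedy split itself are assumptions chosen for illustration; it is neither Robust PCA nor RoSA training.

```python
import numpy as np

rng = np.random.default_rng(0)
d, r, k = 64, 4, 40  # matrix size, rank budget, number of outliers (all illustrative)

# Synthetic stand-in for an FFT update: low-rank structure plus k large outliers.
low_rank = rng.standard_normal((d, r)) @ rng.standard_normal((r, d))
sparse = np.zeros((d, d))
idx = rng.choice(d * d, size=k, replace=False)
sparse.flat[idx] = 50.0 * rng.choice([-1.0, 1.0], size=k)
delta = low_rank + sparse

def best_rank_r(m, rank):
    """Best rank-`rank` approximation in Frobenius norm (truncated SVD)."""
    u, s, vt = np.linalg.svd(m, full_matrices=False)
    return (u[:, :rank] * s[:rank]) @ vt[:rank]

def rel_err(approx):
    """Relative Frobenius error against the synthetic update."""
    return np.linalg.norm(delta - approx) / np.linalg.norm(delta)

# Low-rank only, analogous to what a LoRA-style adapter can represent.
err_low_rank = rel_err(best_rank_r(delta, r))

# Greedy low-rank + sparse: keep the k largest-magnitude entries as the
# sparse part, then fit a rank-r matrix to what is left.
top = np.argpartition(np.abs(delta).ravel(), -k)[-k:]
sparse_hat = np.zeros_like(delta)
sparse_hat.flat[top] = delta.flat[top]
err_low_rank_plus_sparse = rel_err(sparse_hat + best_rank_r(delta - sparse_hat, r))

print(f"low-rank only:     {err_low_rank:.3f}")
print(f"low-rank + sparse: {err_low_rank_plus_sparse:.3f}")
```

With the same rank budget, the combined approximation leaves a much smaller residual on this toy matrix, because the outliers no longer have to be absorbed by the low-rank factors.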

Key Novelty

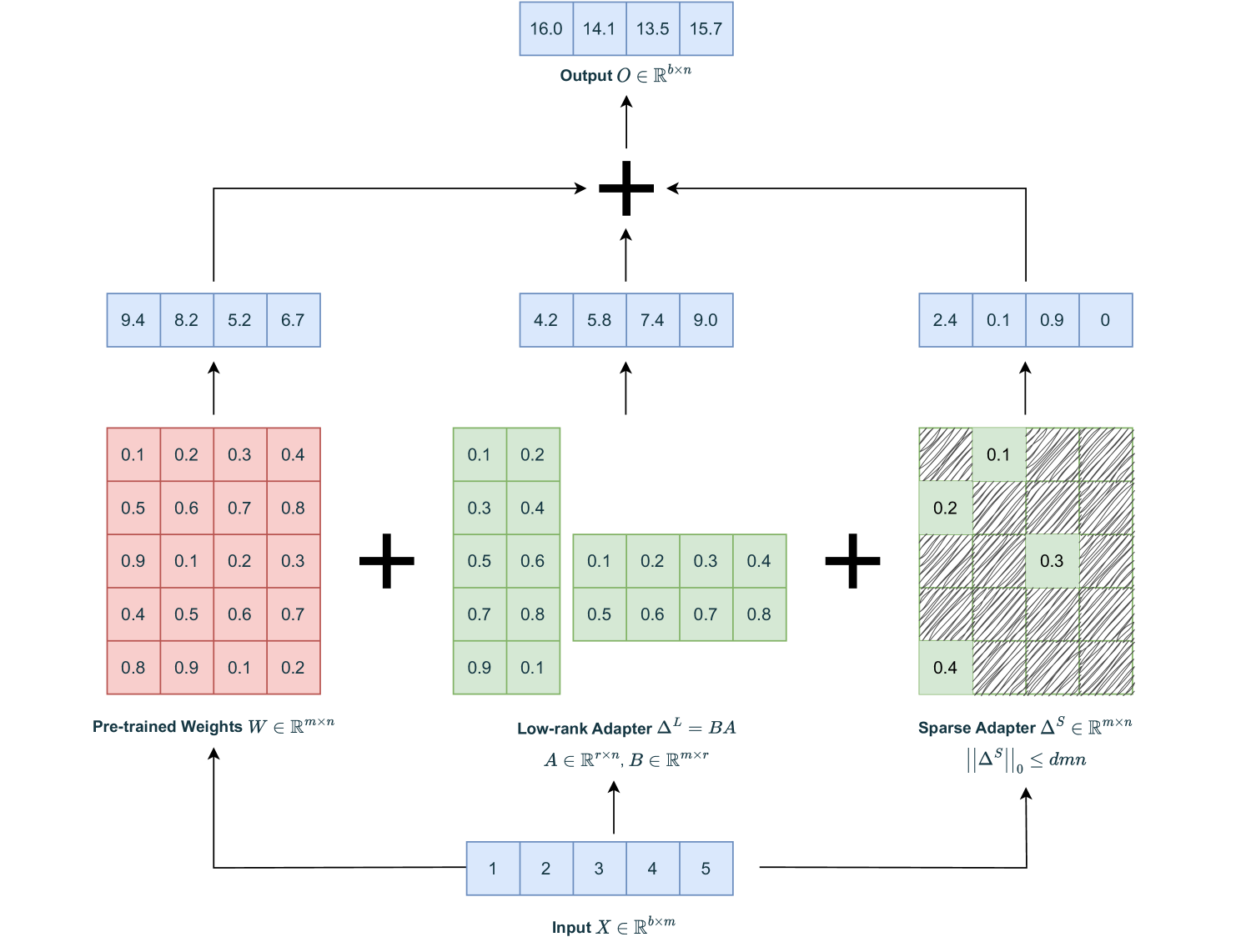

Robust Adaptation (RoSA)

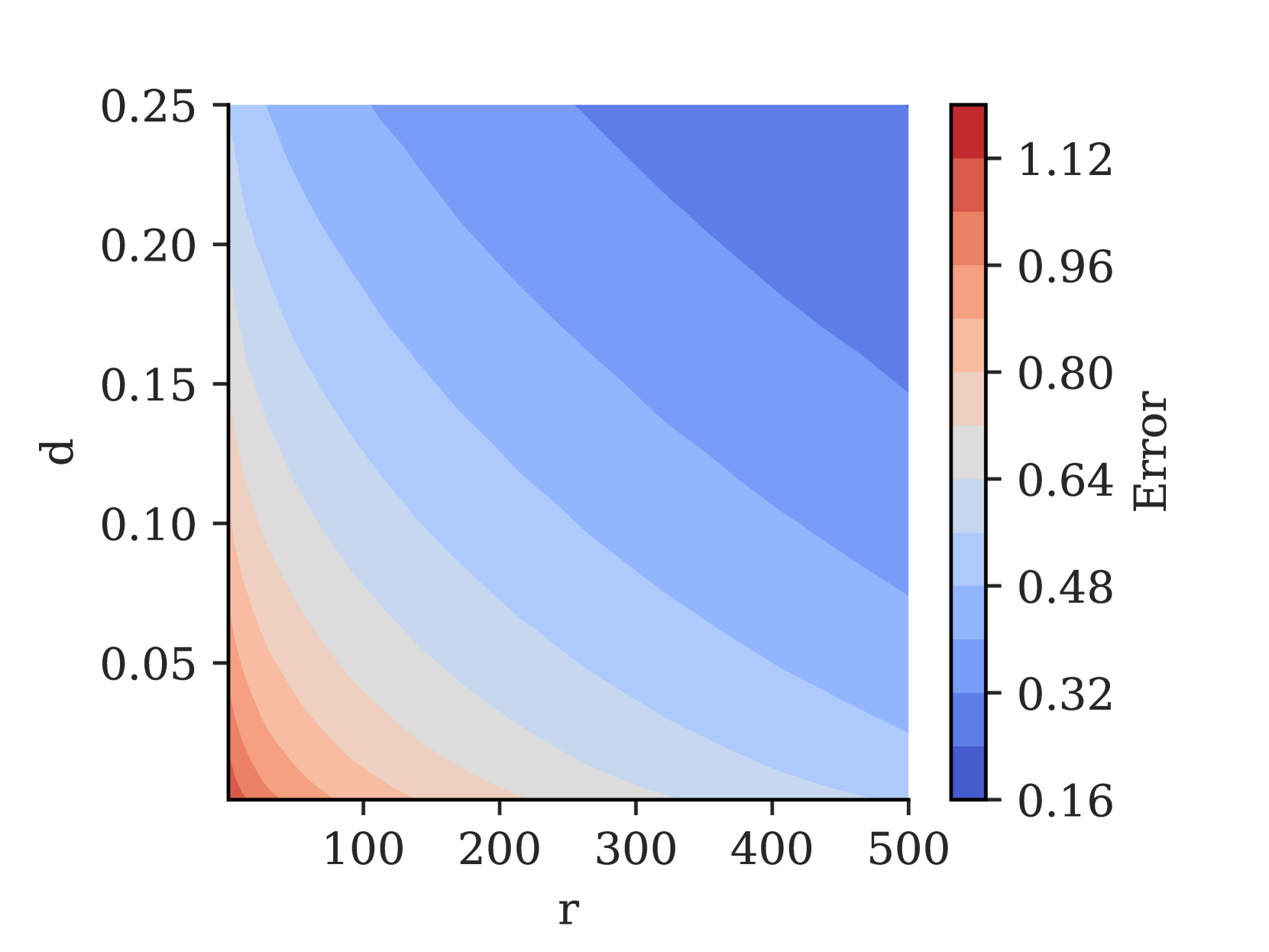

- Decomposes the fine-tuning update into two parallel adapters: a low-rank matrix (capturing general structure) and a sparse matrix (capturing high-magnitude outliers/details).

- Uses a 'warmup' phase to identify task-specific sparse masks based on gradients, rather than using random or static masks.

- Implements custom sparse GPU kernels (SDDMM) to make unstructured sparse training computationally efficient.
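The three points above can be sketched for a single linear layer in NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the names (`topk_mask`, `forward`, `sparse_grad`), the layer sizes, the 2% density, and the top-k-by-accumulated-gradient-magnitude selection rule are all hypothetical, since the summary only says that masks are derived from gradients during warmup. The `sparse_grad` function mimics what an SDDMM kernel computes (a dense-dense product evaluated only at the masked positions), on CPU and without any custom kernel.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in, rank, density = 32, 48, 4, 0.02  # illustrative sizes

W = rng.standard_normal((d_out, d_in))        # frozen pretrained weight
B = np.zeros((d_out, rank))                   # low-rank factor, zero so the update starts at 0
A = 0.01 * rng.standard_normal((rank, d_in))  # low-rank factor

def topk_mask(grad_accum, density):
    """Boolean mask keeping the `density` fraction of largest-magnitude entries."""
    k = max(1, int(density * grad_accum.size))
    flat = np.abs(grad_accum).ravel()
    keep = np.argpartition(flat, -k)[-k:]
    mask = np.zeros(flat.shape, dtype=bool)
    mask[keep] = True
    return mask.reshape(grad_accum.shape)

# "Warmup": accumulate weight gradients over a few batches.
# Random tensors stand in for real activations and output gradients.
grad_accum = np.zeros_like(W)
for _ in range(4):
    x_b = rng.standard_normal((8, d_in))
    g_b = rng.standard_normal((8, d_out))
    grad_accum += g_b.T @ x_b                 # dL/dW for y = x @ W.T

mask = topk_mask(grad_accum, density)
rows, cols = np.nonzero(mask)
S_vals = np.zeros(rows.size)                  # trainable values of the sparse adapter

def forward(x):
    """y = x @ (W + B A + S)^T, with S nonzero only at the masked positions."""
    S = np.zeros_like(W)
    S[rows, cols] = S_vals
    return x @ (W + B @ A + S).T

def sparse_grad(x, g_out):
    """SDDMM-style gradient: (g_out.T @ x) evaluated only at masked (row, col) pairs."""
    return np.einsum("bi,bi->i", g_out[:, rows], x[:, cols])
```

Only `B`, `A`, and `S_vals` would be trained; `W` stays frozen. Computing the sparse gradient only at the masked positions avoids materializing the full `d_out × d_in` gradient, which is the cost the custom kernels are meant to remove.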

Architecture

Conceptual diagram of RoSA compared to FFT and LoRA.

Breakthrough Assessment

8/10

Addresses the critical accuracy gap of LoRA on hard tasks by effectively combining sparsity and low-rank structures, backed by a theoretical connection to Robust PCA and practical system optimizations.