📝 Paper Summary

Mechanistic Interpretability

Fine-tuning dynamics

Fine-tuning improves entity tracking not by creating new circuits, but by enhancing the existing circuit's ability to handle positional information and attend to correct objects.

Core Problem

Fine-tuning on general tasks (like math or code) improves performance on specific capabilities such as entity tracking, but it is unknown how this process alters the model's internal computational mechanisms.

Why it matters:

- Understanding whether fine-tuning fundamentally rewires a model or merely amplifies existing capabilities is crucial for safety and controllability.

- Entity tracking is a fundamental component of complex reasoning, yet the mechanistic effect of fine-tuning on this specific capability remains elusive.

- Prior work suggests that fine-tuning might destroy out-of-distribution (OOD) knowledge or shift weights, but it offers no precise circuit-level explanation for the performance gains.

Concrete Example:

In the task 'The apple is in Box F... Box F contains the', a base model might fail to link 'Box F' to 'apple', while a math-tuned model succeeds. Does the tuned model use a new 'reasoning circuit', or does it just run the old one better?
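
To make the task format concrete, here is a minimal sketch of how such a prompt and its expected answer could be constructed. The helper name `build_prompt` and the exact sentence template are assumptions for illustration, not the authors' data-generation code.

```python
from typing import List, Tuple


def build_prompt(pairs: List[Tuple[str, str]], query_box: str) -> Tuple[str, str]:
    """Build an entity-tracking prompt and the object the model should predict.

    `pairs` is a list of (object, box_label) tuples. The template below is a
    hypothetical approximation of the task described in the summary.
    """
    # Describe where every object is, e.g. "the apple is in Box F".
    context = ", ".join(f"the {obj} is in Box {box}" for obj, box in pairs)
    context = context[0].upper() + context[1:]

    # The query asks for the contents of one box; the next token is the answer.
    prompt = f"{context}. Box {query_box} contains the"
    answer = {box: obj for obj, box in pairs}[query_box]
    return prompt, answer


pairs = [("apple", "F"), ("bell", "Q"), ("coin", "Z")]
prompt, answer = build_prompt(pairs, "F")
print(prompt)
print(answer)
```

A model tracks the entity correctly if its most likely next token after `prompt` matches `answer`.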

Key Novelty

Mechanism Enhancement via Positional Augmentation

- Demonstrates that base and fine-tuned models use the *same* sparse attention circuit for entity tracking, rather than developing new pathways.

- Identifies that the performance boost comes from improved positional information handling: the circuit better tracks *where* the relevant entity is, rather than changing *how* it extracts the information.

- Introduces Cross-Model Activation Patching (CMAP), which transplants activations from one model into another, showing that grafting fine-tuned activations into the base model's circuit improves base model performance.
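
The idea behind cross-model patching can be sketched with a toy example. This is a simplified illustration under stated assumptions, not the paper's implementation: each "model" is a list of scalar functions standing in for components (in the paper, the patched components are attention heads), and the names `run_with_cache` and `cross_model_patch` are hypothetical.

```python
from typing import Callable, Dict, List, Optional, Tuple

Component = Callable[[float], float]


def run_with_cache(
    components: List[Component],
    x: float,
    patch: Optional[Dict[int, float]] = None,
) -> Tuple[float, Dict[int, float]]:
    """Run a toy sequential model and record every component's output.

    If `patch` maps a component index to a value, that component's output is
    overwritten with the value before it is passed downstream.
    """
    cache: Dict[int, float] = {}
    h = x
    for i, component in enumerate(components):
        h = component(h)
        if patch is not None and i in patch:
            h = patch[i]  # transplant the donor activation
        cache[i] = h
    return h, cache


def cross_model_patch(
    base: List[Component],
    tuned: List[Component],
    x: float,
    component_ids: List[int],
) -> float:
    """Run `base` on x, with selected activations taken from `tuned` on the same x."""
    _, tuned_cache = run_with_cache(tuned, x)
    patch = {i: tuned_cache[i] for i in component_ids}
    patched_output, _ = run_with_cache(base, x, patch)
    return patched_output


# Toy models that differ only in component 1 (assumed, for illustration).
base_model = [lambda h: h + 1, lambda h: h * 2, lambda h: h - 3]
tuned_model = [lambda h: h + 1, lambda h: h * 3, lambda h: h - 3]

base_out, _ = run_with_cache(base_model, 2.0)
tuned_out, _ = run_with_cache(tuned_model, 2.0)
patched_out = cross_model_patch(base_model, tuned_model, 2.0, [1])
print(base_out, tuned_out, patched_out)
```

In this toy setting, patching the one component that differs makes the base model reproduce the tuned model's output. With real transformers, the analogous experiment measures how much task accuracy the base model gains when circuit activations from the fine-tuned model are patched in.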

Architecture

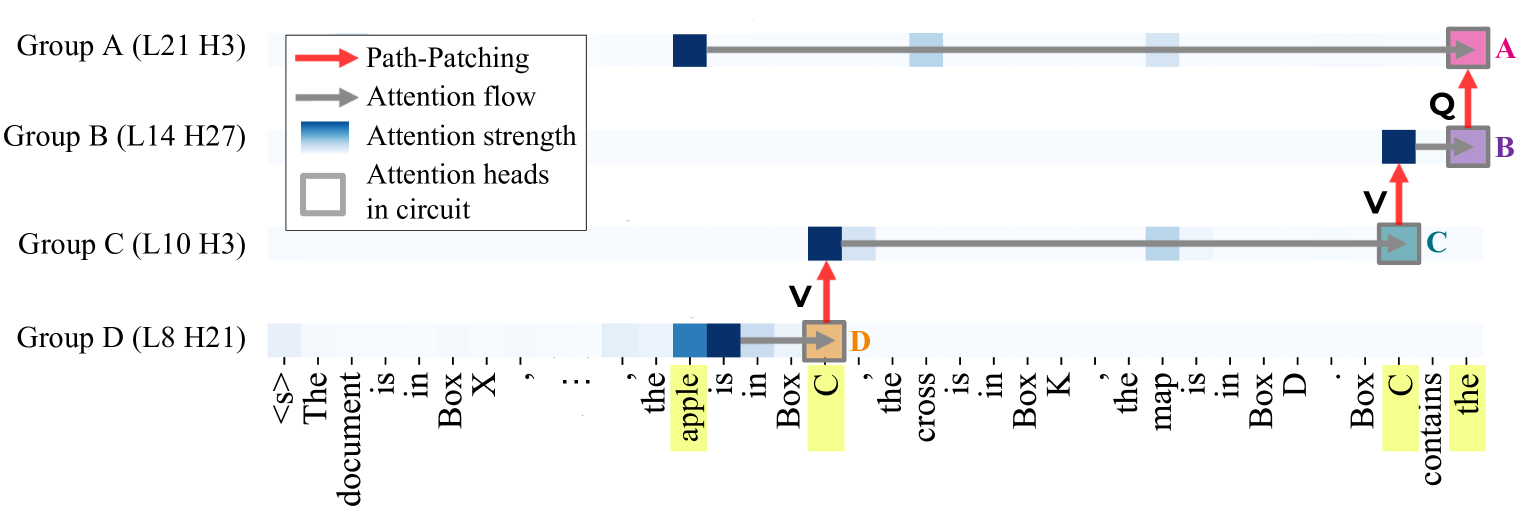

The Entity Tracking Circuit discovered in Llama-7B, showing four groups of attention heads (A, B, C, D) and their connectivity.

Evaluation Highlights

- The sparse entity-tracking circuit discovered in Llama-7B (72 heads) recovers 100% of the base model's performance (faithfulness score 1.0).

- The *same* 72-head circuit from the base model recovers 97% of the performance in Vicuna-7B and ~88-89% in arithmetic-tuned models (Goat-7B/FLoat-7B).

- The arithmetic-tuned models (Goat-7B, FLoat-7B) achieve near-perfect accuracy (>97%) on entity tracking, compared with 54% for Llama-7B.
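
The faithfulness scores above can be read as a ratio of accuracies. The sketch below assumes faithfulness is defined as the circuit's task accuracy divided by the full model's task accuracy, which matches how the summary equates a score of 1.0 with 100% recovery. The function names and the tiny prediction lists are illustrative only and are not results from the paper.

```python
from typing import Sequence


def accuracy(predictions: Sequence[str], targets: Sequence[str]) -> float:
    """Fraction of examples where the predicted object matches the target."""
    if len(predictions) != len(targets) or not targets:
        raise ValueError("predictions and targets must be non-empty and of equal length")
    correct = sum(p == t for p, t in zip(predictions, targets))
    return correct / len(targets)


def faithfulness(
    circuit_preds: Sequence[str],
    full_preds: Sequence[str],
    targets: Sequence[str],
) -> float:
    """Assumed definition: circuit accuracy relative to full-model accuracy."""
    full_acc = accuracy(full_preds, targets)
    if full_acc == 0:
        raise ValueError("full-model accuracy is zero, so the ratio is undefined")
    return accuracy(circuit_preds, targets) / full_acc


# Made-up predictions for four prompts (not data from the paper).
targets = ["apple", "bell", "coin", "drum"]
full_preds = ["apple", "bell", "coin", "drum"]
circuit_preds = ["apple", "bell", "coin", "bell"]

score = faithfulness(circuit_preds, full_preds, targets)
print(score)
```

A score near 1.0 means the circuit alone accounts for nearly all of the full model's performance on the task.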

Breakthrough Assessment

8/10

Strong mechanistic evidence that fine-tuning preserves and amplifies existing circuits rather than creating new ones. Introduces a novel patching method (CMAP) and provides a clear case study on entity tracking.