📝 Paper Summary

Physics-Informed Neural Networks (PINNs)

Transfer Learning

Scientific Machine Learning

This paper systematically evaluates Low-Rank Adaptation (LoRA) against full and lightweight fine-tuning in PINNs, finding that LoRA significantly speeds up convergence relative to training from scratch and yields slight accuracy gains when boundary conditions, material properties, or geometries change.

Core Problem

A standard PINN is trained for one specific problem instance and requires expensive retraining from scratch whenever the problem conditions (boundaries, materials, geometries) change.

Why it matters:

- Retraining deep learning models for every minor variation in a physical system is computationally prohibitive for real-world engineering applications.

- Existing transfer learning studies in PINNs lack a systematic comparison of modern techniques like LoRA across diverse physical changes (geometry, material, boundary conditions).

- Traditional solvers handle parameter changes well, so AI for PDEs must demonstrate similar adaptability to be competitive.

Concrete Example:

In fluid dynamics, changing the inlet velocity profile of a Navier-Stokes problem typically requires a PINN to relearn the entire flow field from random initialization, wasting computational resources on physics it already learned.

Key Novelty

Systematic Evaluation of LoRA for PINNs (Strong and Energy Forms)

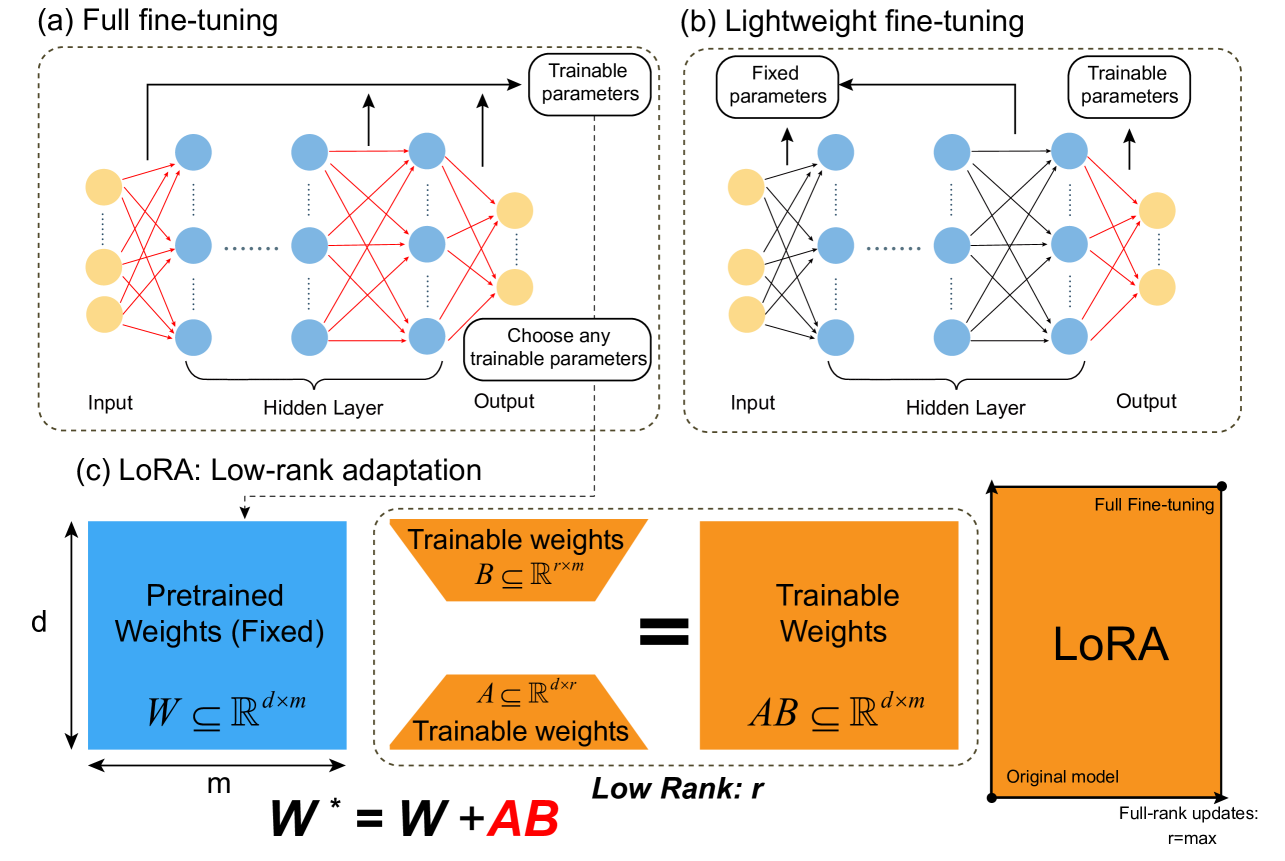

- Applies Low-Rank Adaptation (LoRA) to PINNs, decomposing weight updates into low-rank matrices to reduce trainable parameters while maintaining expressivity.

- Compares LoRA against Full Fine-Tuning and Lightweight Fine-Tuning across three distinct transfer scenarios: changing boundary conditions, changing material properties, and changing geometries.

- Investigates transfer learning performance in both Strong Form (differential equations) and Energy Form (variational/integral) of PINNs.
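The low-rank decomposition in the first bullet can be illustrated with a minimal NumPy sketch. It assumes the common LoRA parameterization, W = W0 + (alpha / r) · B · A, with the pretrained weight W0 frozen and B initialized to zero. The class name `LoRALinear`, the layer width, the rank, and the scaling are illustrative assumptions, not values taken from the paper.

```python
import numpy as np


class LoRALinear:
    """Dense layer y = x @ (W0 + scale * B @ A).T + b0 with W0 and b0 frozen.

    Hypothetical helper for illustration; not the paper's implementation.
    """

    def __init__(self, w0, b0, rank=4, alpha=4.0, seed=0):
        rng = np.random.default_rng(seed)
        out_dim, in_dim = w0.shape
        self.w0 = w0  # frozen pretrained weight, shape (out_dim, in_dim)
        self.b0 = b0  # frozen pretrained bias, shape (out_dim,)
        # Trainable low-rank factors. B starts at zero, so the adapted
        # layer initially reproduces the pretrained layer exactly.
        self.A = rng.normal(0.0, 0.01, size=(rank, in_dim))
        self.B = np.zeros((out_dim, rank))
        self.scale = alpha / rank

    def effective_weight(self):
        # Pretrained weight plus the scaled low-rank update.
        return self.w0 + self.scale * (self.B @ self.A)

    def __call__(self, x):
        return x @ self.effective_weight().T + self.b0

    def num_trainable(self):
        return self.A.size + self.B.size


# Stand-in for one hidden layer of a pretrained PINN (assumed width 64).
rng = np.random.default_rng(1)
w0 = rng.normal(size=(64, 64))
b0 = rng.normal(size=64)

layer = LoRALinear(w0, b0, rank=4)
x = rng.normal(size=(8, 64))

y_pretrained = x @ w0.T + b0
y_lora = layer(x)  # identical to y_pretrained before any training step
```

In this sketch the layer exposes 2 · 4 · 64 = 512 trainable values instead of the 4096 entries of the full weight matrix, and the learned update B · A can never exceed rank 4.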

Architecture

Schematic comparison of Full Fine-Tuning, Lightweight Fine-Tuning, and LoRA architectures.
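To make the difference between the three strategies concrete, the sketch below counts trainable parameters for a small fully connected network. The details are assumptions for illustration only: the layer widths are made up, "lightweight" is taken to mean that only the output layer is retrained, and LoRA is applied to every weight matrix with biases frozen. The paper's exact configurations may differ.

```python
def mlp_layer_shapes(widths):
    """Return (out_dim, in_dim) for each dense layer of an MLP."""
    return [(widths[i + 1], widths[i]) for i in range(len(widths) - 1)]


def trainable_params(widths, strategy, rank=4):
    """Count trainable parameters under a given transfer strategy.

    Assumptions (not from the paper):
      full        - every weight and bias is trainable
      lightweight - only the last layer's weight and bias are trainable
      lora        - rank-`rank` factors A (rank x in) and B (out x rank)
                    for every layer; pretrained weights and biases frozen
    """
    shapes = mlp_layer_shapes(widths)
    per_layer_full = [out_dim * in_dim + out_dim for out_dim, in_dim in shapes]
    if strategy == "full":
        return sum(per_layer_full)
    if strategy == "lightweight":
        return per_layer_full[-1]
    if strategy == "lora":
        return sum(rank * (out_dim + in_dim) for out_dim, in_dim in shapes)
    raise ValueError(f"unknown strategy: {strategy}")


# Hypothetical PINN: 2 inputs (x, y), three hidden layers of 64, 3 outputs.
widths = [2, 64, 64, 64, 3]
counts = {s: trainable_params(widths, s) for s in ("full", "lightweight", "lora")}
print(counts)
```

Under these assumptions LoRA sits between the two fine-tuning variants: it trains far fewer parameters than full fine-tuning, but unlike the last-layer-only variant it can still adjust every layer.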

Evaluation Highlights

- LoRA and Full Fine-Tuning significantly improve convergence speed compared to training from scratch.

- LoRA provides a slight enhancement in accuracy across most tested scenarios (varying boundary conditions, materials, geometries) compared to baselines.

- Experiments cover Navier-Stokes equations (strong form) and elasticity problems (energy form) of varying complexity.
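For orientation, the two formulations mentioned above are commonly written as follows. This is a generic sketch with assumed notation, not the paper's exact losses: $u_\theta$ is the network output, $\mathcal{N}$ and $\mathcal{B}$ are the differential and boundary operators, $f$ is the source term or body force, $g$ is the boundary data, $\lambda$ is a penalty weight, $\psi$ is the strain energy density, $\varepsilon$ is the strain, and $\bar{t}$ is the prescribed traction on the Neumann boundary $\Gamma_N$.

$$
\mathcal{L}_{\text{strong}}(\theta) = \frac{1}{N_r}\sum_{i=1}^{N_r}\big\|\mathcal{N}[u_\theta](x_i) - f(x_i)\big\|^2 + \lambda\,\frac{1}{N_b}\sum_{j=1}^{N_b}\big\|\mathcal{B}[u_\theta](x_j) - g(x_j)\big\|^2
$$

$$
\mathcal{L}_{\text{energy}}(\theta) = \int_\Omega \psi\big(\varepsilon(u_\theta)\big)\,d\Omega - \int_\Omega f\cdot u_\theta\,d\Omega - \int_{\Gamma_N} \bar{t}\cdot u_\theta\,d\Gamma
$$

The strong form penalizes pointwise residuals of the differential equation, while the energy form minimizes a potential-energy functional. The energy form therefore typically needs only first derivatives of $u_\theta$ for elasticity, and essential boundary conditions are usually enforced separately.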

Breakthrough Assessment

7/10

While LoRA is established in LLMs, this systematic application and benchmarking in the context of PINNs (specifically covering geometry and material transfer in energy forms) provides valuable engineering guidance for AI-driven physics solvers.