📝 Paper Summary

Online Reinforcement Learning

Flow Matching Models

Robotic Control

ReinFlow fine-tunes flow matching policies by injecting learnable noise to create a tractable discrete-time Markov process, enabling stable online RL even with single-step inference.

Core Problem

Flow matching policies trained via imitation learning lack built-in exploration and struggle with discretization errors during RL fine-tuning, especially when using few denoising steps for fast inference.

Why it matters:

- Imitation learning policies often plateau due to imperfect demonstration data and embodiment gaps, requiring online interaction to improve.

- Existing RL methods for diffusion/flow models rely on approximations (like trace estimators) that are unstable or inaccurate at the low denoising step counts needed for real-time robot control.

- Robots need fast inference frequencies for dexterity, but reducing ODE solver steps typically degrades action quality without specific adaptation.

Concrete Example:

In a sparse-reward peg insertion task, a flow policy trained on expert data might fail to insert the peg if the initial state deviates slightly. Standard flow matching is deterministic during inference, preventing the exploration needed to correct this, while reducing inference to 1 step introduces large discretization errors that break standard log-probability calculations.

Key Novelty

Noise-Injected Flow as a Discrete-Time Markov Process

- Injects learnable, bounded noise directly into the flow trajectory during fine-tuning, converting the deterministic ODE path into a stochastic discrete-time Markov process with closed-form transition probabilities.

- Allows exact calculation of action log-probabilities without expensive ODE solvers or trace estimators, enabling stable policy gradient updates even when the policy uses only a single denoising step.

Architecture

Pseudocode for the ReinFlow algorithm, detailing the data collection, likelihood computation, and policy update loop.

Evaluation Highlights

- +135.36% average net growth in episode reward for Rectified Flow policies in legged locomotion tasks compared to pre-trained baselines.

- +40.34% average net increase in success rate for Shortcut Model policies in manipulation tasks using 4 or even 1 denoising step.

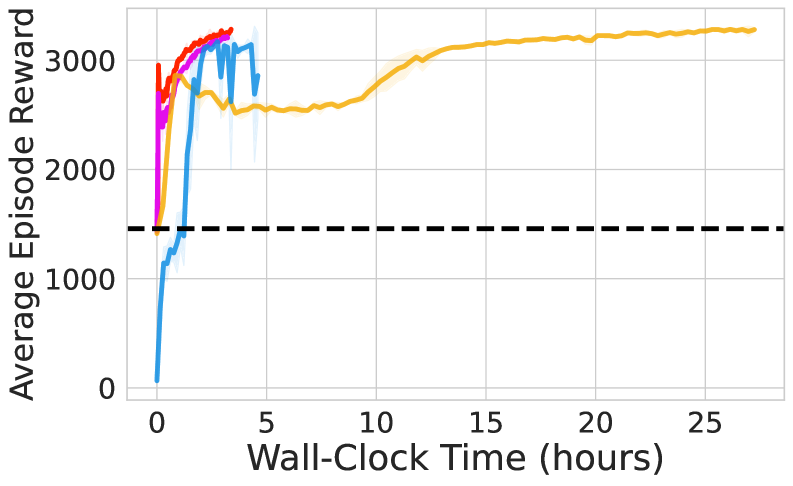

- Reduces wall-clock training time by 82.63% compared to the state-of-the-art diffusion RL method DPPO while achieving comparable or better performance.

Breakthrough Assessment

8/10

Significant for enabling stable online RL for flow models at 1-step inference, solving a major efficiency bottleneck. Strong empirical results across locomotion and manipulation with massive speedups over diffusion baselines.