📝 Paper Summary

Smart Contract Security

Vulnerability Detection

Explainable AI (XAI) for Code

Smart-LLaMA adapts a general-purpose LLM to smart contract security through domain-specific continual pre-training followed by fine-tuning on a new dataset of code-explanation pairs, so that it can both detect and explain vulnerabilities.

Core Problem

Existing smart contract vulnerability detection methods suffer from poor dataset quality (lacking explanations/locations), general LLMs' inability to grasp contract-specific semantics, and a lack of explainability in detection results.

Why it matters:

- Smart contract vulnerabilities (e.g., reentrancy) cause massive financial losses (e.g., the $60M DAO incident), making accurate detection critical

- Developers cannot easily fix bugs if tools only output binary labels without explaining 'why' or 'where', slowing down remediation

- Current datasets are limited in scope and detail, causing models to overfit simplistic features rather than learning true vulnerability patterns

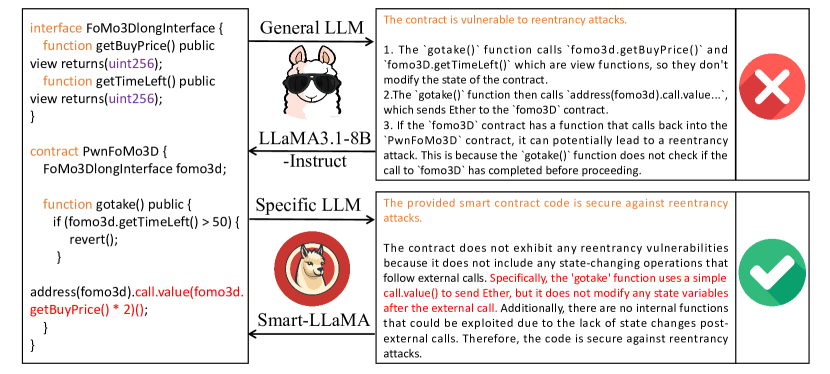

Concrete Example:

When analyzing a contract whose `gotake()` function makes an external call, a general LLaMA-3.1-8B-Instruct model incorrectly flags it as a reentrancy vulnerability. It fails to recognize that no state changes occur after the call, which makes the contract safe. Smart-LLaMA correctly identifies the contract as safe because it understands the state-change prerequisites for reentrancy.
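The reasoning in this example can be illustrated with a toy check. The sketch below is not the paper's method and is far simpler than a real analyzer: it assumes a function has already been reduced to an ordered list of operations (the `Op` type and the two sample sequences are hypothetical) and reports a reentrancy pattern only when a state write follows an external call.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Op:
    """One step in a function body (hypothetical, hand-written abstraction)."""
    kind: str       # "external_call", "state_write", or "other"
    desc: str = ""  # free-text description, for readability only


def has_reentrancy_pattern(ops: List[Op]) -> bool:
    """Return True if any state write occurs after an external call."""
    seen_call = False
    for op in ops:
        if op.kind == "external_call":
            seen_call = True
        elif op.kind == "state_write" and seen_call:
            return True
    return False


# Assumed shape of a gotake()-like function: the external call is the last step.
safe_fn = [
    Op("other", "check preconditions"),
    Op("external_call", "send funds to caller"),
]

# Classic vulnerable shape: the balance is updated only after the call.
vulnerable_fn = [
    Op("external_call", "send funds to caller"),
    Op("state_write", "set caller balance to zero"),
]

print(has_reentrancy_pattern(safe_fn))        # False
print(has_reentrancy_pattern(vulnerable_fn))  # True
```

A model that flags every external call would mark both sequences as vulnerable; distinguishing them requires tracking what happens after the call, which is the behavior attributed to Smart-LLaMA above.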

Key Novelty

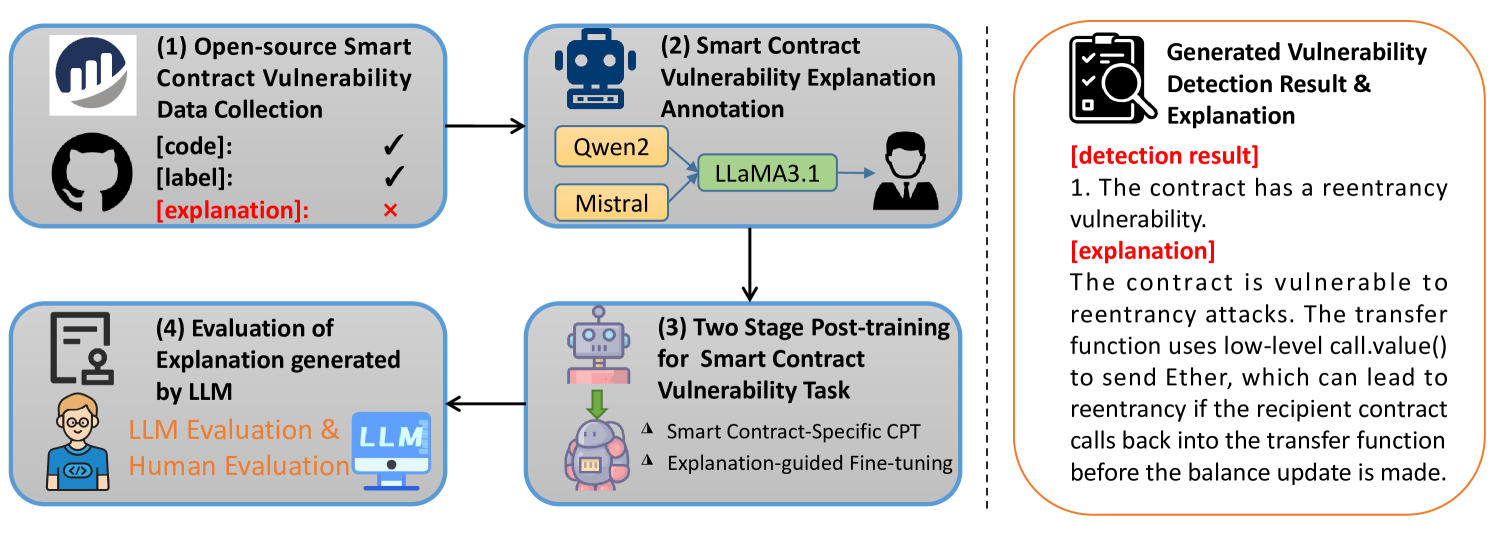

Two-Stage Post-Training with Explanation-Guided Dataset

- Constructs a high-quality dataset by using large teacher LLMs (Qwen2, Mistral) to generate explanations, filtering them via an LLM judge, and verifying with human experts

- Applies 'Smart Contract-Specific Continual Pre-Training' on raw contract code to adapt the model to Solidity syntax and semantics before fine-tuning

- Uses 'Explanation-Guided Fine-Tuning' where the model learns to output both a detection label and a detailed reasoning chain, bridging the gap between detection and understanding
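The dataset construction and the fine-tuning target described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the authors' pipeline: the teacher and judge callables are stand-ins for LLM calls, the `min_score` threshold and the prompt/response wording are hypothetical, and the human expert verification step is left outside the code.

```python
from typing import Callable, Dict, List, Optional

Teacher = Callable[[str, str], str]       # (code, label) -> explanation
Judge = Callable[[str, str, str], float]  # (code, label, explanation) -> score


def build_example(
    code: str,
    label: str,
    teachers: List[Teacher],
    judge: Judge,
    min_score: float = 0.8,  # hypothetical cut-off, not taken from the paper
) -> Optional[Dict[str, str]]:
    """Collect teacher explanations, keep the best-scored one, or drop the sample."""
    candidates = [teacher(code, label) for teacher in teachers]
    if not candidates:
        return None
    scored = [(judge(code, label, text), text) for text in candidates]
    best_score, best_text = max(scored, key=lambda pair: pair[0])
    if best_score < min_score:
        return None  # filtered out by the judge
    # The fine-tuning target pairs the detection label with its explanation.
    return {
        "prompt": "Analyze this contract for vulnerabilities:\n" + code,
        "response": "Label: " + label + "\nExplanation: " + best_text,
    }


# Stub teachers and judge, standing in for real model calls.
def teacher_a(code: str, label: str) -> str:
    return "The external call is the last statement, so no state is written after it."


def teacher_b(code: str, label: str) -> str:
    return "Looks fine."


def toy_judge(code: str, label: str, explanation: str) -> float:
    return 0.9 if "state" in explanation else 0.3


example = build_example(
    "contract C { function gotake() public {} }",
    "safe",
    [teacher_a, teacher_b],
    toy_judge,
)
print(example["response"])
```

Samples that survive the judge would still go to human experts for verification before being used for training.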

Architecture

The overall workflow of Smart-LLaMA, illustrating the data collection, annotation, and two-stage training process

Evaluation Highlights

- Outperforms state-of-the-art methods by +7.35% F1 score on Reentrancy vulnerabilities and +9.55% F1 on Delegatecall vulnerabilities

- Achieves the highest accuracy on all 4 vulnerability types, with gains of 4.14% to 5.53% over the previous best performers

- Human experts rated Smart-LLaMA's explanations as 'perfect' (4/4) for correctness in 69.5% of cases, significantly higher than the baseline LLaMA-3.1-8B-Instruct
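For readers less familiar with the F1 metric behind the first highlight, the sketch below shows the standard definition as the harmonic mean of precision and recall. The counts in the usage lines are made up for illustration and are not results from the paper.

```python
def f1_score(tp: int, fp: int, fn: int) -> float:
    """Standard F1: harmonic mean of precision and recall."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Illustrative counts only: 8 true positives, 2 false positives, 2 false negatives.
score = f1_score(tp=8, fp=2, fn=2)
print(round(score, 2))  # 0.8
```

Because F1 penalizes both false alarms and missed vulnerabilities, a model that over-flags safe contracts (as in the `gotake()` example) loses precision and therefore F1.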

Breakthrough Assessment

8/10

Significant contribution to dataset quality (explanations and vulnerability locations) and a robust pipeline combining domain adaptation with instruction tuning. Strong empirical gains on difficult vulnerability types.