📝 Paper Summary

Reinforcement Learning from Human Feedback (RLHF)

Reward Modeling

Mixture-of-Experts (MoE)

UMM-RM upcycles a dense reward model into a Mixture-of-Experts with a mandatory shared expert during training to capture diverse preferences, then merges them back into a dense model to prevent exploitation of specific experts.

Core Problem

Dense reward models in RLHF are susceptible to reward hacking, where policies exploit spurious correlations or biases to maximize scores without aligning with human intent, especially under distribution shifts.

Why it matters:

- Models may generate unsafe or biased content while receiving high reward scores, decoupling the reward signal from true generation quality.

- Existing ensemble methods reduce hacking but incur high inference costs by requiring multiple forward passes.

- Standard sparse MoE reward models can overfit local regions, creating new 'speculative experts' that policies can exploit for high scores on bad outputs.

Concrete Example:

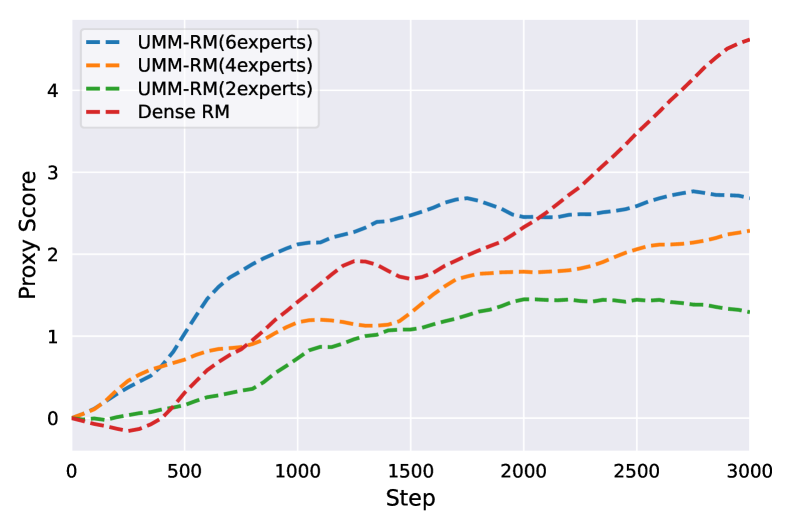

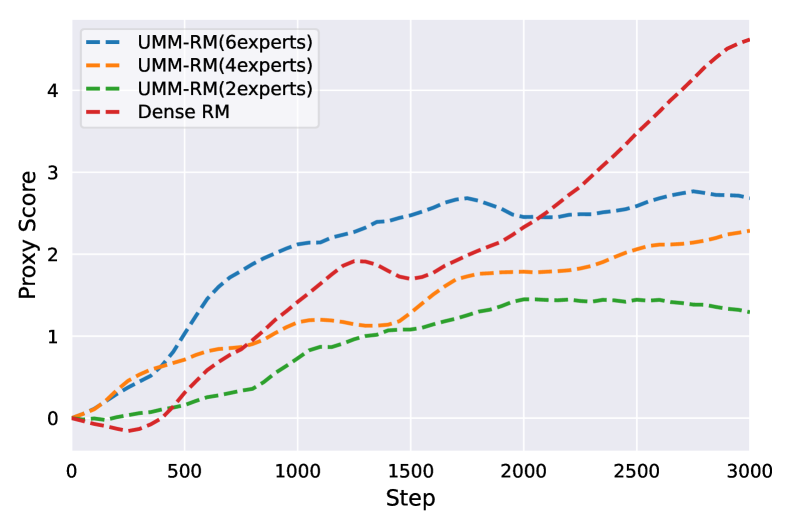

During PPO training with a standard dense reward model, the proxy reward score keeps rising monotonically, but the 'Gold RM' score (representing true preference) eventually collapses—indicating the policy is gaming the system. UMM-RM prevents this divergence.

Key Novelty

Upcycle-and-Merge MoE with Shared Expert

- Upcycles a dense model into an MoE where one 'shared expert' is always active (handling general instruction following/safety) while others are routed sparsely to capture fine-grained preferences.

- Post-training, the experts are merged back into a single dense model using learnable weights derived from gating statistics, smoothing out extreme, exploitable signals from individual experts.

Architecture

Conceptual flow: Upcycling dense FFN -> MoE with Shared Expert -> Training -> Merging back to Dense FFN.

Evaluation Highlights

- Achieved 67.2% accuracy on Anthropic HH-Helpful with Qwen2.5-0.5B, outperforming standard dense models.

- Win rate against SFT baseline increased from 51.5% (Dense RM) to 60.5% (UMM-RM 6-expert) on AlpacaFarm evaluation.

- Significantly reduced reward hacking during PPO: Gold RM scores remained stable while proxy scores increased, unlike the collapse seen in dense and standard MoE baselines.

Breakthrough Assessment

7/10

Offers a practical, computation-neutral solution to reward hacking. While the concept of merging experts isn't entirely new, applying it specifically to stabilize RLHF reward signals effectively addresses a critical safety bottleneck.