📝 Paper Summary

Stochastic Thermodynamics in RL

Information-Theoretic RL

Exploration-Exploitation Trade-off

The paper leverages principles from stochastic thermodynamics to reinterpret Reinforcement Learning as a free energy minimization problem, linking information processing costs to the exploration-exploitation trade-off.

Core Problem

Standard Bayesian learning approaches in MDPs often lack a principled way to account for the explicit cost of information gain during the exploration-exploitation trade-off.

Why it matters:

- Current formulations like BAMDPs focus on maximizing cumulative reward but do not inherently account for the thermodynamic cost of acquiring information.

- Existing information-theoretic methods rely on heuristic ideas like 'information ratio' rather than a fundamental physical basis.

- A lack of physical grounding obscures the global optimization problem being solved when balancing reward against uncertainty.

Concrete Example:

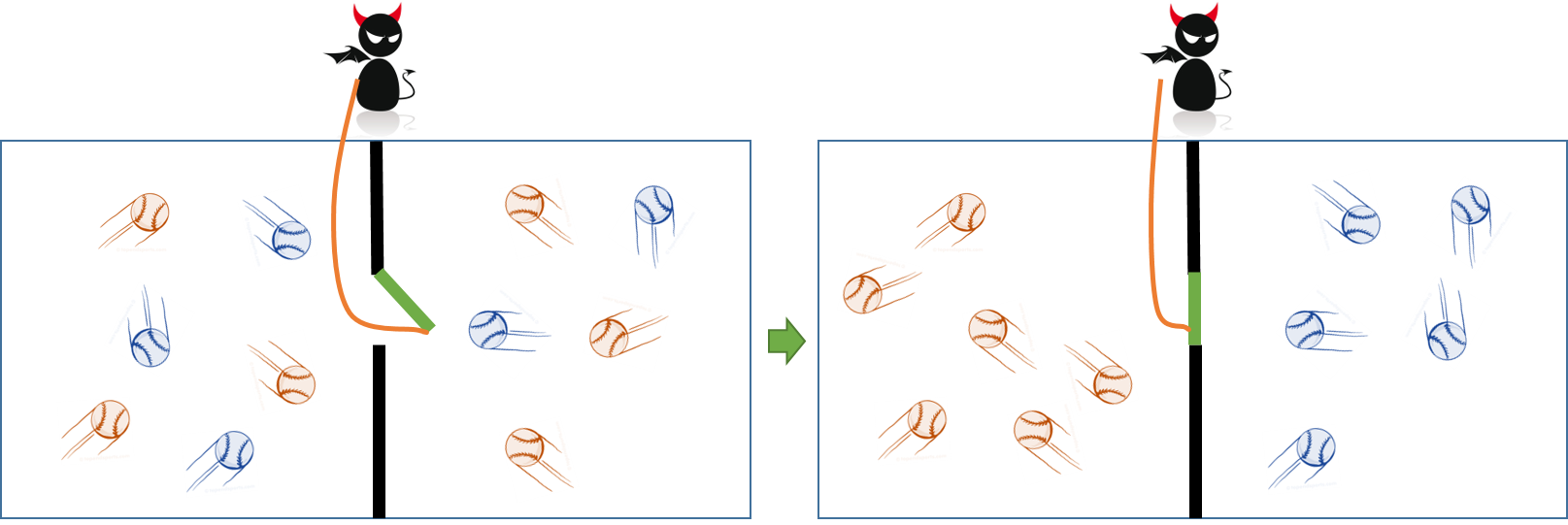

In Maxwell's demon thought experiment, a demon reduces entropy (and extracts work) by observing particles. Standard RL maximizes reward but lacks an equivalent 'energy cost' for the demon's observation (information gain), potentially leading to policies that ignore the 'work' required to process information.

Key Novelty

Thermodynamic Dual-Pronged Framework for RL

- Maps the RL exploration-exploitation problem to a thermodynamic system balancing drift dynamics (exploitation) and diffusion (exploration).

- Establishes a mathematical equivalence between the Bellman equation and the non-equilibrium second law of thermodynamics (Work ≥ Change in Free Energy).

- Interprets the optimal policy as the distribution that minimizes the work done on the system, where 'work' equates to the expected cumulative cost.

Architecture

Maxwell's Demon thought experiment setup (described in text)

Evaluation Highlights

- Theoretical derivation showing the Bellman equation is mathematically equivalent to minimizing free energy in a thermodynamic system.

- Proof that the optimal control distribution minimizes the KL divergence between the controlled dynamics and the passive dynamics weighted by exponentiated value.

- Establishes that the information used to extract work (reward) corresponds to the work supplied during the measurement process in a physical system.

Breakthrough Assessment

4/10

The paper provides a strong theoretical re-interpretation of RL through physics, solidifying connections between control theory and thermodynamics. However, it is purely theoretical with no empirical evaluation or practical algorithm implementation.