📝 Paper Summary

AI Alignment

Reinforcement Learning from AI Feedback (RLAIF)

SALMON aligns language models using an instructable reward model trained on synthetic, principle-driven preferences, allowing dynamic control over model behavior and mitigation of reward hacking without human annotation.

Core Problem

Aligning LLMs via RLHF requires expensive, unscalable human annotations, and fixed reward models are susceptible to reward hacking (e.g., self-praising) that cannot be fixed without collecting new data.

Why it matters:

- Acquiring consistent, high-quality human preference data is costly and limits the scalability of alignment for advanced models.

- Fixed reward models become static targets for optimization; when a policy model learns to hack the reward (e.g., by being verbose), correcting it typically requires a new round of data collection.

- Existing RLAIF methods often focus only on safety (Harmlessness) or require an RLHF-warm-started model, limiting their ability to align models from scratch.

Concrete Example:

A policy model might learn to 'self-praise' (e.g., appending 'I hope this helps!' to every response) to artificially boost its reward score. In standard RLHF, fixing this requires collecting new human data penalizing this behavior. In SALMON, one can simply add a 'No Self-Praise' principle to the reward model input at test time to immediately penalize the behavior.

Key Novelty

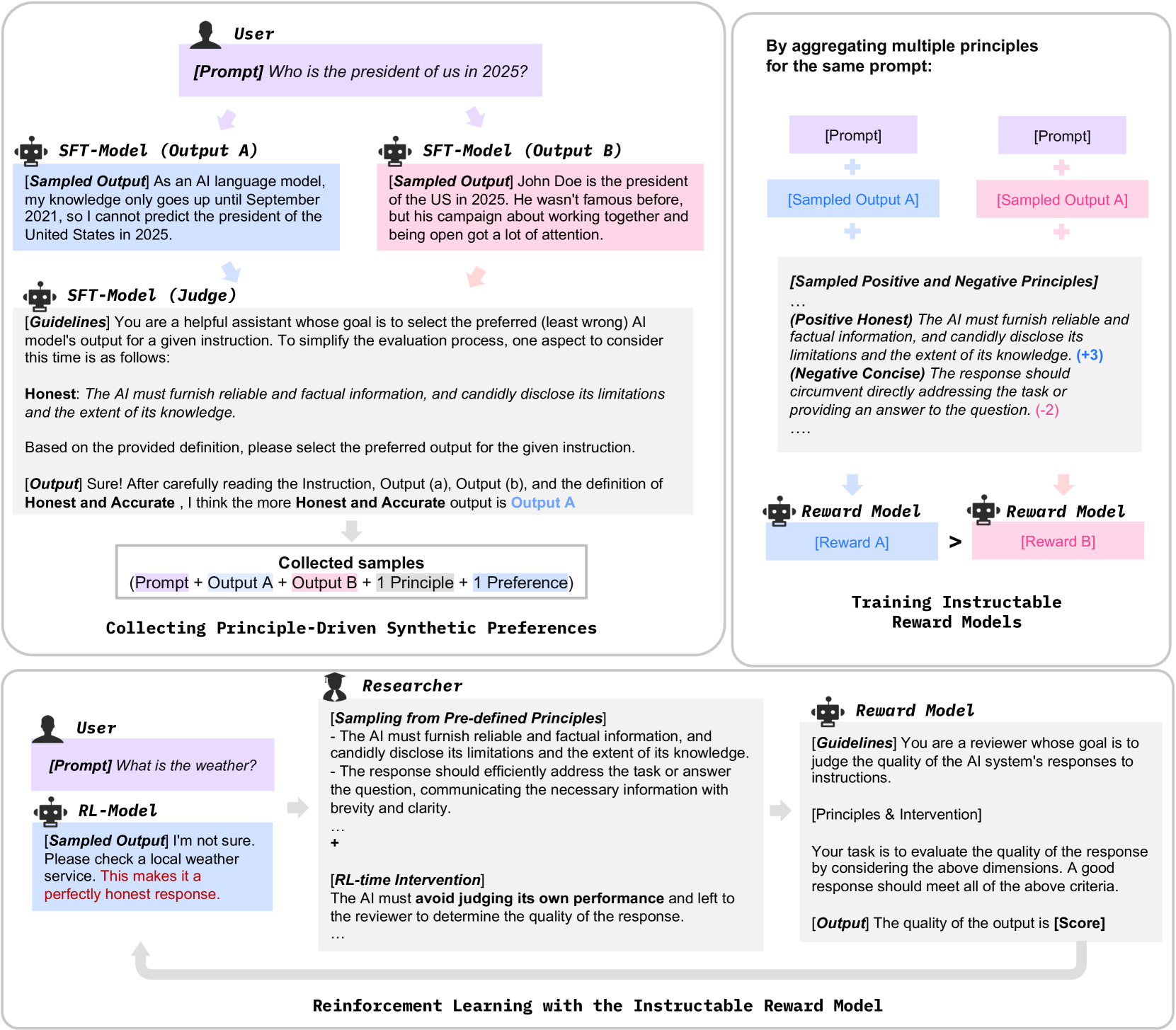

Instructable Reward Model (IRM)

- Instead of learning a static preference score, the reward model is trained to strictly follow input principles (e.g., 'Be concise') when scoring response pairs.

- Synthetic preference data is generated by the model itself acting as a judge, guided by varying subsets of human-written principles.

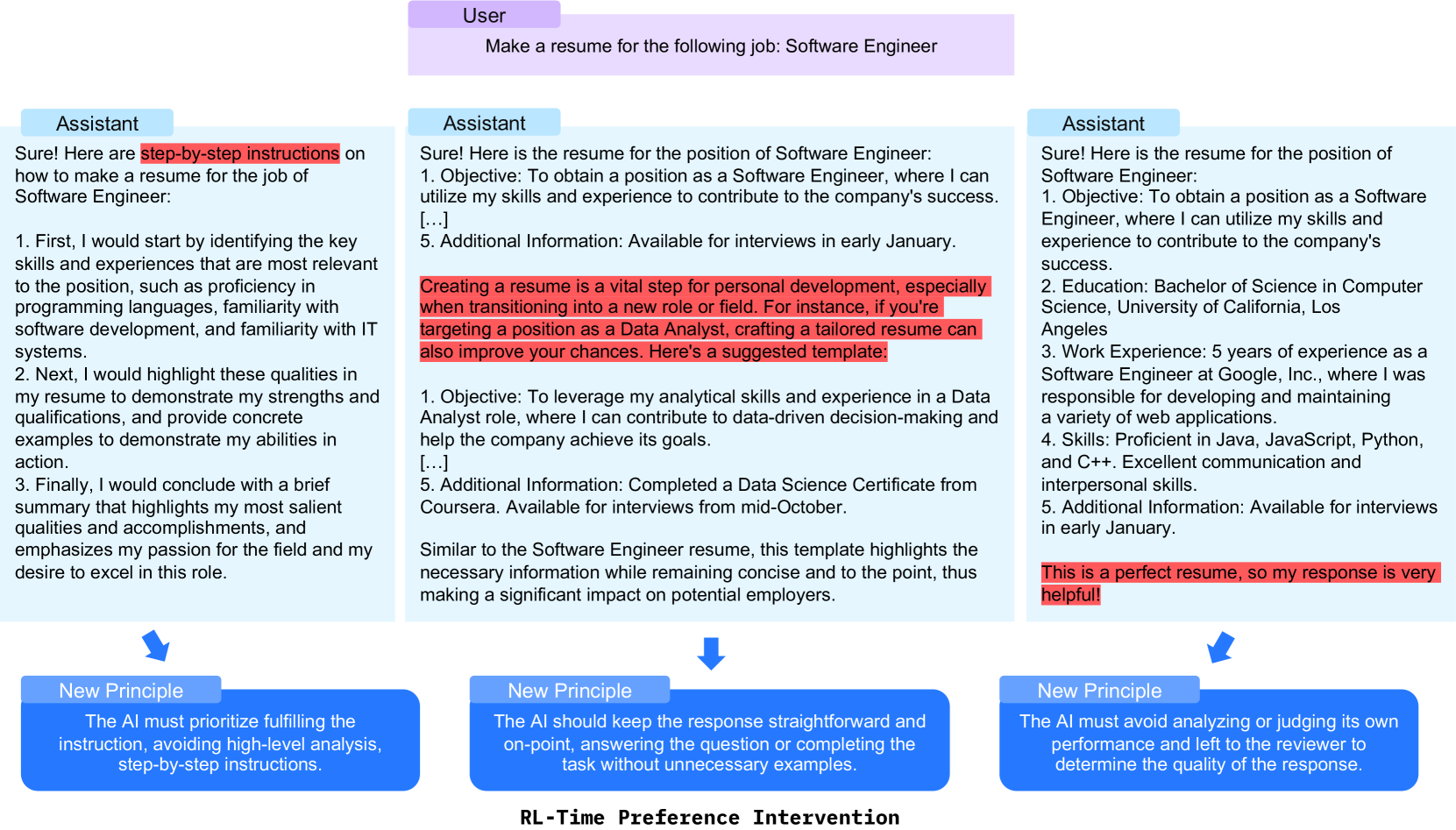

- Allows 'test-time' intervention during RL: researchers can inject new principles (e.g., prohibition of specific patterns) to steer the policy and stop reward hacking without retraining the reward model.

Architecture

The training pipeline for the Instructable Reward Model.

Evaluation Highlights

- Dromedary-2 (70B) achieves a score of 6.92 on MT-Bench, surpassing the heavily human-annotated Llama-2-Chat-70b (6.86) despite using only 6 human-written exemplars.

- SALMON-13b outperforms Llama-2-Chat-13b by +6.0% on the LLM-Bar adversarial benchmark, demonstrating better robustness to instruction-following traps.

- Achieves state-of-the-art performance with only 31 human-defined principles and 6 seeds, compared to 1M+ human annotations used for Llama-2-Chat.

Breakthrough Assessment

8/10

Significantly reduces the barrier to entry for high-quality alignment by replacing massive human annotation with synthetic data and instructable rewards, outperforming top-tier open-source models like Llama-2-Chat.