📝 Paper Summary

LLM-guided Reinforcement Learning

Sample Efficient RL

Q-shaping utilizes heuristics from large language models to directly modify Q-values during training, improving sample efficiency without altering the agent's optimal policy upon convergence.

Core Problem

Reinforcement learning is computationally expensive and sample inefficient, while standard acceleration methods like reward shaping often introduce bias (changing the optimal policy) or require difficult manual design.

Why it matters:

- Training complex agents (e.g., AlphaGo, bipedal robots) requires millions of steps and massive compute (e.g., 68 hours for a soccer robot), making efficiency critical.

- Existing solutions like reward shaping are slow to verify because one must wait for full training to see if the heuristic helped.

- LLM-based reward design often biases the Markov Decision Process (MDP), leading agents to suboptimal behaviors that satisfy the LLM's proxy reward rather than the true task.

Concrete Example:

In reward shaping, if an LLM gives a bonus for 'walking near the ball,' the agent might learn to just stand near the ball without kicking it. Q-shaping avoids this by treating the LLM's advice as a temporary exploration bias that vanishes at convergence.

Key Novelty

Q-Shaping Framework

- Extends Q-value initialization by allowing LLM-derived values to shape the Q-function throughout training, rather than just at the start.

- Guarantees 'unbiased learning,' meaning the heuristic values guide exploration but do not change the mathematical definition of the optimal policy (unlike non-potential-based reward shaping).

- Allows for rapid verification of heuristics: experimenters can see the impact of LLM guidance immediately via Q-value changes rather than waiting for policy convergence.

Architecture

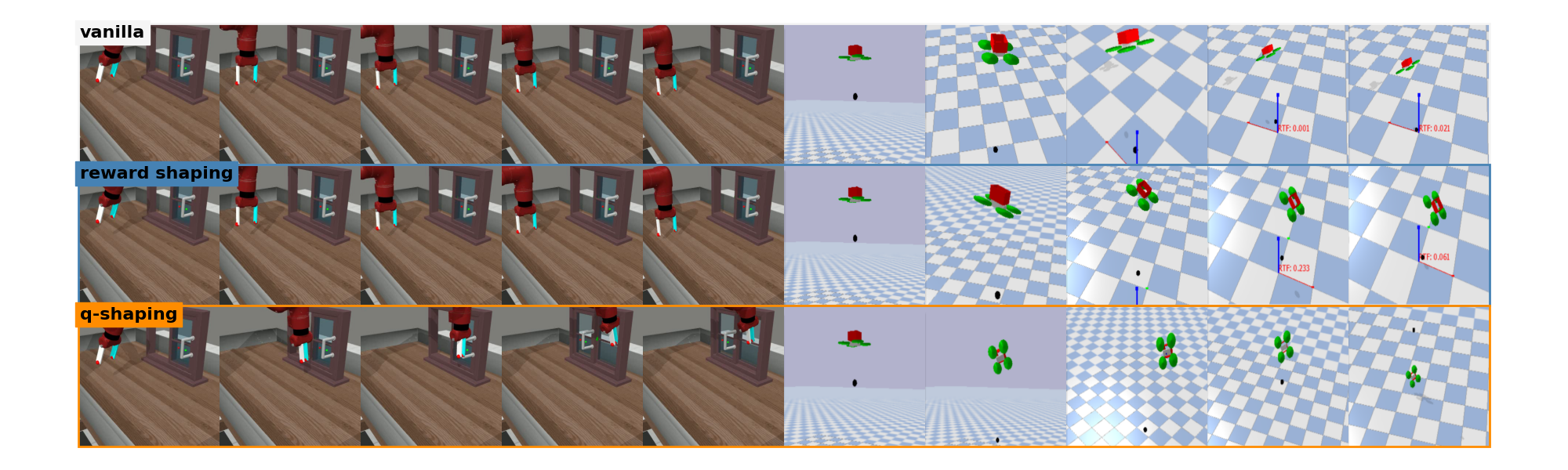

Illustrates the agent behavior across different algorithms (Standard RL, Reward Shaping, Q-shaping).

Evaluation Highlights

- +16.87% improvement in sample efficiency over the best baseline in each of the 20 tested environments.

- +253.80% peak performance improvement compared to LLM-based reward shaping methods (specifically T2R and Eureka).

Breakthrough Assessment

7/10

Offers a theoretically sound alternative to reward shaping with significant empirical gains. Addressing the 'bias' in LLM-guided RL is a critical and timely contribution.